Nvidia's Maxwell architecture is a wonderfully impressive piece of engineering for efficiency geeks, and it reaches near-apotheosis with the GM200-powered GeForce GTX Titan X. This is an architecture that has very clearly been tailored and tuned to maximize gaming performance per watt, and while it loses some steam on the compute side to AMD’s more flexible but also more power hungry GCN architecture, it’s very hard to not be at least a little impressed by the monstrous Titan X.

Guest author Dustin Sklavos is a Technical Marketing Specialist at Corsair and has been writing in the industry since 2005. This article was originally published on the Corsair blog.

Better still, Nvidia’s Maxwell cards across the board have shipped with plenty of gas left in the tank, and a hypothetical HG10 would certainly help take advantage of it. Can’t imagine why anyone would want to produce something like that. But since this mythical sea creature doesn’t exist, we have to look at how a GTX 980 and Titan X overclock under the reference cooler or under some kind of heretofore unannounced watercooling apparatus.

I’ve had a decent amount of experience playing around with overclocking the GTX 980 (under reference and under water) and the Titan X (again, under reference and under water), and there are some modest differences – especially with the Titan X – compared to the Kepler generation cards.

Last generation’s Kepler cards could have their overclocks tested fairly reliably with just 3DMark Fire Strike Extreme; if your VRAM clock was unstable, the sparks in Graphics Test 2 would flicker, and if your VRAM and/or GPU clock were unstable, the Nvidia driver would crash. That single test is no longer enough with the 980s or Titan X.

To be sure, completing a run of 3DMark Fire Strike Extreme or Ultra still typically means you’re about 75% certain of a stable overclock. But the Titan X especially can seem stable while still having problems, which is why you need to expand your stability testing a little and pay attention while the tests run. Likewise, my GTX 980s under water will rocket through 3DMark Fire Strike Extreme and then artifact or crash in something else.

If you’re overclocking either card, it’s smart to first see what your top GPU overclock is, and then your top VRAM overclock, and then combine the two. You may have to notch one down; given how efficient Nvidia’s memory compression is on Maxwell, the VRAM overclock is the safer one to reduce.
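For anyone who likes to see the order of operations written down, here is a rough sketch of that search. It assumes a hypothetical `is_stable(core_offset, mem_offset)` callback that stands in for a manual round of testing; it doesn’t call any real overclocking tool, and the step size and limits are illustrative guesses rather than recommendations.

```python
from typing import Callable, Tuple


def find_max_offset(passes: Callable[[int], bool], step: int, limit: int) -> int:
    """Raise a single offset in fixed steps; return the last value that passed."""
    best = 0
    offset = step
    while offset <= limit and passes(offset):
        best = offset
        offset += step
    return best


def combine_offsets(
    is_stable: Callable[[int, int], bool],  # hypothetical: (core, mem) -> stable?
    step: int = 25,
    core_limit: int = 300,
    mem_limit: int = 600,
) -> Tuple[int, int]:
    # 1. Find the top core offset with memory left at stock.
    core = find_max_offset(lambda c: is_stable(c, 0), step, core_limit)
    # 2. Find the top memory offset with the core left at stock.
    mem = find_max_offset(lambda m: is_stable(0, m), step, mem_limit)
    # 3. Combine the two; if that is unstable, notch the memory down first.
    while mem > 0 and not is_stable(core, mem):
        mem -= step
    return core, mem
```

The memory offset is the one that gets reduced in step 3 because, as noted above, it is the safer of the two to give up on Maxwell.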

As a stability testing procedure, I recommend these steps:

First, 3DMark Fire Strike Extreme will catch really unstable overclocks; assuming it doesn’t crash, artifacting will be most prominent in Graphics Test 2.

Next, BioShock Infinite has an automated benchmark that’s been very handy. Run the benchmark at the highest settings you can; pay attention to the sunshafts in the very beginning coming through the glass. If your overclock is unstable, these will artifact.

Tomb Raider also has an automated benchmark. Run the benchmark at the highest settings you can, and make sure TressFX is enabled. The shadows cast by Lara’s hair will flicker in an SLI system; that’s normal. But if your overclock is unstable, black triangle artifacts will materialize out of Lara’s hair.

Finally, Far Cry 4. Seriously, just play the game for a minute or so. If your overclock is unstable, it’ll crash in a heartbeat.
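If it helps to keep track, the four steps above can be written down as a simple ordered checklist. This is only a bookkeeping sketch: the `StabilityCheck` type and `first_failure` helper are made up for illustration, and the pass/fail results are whatever you observed by eye, not something the code measures.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class StabilityCheck:
    name: str
    watch_for: str


# Order matters: quickest, coarsest test first.
CHECKS = [
    StabilityCheck("3DMark Fire Strike Extreme",
                   "crash, or artifacts in Graphics Test 2"),
    StabilityCheck("BioShock Infinite benchmark",
                   "artifacts in the sunshafts through the glass at the start"),
    StabilityCheck("Tomb Raider benchmark (TressFX on)",
                   "black triangle artifacts coming out of Lara's hair"),
    StabilityCheck("Far Cry 4 (about a minute of play)",
                   "crash"),
]


def first_failure(results: Dict[str, bool]) -> Optional[str]:
    """Return the first check, in order, that failed or was not run yet."""
    for check in CHECKS:
        if not results.get(check.name, False):
            return check.name
    return None  # every check passed
```

A check that hasn’t been run counts as a failure here, so an overclock is only called good once all four have passed.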

By using these testing methods, I’ve been able to pretty reliably run these cards at high speeds without issue. So what can you typically expect as far as overclocks go? What kind of performance increases are we looking at?

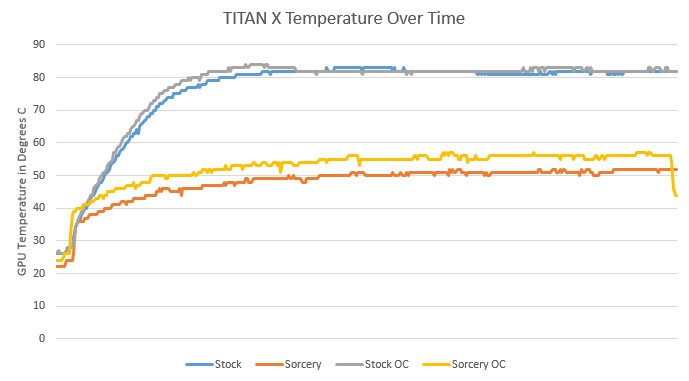

First things first: either water cooling or just running your cooler at its highest speed will allow your card to maintain higher clocks for longer periods of time. Cooling the power circuitry efficiently results in the card drawing less power in general because the VRMs don’t heat up as much and thus don’t have to work as hard; for you, this means you’re less likely to hit the TDP wall, and a high overclock is easier to sustain.

On a stock-clocked GeForce GTX 980, you can probably get your Core up to +200 before instability sets in, which results in peak clocks just north of 1450MHz. Because the 980 has a narrower memory bus and less VRAM overall than the Titan X, you can also get some additional mileage out of the VRAM. Ballpark +300 on the Memory (for 7.6GHz GDDR5), but most of the 980s I’ve played with have been able to go up to +500 (for an even 8GHz GDDR5). The higher you push your core clock, the more benefit you’ll get out of pushing the memory clock; combined, I’ve been able to get 15%-20% higher performance out of the 980.
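The memory numbers above are easy to sanity check. The sketch below assumes the offset is applied to the double-data-rate clock, so the effective GDDR5 rate moves by twice the offset (which is what the +300 and +500 figures imply), and it uses bus widths of 256-bit for the 980 and 384-bit for the Titan X taken from Nvidia’s published specs rather than from this article. Bandwidth figures are theoretical peaks.

```python
def effective_rate_mhz(stock_effective_mhz: int, offset_mhz: int) -> int:
    """Effective GDDR5 data rate after a memory offset.

    Assumption: the offset applies to the double-data-rate clock, so the
    effective rate rises by twice the offset (+500 -> +1000MHz effective).
    """
    return stock_effective_mhz + 2 * offset_mhz


def bandwidth_gb_s(effective_mhz: int, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth: transfers per second times bytes per transfer."""
    return effective_mhz * bus_width_bits / 8 / 1000


STOCK_EFFECTIVE_MHZ = 7000  # 7GHz GDDR5, rounded
GTX_980_BUS_BITS = 256      # assumption: published spec, not from the article
TITAN_X_BUS_BITS = 384      # assumption: published spec, not from the article

for offset in (0, 300, 500):
    rate = effective_rate_mhz(STOCK_EFFECTIVE_MHZ, offset)
    print(f"GTX 980 +{offset}: {rate / 1000:.1f}GHz, "
          f"{bandwidth_gb_s(rate, GTX_980_BUS_BITS):.1f} GB/s")

print(f"Titan X stock: "
      f"{bandwidth_gb_s(STOCK_EFFECTIVE_MHZ, TITAN_X_BUS_BITS):.1f} GB/s")
```

Under those assumptions, a 980 at +500 reaches 256 GB/s, still well short of the 336 GB/s a stock Titan X has on paper.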

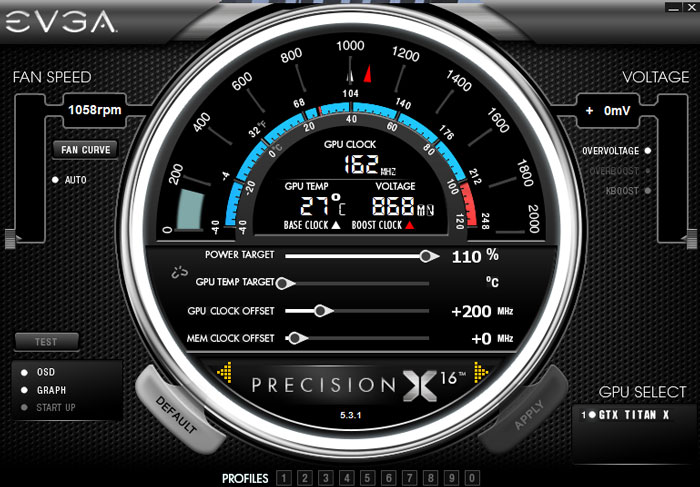

The Titan X is about 50% more card in general than the 980 and so it has a bit less headroom, but headroom it still has. +200 Core still seems to be the way to go, resulting in a peak clock of ~1420MHz. But I wouldn’t touch the memory. If you remember Kepler, 7GHz of GDDR5 on a 384-bit memory bus was next to impossible to saturate. Factor in Maxwell’s vastly improved memory compression, and performance gains from overclocking the memory become minimal. If you want that last frame or two per second of performance, you can try it, but the difference is imperceptible. Overclocking the Titan X can get you between 7% and 15% more performance.
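To put the core offsets in perspective, here is a back-of-the-envelope calculation of how much extra clock +200 actually is. It assumes the offset adds directly to the peak boost clock, so the implied stock peak is simply the overclocked peak minus 200MHz; the peak figures are the approximate ones quoted above, not fresh measurements.

```python
def clock_gain_pct(peak_mhz: float, offset_mhz: float) -> float:
    """Percent clock increase, assuming stock peak = overclocked peak - offset."""
    stock_peak = peak_mhz - offset_mhz
    return 100.0 * offset_mhz / stock_peak


# Approximate overclocked peak clocks quoted in the text, in MHz.
cards = {"GTX 980": 1450, "Titan X": 1420}

for name, peak in cards.items():
    print(f"{name}: +200 is about {clock_gain_pct(peak, 200):.1f}% "
          f"over an implied stock peak of {peak - 200}MHz")
```

Both cards come out at roughly 16% more core clock. The 980’s 15%-20% gain includes its memory overclock, while the Titan X’s 7%-15% sits at or below the raw clock increase, so performance doesn’t scale one-to-one with the offset.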

Nvidia’s Maxwell-based cards are performance monsters, and once again Nvidia has left plenty of gas in the tank for us to play with. We lose a lot of Maxwell’s trademark efficiency, but not all of it, and in exchange we can gain a very respectable amount of performance. Of course, if you put it under water, suddenly your temperatures look like this over the course of two runs of Unigine Valley:

But why would anyone enable something like that?

https://www.techspot.com/news/60382-geforce-gtx-980-titan-overclocking.html