Be careful who's selling you that high-end AMD Radeon GPU, especially on Amazon

Path Tracing vs. Ray Tracing, Explained

#TBT Every few years it seems like there's an amazing new technology with the promise of making games look ever more realistic. We've had shaders, tessellation, shadow mapping, ray tracing – and now there's path tracing.

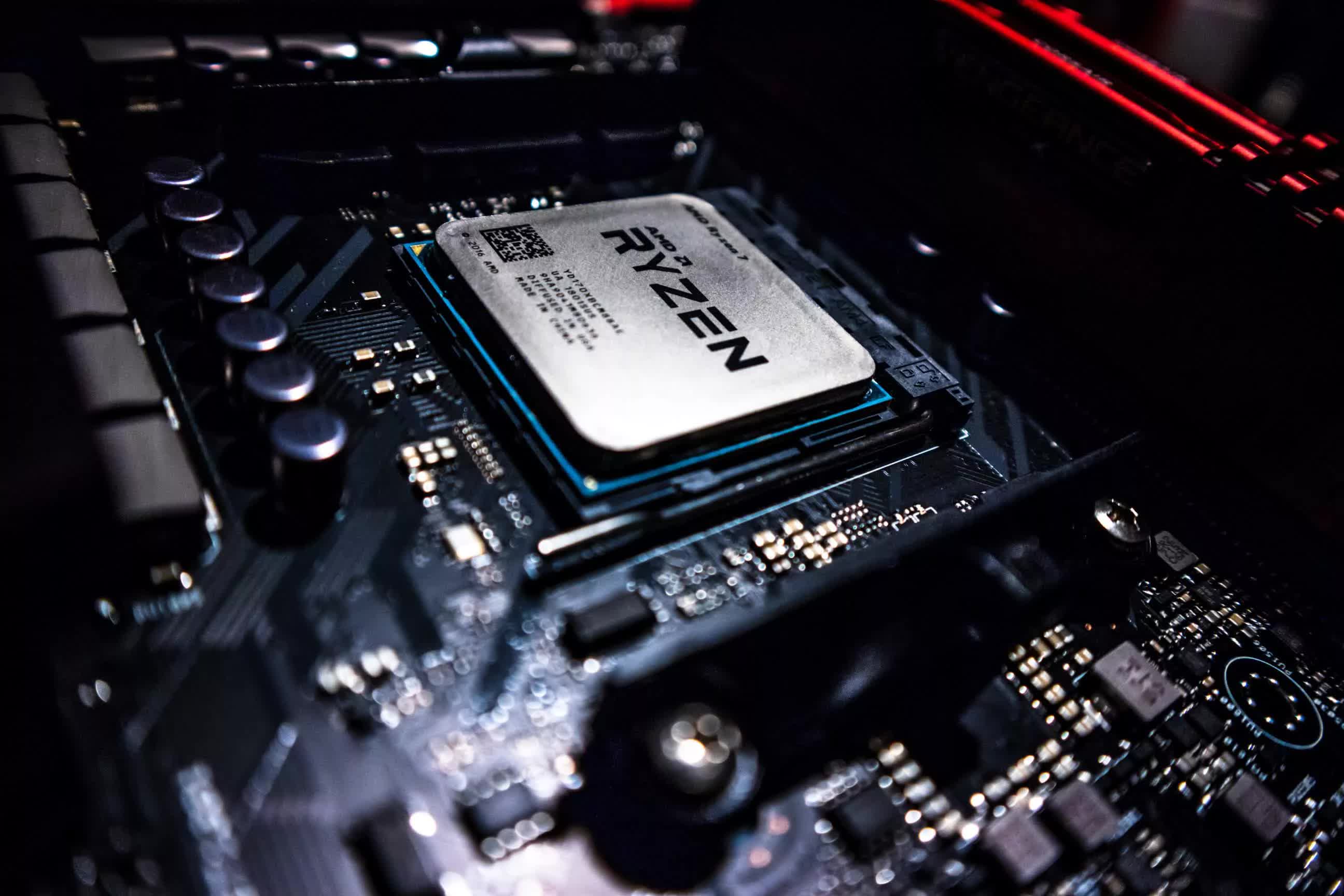

AMD Ryzen 5 3600 vs. Intel Core i5-9600K: 2023 Revisit

Let's revisit the gaming performance of two budget CPUs from years past, the Ryzen 5 3600 and Core i5-9600K, to see how they compare in today's games and to determine which platform has aged best.

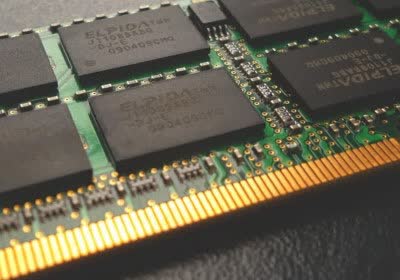

AMD 3D V-Cache CPU memory used to create incredibly fast RAM disk

AMD Ryzen 8000G APU specs, benchmarks, and release date leaked

AMD could launch a 'mid-range' RDNA 4 GPU that's faster than the Radeon RX 7900 XT

Best CPU Deals, AMD vs Intel: Holiday CPU Buying Guide

We look at the CPU market and current pricing to see what would truly constitute a Black Friday sale, and give our advice on the kind of deals you can expect during the holiday shopping season.

Black Friday GPU Buying Guide: November GPU Pricing Update

For this month's GPU pricing update, we cover the rumors about Nvidia's upcoming GeForce 40 Super cards, current-gen GPU pricing, and what sort of deals you should be looking out for during Black Friday.

AMD Ryzen Threadripper 7980X and 7970X Review

It's not every day that we get to review a $5,000 CPU, so this should be fun. The Ryzen Threadripper 7980X and 7970X, two powerful new chips in AMD's latest HEDT lineup, pack 64 and 32 cores respectively.

Radeon 7900M trades blows with laptop RTX 4090 in Vulkan benchmarks

AMD may be prepping new AM4 processors with 3D V-Cache

AMD gains CPU market share in desktops, laptops, and servers

The Upgrade Path: AMD Ryzen 3700X to 5800X3D vs. Intel Core i9-9900K

Following up on our CPU testing from last week: how do the 3700X and 9900K compare today, and how does the 9900K stack up against the 5800X3D? It's an interesting question and a great comparison to look back on.

AMD Ryzen 7 3700X vs. Intel Core i9-9900K: 8-Core CPUs, 4 Years Later

Today we're looking back at two CPUs released 4 to 5 years ago: the Ryzen 7 3700X and the Core i9-9900K. There's a good reason (but not an obvious one) why we chose these two CPUs.

AMD Ryzen 5 7545U and Ryzen 3 7440U mobile processors launched with Zen 4c cores

Latest Steam survey sees AMD and Windows 11 crash as a new top language appears

PC CPUs are getting more interesting, and competition is coming

Why it matters: Five years ago there were only two companies that made CPUs; today there are a dozen. Most of the new entrants went after the big, profitable data center market, but now competitors are coming for PCs. Nvidia and AMD are reportedly preparing Arm-based CPUs for PCs. With Microsoft opening up the market for Arm laptop CPUs, this spells bad news for Qualcomm today, and potentially bad news for Intel over the very long term.

Nvidia and AMD are planning Arm-based CPUs for consumer PCs

Valve and AMD begin fixing Counter-Strike 2 driver bans

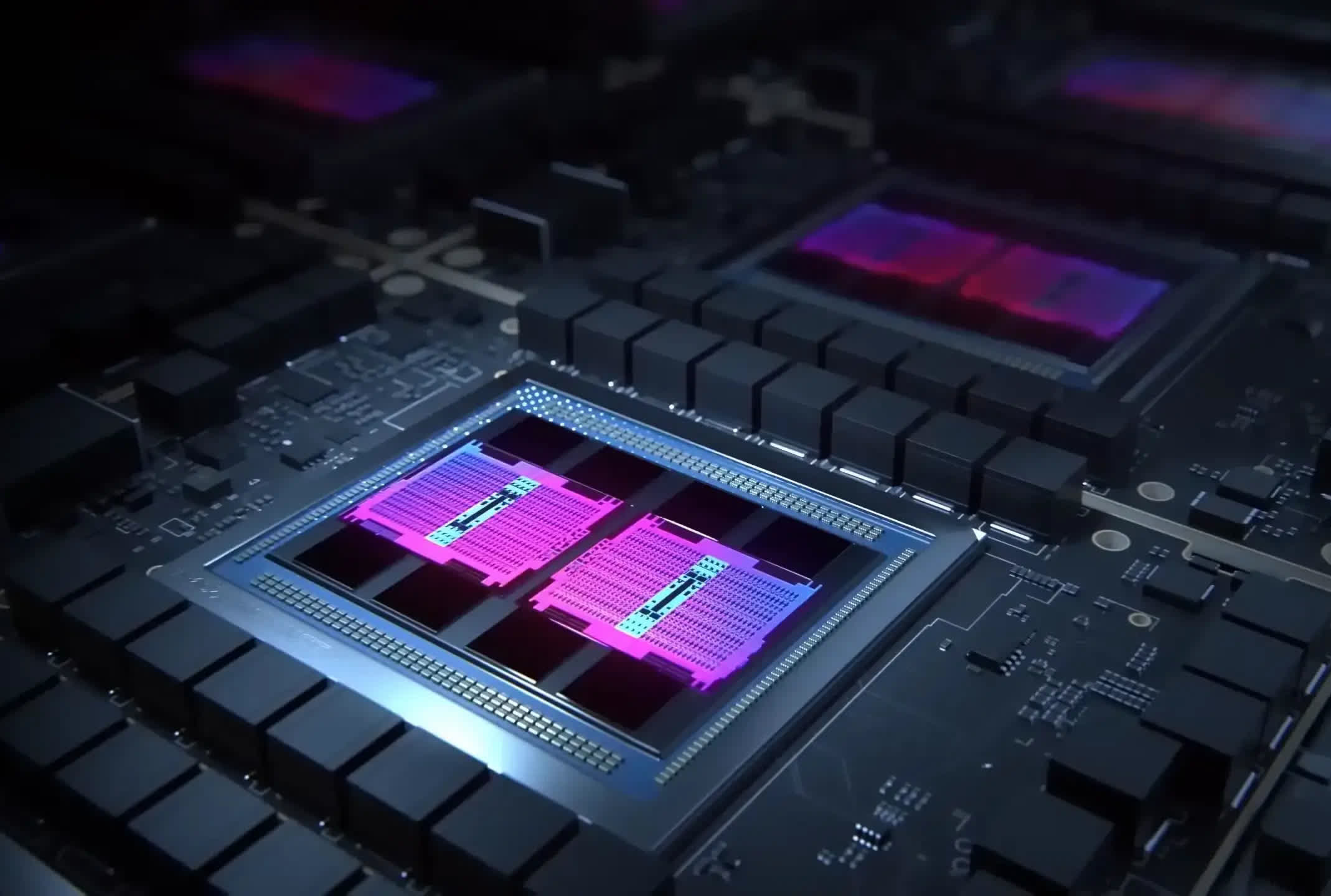

4 Years of AMD RDNA: Another Zen or a New Bulldozer?

It's been four years since AMD launched RDNA, the successor to the venerable GCN graphics architecture. We take a look through the tech and numbers to see just how successful it's been.

Are GPU Prices Going Up Now? October GPU Pricing Update

Welcome to our monthly GPU pricing update. There are bits of news to go through, a few odd GPU launches, and some unfortunate price hikes. There are also some positive GPU price adjustments to discuss.