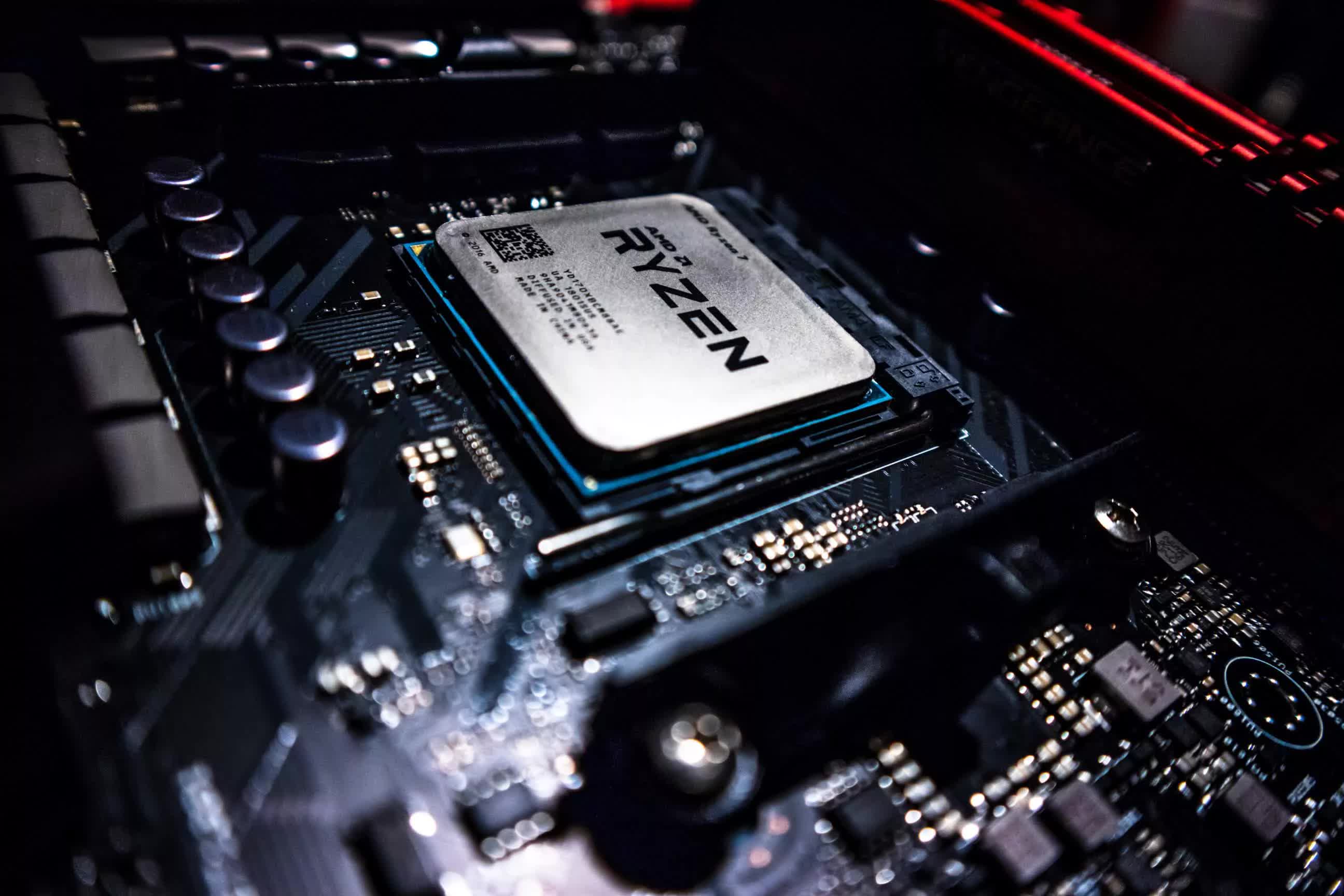

The Upgrade Path: AMD Ryzen 3700X to 5800X3D vs. Intel Core i9-9900K

Following up on our CPU testing from last week: how do the 3700X and 9900K compare today, and how does the 9900K stack up against the 5800X3D? These are interesting questions, and the comparison is a great one to look back on.