After teasing the technology back in January, Nvidia has finally pulled back the curtain on Optimus, promising notebook users the full performance of a discrete GPU with the battery life of an integrated graphics solution. In a nutshell, Optimus lets notebooks switch dynamically between the two graphics systems without any user interaction.

Notebook manufacturers have been combining discrete and integrated graphics for a while now, but thus far choosing which chip should handle graphics at any given time has remained a manual process. Sometimes this involved a software switch and a lot of screen flickering as the discrete graphics chip turned on or off, sometimes an actual physical switch on the laptop and a system restart. Either way, it always required some form of interaction and a choice between notebook performance and battery life.

This process is just too cumbersome and confusing for mainstream users, some of whom may not even know they have switchable graphics and will simply leave the discrete GPU permanently on or off. Optimus, on the other hand, is automatic. It determines the best processor for the workload and routes it accordingly, with the decision being entirely transparent to users as they fire up a game or start writing an email.

This seems like such an obvious thing that one might wonder why notebooks weren't already doing it this way. Under the hood, though, it's much more complicated. Nvidia says the obstacle was that the integrated and discrete graphics chips shared a multiplexer (or mux) connection to the display, which made it impossible to switch on the fly. Optimus takes a different approach, treating the GPU like a co-processor and routing its output through the IGP so there's only a single point of connection to the display.
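To make the difference concrete, here is a toy Python model of the arrangement described above. It is only an illustration, not Nvidia's driver logic: the workload names, the `DEMANDING` set, and the `pick_renderer` and `present` functions are all hypothetical. The one thing it is meant to show is that the rendering chip can change from task to task while the IGP remains the sole connection to the display.

```python
from dataclasses import dataclass

# Hypothetical workload categories, invented for this sketch;
# the article does not say how Optimus classifies applications.
DEMANDING = {"game", "3d_render", "video_encode"}


@dataclass
class Frame:
    rendered_by: str     # chip that produced the image
    scanned_out_by: str  # chip that drives the display


def pick_renderer(workload: str) -> str:
    """Choose a chip for the workload (assumed rule: heavy work goes to the dGPU)."""
    return "dGPU" if workload in DEMANDING else "IGP"


def present(workload: str) -> Frame:
    """Render on the chosen chip; the IGP always drives the display."""
    renderer = pick_renderer(workload)
    # Even when the discrete GPU renders, its output is handed to the IGP,
    # so no mux has to switch the display connection between chips.
    return Frame(rendered_by=renderer, scanned_out_by="IGP")


if __name__ == "__main__":
    for task in ("email", "game"):
        print(task, present(task))
```

In this model, launching a game changes only `rendered_by`; `scanned_out_by` never changes, which is why no flicker-inducing display handover is needed.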

The technology is designed to run on Nvidia's next-gen Ion and GeForce M products as well as the upcoming GeForce 200M and 300M GPUs. What's more, it will work in conjunction with Intel's Arrandale Core i3, Core i5 and Core i7 processors as well as with its Penryn Core 2 Duo and Pine Trail Atom N4xx chips.

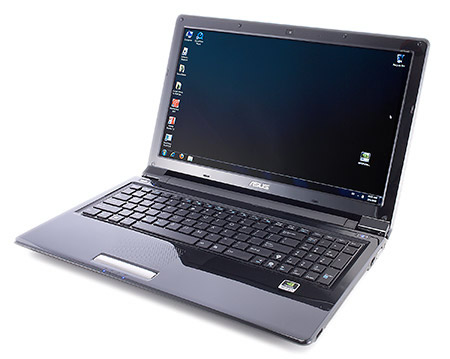

Nvidia says that several manufacturers are putting Optimus into their products. The first such laptops will hit at the end of this month courtesy of Asus, and will include the UL50Vf, N61Jv, N71Jv, N82Jv, and U30Jc.