AMD has revealed more information about Kaveri, its upcoming 28nm Steamroller-based APU. The company is touting Kaveri's heterogeneous system architecture (HSA), a design that aims to greatly increase the performance of on-die graphics cores. This technology likely marks the future direction of AMD's APUs.

Heterogeneous system architecture: that's the buzz term AMD is using to describe what sets Kaveri apart from previous APUs. In a nutshell, HSA solves a long-standing issue with existing CPU + GPU implementations by allowing both units to directly access each other's memory pools. Ars Technica published an interesting (and detailed) write-up yesterday that explains why HSA makes Kaveri special.

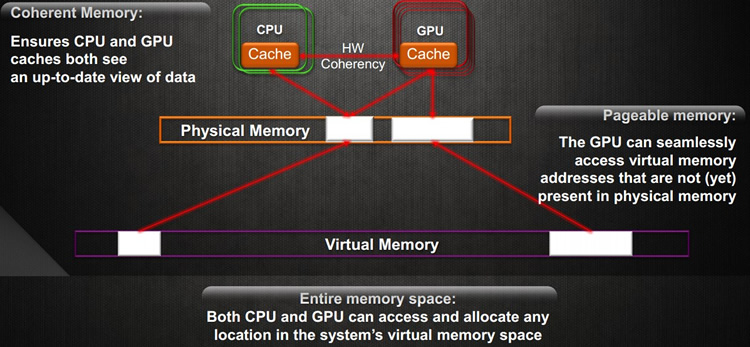

Communication between CPUs and GPUs has long been somewhat inefficient. Each unit has traditionally had its own dedicated memory pool, so for CPUs and GPUs to do their work, they have had to copy data back and forth between those pools. This logical separation remains even when the CPU and GPU share the same physical die.

Naturally, all that copying comes at a cost in performance. The solution? Create a shared address space for CPUs and GPUs.

This is where HSA comes in. Although it falls just short of creating a single, unified memory pool, HSA does give separate processing units direct access to each other's memory pools. This eliminates the need for copying, which substantially reduces the time and work required. The result should be a nice bump in GPU performance.
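To make the difference concrete, here is a minimal toy model in Python. It is purely illustrative, and every name in it is hypothetical. "CPU memory" and "GPU memory" are just Python bytearrays, and `gpu_kernel` is a stand-in for real GPU work. Nothing here uses an actual HSA, OpenCL, or driver API, and it says nothing about real-world performance. It only counts how many bytes must be copied in each model.

```python
# Toy model only: memory pools are plain bytearrays; no real HSA/driver API is used.

def gpu_kernel(buf):
    """Hypothetical stand-in for GPU work: increment every byte in place (wrapping at 256)."""
    for i in range(len(buf)):
        buf[i] = (buf[i] + 1) % 256

def run_with_copies(host_data):
    """Traditional model: separate memory pools, so data is copied in both directions."""
    bytes_copied = 0
    gpu_mem = bytearray(host_data)   # copy CPU pool -> GPU pool
    bytes_copied += len(host_data)
    gpu_kernel(gpu_mem)
    host_data[:] = gpu_mem           # copy GPU pool -> CPU pool
    bytes_copied += len(gpu_mem)
    return bytes_copied

def run_with_shared_memory(host_data):
    """HSA-style model: the 'GPU' works directly on the CPU's buffer, with no copies."""
    with memoryview(host_data) as view:  # a reference to the same bytes, not a copy
        gpu_kernel(view)
    return 0

data = bytearray(b"\x00\x01\x02\x03")
print(run_with_copies(data), list(data))         # 8 bytes copied for a 4-byte buffer
print(run_with_shared_memory(data), list(data))  # 0 bytes copied, same result in place
```

Both paths produce the same result; the copy-based path simply moves the whole buffer twice, which is the overhead a shared address space is meant to avoid.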

Kaveri will most likely hit the scene toward the tail end of 2013, possibly just before Intel's Broadwell arrives. Although Kaveri will be built on a somewhat aging 28nm process, the APU will pack a Graphics Core Next GPU (the architecture behind the Radeon HD 7000 series). It'll be interesting to see just how well Kaveri performs.

Notably, both Microsoft and Sony will be rolling out next-gen consoles based on AMD technology. Could Kaveri be one of the reasons console makers are shifting from RISC to x86?