This is a guest post by Kent Smith, senior director of marketing for LSI's Flash Components Division, overseeing all outbound marketing and performance analysis for the company.

It may sound crazy, but hard disk drives do not actually have a delete command. Now we all know HDDs have a fixed capacity, so over time the older data must somehow get removed, right? Actually it is not removed, but overwritten. The operating system uses a reference table to track the locations (addresses) of all data on the HDD. This table tells the OS which spots on the HDD are used and which are free. When the OS or a user deletes a file from the system, the OS simply marks the corresponding spot in the table as free, making it available to store new data. The HDD is told nothing about this change, and it does not need to know since it would not do anything with that information. When the OS is ready to store new data in that location, it just sends the data to the HDD and tells it to write to that spot, directly overwriting the prior data. It is simple and efficient, and no delete command is required.
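The bookkeeping described above can be sketched in a few lines of Python. This is only a toy model: the `ToyFileSystem` class, its one-sector-per-file layout, and the method names are assumptions made for illustration, not how any real file system is implemented.

```python
class ToyFileSystem:
    """Hypothetical OS-side reference table over a drive with fixed sectors."""

    def __init__(self, num_sectors):
        self.drive = [None] * num_sectors     # what the HDD physically holds
        self.in_use = [False] * num_sectors   # the OS reference table
        self.files = {}                       # file name -> sector address

    def write_file(self, name, data):
        addr = self.in_use.index(False)       # first sector the table marks free
        self.drive[addr] = data               # directly overwrite the prior data
        self.in_use[addr] = True
        self.files[name] = addr
        return addr

    def delete_file(self, name):
        addr = self.files.pop(name)
        self.in_use[addr] = False             # only the table changes; the drive is told nothing


fs = ToyFileSystem(num_sectors=4)
addr = fs.write_file("a.txt", "old data")
fs.delete_file("a.txt")
stale = fs.drive[addr]                        # the drive still holds the deleted data
new_addr = fs.write_file("b.txt", "new data") # reuses the same spot by overwriting it
```

Note that `delete_file` never touches `self.drive`. The old contents only disappear when a later write lands on the same address.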

However, with the advent of NAND flash-based solid state drives (SSDs) a new problem emerged. In a previous article, "Gassing up your SSD," I explained how NAND flash memory pages cannot be directly overwritten with new data, but must first be erased at the block level through a process called garbage collection.

The SSD uses non-user space in the flash memory (over provisioning or OP) to improve performance and longevity of the SSD. In addition, any user space not consumed by the user becomes what we call dynamic over provisioning - dynamic because it changes as the amount of stored data changes.

Garbage collection starts when a flash block is full of data, usually a mix of valid (good) and invalid (older, replaced) data. The invalid data must be tossed out to make room for new data, so the Flash Storage Processor (FSP) copies the valid data of a flash block to a previously erased block, and skips copying the invalid data of that block. The final step is to erase the original whole block, preparing it for new data to be written.
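As a rough illustration of those steps, here is a minimal Python sketch. The page representation (a `(data, is_valid)` tuple, with `None` for an erased page), the four-page block, and the `garbage_collect` function name are all assumptions for the example. A real Flash Storage Processor tracks validity in its own internal map.

```python
def garbage_collect(block, erased_block):
    """Copy valid pages from `block` into `erased_block`, then erase `block`.

    Each page is a (data, is_valid) tuple; an erased page is None.
    Returns the number of pages that had to be copied.
    """
    copied = 0
    for page in block:
        if page is not None and page[1]:   # skip invalid (older, replaced) data
            erased_block[copied] = page
            copied += 1
    block[:] = [None] * len(block)         # erase the original whole block
    return copied


full_block = [("A", True), ("B-old", False), ("C", True), ("D-old", False)]
spare_block = [None] * 4
copied = garbage_collect(full_block, spare_block)
```

In this example only two of the four pages are copied, which leaves two erased pages in the destination block for new data.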

When less data is stored by the user, the amount of dynamic OP increases, further improving performance and endurance. The problem I alluded to earlier is caused by the lack of a delete command. Without a delete command, every SSD will eventually fill up with data, both valid and invalid, eliminating any dynamic OP. The result would be the lowest possible performance at that factory OP level. So unlike HDDs, SSDs need to know what data is invalid in order to provide optimum performance and endurance.
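A small worked example makes the arithmetic concrete. The capacity figures and the `dynamic_op_gb` helper below are hypothetical. The only idea taken from the text is that dynamic OP is the user space the SSD does not believe holds valid data.

```python
def dynamic_op_gb(user_capacity_gb, believed_valid_gb):
    """User space the SSD can treat as spare: capacity minus data it believes is valid."""
    if not 0 <= believed_valid_gb <= user_capacity_gb:
        raise ValueError("believed_valid_gb must be between 0 and user_capacity_gb")
    return user_capacity_gb - believed_valid_gb


USER_CAPACITY_GB = 240   # assumed user-visible capacity
files_kept_gb = 100      # assumed amount of data the user still wants

# If the SSD knows which data was deleted, it only counts the kept files as valid.
with_delete_info = dynamic_op_gb(USER_CAPACITY_GB, files_kept_gb)

# Without that knowledge, every address ever written still looks valid,
# so over time the whole user capacity is treated as full.
without_delete_info = dynamic_op_gb(USER_CAPACITY_GB, USER_CAPACITY_GB)
```

Under these assumed numbers the drive has 140 GB of dynamic OP in the first case and none in the second, leaving only the factory OP to work with.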

Keeping your SSD TRIM

A number of years ago, the storage industry got together and developed a solution between the OS and the SSD by creating a new SATA command called TRIM. It is not a command that forces the SSD to immediately erase data, as some people believe. Rather, the TRIM command can be thought of as a message from the OS about which previously used addresses on the SSD no longer hold valid data. The SSD takes those addresses and updates its own internal map of its flash memory to mark those locations as invalid. With this information, the SSD no longer moves that invalid data during the garbage collection (GC) process, eliminating the time wasted rewriting invalid data to new flash pages. It also reduces the number of write cycles on the flash, increasing the SSD's endurance. Another benefit of the TRIM command is that more space is available for dynamic OP.
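The effect of that map update on garbage collection can be sketched as follows. The `ToySSD` class and its method names are assumptions for illustration. This is a model of the idea, not the SATA command format or any real controller's firmware.

```python
class ToySSD:
    """Hypothetical SSD that tracks which logical addresses it believes are valid."""

    def __init__(self):
        self.valid = {}                    # logical address -> believed valid?

    def write(self, lba):
        self.valid[lba] = True

    def trim(self, lbas):
        # TRIM is a message, not an erase: only the internal map is updated.
        for lba in lbas:
            if lba in self.valid:
                self.valid[lba] = False

    def pages_to_copy_during_gc(self, block_lbas):
        # Garbage collection must relocate every page still believed valid.
        return sum(1 for lba in block_lbas if self.valid.get(lba, False))


ssd = ToySSD()
for lba in range(8):
    ssd.write(lba)

block = [0, 1, 2, 3]                       # addresses stored in one flash block
before = ssd.pages_to_copy_during_gc(block)
ssd.trim([2, 3, 4, 5])                     # the OS reports a deleted file's addresses
after = ssd.pages_to_copy_during_gc(block)
```

Before the TRIM message all four pages in the block would be copied. Afterward only two are, which is where the savings in time and write cycles come from.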

Today, most current operating systems and SSDs support TRIM, and all SandForce-based SSDs have always supported TRIM. Note that most RAID environments do not support TRIM, although some RAID 0 configurations have claimed to support it.

Can data reduction technology substitute for TRIM and drain the invalid data away?

Imagine filling a bathtub with water and asking someone to empty it while you turn your back for a moment. When you look again and see the water is gone, do you just assume someone pulled the drain plug? I think most people would, but what about other methods of removing the water, such as a siphon or buckets to bail it out? In a typical bathroom you are not likely to see these other methods used, but that does not mean they do not exist. The point is that just because you see a certain result does not necessarily mean the obvious solution was used.

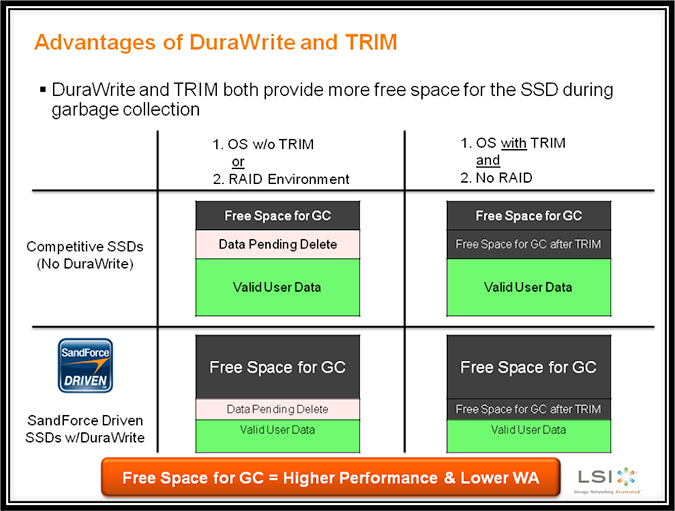

I see a lot of confusion in forum posts from SandForce-based SSD users and reviewers over how LSI's DuraWrite data reduction and advanced garbage collection technology relates to the SATA TRIM command. As explained above, without the TRIM command the SSD assumes all of the user capacity is valid data. Creating more free space, whether through over provisioning or by using less of the total capacity, enables the SSD to operate more efficiently by reducing write amplification, which leads to increased performance and flash memory endurance. So without TRIM the SSD operates at its lowest level of efficiency for a particular level of over provisioning.

Will you drown in invalid data without TRIM?

TRIM is a way to increase the free space on an SSD - what we call "dynamic over provisioning" - and DuraWrite technology is another method to increase the free space. Since DuraWrite technology is dependent upon the entropy (randomness) of the data, some users will get more free space than others depending on what data they store. Since the technology works on the basis of the aggregate of all data stored, boot SSDs with operating systems can still achieve some level of dynamic over provisioning even when all other files are at the highest entropy, e.g., encrypted or compressed files.
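The dependence on entropy can be illustrated with any general-purpose compressor. The sketch below uses Python's `zlib` purely as a stand-in. It is not DuraWrite, whose internals are not described here, and the sample data and the `stored_fraction` helper are assumptions for the example.

```python
import os
import zlib


def stored_fraction(data: bytes) -> float:
    """Fraction of the original size left after compression (stand-in for data reduction)."""
    return len(zlib.compress(data)) / len(data)


# Low-entropy data: highly repetitive, standing in for compressible files.
low_entropy = b"operating system file " * 4096

# High-entropy data: random bytes, standing in for encrypted or compressed files.
high_entropy = os.urandom(len(low_entropy))

low = stored_fraction(low_entropy)
high = stored_fraction(high_entropy)

# Aggregate over everything stored: the compressible part still frees up space
# even though the high-entropy part does not shrink at all.
total_stored = len(zlib.compress(low_entropy)) + len(zlib.compress(high_entropy))
aggregate = total_stored / (len(low_entropy) + len(high_entropy))
```

With this stand-in, the repetitive data shrinks to a small fraction of its size, the random data does not shrink, and the aggregate lands in between. That is the behavior the paragraph above describes for a boot SSD holding a mix of operating system files and high-entropy files.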

With an older operating system or in an environment that does not support TRIM (most RAID configurations), DuraWrite technology can provide enough free space to offer the same benefits as having TRIM fully operational. In cases where both TRIM and DuraWrite technology are operating, the combined result may not be as noticeable as when they're working independently since there are diminishing returns when the free space grows to greater than half of the SSD storage capacity.

So the next time you fill your bathtub, think about all the ways you can get the water out of the tub without using the drain. That will help you remember that both TRIM and DuraWrite technology can improve SSD performance using different approaches to the same problem. If that analogy does not work for you, consider the different ways to produce a furless feline, and think about what opening graphic image I might have used for a more jolting effect. I presented on this topic in detail at the Flash Memory Summit in 2011. You can read it in full here.

Kent Smith is senior director of marketing for LSI's Flash Components Division, overseeing all outbound marketing and performance analysis. Prior to LSI, Kent was senior director of corporate marketing at SandForce, acquired by LSI in 2012. His more than 25 years of marketing and management experience in computer storage and high-technology includes senior management positions at companies including Adaptec, Acer, Polycom, Quantum and SiliconStor.

Republished with permission.