A recent shipment of what appears to be Nvidia's upcoming Pascal GPU from Taiwan to Bangalore has revealed some possible information about the chip before its launch later this year.

The shipment information suggests that Nvidia's GP104 Pascal GPU will be 37.5 x 37.5 mm and will feature 2,152 pins. While there's no firm information to confirm such a move, the surprisingly small die size indicates Nvidia will not use high-bandwidth memory (HBM) along with this GPU.

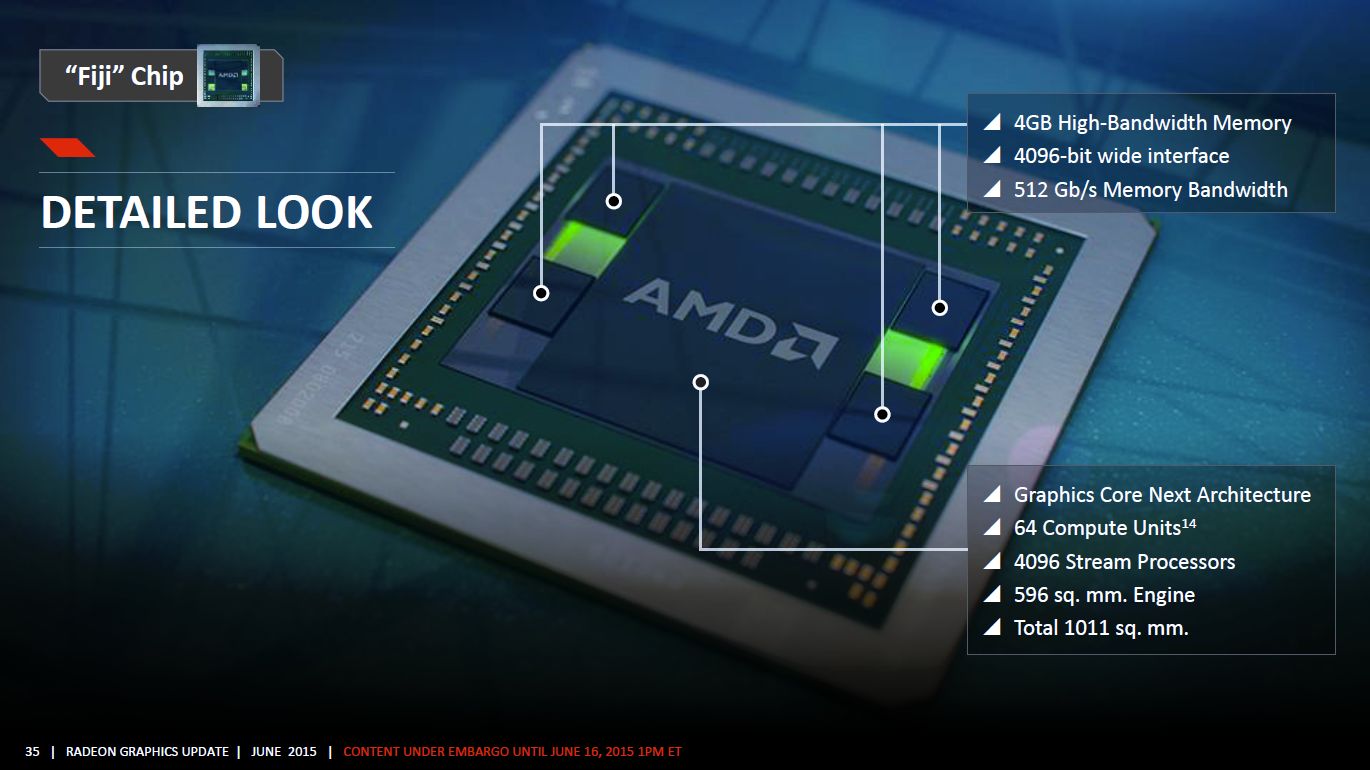

The reason why it appears Nvidia will not use HBM with the GP104 is that a die of this size could not accommodate both a high-performance GPU and the HBM chips, which sit on the same substrate. Instead, current speculation suggests GP104 graphics cards will use GDDR5 or GDDR5X, resulting in less memory bandwidth than a HBM solution.

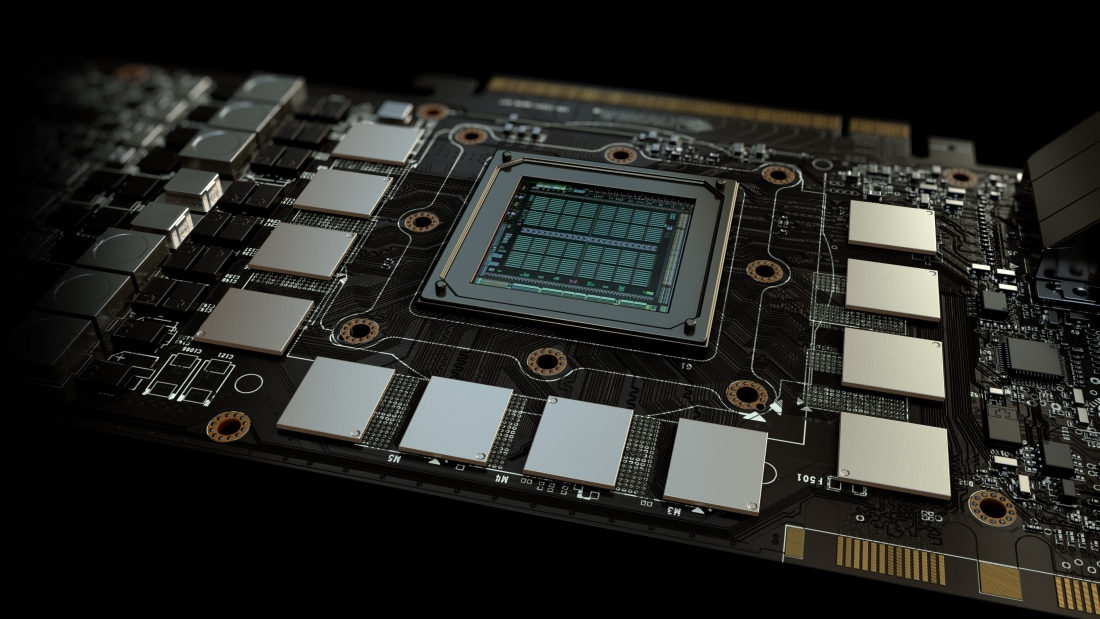

Meanwhile, the top-end GP100 GPU is expected to come in at 55 x 55mm, which is larger than the Maxwell-based GM200 (seen above). Factoring in a manufacturing process shrink to 16nm, GP100's extra die size will likely be used to accommodate HBM, making it Nvidia's first GPU to use the technology.

Pascal is still several months away, and the information in this latest report is far from concrete, so it should be taken with a grain of salt. However it shouldn't be too long before we get a look at what Nvidia has in store for 2016.

https://www.techspot.com/news/63436-nvidia-pascal-gp104-gpu-gddr5-hbm.html