Announced earlier this year, Nvidia's "GeForce Experience" has finally hit beta – albeit in limited scope. The service is open to the first 10,000 users who register, after which signups will be closed until Nvidia has a chance to analyze user feedback and squash major bugs. Along with being restricted to a relatively small number of users, the service currently only supports 32 games played with Fermi and Kepler GPUs, though more titles will be added in the coming weeks.

For those unfamiliar with the service, the GeForce Experience is an initiative intended to help folks run games with settings optimized for their hardware. According to a survey conducted last year, more than 80% of users play PC games with default settings – presumably because they simply don't know any better. Nvidia notes that even enthusiasts have to spend time – sometimes hours – researching and tinkering to get the most out of a game.

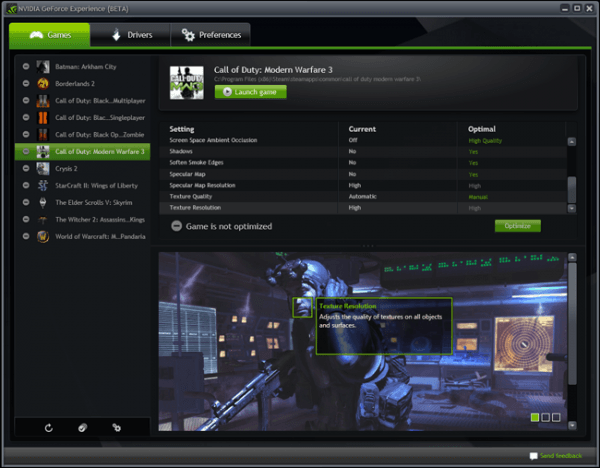

The company aims to simplify that process with the GeForce Experience, which is installed as a local client and taps into cloud-based supercomputers to determine and download the best in-game settings for your machine. In addition to automating the configuration of quality settings, the GeForce Experience software streamlines driver updates by automatically downloading them in the background and prompting you when they're ready to be installed.

Nvidia outlined the six-step process it uses to test each game:

- We start with expert game testers who play through key levels of the game (indoors, outdoors, multiplayer, etc.) to get a feel for the load and how different settings affect quality and performance.

- The game tester identifies an area for automated testing. This area will be from a demanding portion of the game. We don't always select the absolute worst case since it tends to distort the results.

- As part of the game evaluation, the expert game tester will identify an appropriate FPS target. Fast-paced games typically require higher FPS; slower games can run at lower FPS. We also define and test against a minimum FPS to minimize stuttering. The average framerate target is typically between 40 and 60 FPS, and the minimum is 25 FPS.

- The most difficult part of OPS (Optimal Playable Settings) is deciding which settings to turn on and which to leave off in a performance-limited setting. This is done by analyzing each setting and assigning it quality and performance weights. The game tester compares how each setting (e.g. shader, texture, shadow) and each quality level (e.g. low, medium, high) affects image quality and performance. These are stored as weights which are fed to the automation algorithm.

- From here on the testing is automated. The GeForce Experience supercomputer tests the game by turning on settings until the FPS target is reached. This is done in the order of maximum bang for the buck: settings that provide the most visual benefit and least stress on the GPU (e.g. texture quality) are turned on first, while settings that are performance-intensive but visually subtle (e.g. 8xAA) are enabled last.

- Finally, the GeForce Experience supercomputer goes through and tests thousands of hardware configurations for the given game. Unique settings are generated for each CPU, GPU, and monitor resolution combination.
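
To make the "bang for the buck" ordering concrete, here is a minimal Python sketch of a greedy selection along the lines Nvidia describes. It is not Nvidia's actual algorithm: the `Setting` type, the `choose_settings` function, the additive frame-time cost model, and every number below are assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Setting:
    name: str
    quality_weight: float  # hypothetical tester-assigned visual benefit
    frame_cost_ms: float   # hypothetical extra frame time when enabled


def choose_settings(settings, base_frame_ms, target_fps):
    """Greedily enable settings, best quality-per-cost first.

    Assumes each setting adds a fixed number of milliseconds to the
    frame time (a simplification; real costs interact with each other).
    Stops at the first setting that would drop below the FPS target.
    """
    budget_ms = 1000.0 / target_fps
    frame_ms = base_frame_ms
    enabled = []
    ranked = sorted(
        settings,
        key=lambda s: s.quality_weight / max(s.frame_cost_ms, 1e-9),
        reverse=True,
    )
    for setting in ranked:
        if frame_ms + setting.frame_cost_ms > budget_ms:
            break  # FPS target reached; leave the rest off
        frame_ms += setting.frame_cost_ms
        enabled.append(setting.name)
    return enabled, 1000.0 / frame_ms


# Illustrative weights only, not measured data.
candidates = [
    Setting("8xAA", quality_weight=2.0, frame_cost_ms=8.0),
    Setting("texture quality", quality_weight=8.0, frame_cost_ms=1.0),
    Setting("shadows", quality_weight=6.0, frame_cost_ms=3.0),
]

enabled, estimated_fps = choose_settings(
    candidates, base_frame_ms=10.0, target_fps=50
)
print(enabled, round(estimated_fps, 1))
```

With these made-up numbers, texture quality and shadows fit within the 20 ms frame budget and 8xAA is left off, which matches the ordering in Nvidia's description. In the real service the process would be repeated for every CPU, GPU, and resolution combination, with measured frame rates rather than a fixed cost table.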