It always surprises us how often we get requests for Crossfire and SLI benchmarks. Despite flat-out telling readers not to invest in either technology for years now, there still seems to be quite a lot of interest. That's even more surprising given that AMD, and Nvidia in particular, have made no secret of the fact that they are pulling back on multi-GPU technology.

On our end, we've been stubborn about it and for the past year we have basically refused to check it out. But recently two RX 590 cards came our way and we thought, why not?

The last time we ran a multi-GPU comparison at TechSpot was back in 2015, when we tested a GeForce GTX 980 Ti SLI configuration against Radeon R9 Fury X Crossfire. The Fury X cards came out on top by a small 4% margin across the 10 games tested at 4K. After that we found fewer reasons to run a comparison, and we turned our attention to achieving playable frame rates at 4K.

So we tested two GTX 1080s in SLI, and later in 2016 we coupled two Titan X cards in what we called the ludicrous graphics test, because the graphics cards alone cost upwards of $2,400. Little did we know that GPU prices would go mad shortly thereafter.

For today's test we have a dozen modern games, and we're going to see how two RX 590s compare to individual cards at 1080p and 1440p. For comparison we have nine other graphics cards, including high-end models such as the Vega 64 and RTX 2070. Our test bench consists of a Core i7-8700K with 16GB of DDR4-3400 memory, built inside a Corsair Crystal 570X case.

Benchmarks

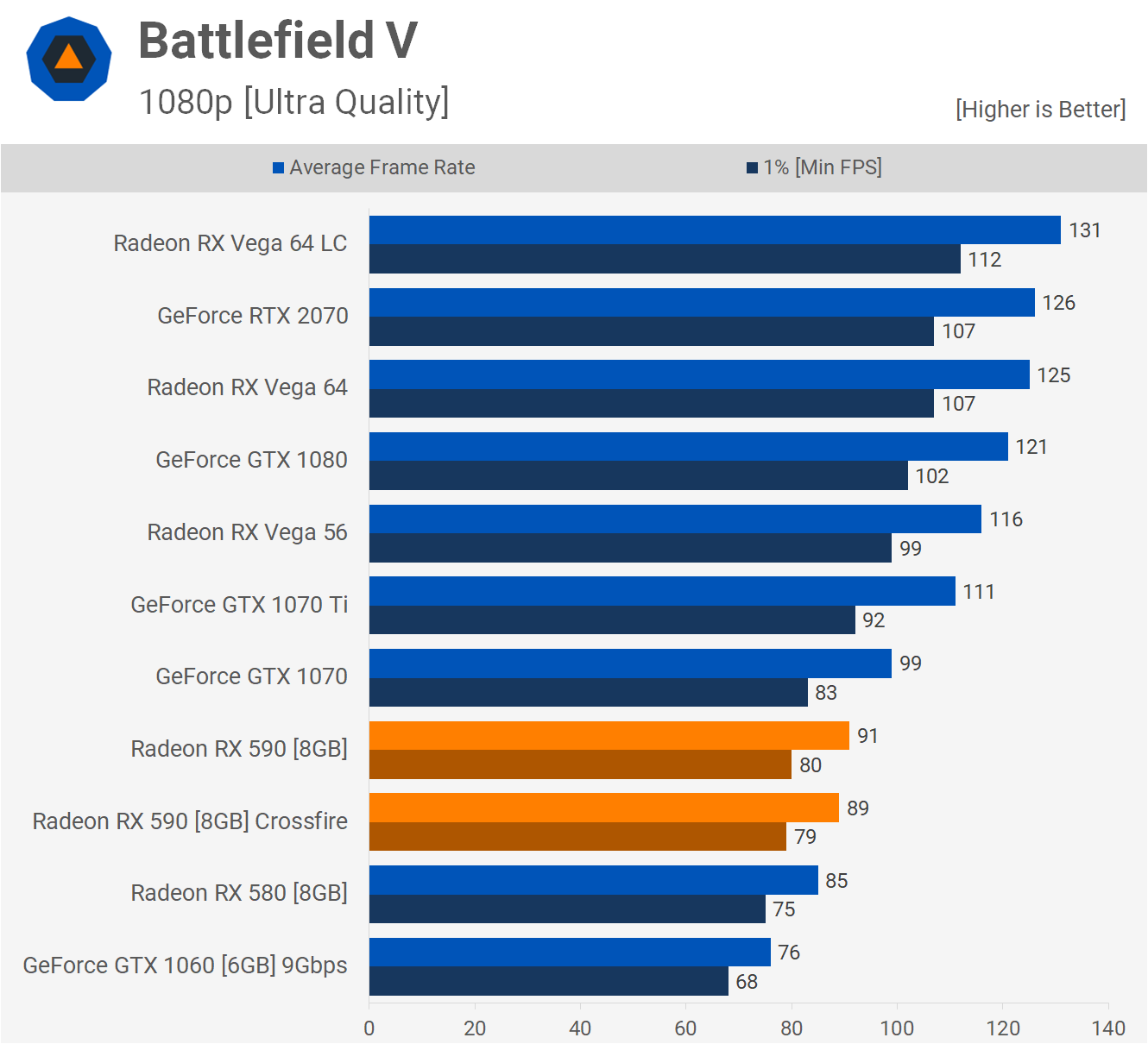

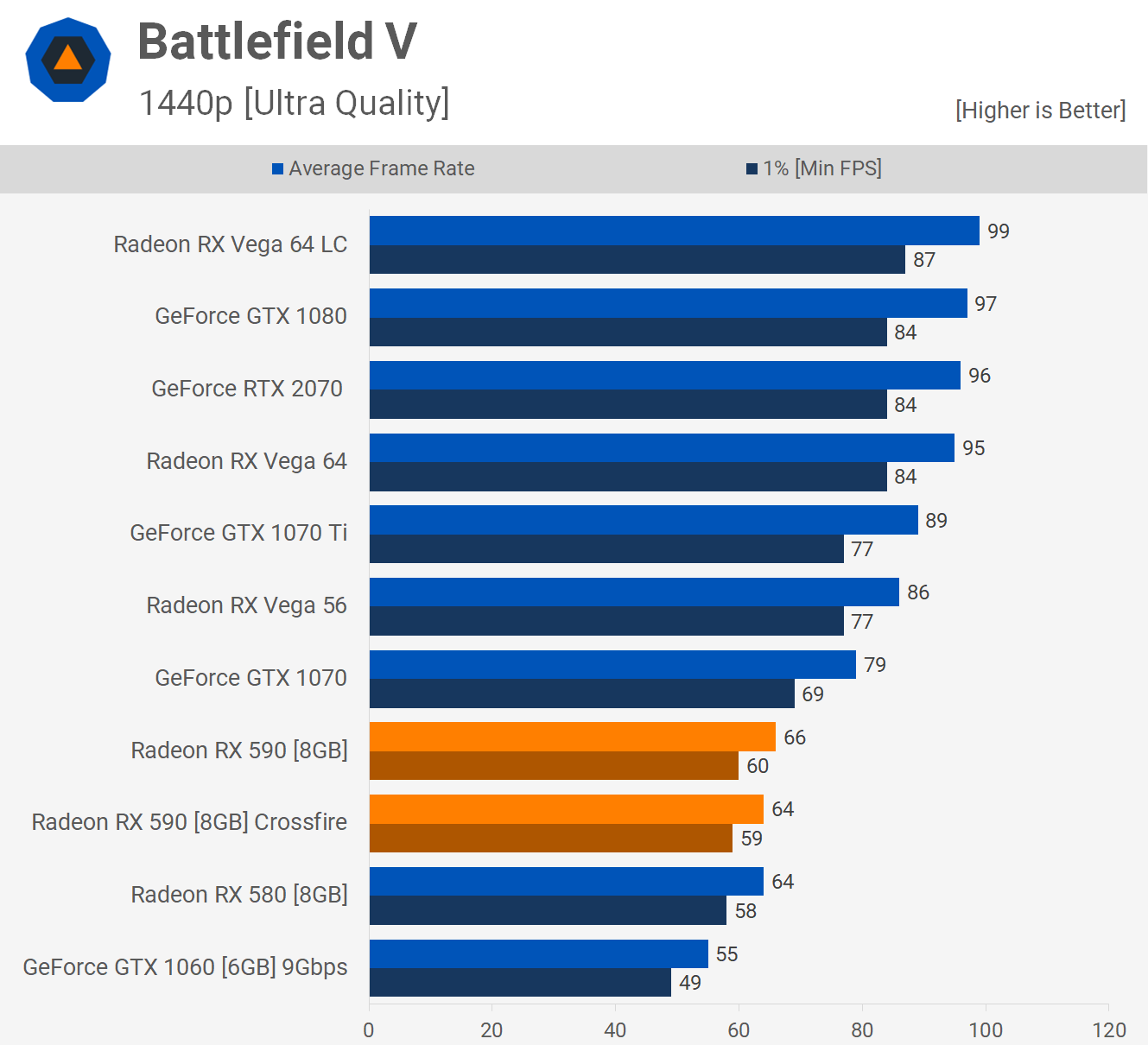

Battlefield V was the first title we tested, and Crossfire doesn't appear to work here at all. The second card adds no performance at 1080p, and the same is true at 1440p.

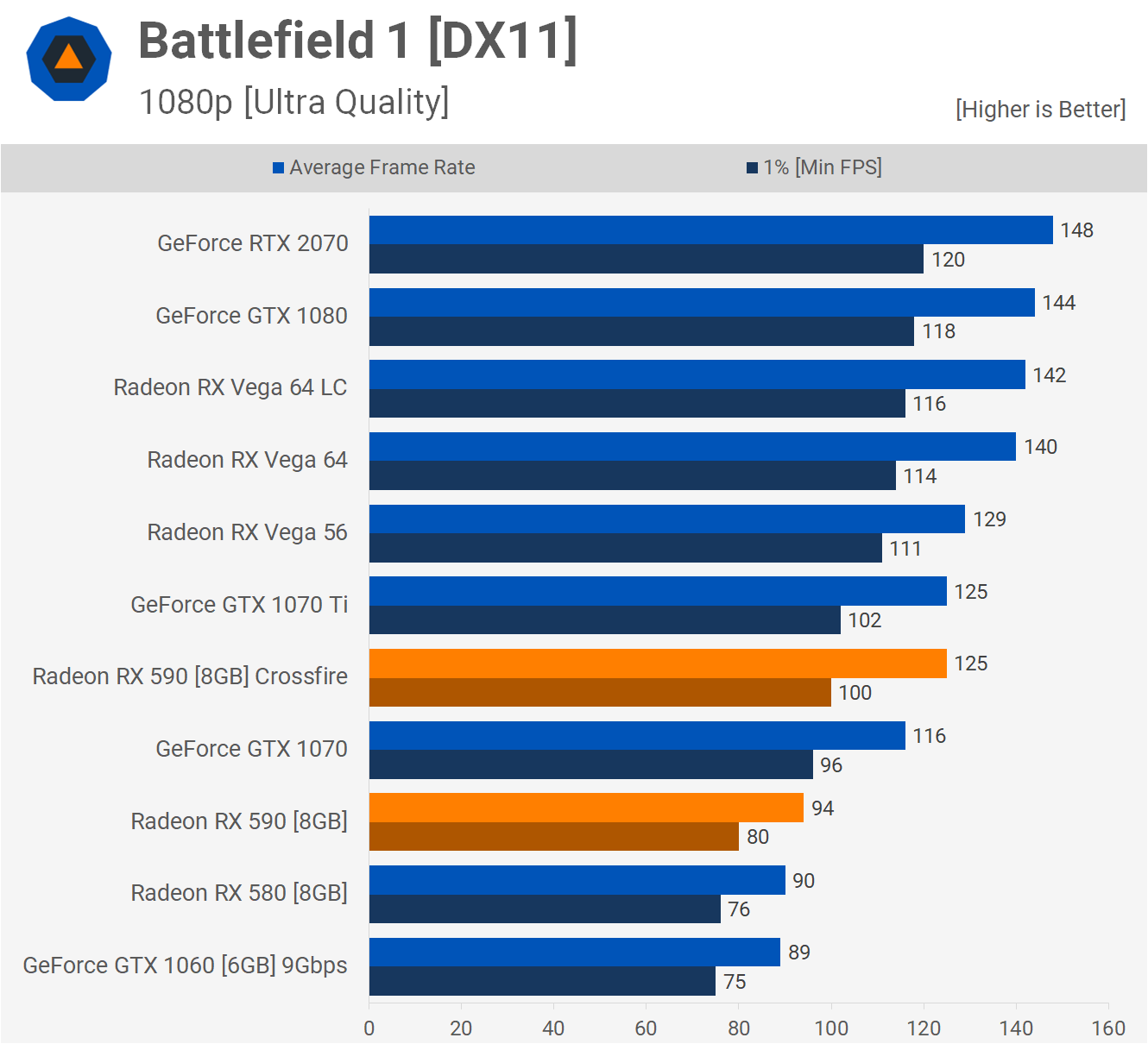

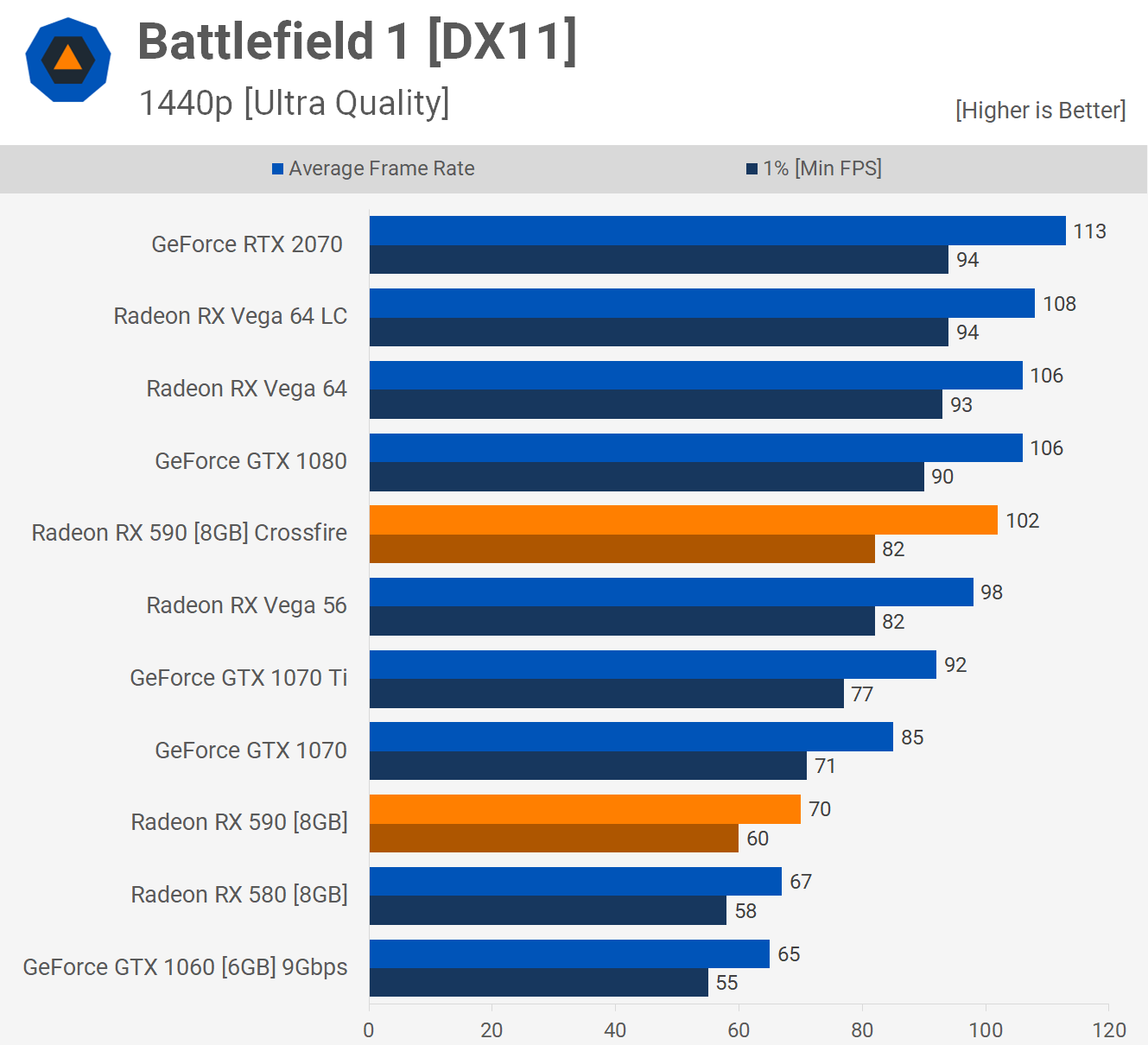

That was really surprising, as Crossfire does work in Battlefield 1.

There we see a 33% gain in the average frame rate at 1080p. Not great, but a big improvement over nothing, and scaling improves at 1440p, where the RX 590s in Crossfire boosted performance by 46%.

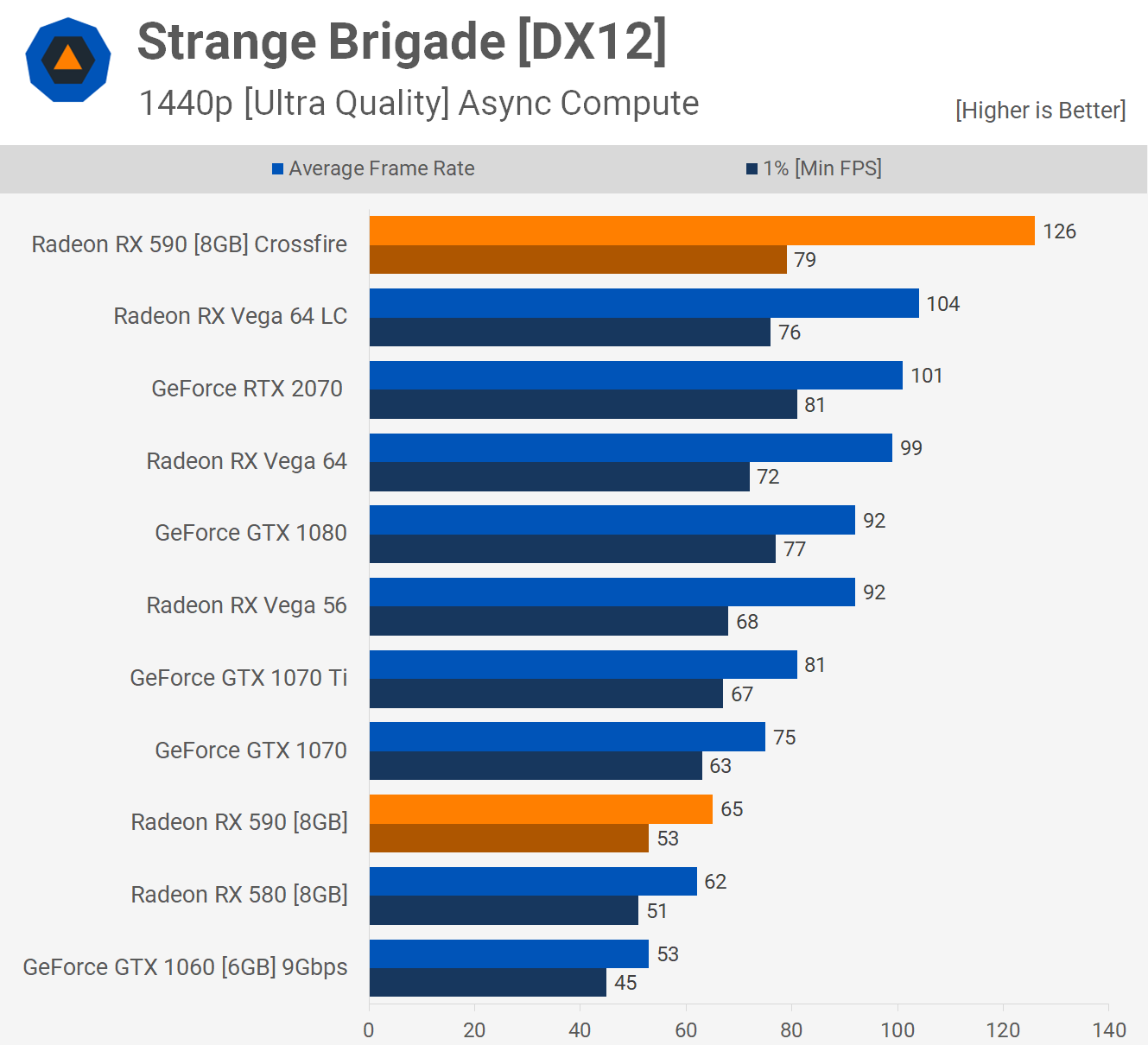

The best example of Crossfire scaling we came across in this new batch of games was Strange Brigade. Here the Crossfire RX 590s boosted the average frame rate by almost 90% at 1080p and, better yet, frame time performance remained very good.

At 1440p scaling climbs to 94%, an exceptional result for the Crossfired 590s.
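The scaling percentages quoted throughout this review compare the dual-card average frame rate against a single card. As a minimal sketch (the function name and the frame rates below are hypothetical, not our measured data), the calculation looks like this, where 100% would mean the second card doubled performance and a negative value means Crossfire made things worse:

```python
def scaling_percent(single_fps, dual_fps):
    """Percentage gain from adding a second card; 100% would be perfect scaling."""
    return (dual_fps / single_fps - 1.0) * 100.0

# Hypothetical frame rates, chosen only to mirror the kinds of results discussed here.
print(round(scaling_percent(50.0, 97.0), 1))  # near-perfect scaling, like Strange Brigade at 1440p
print(round(scaling_percent(60.0, 58.0), 1))  # negative scaling, like Hitman 2 or Forza Horizon 4
```

Note that this only measures average frame rates; as later results show, a big scaling number says nothing about frame time consistency.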

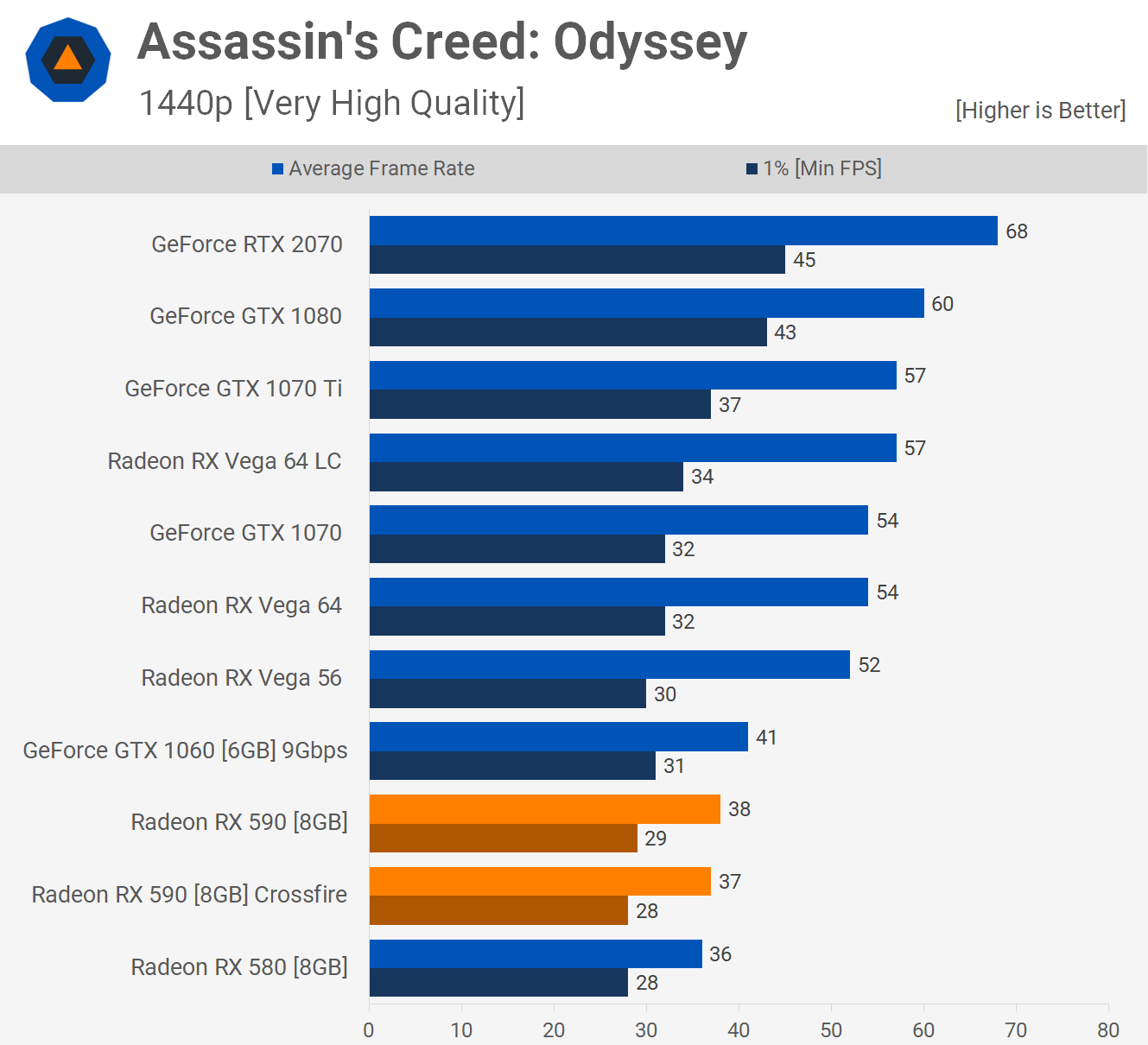

Radeon GPUs perform poorly in Assassin's Creed Odyssey, so it's unsurprising that Crossfire support is non-existent in this title. Whereas the 590s were faster than the RTX 2070 in Strange Brigade, here they are 44% slower.

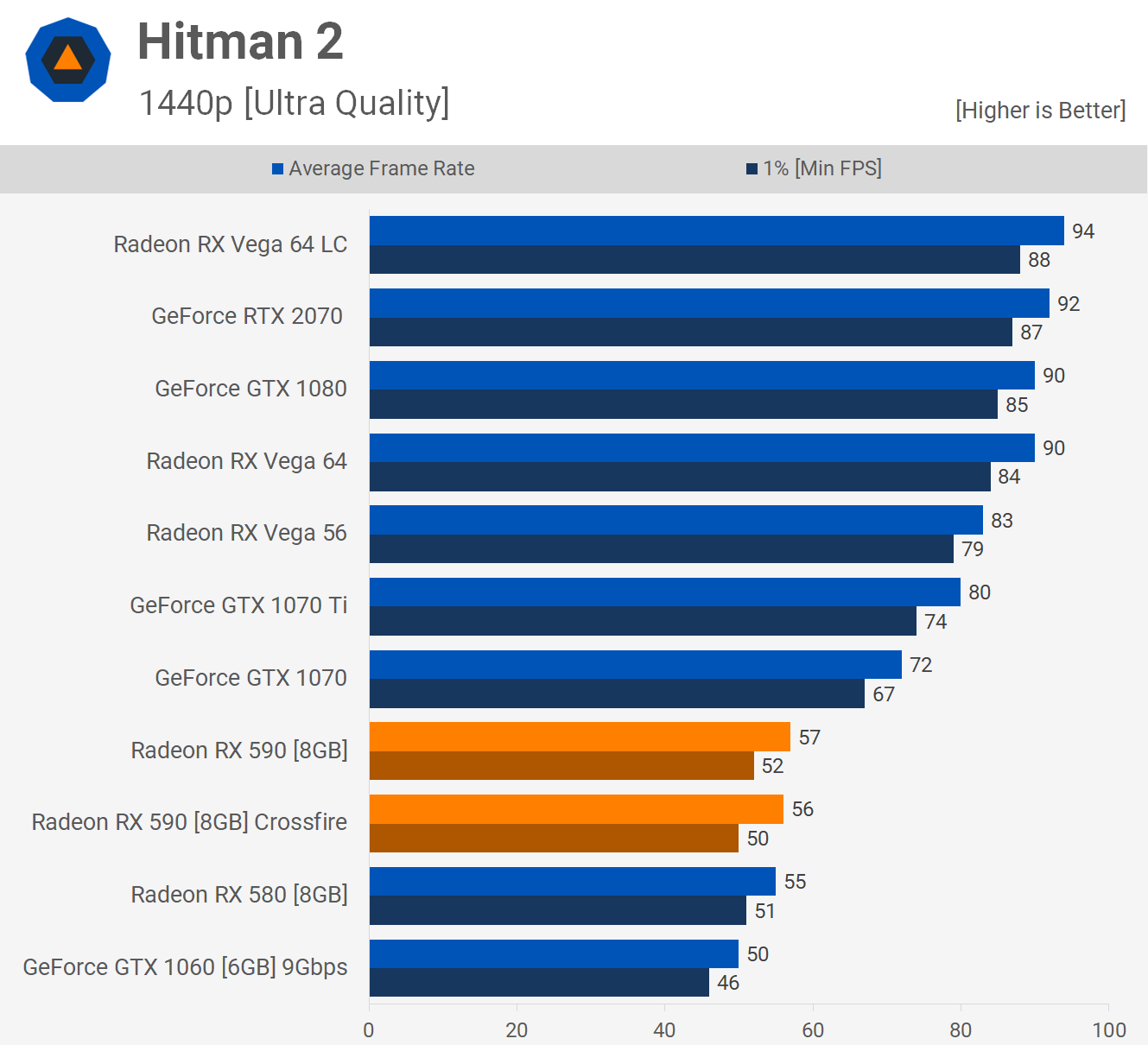

Hitman 2 is yet another title that lacks Crossfire support, and running with the technology enabled actually reduces performance slightly. The same is true at 1440p.

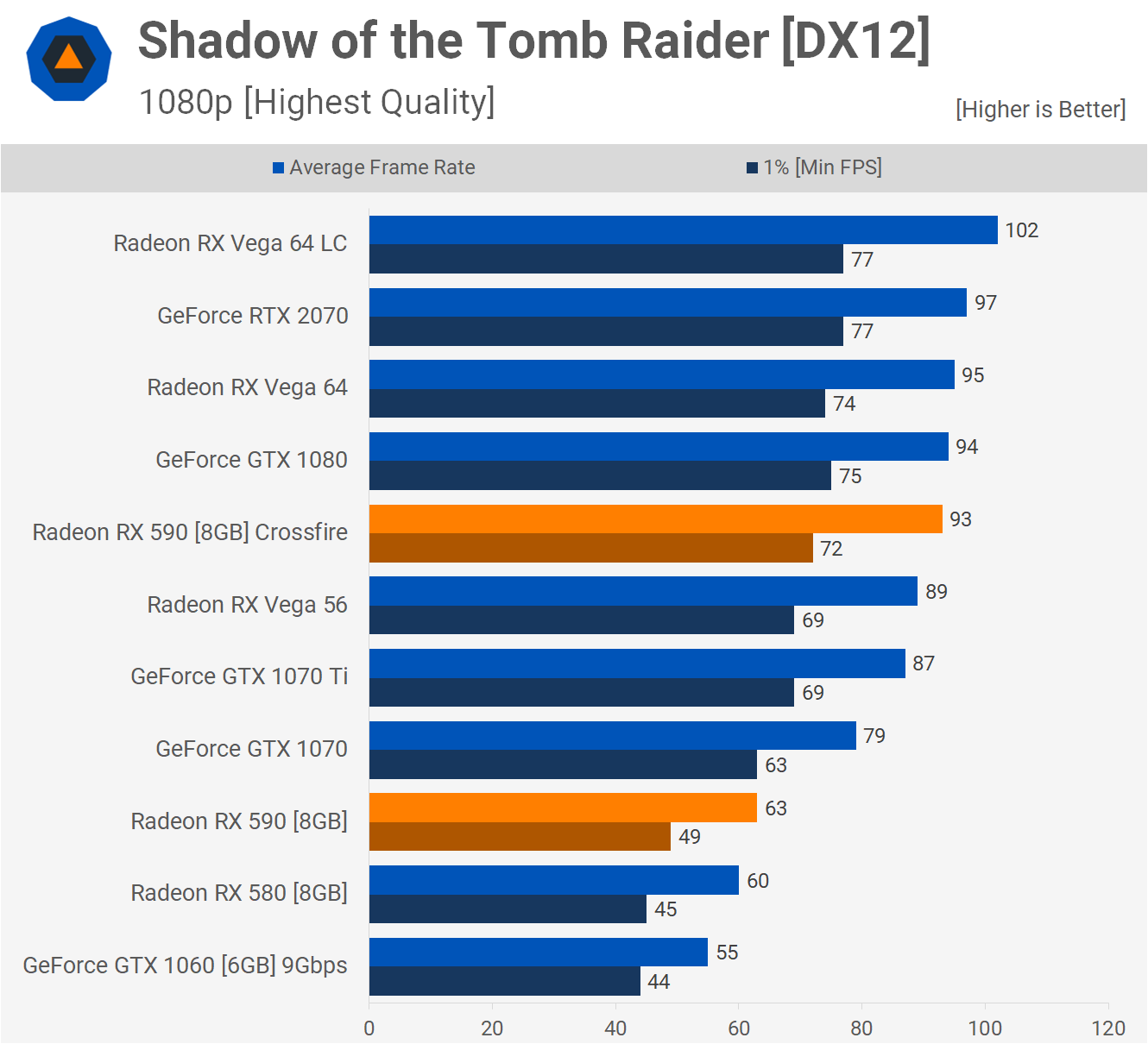

Shadow of the Tomb Raider delivers a 48% performance boost at 1080p, placing the RX 590s alongside the GTX 1080 and Vega 64. Scaling improves dramatically at 1440p, where a 61% boost puts the 590s on par with the RTX 2070. A solid result for this game.

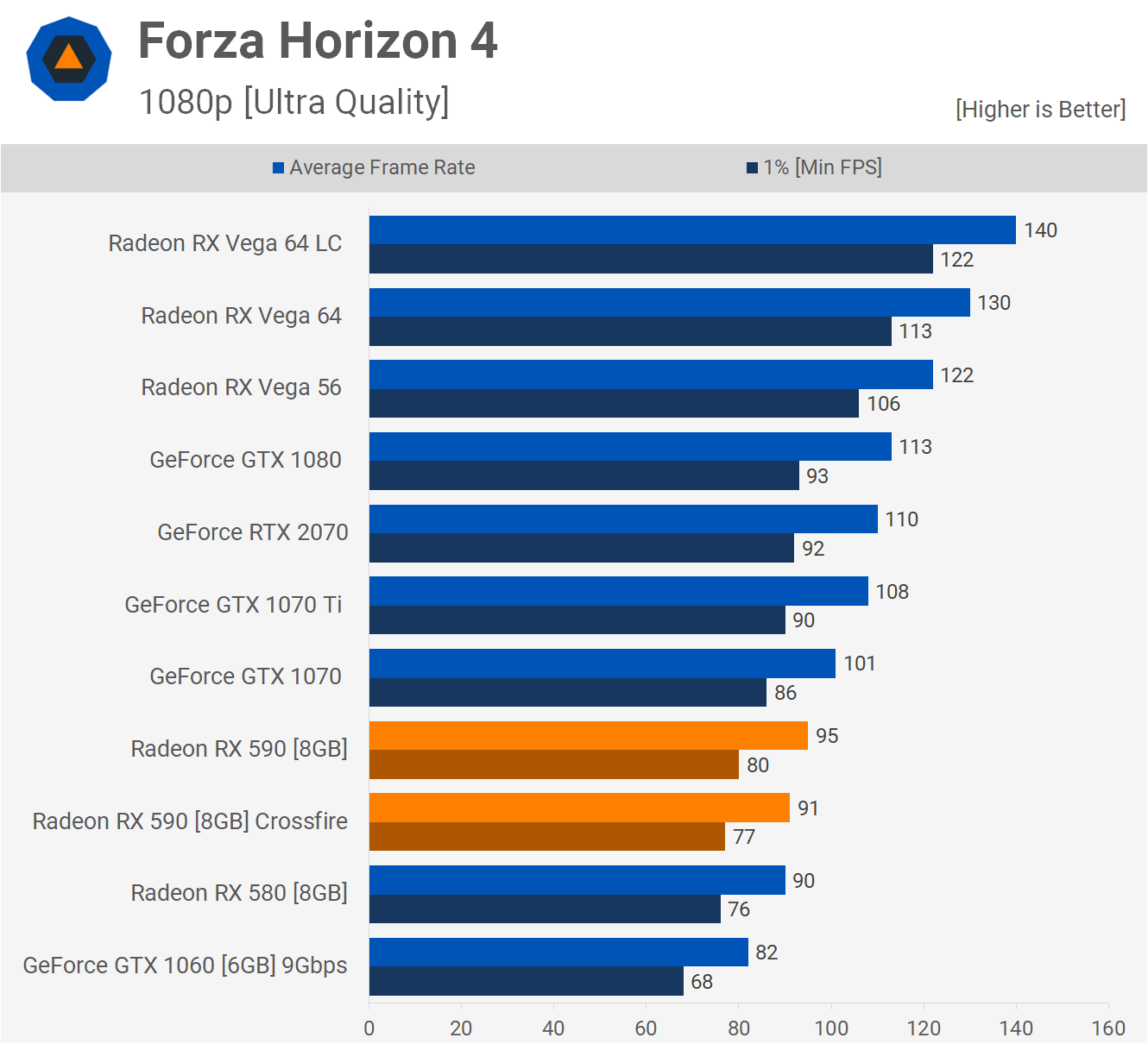

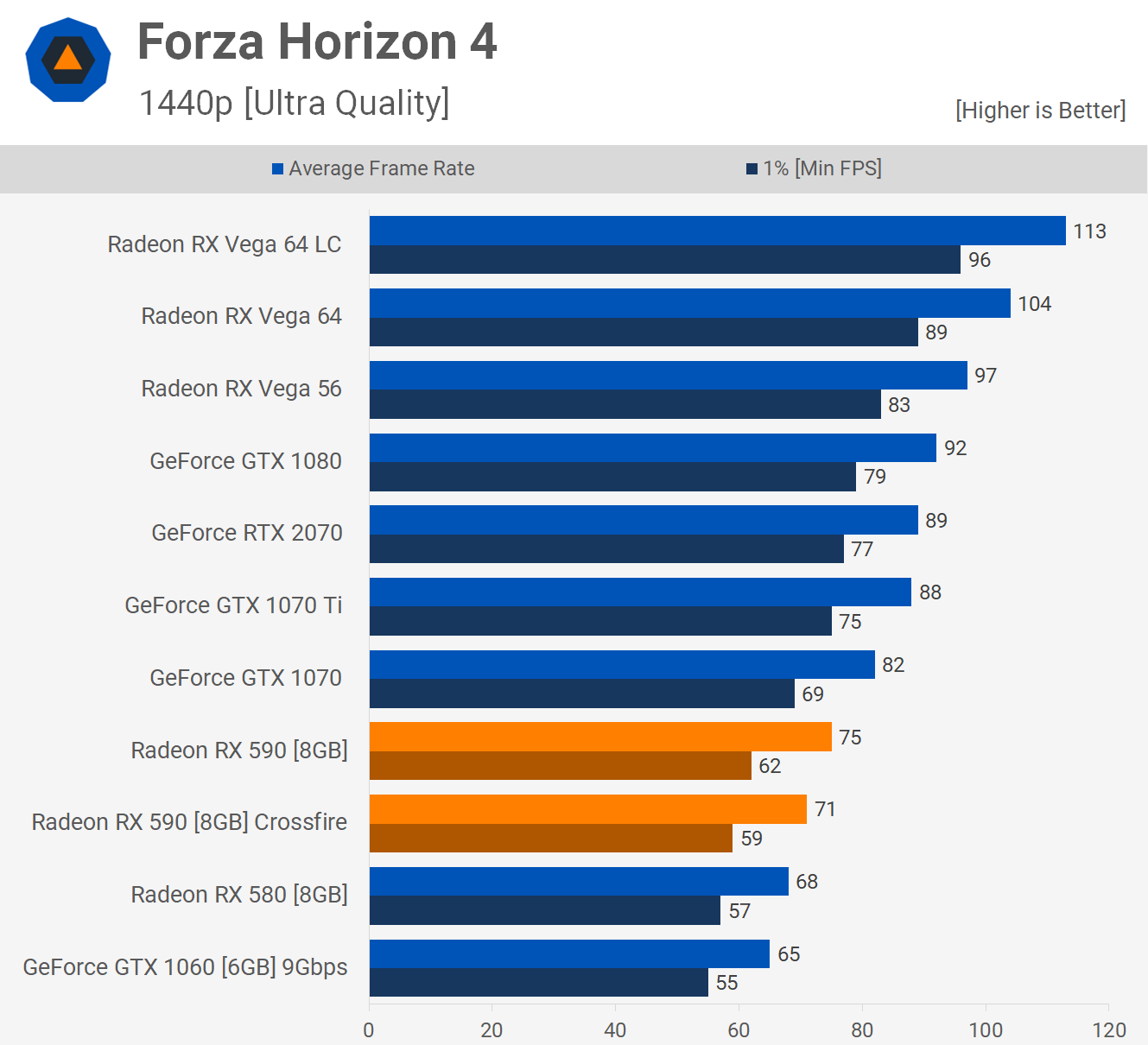

Forza Horizon 4 is another title where Crossfire isn't supported, so we saw no gains at 1080p or 1440p. In fact, performance regressed slightly.

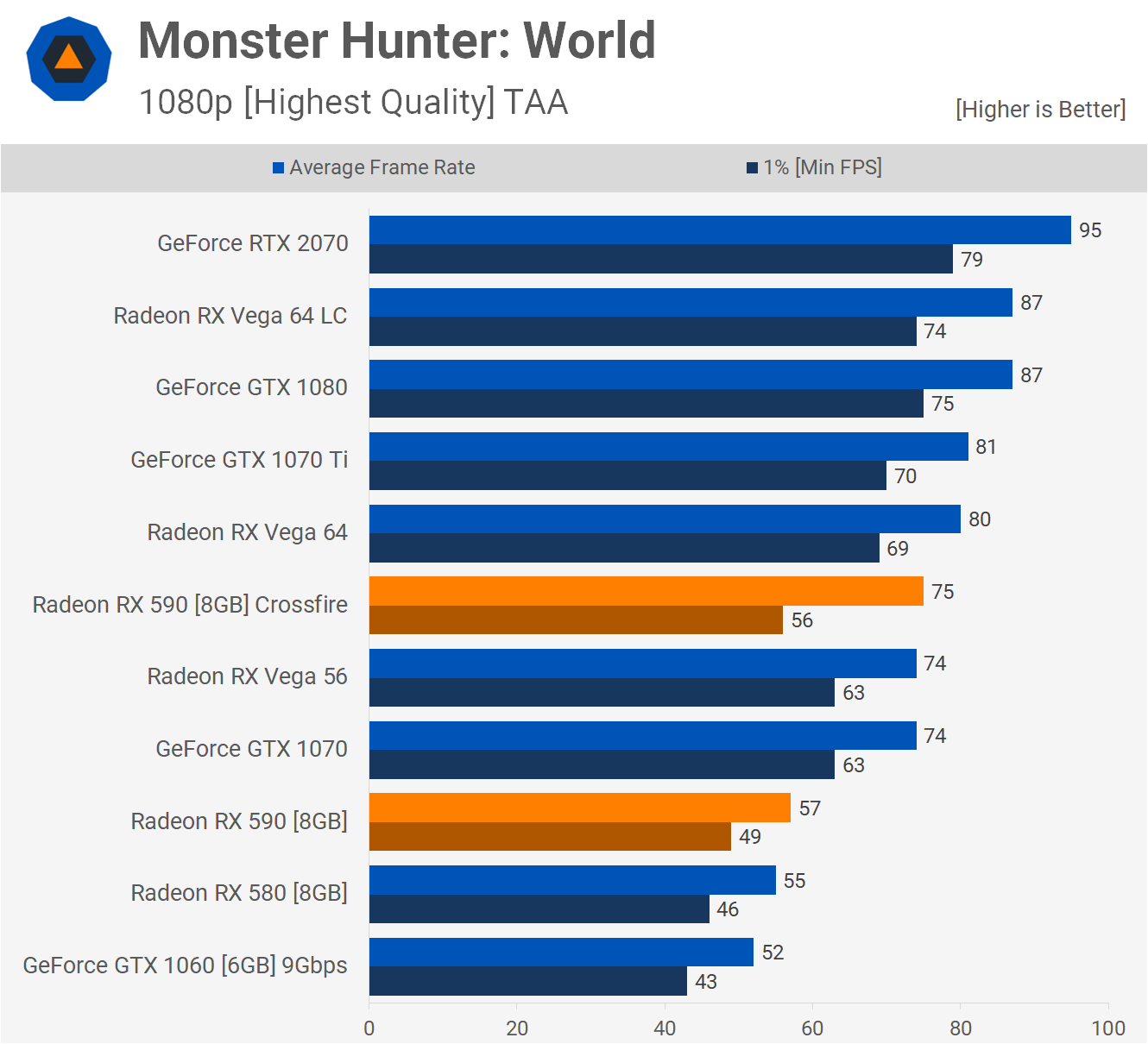

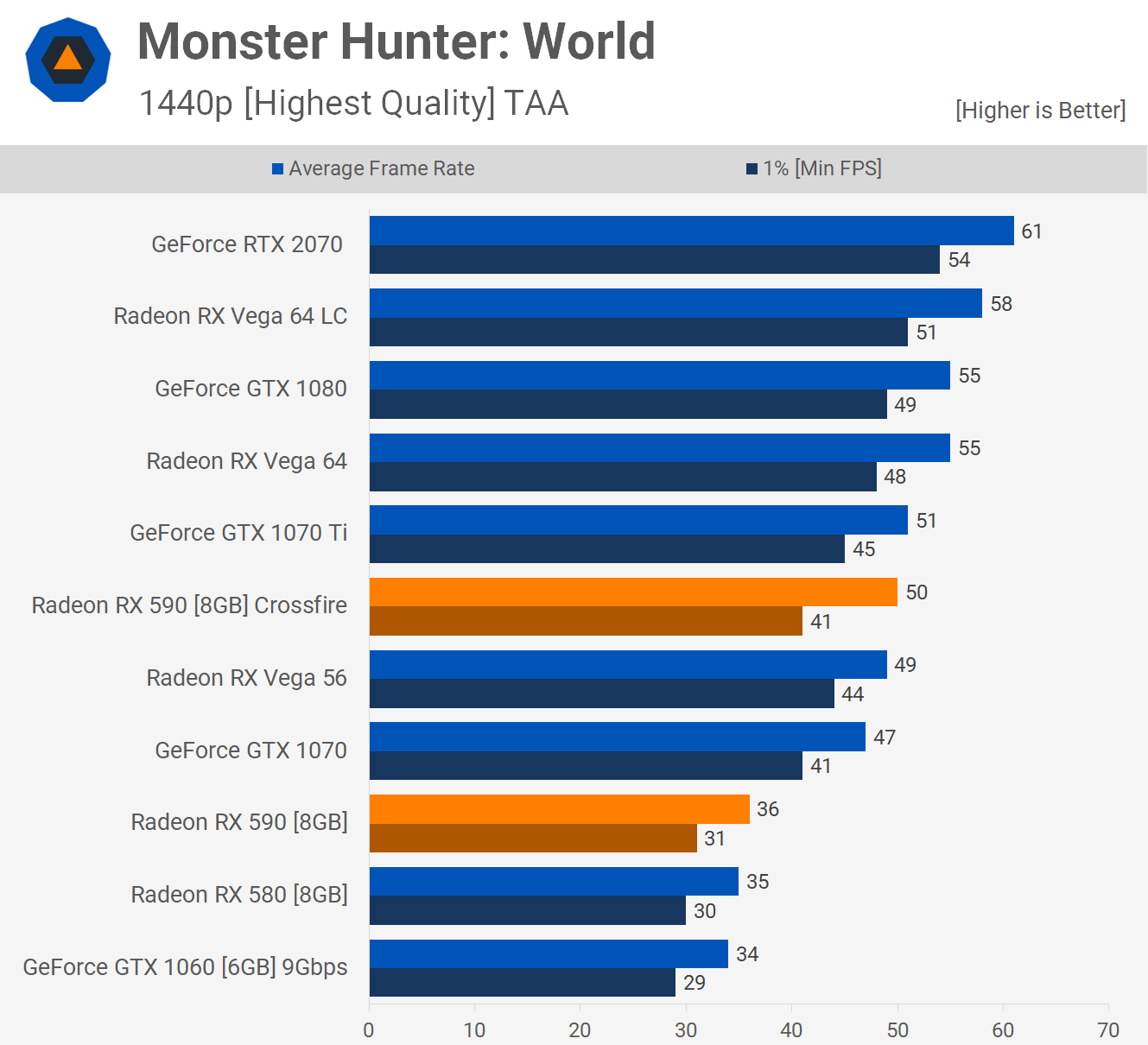

To my surprise, Monster Hunter World did support Crossfire, though frame time performance at 1080p was a little sketchy. We saw a 32% boost to the average frame rate, but only a 14% improvement in frame time performance.

Jumping up to 1440p helps iron this issue out, but even then scaling is below 40%, which is weak given the investment and hardly justifies a second graphics card.

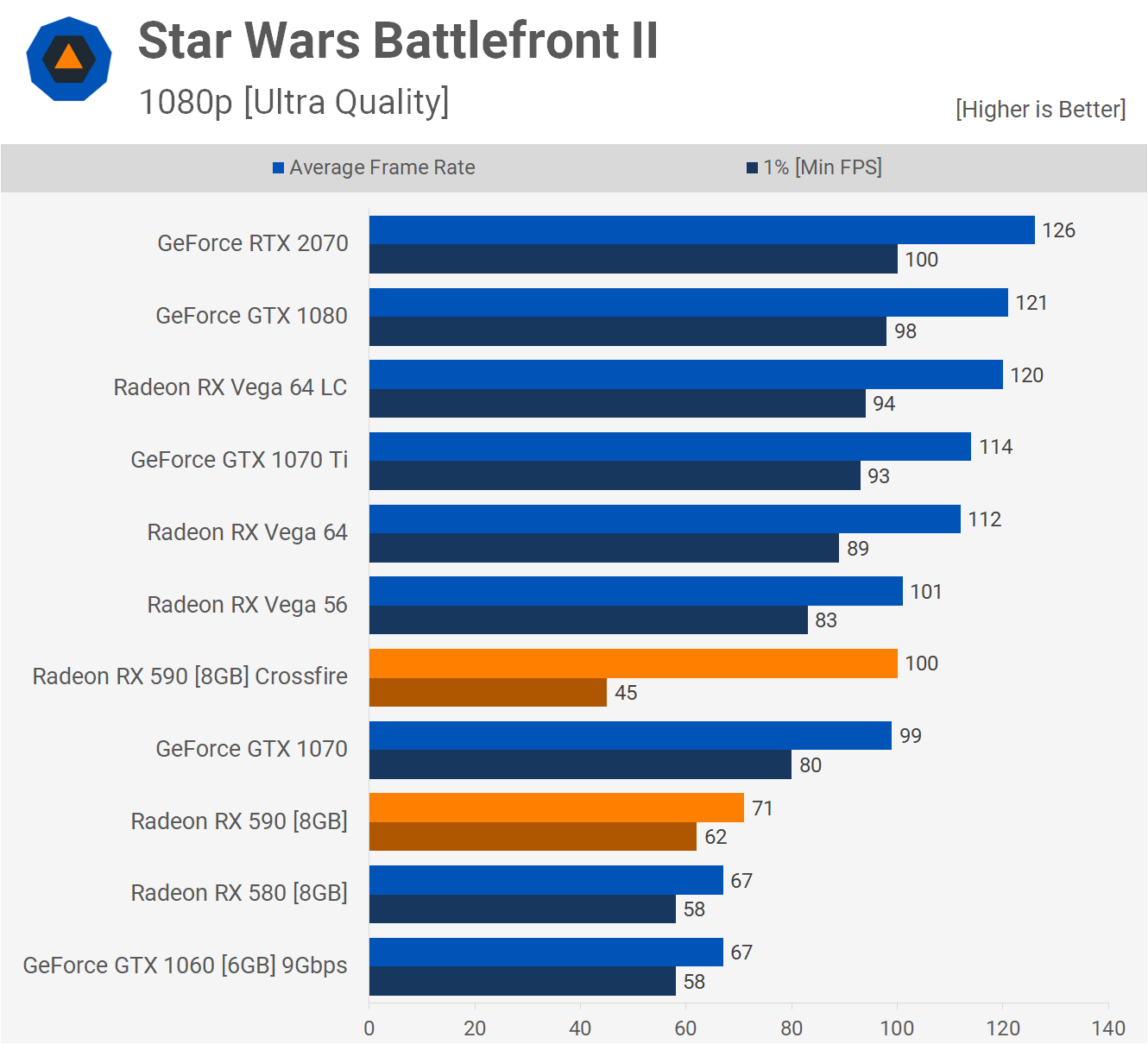

Here we have an example of a title that "looks good" when you focus on the average frame rate, but the experience was actually horrible. Despite averaging 100 fps in Star Wars Battlefront II at 1080p, frame time performance was shocking, dropping well below the result of a single 590. The game was basically unplayable and buggy with Crossfire enabled, and in this instance a steady 30 fps would provide a nicer gameplay experience.

The frame time issue persisted at 1440p. We didn't dig deep into finding a fix, but out of the box Crossfire does not work properly here, so you're better off with a single RX 590 in this title.
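Why can a 100 fps average feel worse than a steady 30 fps? The sketch below, using made-up frame times rather than our captures, computes both the average frame rate and a "1% low" figure. Benchmarking tools define 1% lows in slightly different ways; here we assume the 99th-percentile frame time (nearest-rank method) converted to fps, while other tools average the slowest 1% of frames instead. The function names are hypothetical.

```python
import math

def average_fps(frame_times_ms):
    """Average frame rate: total frames divided by total elapsed time."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds

def one_percent_low_fps(frame_times_ms):
    """'1% low' taken here as the 99th-percentile frame time (nearest rank), in fps."""
    ordered = sorted(frame_times_ms)
    rank = math.ceil(0.99 * len(ordered))  # 1-indexed nearest rank
    return 1000.0 / ordered[rank - 1]

# Hypothetical capture: 97 smooth 8 ms frames plus three 60 ms hitches
# (frame order doesn't affect either metric).
stuttery = [8.0] * 97 + [60.0] * 3
# Hypothetical capture: a locked 30 fps, i.e. about 33.3 ms per frame.
steady = [1000.0 / 30] * 100

for name, capture in (("stuttery", stuttery), ("steady", steady)):
    print(f"{name}: avg {average_fps(capture):.1f} fps, "
          f"1% low {one_percent_low_fps(capture):.1f} fps")
```

The stuttery capture averages over 100 fps yet its 1% low sits below 20 fps, which is the kind of gap that makes a Crossfire setup feel worse than its average suggests.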

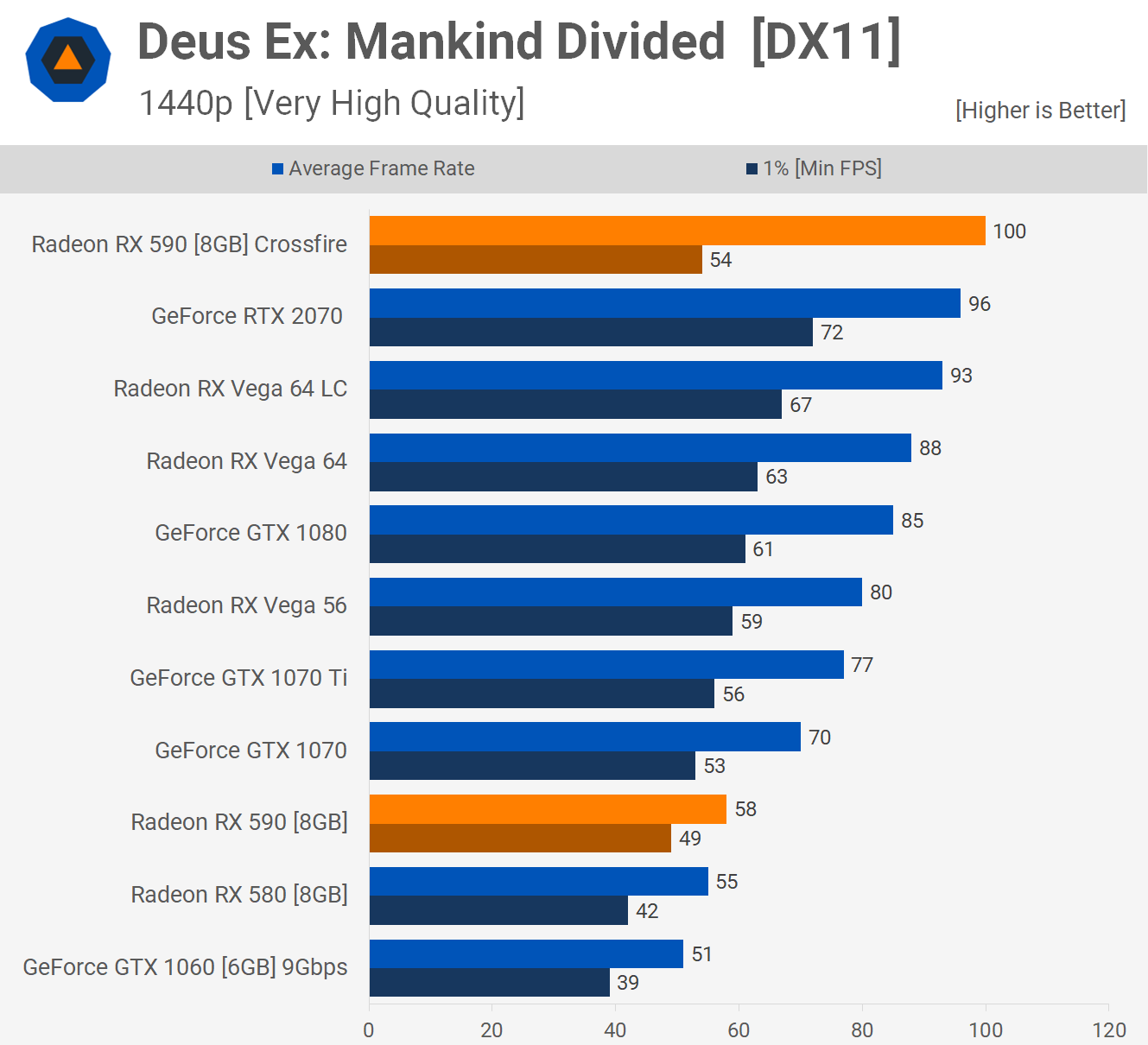

Frame time performance was also a little sketchy in Deus Ex: Mankind Divided, though nowhere near as bad as what we saw in Battlefront II. The issue was more noticeable at 1440p, and although a second 590 greatly improves the average frame rate, the overall experience didn't improve with it. Given the disparity between the average and 1% low results with Crossfire, we'd rather play this title on a single 590.

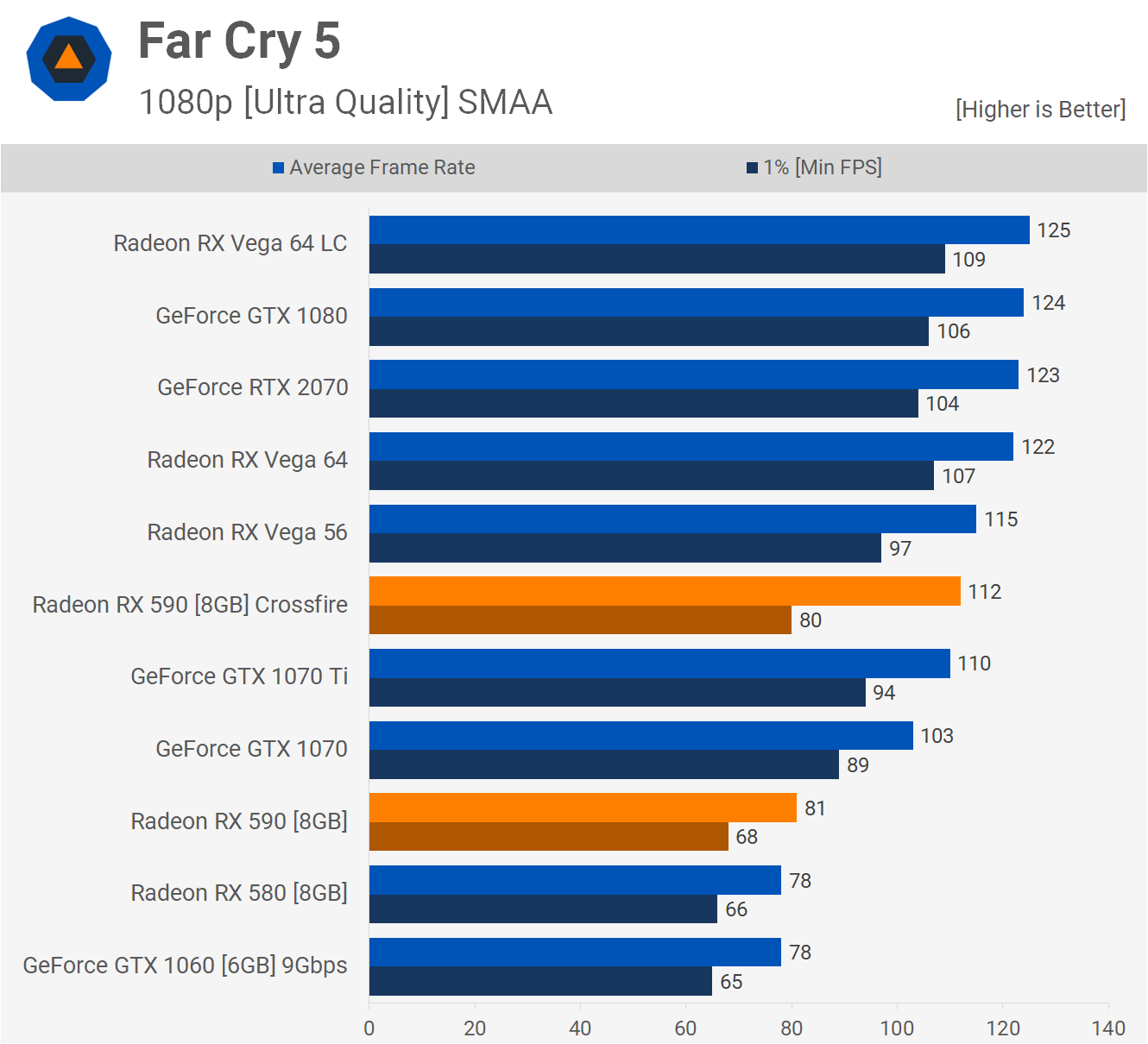

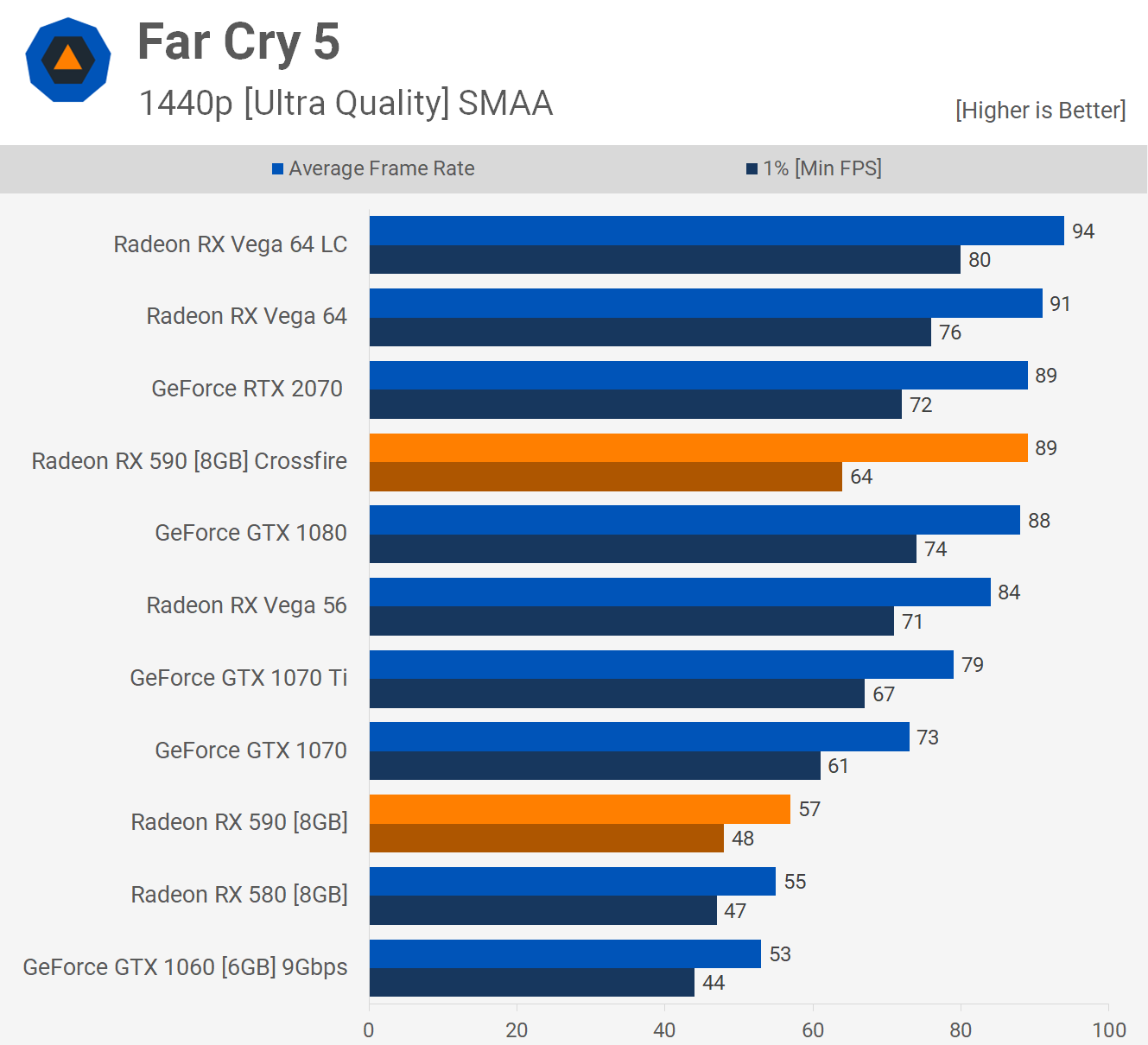

There was also some stuttering in Far Cry 5, present at both 1080p and 1440p.

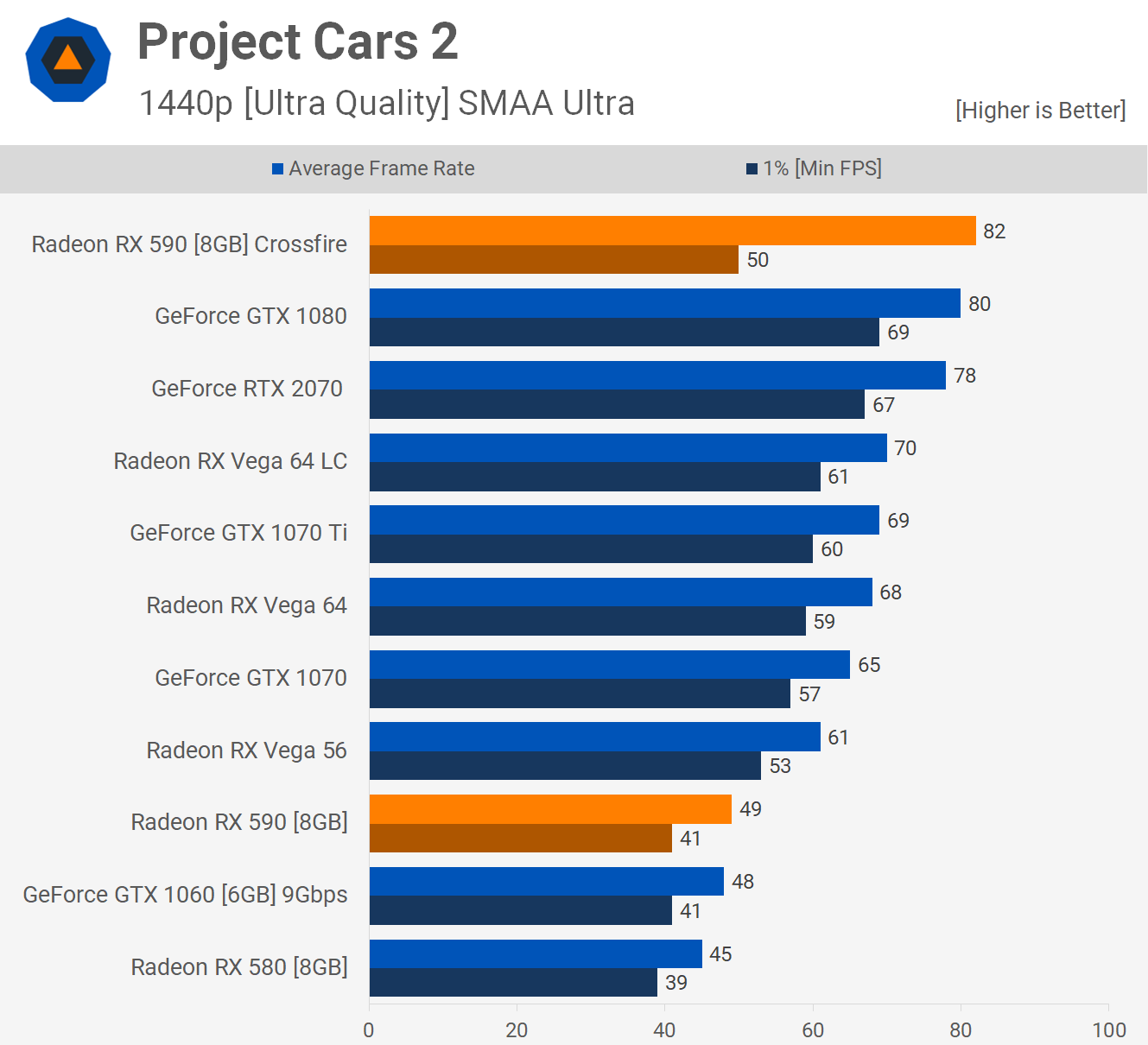

Project Cars 2 was another title where we saw performance gains, but frame time performance wasn't great, and was much worse than that of higher-end single-GPU graphics cards.

Power Consumption

We measured power consumption in Crysis 3 for every configuration except the Crossfire setup. Crossfire doesn't work in Crysis 3, so for the dual-card setup we used F1 2018, one of the better games for scaling. Typically you're looking at a total system power increase of around 60% when adding a second RX 590, and that leads to a pretty brutal consumption figure.

Here the Crossfire 590s pushed total system draw to 576 watts, and I observed just over 600 watts in Strange Brigade. That's quite a bit more than even a Vega 64 Liquid and almost twice the draw of a system with a single RTX 2070, so this is pretty horrible stuff when it comes to power consumption.

Putting It All Together

Compared to a single RX 590, we saw a 37% boost to average frame rates at 1440p, but (and this is a big but) that figure alone is misleading. Frame time performance in Star Wars Battlefront II and Deus Ex: Mankind Divided was so bad that I preferred to play with a single card. Stuttering was also an issue in Far Cry 5, Project Cars 2 and The Witcher 3.

Normally I also test with DiRT 4 but that title suffered serious graphical glitches with Crossfire enabled so I had to drop it from the batch of games tested.

So are you better off buying a faster single GPU graphics card or two cheaper graphics cards?

If you haven't worked that one out yet, here is your answer: the RTX 2070 costs $500, while two RX 590s cost a little more at $280 each, or $560 for the pair. Even if you compared the RTX GPU to a pair of $200 RX 580s, the outcome would be much the same. Get the more expensive, higher-end graphics card.
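To put the pricing in value terms, here is a rough back-of-the-envelope sketch. It treats one $280 RX 590 as the baseline and credits the pair with the 37% average gain we measured at 1440p; it deliberately ignores the frame time problems, which would only make the pair look worse. The helper name is hypothetical.

```python
def perf_per_dollar(relative_perf, price_usd):
    """Relative performance delivered per US dollar spent."""
    return relative_perf / price_usd

single = perf_per_dollar(1.00, 280)     # one RX 590 as the baseline
pair = perf_per_dollar(1.37, 2 * 280)   # +37% average at 1440p, for twice the money

print(f"Pair cost: ${2 * 280} vs. $500 for an RTX 2070")
print(f"Crossfire value relative to a single card: {pair / single:.1%}")
```

By this measure the second card returns well under 70% of the value of the first, before accounting for stutter, heat or a bigger power supply.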

When everything went right for the multi-GPU plan, the RX 590s killed it, beating the RTX 2070 by a whopping 25% margin. But out of the 20 games tested, we saw that kind of margin only once. The next best results were a 14% win in F1 2018, 9% in Prey and 5% in Project Cars 2, though frame time performance in Project Cars 2 was poor, as it was in Deus Ex and The Witcher 3.

Closing Remarks

Three years later, we find that multi-GPU technology once again seems like a good idea on paper but is a bit of a fail in practice. In our opinion SLI/Crossfire only makes sense for those with money to burn. Right now, for example, RTX 2080 Ti SLI is about the only multi-GPU configuration that makes sense. If you're at the end of the road and there is nothing faster, yet you want more and can spend the big bucks, you can get a second RTX 2080 Ti.

But it doesn't make sense to run two RTX 2080s in SLI. Just get a single RTX 2080 Ti and you'll receive smoother performance in the vast majority of titles.

As for the RX 590s in Crossfire, we'd much rather have a single Vega 64. It's extremely rare for two 590s to deliver higher frame rates than a single Vega 64 while also offering stutter-free gaming.

If you're only ever going to play a game like F1 2018 that supports Crossfire really well, then getting two RX 570s for $300 will be a hard combo to beat. But who buys a graphics card to only ever play one or two games?

Other drawbacks that are part of this conversation include heat and power consumption. Those two RX 590s dumped so much heat into the Corsair Crystal 570X case that you could justify spending more money on case fans, and even then you'll still run hotter due to the way the cards are stacked. You'll also pay more for the power supply. The RTX 2070 works without issue on a 500W unit, and 600W would be more than enough. The Crossfire 590s, though, call for an 800W unit, with 750W the bare minimum.
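One way to sanity-check those power supply figures is a simple headroom rule of thumb. This is a minimal sketch under stated assumptions, not how we arrived at our recommendation: it assumes the measured numbers are wall (AC) draw, that the PSU runs at roughly 90% efficiency (so the actual DC load is lower than the wall figure), that you want the load to stay under about 70% of the unit's rating, and it picks from a hypothetical list of common retail sizes. `recommended_psu_w` is an illustrative name.

```python
import math

# Hypothetical list of common retail PSU ratings, in watts.
STANDARD_SIZES_W = [450, 500, 550, 600, 650, 700, 750, 800, 850, 1000]

def recommended_psu_w(wall_draw_w, efficiency=0.90, target_load=0.70):
    """Estimate a PSU rating from measured wall (AC) system draw.

    efficiency:  assumed PSU efficiency; wall draw includes the PSU's own losses.
    target_load: fraction of the rating we'd like the DC load to stay under.
    """
    dc_load_w = wall_draw_w * efficiency
    needed_w = dc_load_w / target_load
    for size in STANDARD_SIZES_W:
        if size >= needed_w:
            return size
    # Beyond the list, round up to the next 50 W step.
    return math.ceil(needed_w / 50) * 50

print(recommended_psu_w(576))  # F1 2018 system draw from this review
print(recommended_psu_w(600))  # roughly the Strange Brigade peak
```

Under these assumptions the two Crossfire figures land on 750W and 800W, in line with the minimum and recommended sizes above; a different efficiency or headroom target would shift the answer.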

Ultimately, it's the poor software support that kills these multi-GPU setups and it's why we feel no one should burden themselves with SLI or Crossfire today.
