Following last week's leaked list of codenames for AMD's Radeon HD 6000-series graphics chips, a user on the Chinese-language forum PCInLife has shared what he claims are benchmark results for one of these still-unannounced products. The post includes a 3DMark Vantage and a GPU-Z screenshot, with the former showing an overall score of 11,963. That's above what the Nvidia GeForce GTX 480 can produce and not far from the dual-GPU Radeon HD 5970, which suggests AMD has managed a major speed improvement on the same 40nm manufacturing process it currently uses.

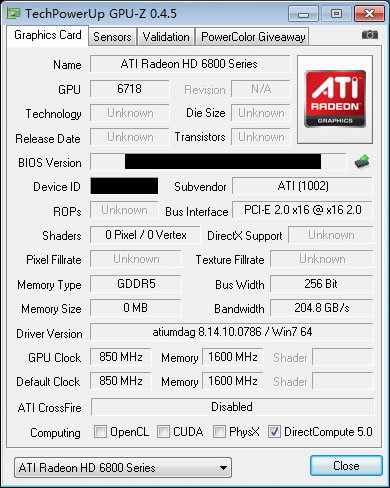

Details revealed by GPU-Z include the GPU code number '6718', which corresponds to the Cayman XT core that will be used in cards designed to replace the Radeon HD 5800 series. The GPU runs at the same 850MHz clock speed as the Radeon HD 5870, so this is likely the Radeon HD 6870, while its memory runs 400MHz higher at 1,600MHz.

Two additional screenshots show the Radeon HD 6800 card hitting 43.55 fps in Crysis at 1920x1200 with 'Very high' settings and 4X antialiasing, as well as a Unigine Heaven score of 922 with 'extreme' tessellation. According to VR-Zone, the GTX 480 typically scores 900+ in Unigine Heaven while the Radeon HD 5870 sits around the 500 mark, so it looks like AMD may have overcome one of the biggest weaknesses of the HD 5000 series: tessellation performance. Unfortunately, we won't know for sure until the cards debut sometime before the end of the year, so take these numbers with a pinch of salt.

https://www.techspot.com/news/40111-alleged-radeon-hd-6800-benchmarks-leaked.html