Machine learning is an integral part of Google's strategy, powering many of the company's top applications. It's so important, in fact, that the search giant has spent years working behind closed doors on its own custom hardware to run it.

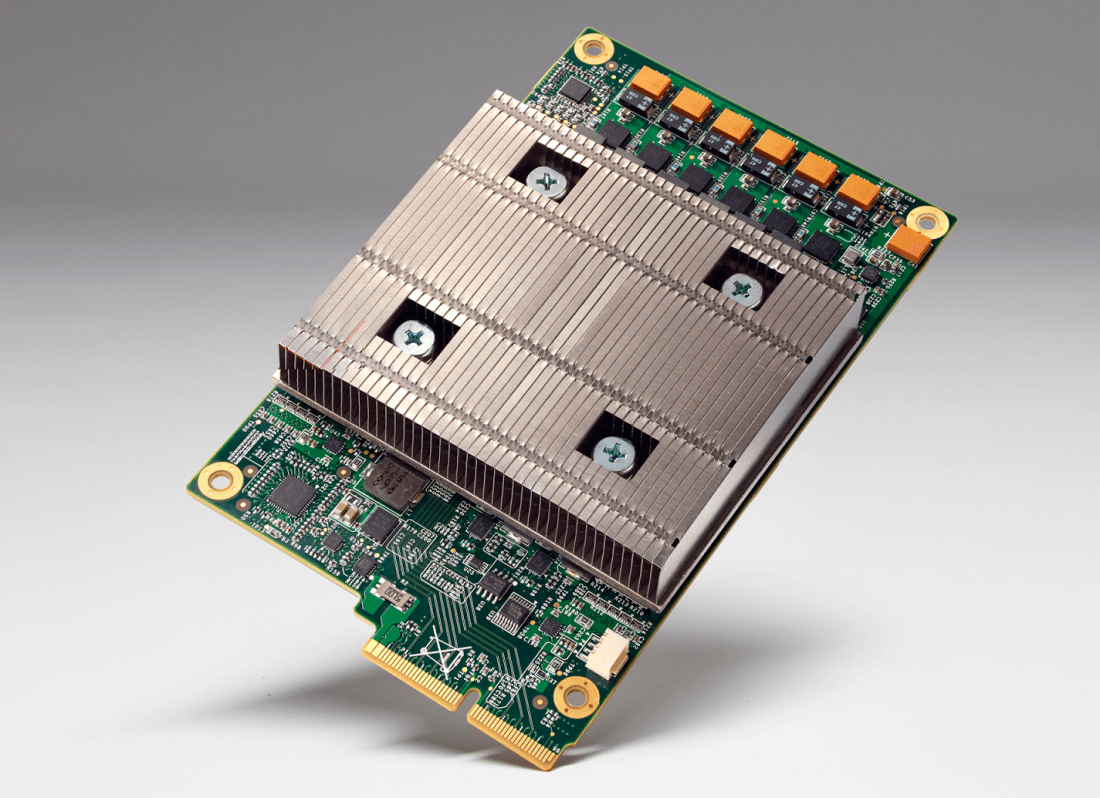

The result of that hard work is something Google calls a Tensor Processing Unit (TPU), a custom application-specific integrated circuit (ASIC) that works with TensorFlow, Google's second-generation machine learning system.

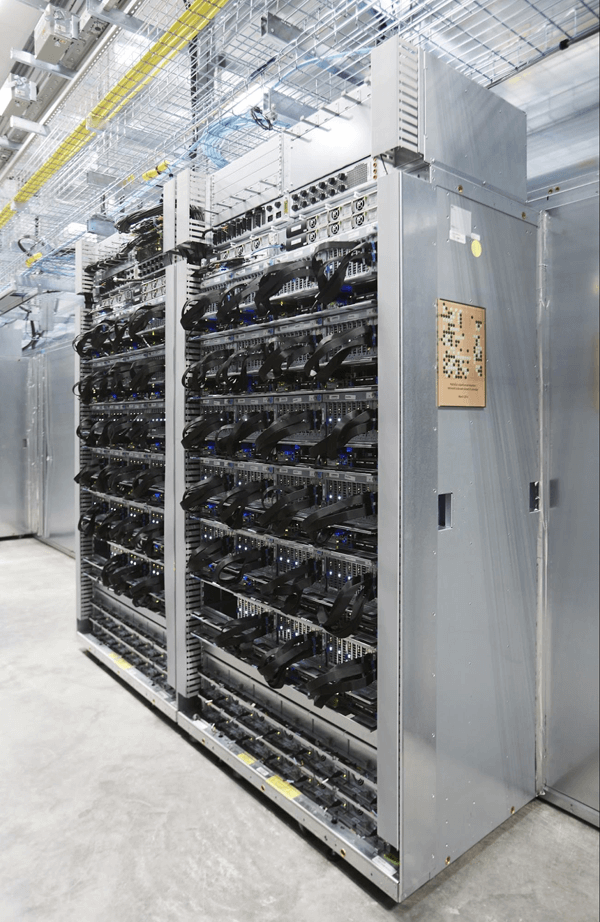

Norm Jouppi, a distinguished hardware engineer at Google, said the company has been running TPUs inside its data centers for more than a year and has found them to deliver an order of magnitude better-optimized performance per watt when handling machine learning. That gain, he noted, is roughly equivalent to fast-forwarding technology about seven years into the future (or three generations of Moore's Law).

Jouppi said the TPU is an example of how quickly Google can turn research into practice. From the first tested silicon, he said, the team had the chips running applications at speed in the company's data centers within 22 days.

As companies like Apple can attest, good things happen when hardware and software are developed under one roof.

Jouppi said Google's goal is to lead the industry in machine learning and to make its innovations available to customers. Machine learning is transforming how developers build intelligent applications, he concluded, and the company is excited to see those possibilities come to life.