Why it matters: As Intel rolls out its freshman line of dedicated graphics cards, the first benchmarks are emerging. The company's latest official test tries to make the case for the A750 against Nvidia's midrange GPU, but many questions remain as both companies and AMD prepare to launch new cards in the second half of this year.

This week, Intel published a brief benchmark of its upcoming Arc A750 graphics card, claiming it compares favorably with Nvidia's RTX 3060 in a few popular games. Arc benchmarks so far, both official and unofficial, have been mixed.

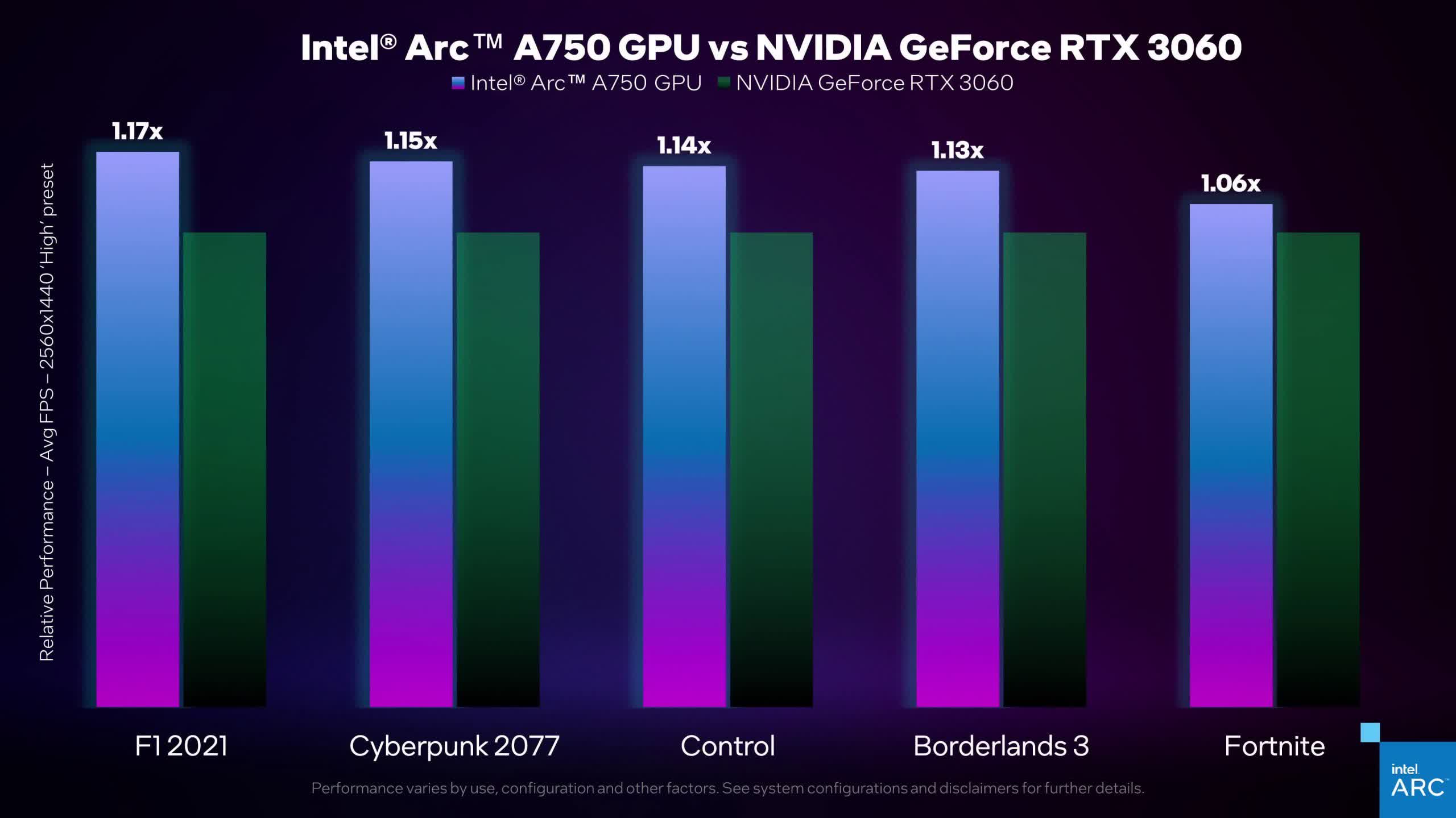

The primary title Intel tested in its video (above) is Cyberpunk 2077, but it also showed comparisons for Control, F1 2021, Borderlands 3, and Fortnite. All the comparisons show the A750 performing about 15 percent better than the 3060. Intel's card manages around 60 frames per second in Borderlands, Control, and Cyberpunk at 1440p using the high graphics preset. F1 and Fortnite run well north of 120fps.

However, the comparison doesn't mention image reconstruction, another area where Intel wants to compete with Nvidia. All the tested games except Borderlands 3 support Nvidia's DLSS, which would likely give the 3060 much higher framerates. Currently, none of them are on the list of games slated to support Intel's XeSS. Only Cyberpunk 2077 and F1 2021 support AMD FSR.

The other Arc GPUs are also set to compete with Nvidia's entry-level and mainstream cards, but some tests have been disappointing. Official A730M and A770M benchmarks put them close to the RTX 3050 Ti and 3060. The A770 is supposed to match the 3070 Ti, but tests in April showed markedly worse results, possibly owing to incomplete Intel drivers. Last month, an early review of the budget A380 was even more dismal.

While Intel's graphics cards are still available only in China and South Korea, the company maintains that it plans to launch them in other markets later this summer. Nvidia and AMD will each launch a new generation of GPUs later this year, which will likely widen the performance gap over Intel's products.

https://www.techspot.com/news/95322-intel-arc-a750-outperforms-rtx-3060-according-intel.html