One of the most important features of smartphones is the camera. Whether it's for photographing people, landscapes, flowers or food, buyers nowadays demand a good-quality camera on the back of their handset. As smartphone cameras get better each year, for many people they've replaced standalone point-and-shoot cameras as the go-to device for everyday photography, as they're easier to access and more compact to carry with you. The front-facing camera is increasingly important too with the trend of 'selfies' across social media and services like Snapchat.

The 16-megapixel camera on the back of the Samsung Galaxy S5

But just what goes into making a smartphone camera? What hardware do companies use? What do pixel sizes and f-stops really mean? In this article I'll be exploring smartphone camera hardware, key terms associated with photography, and interesting comparisons along the way.

The Basics of Camera Hardware

Let's start with the base components that form a camera. For smartphone cameras, like pretty much all cameras available on the market, there are two main components that form the camera module: the sensor and the lens. Without both of these crucial parts, you'll have a hard time taking a photo, which is why they're typically packaged together into a single unit that connects to the smartphone's main board through a ribbon cable.

This is the camera module from the Galaxy S5; a Samsung S5K2P2XX 1/2.6" sensor with an f/2.2 lens. Photo: iFixit.

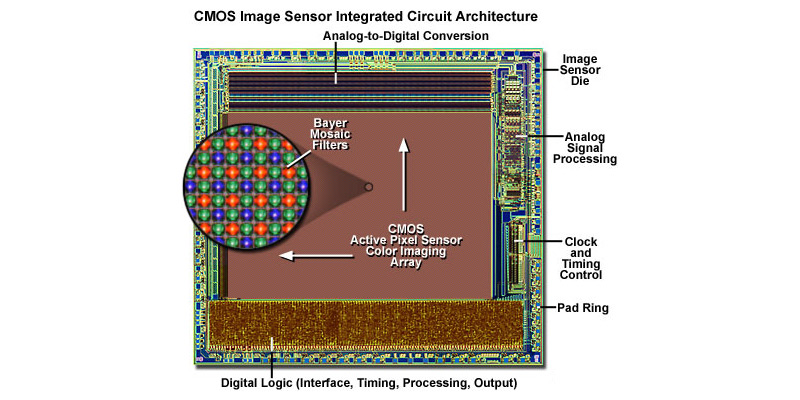

The sensor is the part of the camera that actually 'captures' the image. It's a complex integrated circuit that typically includes photodetectors - the key component that captures light - plus amplifiers, transistors, and often some form of processing hardware and power management. When the smartphone's camera software requests an image, the sensor provides all the necessary data.

Smartphone camera sensors almost universally use CMOS (complementary metal-oxide-semiconductor) technology, which is a form of the active pixel sensor described above. The other main sensor technology, CCD (charge-coupled device), consumes too much power and is too expensive for use in smartphones, even though CCD sensors have historically been of a higher quality.

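A relatively simple explanation of how a CMOS sensor works is that the photodetectors corresponding to each pixel in the image capture analog information about the photons (light packets) hitting them. This information gets amplified and converted into a digital signal relating to the brightness of the light at that pixel. On their own the photodetectors can't tell colors apart, so to get color data an RGBG Bayer filter (a mosaic of red, green and blue filters, with twice as many green as red or blue) is layered over the array of photodetectors. Each photodetector then records just one color, and a software interpolation algorithm, known as demosaicing, fills in the other two colors at every pixel to produce the final, full-color image.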
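
To make the Bayer filter idea a little more concrete, here is a minimal Python sketch. It is not how any particular phone's image processor works: the filter layout (red in the top-left corner of each 2x2 tile), the function names, and the simple neighbor-averaging interpolation are all assumptions chosen for illustration. Real demosaicing algorithms are considerably more sophisticated.

```python
def bayer_channel(row, col):
    """Which color the (assumed) filter passes here: 0 = red, 1 = green, 2 = blue."""
    if row % 2 == 0:
        return 0 if col % 2 == 0 else 1   # even rows: R G R G ...
    return 1 if col % 2 == 0 else 2       # odd rows:  G B G B ...


def mosaic(rgb_image):
    """Simulate the sensor: each photodetector keeps only one color sample."""
    return [[pixel[bayer_channel(r, c)] for c, pixel in enumerate(row)]
            for r, row in enumerate(rgb_image)]


def demosaic(raw):
    """Estimate full RGB per pixel by averaging same-color samples in the
    surrounding 3x3 window. Needs an image at least 2 pixels wide and tall."""
    height, width = len(raw), len(raw[0])
    result = []
    for r in range(height):
        out_row = []
        for c in range(width):
            sums, counts = [0.0, 0.0, 0.0], [0, 0, 0]
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    rr, cc = r + dr, c + dc
                    if 0 <= rr < height and 0 <= cc < width:
                        channel = bayer_channel(rr, cc)
                        sums[channel] += raw[rr][cc]
                        counts[channel] += 1
            out_row.append(tuple(sums[ch] / counts[ch] for ch in range(3)))
        result.append(out_row)
    return result


# A flat, single-color test scene: every pixel is (R=100, G=150, B=200).
scene = [[(100, 150, 200)] * 4 for _ in range(4)]
raw = mosaic(scene)          # one number per photodetector
restored = demosaic(raw)     # three numbers per pixel again
```

For a flat scene like this one the interpolation recovers the original color exactly. On real photos with fine detail, the missing samples have to be guessed, which is why demosaicing quality affects sharpness and color artifacts.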

The number of megapixels a camera has refers directly to the number of photodetectors in the sensor's array. An eight-megapixel sensor, for example, has roughly eight million photodetectors in the array.
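
The arithmetic is simply width times height. The short Python sketch below shows it; the image dimensions are illustrative values of the kind commonly quoted for 8 and 16-megapixel cameras, not figures taken from a specific spec sheet.

```python
def megapixels(width_px, height_px):
    """Pixel count of the array, expressed in millions."""
    return width_px * height_px / 1_000_000


# Illustrative output sizes (assumptions, not official specifications).
for width, height in ((3264, 2448), (5312, 2988)):
    print(f"{width} x {height} = {megapixels(width, height):.2f} MP")
```

Marketing figures round these values, which is why an "8-megapixel" sensor rarely has exactly eight million photodetectors.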

The lens focuses light onto the sensor so the image looks crisp and clear. While it's possible to use a camera without a lens, the resulting picture will be just a blur of colors as photons from all angles hit the sensor. Basically, you need a lens so the light from the large scene in front of the camera can be reduced and focused down to fit the small size of the sensor.

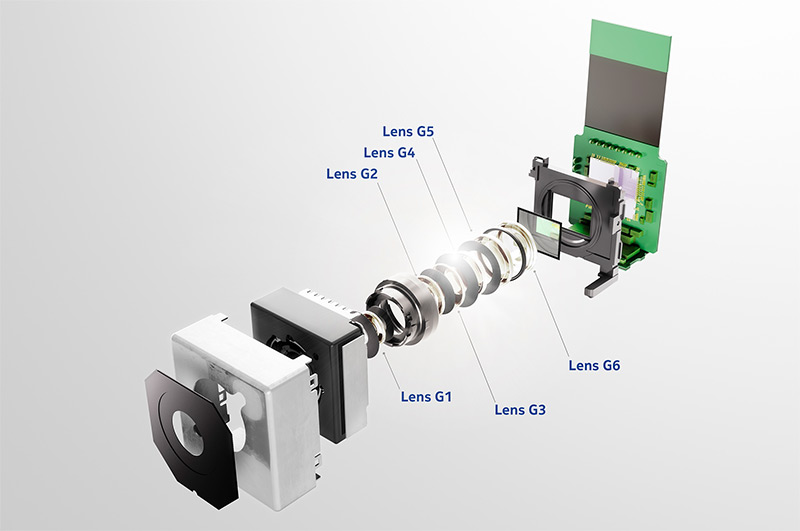

A breakout of the construction of Nokia's PureView camera

The lens is a collection of multiple plastic or glass elements, with glass usually providing a higher quality, sharper result. Each element has a specific function in focusing the light onto the sensor, whether that's generally shaping the light to fit the size of the sensor, correcting issues, or providing the final focus point.

In a camera with autofocus, the final lens element (or collection of a few elements) will move closer to or further away from the sensor, thanks to the assistance of a motor. This allows different areas of the image to appear in focus, and is one of the key aspects of a practical camera system.
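
To get a feel for how little the lens actually has to move, the idealized thin-lens equation, 1/f = 1/d_o + 1/d_i, can be used as a rough model. This is only a sketch: a real smartphone lens is a stack of several elements rather than one thin lens, and the 4.0mm focal length below is a hypothetical value, not a measured one.

```python
def image_distance_mm(focal_length_mm, subject_distance_mm):
    """Thin-lens equation solved for d_i, the lens-to-sensor distance
    needed to bring a subject at d_o into focus."""
    if subject_distance_mm <= focal_length_mm:
        raise ValueError("subject must be farther away than one focal length")
    return (focal_length_mm * subject_distance_mm
            / (subject_distance_mm - focal_length_mm))


def focus_travel_mm(focal_length_mm, subject_distance_mm):
    """How far the lens sits from its infinity-focus position (d_i minus f)."""
    return image_distance_mm(focal_length_mm, subject_distance_mm) - focal_length_mm


FOCAL_LENGTH_MM = 4.0  # hypothetical smartphone lens

for distance_mm in (100.0, 500.0, 2000.0):
    travel = focus_travel_mm(FOCAL_LENGTH_MM, distance_mm)
    print(f"subject at {distance_mm / 1000:.1f} m: lens moves {travel:.3f} mm")
```

Under these assumptions the lens needs to travel only a fraction of a millimeter to cover everything from close-ups to distant subjects, which is why a tiny motor is enough.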

The lens elements work in conjunction with an aperture, which is an opening of a certain size, placed in front of the sensor, that the focused light travels through. The size of the opening determines how much light passes to the sensor, and how sharp and in focus the image will be; something I'll talk about later on in this article. Unlike professional cameras and some point-and-shoots, the aperture on a smartphone camera is always fixed, meaning it's impossible to adjust the light gathering properties of the lens to alter the way the image looks.
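
As a rough preview of the numbers involved, an f-number such as f/2.2 is the focal length divided by the diameter of the aperture opening, and the light gathered scales with the area of that opening. The Python sketch below works through this; the 4.0mm focal length is a hypothetical value used purely for illustration.

```python
def aperture_diameter_mm(focal_length_mm, f_number):
    """Diameter of the aperture opening: D = f / N."""
    return focal_length_mm / f_number


def relative_light(f_number_a, f_number_b):
    """How many times more light lens A passes than lens B.
    Light scales with aperture area, i.e. with 1 / N^2."""
    return (f_number_b / f_number_a) ** 2


FOCAL_LENGTH_MM = 4.0  # hypothetical

print(f"f/2.2 opening: {aperture_diameter_mm(FOCAL_LENGTH_MM, 2.2):.2f} mm wide")
print(f"f/2.0 vs f/2.2: {relative_light(2.0, 2.2):.2f}x the light")
```

A smaller f-number therefore means a wider opening and more light reaching the sensor.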

This photo was captured using a Samsung Galaxy S5 at ISO 40, 1/580s, f/2.2

The distance between the lens (strictly speaking, its optical center) and the sensor, when the lens is focused on a far-away subject, is what's known as the focal length. This distance determines both the effective magnification of the camera system and its field of view. A short focal length equates to a wide-angle lens with little magnification, and vice versa.

How far the lens needs to be from the sensor changes depending on the size of the sensor itself, and what function you want the lens to perform. Smartphone cameras almost universally use wide-angle lenses and small sensors, meaning focal lengths are below 5mm.
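
The relationship between focal length, sensor size and field of view can be sketched with the standard angle-of-view formula, FOV = 2 x atan(d / 2f), where d is the sensor dimension being measured. In the Python example below, both the 6.0mm sensor diagonal and the 4.0mm focal length are hypothetical values picked to be in the right ballpark for a smartphone, not specifications of any real device.

```python
import math


def field_of_view_deg(sensor_dimension_mm, focal_length_mm):
    """Angle of view across the given sensor dimension, for a lens
    focused at infinity: 2 * atan(d / (2 * f))."""
    return math.degrees(2 * math.atan(sensor_dimension_mm / (2 * focal_length_mm)))


SENSOR_DIAGONAL_MM = 6.0  # hypothetical small smartphone sensor

for focal_length_mm in (3.0, 4.0, 5.0):
    fov = field_of_view_deg(SENSOR_DIAGONAL_MM, focal_length_mm)
    print(f"f = {focal_length_mm} mm: about {fov:.1f} degrees diagonal field of view")
```

The trend matches the text: with the sensor size held constant, a shorter focal length gives a wider view, and a longer one narrows it.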

So that's a basic look at what a smartphone camera consists of. As you may have noticed, it's very similar to a human eye, where the sensor is the retina, and the lens is the... well... lens. Now it's time to take a deeper look.