For some, Apple's WWDC keynote event went like they hoped, with the company introducing some exciting new products or technologies that hit all the sweet spots in today's dramatically reshaped tech environment. Augmented reality (AR), artificial intelligence, smart speakers, digital assistants, convolutional neural networks, machine learning and computer vision were all mentioned in some way, shape or form during the address.

For others, the event went like they expected, with Apple delivering on virtually all the big rumors it was "supposed" to fulfill: updated Macs and iPads, a platform for building AR apps on iOS devices, and a Siri-driven smart speaker.

For me, the event was a satisfying affirmation that the company has not fallen behind its many competitors and is working on products and platforms that take advantage of the most interesting and potentially exciting new technologies across hardware, software and services that we've seen for some time. In addition, it laid the groundwork for ongoing advancements in overall contextual intelligence, which will likely be a critical differentiator among digital assistants for some time to come.

Part of the reason for my viewpoint is that there were several interesting, though perhaps a bit subtle, surprises sprinkled throughout the event. Some of the biggest were around Siri, which a few people pointed out didn't really get much direct attention and focus in the presentation.

However, Apple described several enhancements to Siri that are intended to make it more aware of where you are, what you're doing, and what you care about. Most importantly, a lot of this AI or machine learning-based work is going to happen directly on iOS devices. Just last year, Apple caught grief for talking about differential privacy and the ability to do machine learning on an iPhone, because the general thinking then was that you could only do that kind of work by collecting massive amounts of data and processing it in large data centers.

Now, a year later, the thinking around device-based AI has done a 180, and there's increasing talk about being able to do both inferencing and learning (two key aspects of machine learning) on client devices. Apple didn't mention differential privacy this year, but it did highlight that by doing a lot of this AI and machine learning work on the device, it can keep people's information local rather than sending it up to large cloud-based data centers. Not everyone will grasp this subtlety, but for those who care a lot about privacy, it's a big advantage for Apple.


On a completely different front, some of Apple's hardware updates, particularly around the Mac, highlight how serious the company has once again become about computing. Not only did it successfully catch up to many of its PC brethren, it was demoing new kinds of computing architectures (such as Thunderbolt-attached external graphics for notebooks) that very few PC companies have explored. In addition, bringing 10-bit color displays to mainstream iMacs is a subtle but critical distinction for driving higher-quality computing experiences.

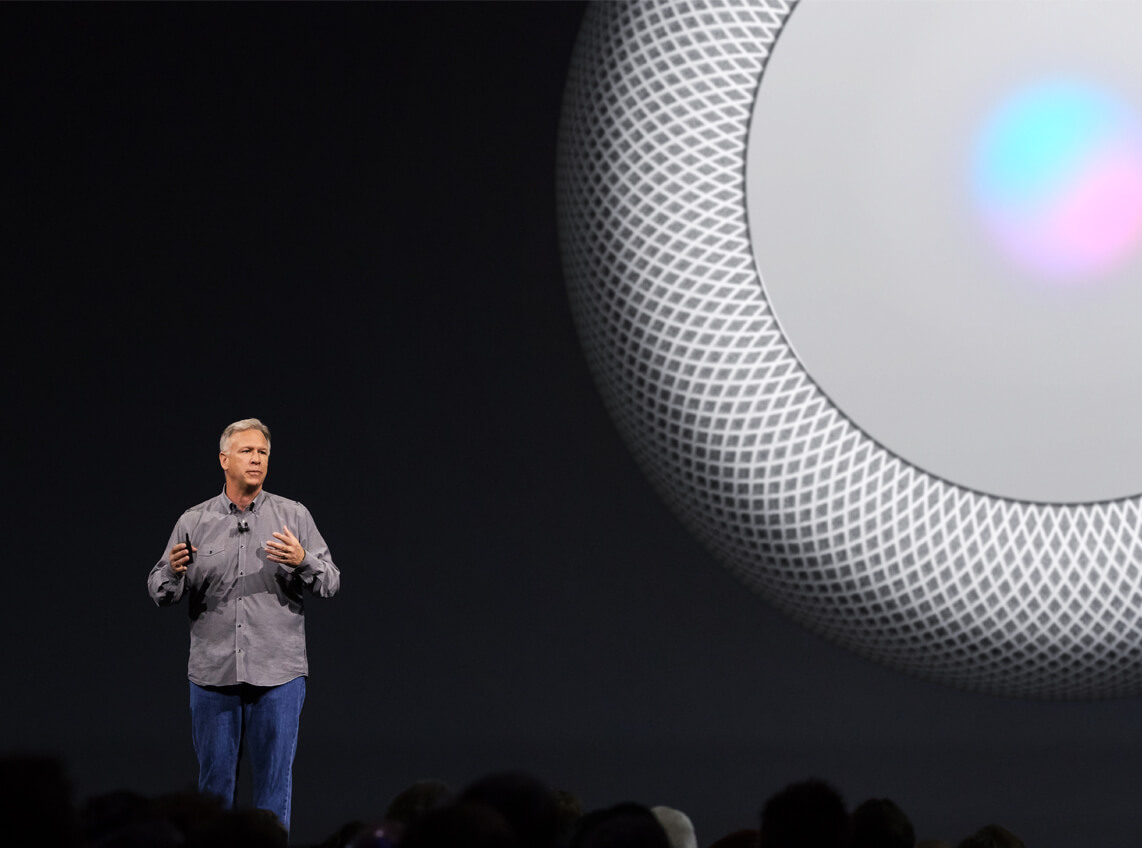

On the less positive front, there are some key questions about the details of the HomePod's audio processing. To be fair, I did not get to hear an audio demo, but conceptually, the idea of heavily processing audio on a mono speaker, when that audio was already significantly processed during its creation to sound a certain way on stereo speakers, strikes me as a bit challenging. Yes, some songs may sound pleasing, but for true audiophiles who want to hear what the artist and producer intended, Apple's positioning of the HomePod as a super high-quality speaker is going to be a very tough sell.

Of course, the real question with HomePod will be how good a Siri experience it can deliver. Though it's several months from shipping, I was a bit surprised there weren't more demos of interactions with Siri on the HomePod. If that doesn't work well, the extra audio enhancements won't be enough to keep the product competitive in what is bound to be a rapidly evolving smart speaker market.

The real challenge for Apple and other major tech companies moving forward is that many of the enhancements and capabilities they introduce over the next several years are likely to be much subtler refinements of existing products or services. In fact, I've seen and heard some people say that's how they felt about this year's WWDC keynote. Things like making smart assistants smarter and digital speakers more accurate require a lot of difficult engineering work that few people can really appreciate. Similarly, while AI and machine learning sound like exotic, exciting technological breakthroughs, their real-world benefits are likely to be subtle but practical extensions to things like contextual intelligence, and that is a difficult message to deliver.

If Apple can successfully do so, that will be yet another surprise outcome of this year's WWDC.

Bob O'Donnell is the founder and chief analyst of TECHnalysis Research, LLC, a technology consulting and market research firm. You can follow him on Twitter @bobodtech. This article was originally published on Tech.pinions.