In context: The recent influx of announcements showcases a global trend of new offerings aimed at bringing generative AI capabilities to businesses. From IBM, Google, and Salesforce to Microsoft, Amazon, and Meta, it appears that virtually every major tech company is looking to capitalize on the excitement surrounding this transformative new technology.

It has become increasingly clear that most organizations are eager to embrace AI. Businesses are rapidly identifying the productivity gains, efficiencies, and other benefits that AI could provide. The problem is that many of these companies aren't entirely sure how to start leveraging generative AI. Experts with deep knowledge of how the technology works and how it can be implemented are scarce and, not surprisingly, very expensive.

Recognizing this disconnect, Dell Technologies and Nvidia have put together an offering called Project Helix, specifically designed to simplify the process of getting started with generative AI. Project Helix focuses on creating full-stack, on-premises generative AI solutions that allow companies to either build new or customize existing generative AI foundation models using their own data.

One problem that has emerged as businesses begin to use generative AI services is the risk of internal IP leakage. In fact, several companies, including Samsung and Apple, have implemented policies that prevent their employees from using tools like ChatGPT for work purposes because of this concern.

Part of the reason for this concern is that virtually all the early iterations of generative AI could run only in massive cloud-based data centers, many of which collected the data users entered as prompts. However, the incredibly rapid evolution of the foundation models that underpin generative AI applications has addressed a number of these concerns. Notably, there is now a wide range of open-source models available from marketplaces like Hugging Face. Many of these open-source models can run efficiently on more modest computing resources, such as those in an appropriately equipped on-premises data center. Additionally, some of the big tech companies have started to change their rules about where their models can be run and are creating smaller versions of their models optimized for on-site use.

Moreover, several companies, including Nvidia, have begun to offer models specifically designed for enterprise applications. Nvidia's move is interesting on multiple levels. The company is strongly associated with generative AI primarily because of its hardware: Nvidia's GPUs power the large majority of current generative AI applications and services in the cloud. At its most recent GTC conference in March, however, the company surprised many by unveiling an entire range of generative AI-related software, including industry-specific foundation models and enterprise-focused development tools, notably its NeMo large language model (LLM) frameworks and NeMo Guardrails for filtering out unwanted topics. Not surprisingly, these models were optimized to run on Nvidia hardware.

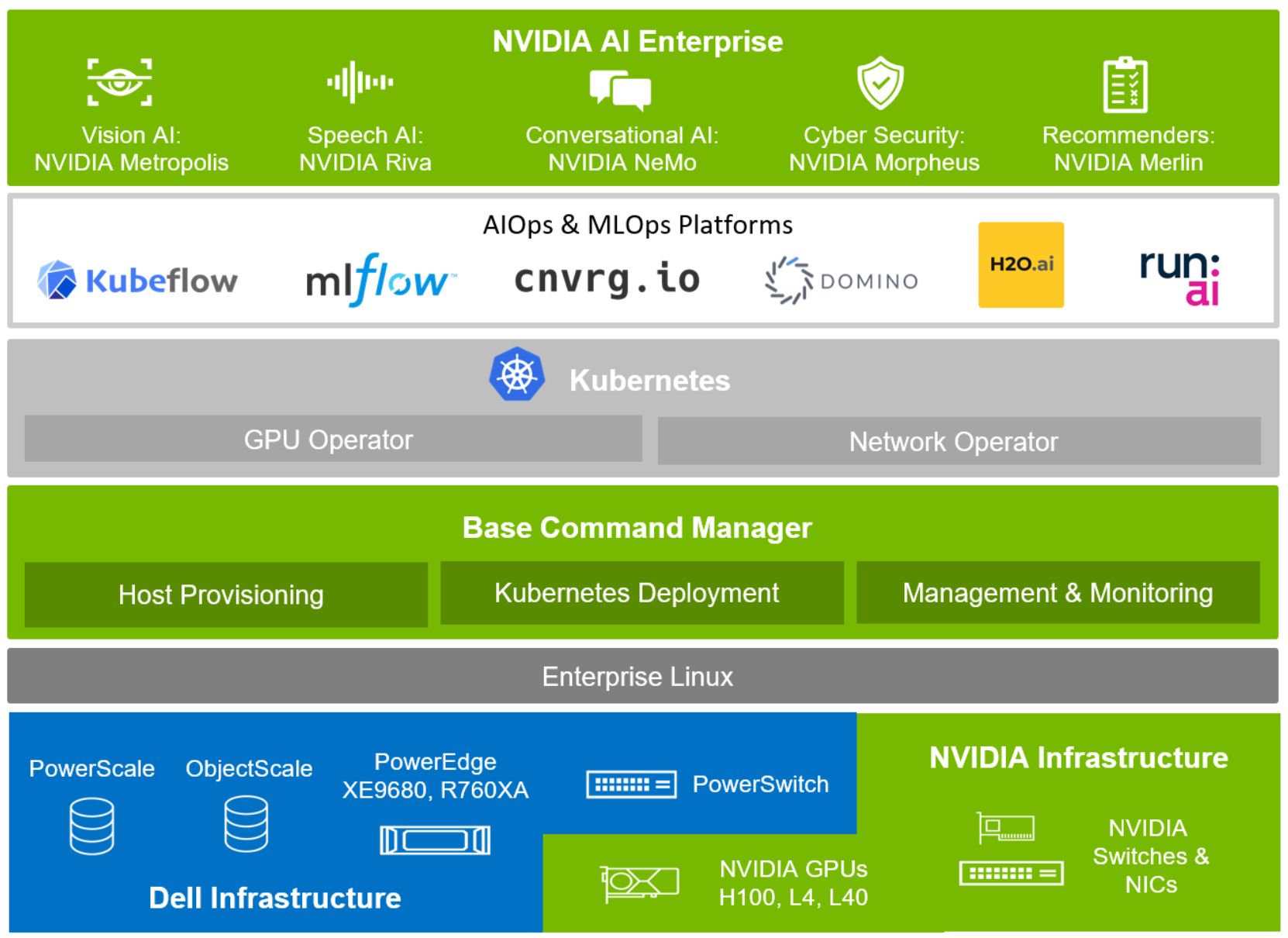

Project Helix is a collaborative effort by Dell and Nvidia built around a range of Dell PowerEdge server systems. These servers include Nvidia H100 GPUs and Nvidia's line of BlueField DPUs (Data Processing Units, used for the high-speed interconnects between servers that AI workloads require), and they come bundled with Nvidia AI Enterprise software.

Additionally, Dell provides several different storage options from its PowerScale and ECS Enterprise Object Storage lines, optimized for AI workloads. The result is a comprehensive solution that enables companies to begin building or customizing generative AI models. Potential customers can either use one of Nvidia's foundation model options or, if they prefer, select an open-source model from Hugging Face (or a solution from another tech provider) and start the process.

The bundled Nvidia software allows an organization to import its existing corpus of data (documents, customer service chats, social media posts, and much more) and then use it to either train a new model or customize an existing one. Once training is complete, the tools needed to run inference and build new applications on the newly trained model are included as well. Dell's bundle also provides a blueprint to help companies navigate the process of creating or customizing these models and building these tools, along with a range of technical support services.

Most importantly, because this work is done internally, Project Helix can help mitigate the IP leakage issues that concern many companies – even those that have started working with generative AI tools.

Another significant benefit of Project Helix is that it allows companies to apply generative AI in a more customized, company-specific way. While the general-purpose tools currently available can undoubtedly help with certain types of applications and environments, most companies recognize that the real competitive advantage of generative AI lies in customization. There's considerable interest in incorporating a company's own data into these tools, but also much confusion about how exactly to do that.

Putting together an "easy kit" for generative AI doesn't mean many organizations won't face challenges in leveraging their data and technology to create the solutions they need. It's crucial to remember that the concepts behind generative AI are still very new, and it's an extremely complex technology. Nevertheless, by bundling the necessary hardware and software that's been pretested to work together, along with information on how to navigate the process, Project Helix appears to be an attractive option for organizations that are eager – or feel competitively compelled – to dive into this exciting new realm.

Bob O'Donnell is the founder and chief analyst of TECHnalysis Research, LLC, a technology consulting firm that provides strategic consulting and market research services to the technology industry and professional financial community. You can follow him on Twitter @bobodtech.

https://www.techspot.com/news/98786-dell-nvidia-partner-generative-ai-businesses.html