Something to look forward to: Nvidia has announced that its 'GeForce RTX: Game On' event takes place on January 12, 2021, at 9 AM PST. The company hasn't revealed what will be on show, but you can expect new Ampere cards to be announced, with the RTX 3080 Ti and RTX 3060 the most likely candidates.

The broadcast lands during the online-only Consumer Electronics Show (CES), which runs from Monday, January 11, to Thursday, January 14. Jeff Fisher, senior vice president of Nvidia's GeForce business, will present the event. He was also on stage last year to unveil the RTX 2060 and Max-Q laptops.

It's rumored that the successor to that RTX 2060—the non-Ti version of the RTX 3060—will be revealed next month. All rumors point to two versions of the card: one with 12GB and one with 6GB. The latter is thought to have started life as the RTX 3050 Ti before being rebranded by Nvidia.

A potentially more exciting announcement could be the RTX 3080 Ti. Recent leaks claim that Nvidia had been planning to launch the card in January but decided to delay the release until February, partly due to Big Navi not being as threatening as it expected.

The RTX 3080 Ti is thought to feature 20GB of GDDR6X, double that of the vanilla RTX 3080. It could cost $999, putting it in direct competition with the Radeon RX 6900 XT, which packs 16GB of GDDR6. According to reports, Nvidia's card will also have a 320-bit memory bus, 760 GB/s of memory bandwidth, 9,984 CUDA cores (78 streaming multiprocessors), and a 320W TDP.
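For readers who want to sanity-check the rumored figures, here is a rough sketch of the arithmetic behind them. It relies on two assumptions that are not part of the leaks quoted above: a 19 Gbps per-pin data rate for the GDDR6X (the rate used on the existing RTX 3080) and 128 CUDA cores per streaming multiprocessor (the layout of Nvidia's consumer Ampere GPUs). The function names are purely illustrative.

```python
# Rough check of the rumored RTX 3080 Ti specs.
# Assumptions (not from the leaks): 19 Gbps per-pin GDDR6X data rate,
# 128 CUDA cores per streaming multiprocessor (SM).

def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width in bytes times per-pin data rate."""
    return bus_width_bits / 8 * data_rate_gbps


def cuda_cores(sm_count: int, cores_per_sm: int = 128) -> int:
    """Total CUDA cores for a given number of SMs."""
    return sm_count * cores_per_sm


bandwidth = memory_bandwidth_gbs(320, 19)  # 320-bit bus, assumed 19 Gbps
cores = cuda_cores(78)                     # 78 SMs, assumed 128 cores each

print(f"Memory bandwidth: {bandwidth:.0f} GB/s")
print(f"CUDA cores: {cores}")
```

Under those assumptions, the 320-bit bus works out to the reported 760 GB/s and 78 SMs to the reported 9,984 CUDA cores, so the leaked numbers are at least internally consistent.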

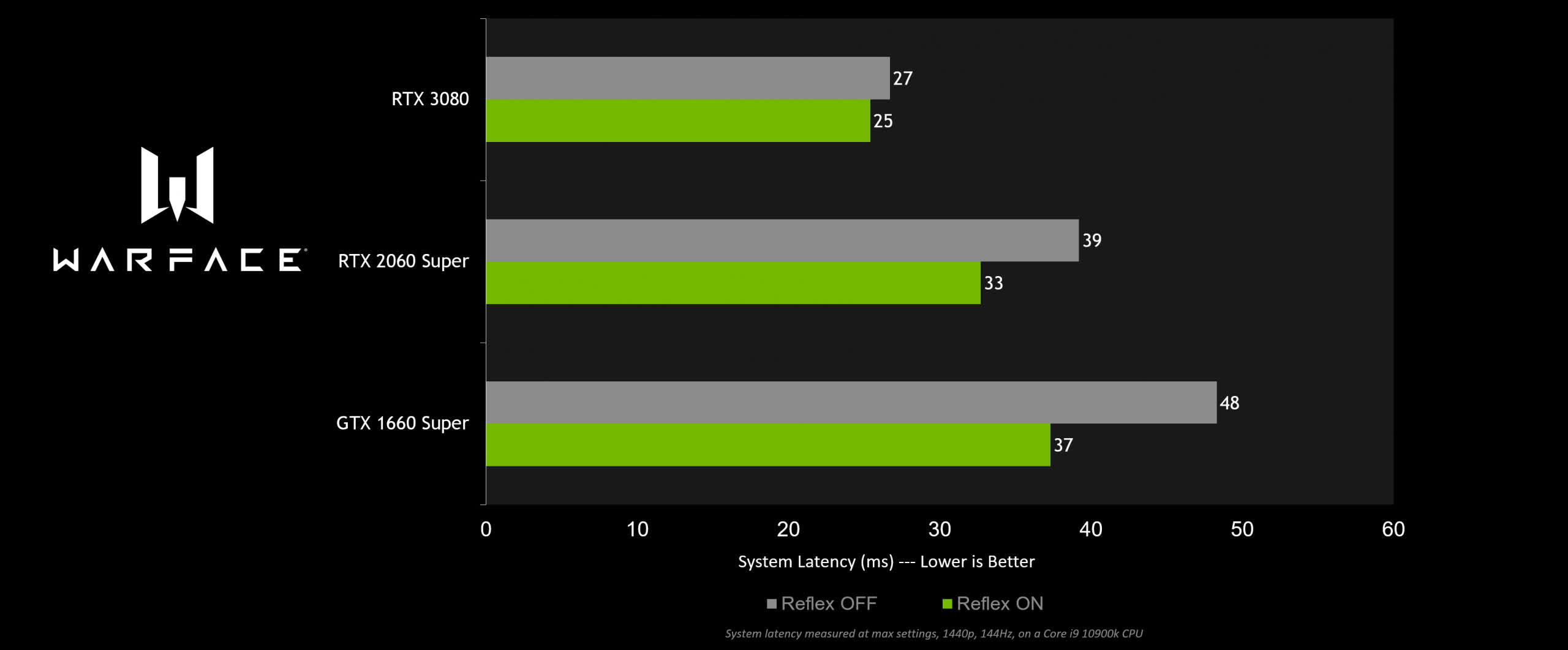

In addition to announcing the GeForce RTX: Game On broadcast, the company revealed that four more multiplayer games now support its latency-lowering Nvidia Reflex technology. CRSED: F.O.A.D. (formerly Cuisine Royale), Enlisted, Mordhau, and Warface are joining the likes of Fortnite, Valorant, and Call of Duty: Modern Warfare in offering the feature.

https://www.techspot.com/news/88010-nvidia-announces-january-geforce-event-could-reveal-rtx.html