It's time we get to explore something we've been eager to investigate since our day-one GeForce RTX 3080 review, and that's the weaker-than-expected resolution scaling of the new Ampere architecture.

Before we get into it, note that this article is not designed to change your opinion (or ours) about Ampere. The RTX 3080 is the best value high-end GPU on the market by a country mile... if you can get your hands on one. But back to Ampere and its interesting quirks: the aim here is to investigate and explain what's going on.

For those of you not up to speed, in our RTX 3080 review we found that gaming performance at 1440p was not as impressive relative to what was seen at 4K. We saw this, as did many other reviewers, some of whom simply suggested it was a CPU bottleneck: the RTX 3080 is powerful enough that even the latest and greatest CPUs can't keep up.

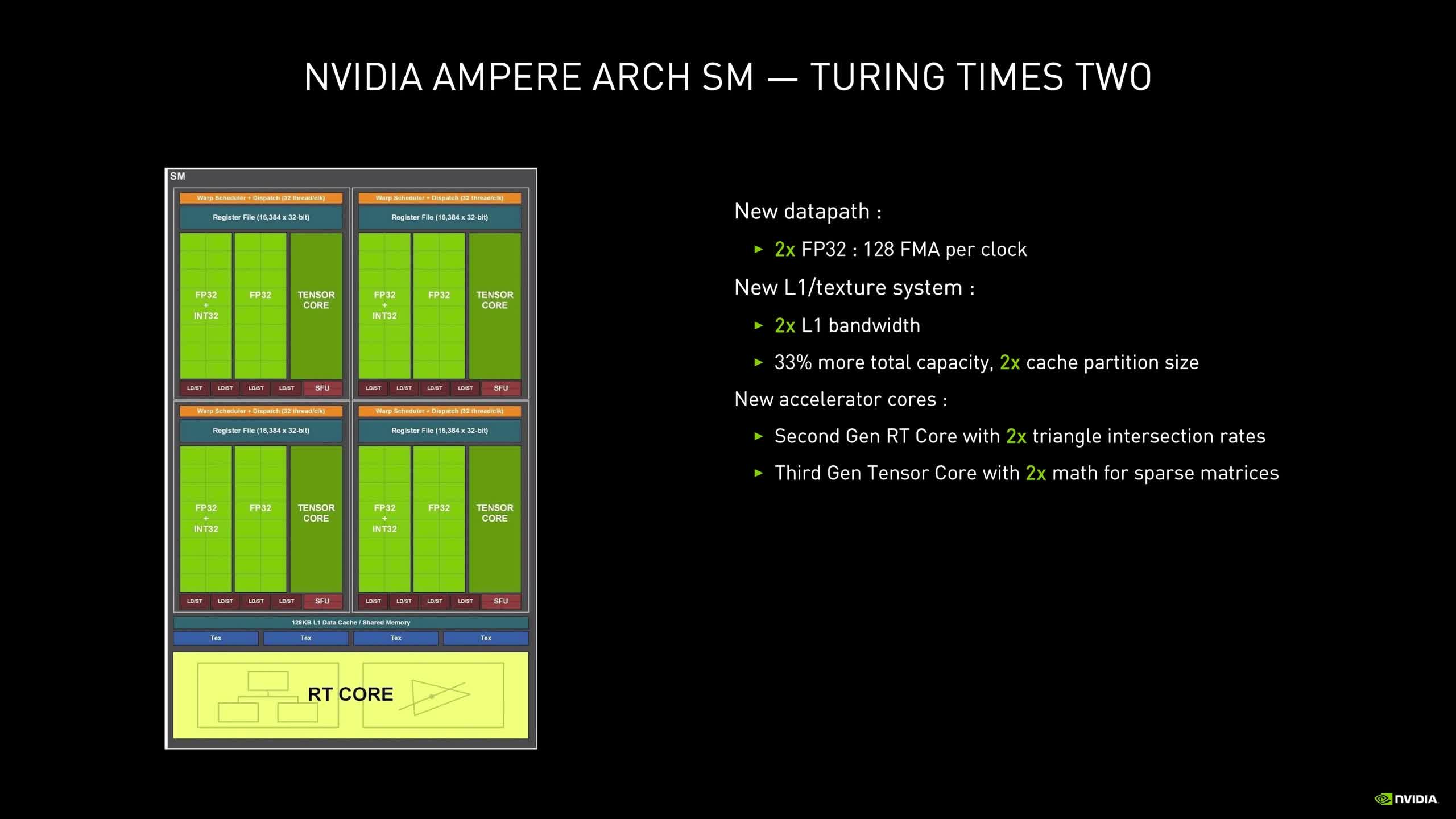

But simply writing this off as a CPU issue didn't sit right with us. In our review we tested with both the Ryzen 9 3950X and Core i9-10900K and both often saw the same resolution scaling. We noted that part of the reason for the weaker-than-expected 1440p performance was down to the Ampere architecture and the change to the SM configuration. The 2x FP32 design can only be fully utilized at 4K and beyond. This is because at 4K a larger portion of the render time per frame is spent on FP32 shader work. At lower resolutions like 1440p, the vertex and triangle load is identical to what we see at 4K, but at the higher resolution pixel shaders and compute effect shaders are more intensive, so they take longer and can therefore fill the SM's FP32 ALUs better.
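To put some numbers on the per-pixel side of that argument, here is a minimal Python sketch that works out how many pixels each tested resolution shades per frame. It assumes the standard 16:9 dimensions for each resolution, and the names `RESOLUTIONS`, `pixel_count` and `pixel_ratio` are ours, purely for illustration.

```python
# Standard 16:9 dimensions for the four resolutions tested (an assumption
# on our part; the article only refers to them by name).
RESOLUTIONS = {
    "4K": (3840, 2160),
    "1440p": (2560, 1440),
    "1080p": (1920, 1080),
    "720p": (1280, 720),
}


def pixel_count(name: str) -> int:
    """Number of pixels shaded per frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixel_ratio(high: str, low: str) -> float:
    """How many times more pixels `high` has per frame than `low`."""
    return pixel_count(high) / pixel_count(low)


if __name__ == "__main__":
    for high, low in [("4K", "1440p"), ("1440p", "1080p"), ("1080p", "720p")]:
        print(f"{high} vs {low}: {pixel_ratio(high, low):.2f}x the pixels")
```

4K has 2.25x the pixels of 1440p, so a workload bound purely by per-pixel shading could at best gain around 125% when dropping from 4K to 1440p. The vertex and triangle work doesn't shrink with resolution, which is one reason real games gain less than that.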

We often see high-performance GPUs better utilized at higher resolutions for similar reasons, so that in itself isn't unusual. Higher resolutions always tend to put core-heavy GPUs to work better, but they also minimize other system bottlenecks.

In our RTX 3080 review, we could also see how the RTX 2080 Ti extended its lead over the vanilla 2080 at 4K, going from ~23% faster at 1440p to ~28% faster at 4K. That's only a 5 percentage point difference, and the scaling at 1440p and 4K looks very similar.

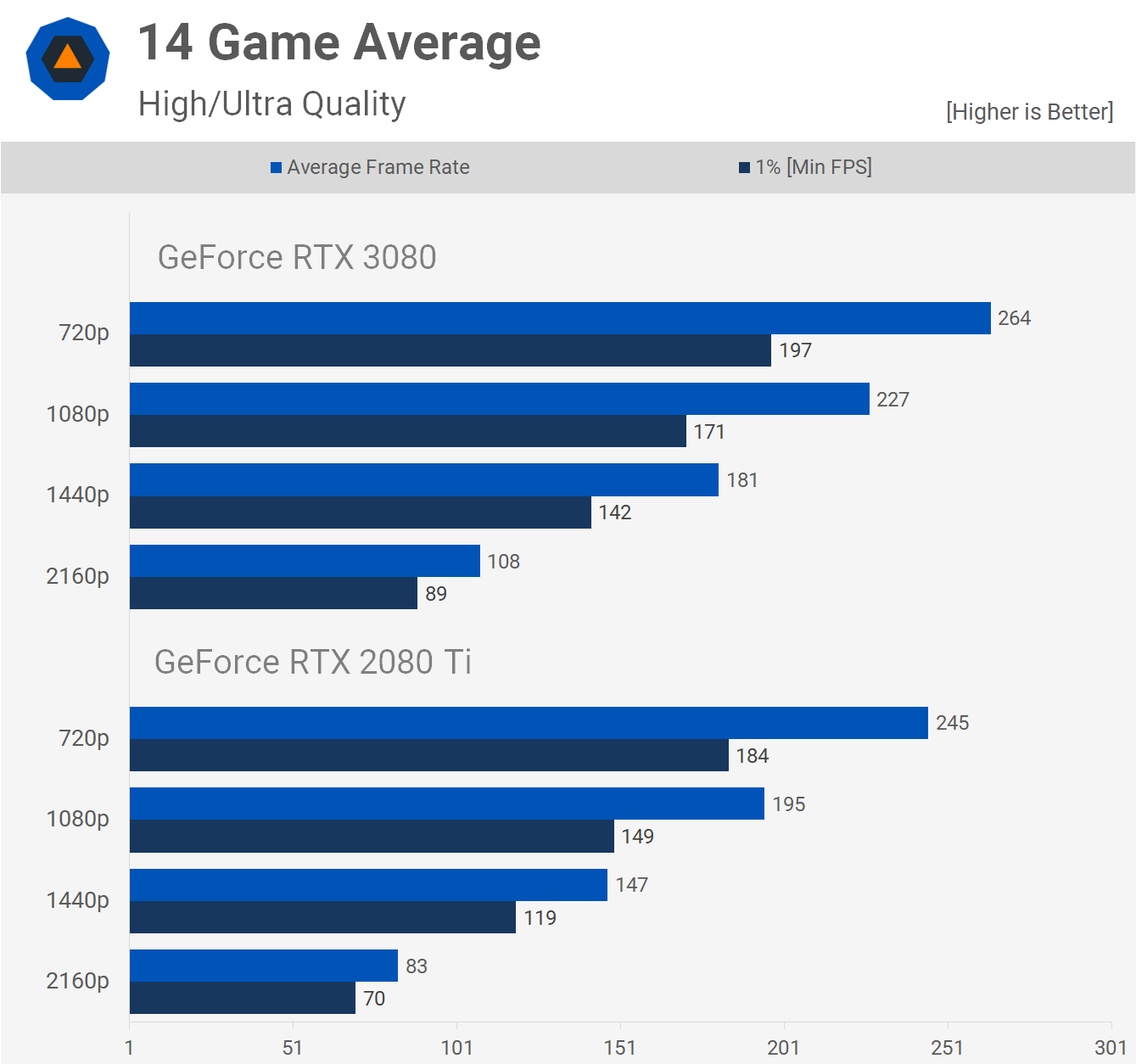

With the RTX 3080 we saw a 21% advantage over the 2080 Ti at 1440p and then a much larger 32% margin at 4K. We suggested this could be explained by the nature of the Ampere architecture, which is more compute-heavy and more datacenter and AI oriented than gaming-first. So with this article we're going to explore how true that statement is by adding 1080p and 720p data to the 14 games we test with. If we're seeing CPU performance influence the 1440p results, this will become very apparent with the 1080p data and extremely obvious at 720p.
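Those lead figures can be turned into a direct scaling comparison. If the faster card leads by one margin at 4K and a smaller margin at 1440p, the ratio of the two tells you how much more the slower card gains when the resolution drops. The Python sketch below shows that arithmetic using only the percentages quoted above; the function name `relative_scaling` is our own.

```python
def relative_scaling(lead_at_high_res_pct: float, lead_at_low_res_pct: float) -> float:
    """Extra gain (in %) the slower card sees over the faster card when the
    resolution is lowered, derived from the faster card's lead at each
    resolution.

    If lead = fps_fast / fps_slow, then
    (slow card's scaling) / (fast card's scaling) = lead_high / lead_low.
    """
    lead_high = 1 + lead_at_high_res_pct / 100
    lead_low = 1 + lead_at_low_res_pct / 100
    return (lead_high / lead_low - 1) * 100


if __name__ == "__main__":
    # RTX 2080 Ti vs RTX 2080: 28% lead at 4K, 23% at 1440p.
    print(f"2080 vs 2080 Ti:  {relative_scaling(28, 23):.1f}%")
    # RTX 3080 vs RTX 2080 Ti: 32% lead at 4K, 21% at 1440p.
    print(f"2080 Ti vs 3080:  {relative_scaling(32, 21):.1f}%")
```

By this measure the vanilla 2080 gains roughly 4% more than the 2080 Ti when dropping from 4K to 1440p, whereas the 2080 Ti gains roughly 9% more than the RTX 3080 over the same step.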

For this test we'll be running a Core i9-10900K at stock clocks and comparing the RTX 3080 against the 2080 Ti. Turing isn't necessarily the best architecture at scaling efficiently to lower resolutions, but it does seem to be better than Ampere, and considering there's no other GPU that comes close in terms of performance, it is what has to be used. Let's get into the results.

Benchmarks

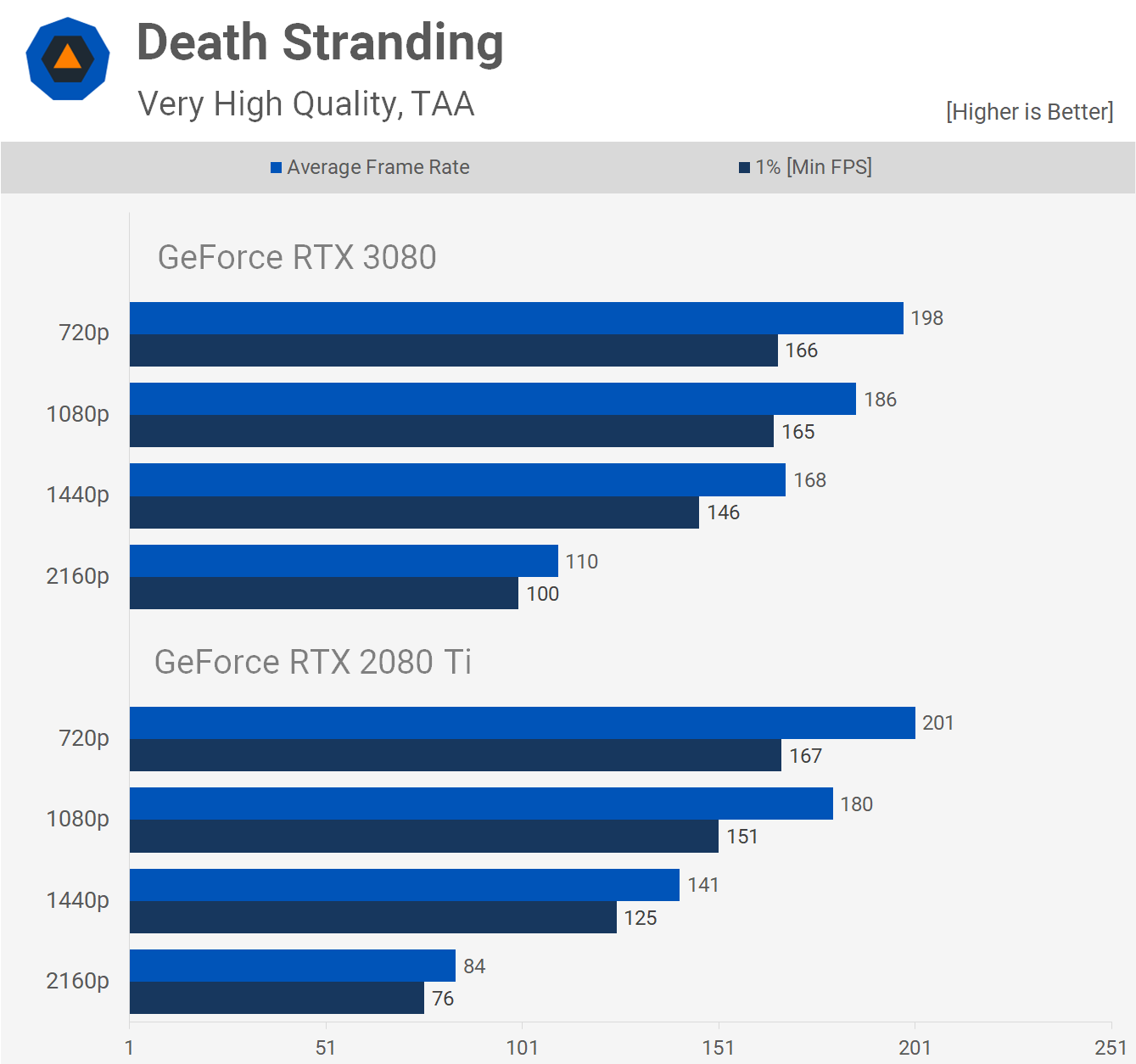

Starting with Death Stranding we see that frame rates for the 2080 Ti increased by 68% when moving from 4K to 1440p, and then 28% from 1440p to 1080p before maxing out at 201 fps at 720p. The RTX 3080 saw a 53% performance increase when dropping down to 1440p from 4K, and then just a further 11% increase from 1440p to 1080p.

By the time we hit the 1080p resolution we start to see the CPU limit performance, leaving very little headroom at 720p. That's interesting to note and it does mean that there's no CPU limitation at 1440p, so the weaker scaling cannot be blamed on the 10900K, not one bit.
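Keep in mind that these step-by-step gains compound rather than add. As a rough sketch, the Python snippet below chains the Death Stranding percentages quoted above to get the total uplift from 4K down to 1080p for each card. The helper name `compound_uplift` and the `DEATH_STRANDING` table are ours; the figures are the ones from the text.

```python
from math import prod


def compound_uplift(step_gains_pct):
    """Total uplift (in %) from chaining successive per-step gains."""
    return (prod(1 + gain / 100 for gain in step_gains_pct) - 1) * 100


# Per-step gains from the text: 4K -> 1440p, then 1440p -> 1080p.
DEATH_STRANDING = {
    "RTX 2080 Ti": [68, 28],
    "RTX 3080": [53, 11],
}

if __name__ == "__main__":
    for gpu, gains in DEATH_STRANDING.items():
        print(f"{gpu}: +{compound_uplift(gains):.0f}% from 4K to 1080p")
```

Chained together, the 2080 Ti ends up about 115% faster at 1080p than at 4K, while the RTX 3080 gains about 70% over the same range.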

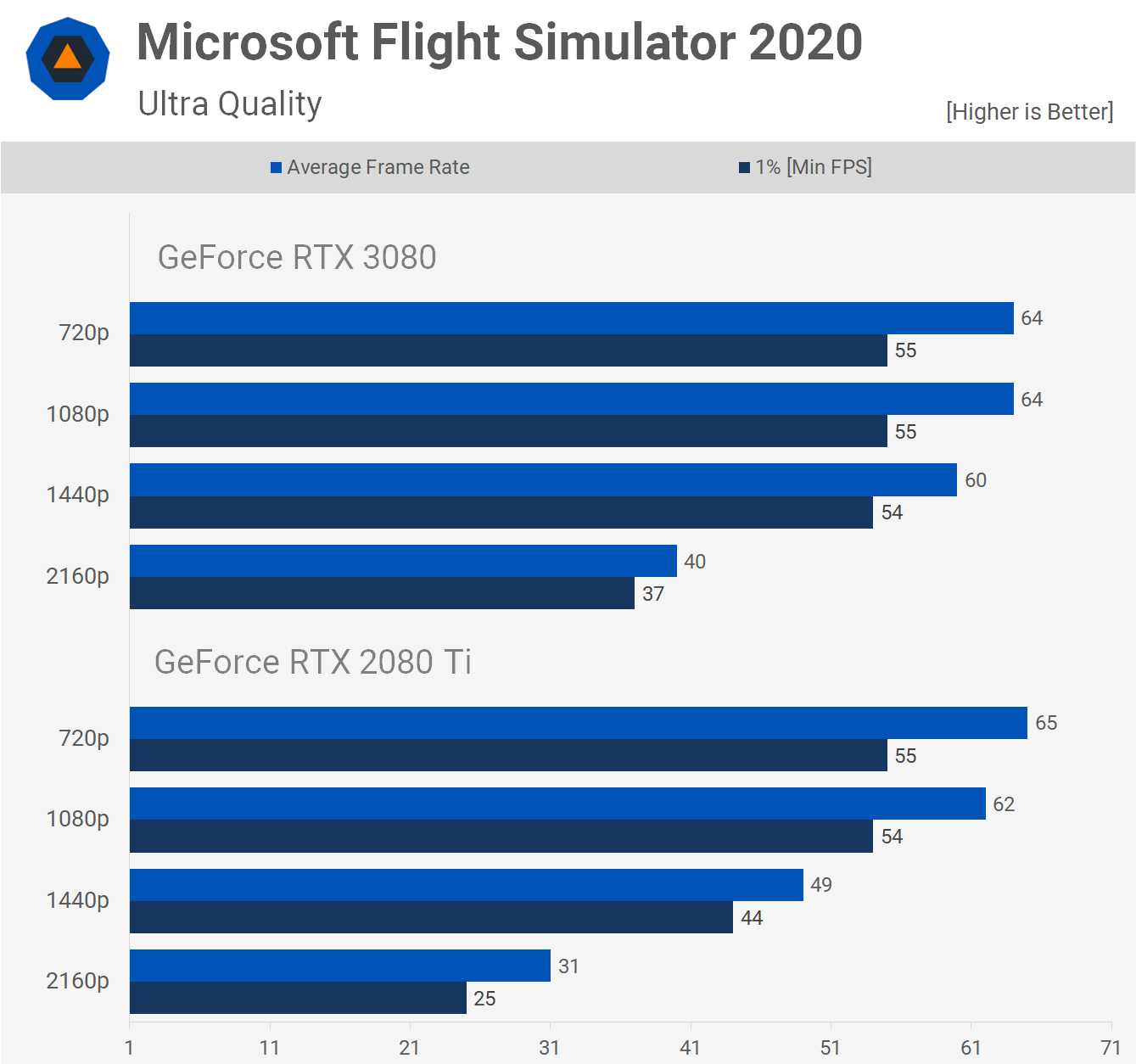

Microsoft Flight Simulator 2020 is heavily limited by CPU performance as its DX11 implementation mostly hammers a single core. Based on what we're seeing here it's plausible that the RTX 3080 is limited with the 10900K at 1440p, though given we do see a little more performance at 1080p and then a hard limit at 720p, we'd say we're just shy of limiting the 3080 at 1440p in this title.

Now if you thought the RTX 3080 was in any way CPU limited in Shadow of the Tomb Raider, well you'd be wrong. Starting with the 2080 Ti results, we're seeing an 82% performance uplift when dropping the resolution from 4K to 1440p, then a 45% increase from 1440p to 1080p and a further 47% increase from 1080p to 720p.

The RTX 3080 appears to scale reasonably well as performance is improved by 76% when dropping down to 1440p from 4K, then a 42% increase from 1440p to 1080p and finally a 33% increase from 1080p to 720p at which point the CPU might be starting to become the bottleneck. In other words, we're seeing good scaling from the 3080 in this game.

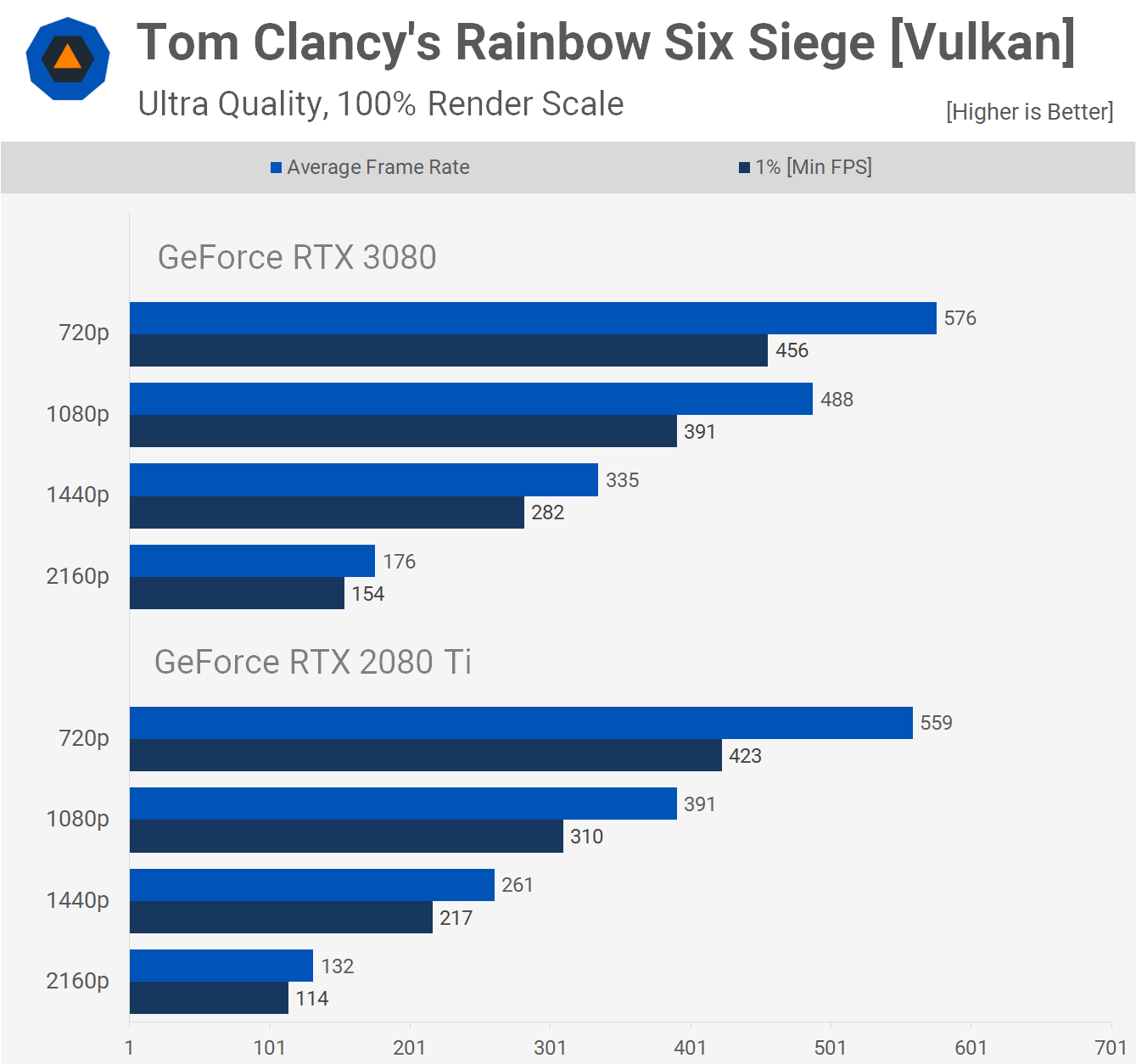

Next up we have Rainbow Six Siege, where we're clearly not CPU limited at 1080p, let alone 1440p. As we often see in Vulkan-based titles, scaling for core-heavy GPUs is actually quite good here.

Relative to the 2080 Ti, the RTX 3080 scales quite well: whereas the 2080 Ti saw a 98% improvement when lowering the resolution to 1440p, the RTX 3080 saw a 90% improvement.

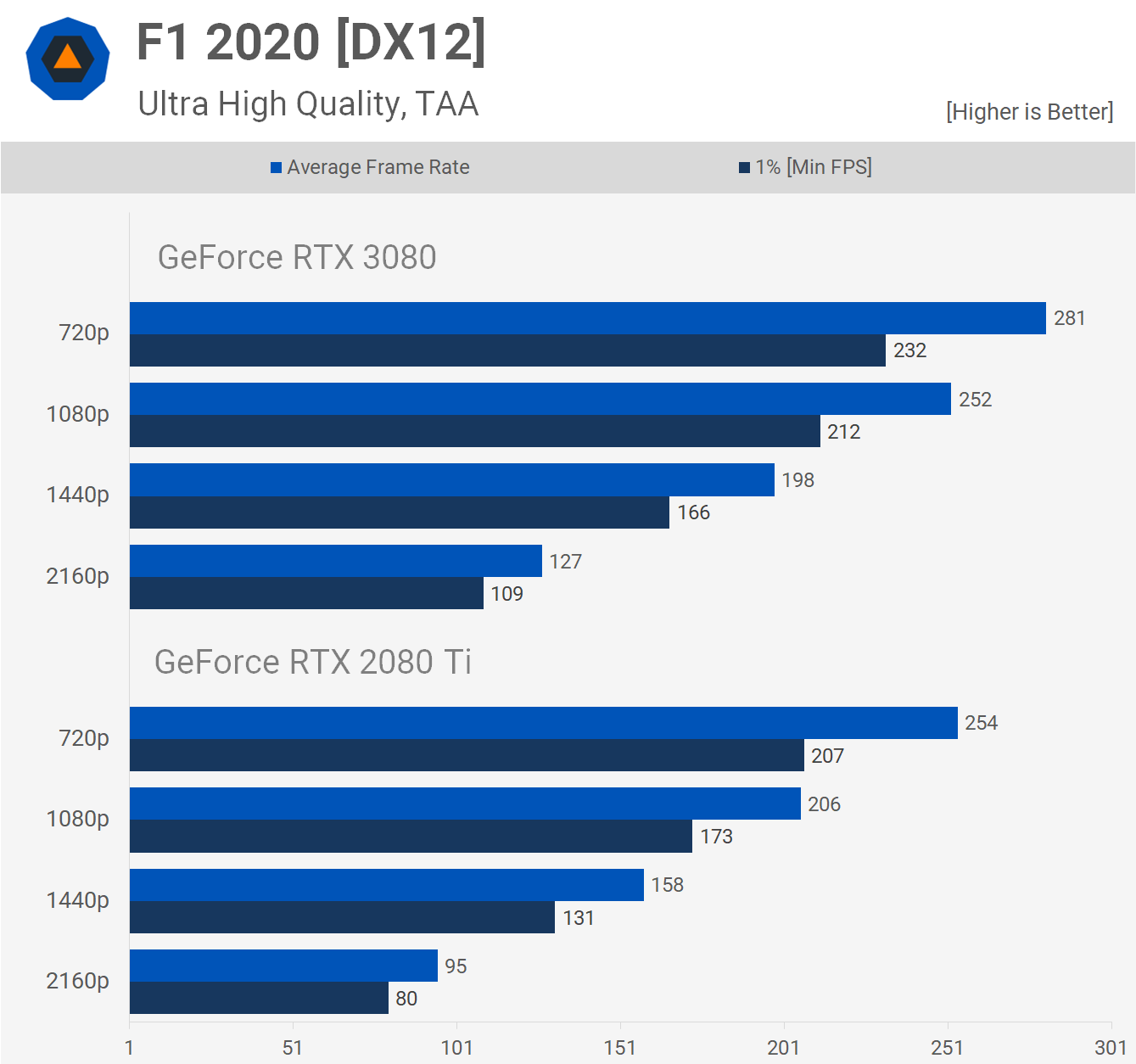

F1 2020 is another game where we're not CPU limited even at 1080p with the RTX 3080/10900K combo. Resolution scaling is also much better with the RTX 2080 Ti, which saw a 66% performance uplift when reducing the resolution from 4K to 1440p and then 30% from 1440p to 1080p. The RTX 3080 only saw a 56% performance improvement when lowering the resolution from 4K to 1440p and then 27% from 1440p to 1080p.

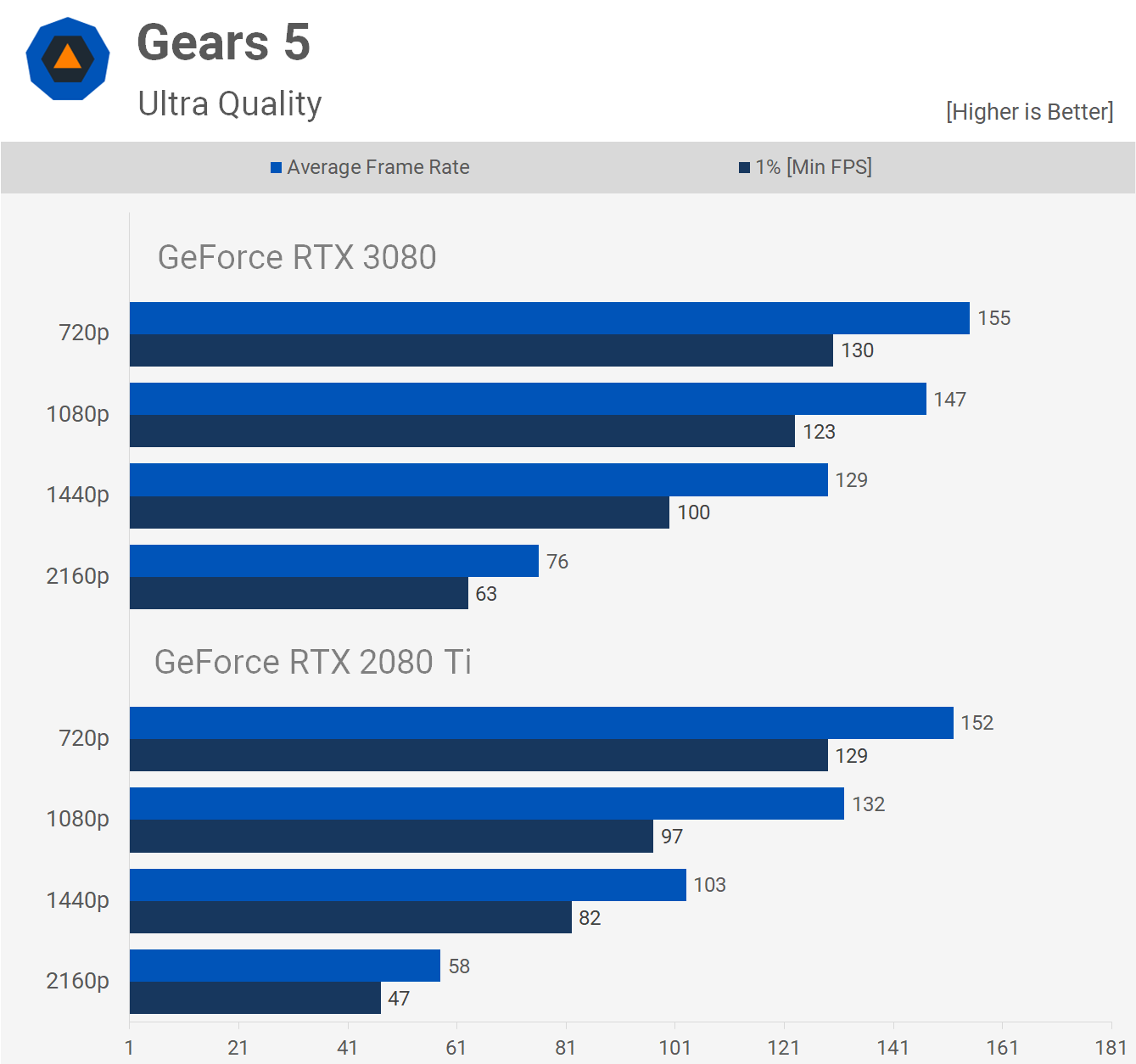

We also find no CPU bottleneck in Gears 5 with the RTX 3080, all the way down to 1080p. The 2080 Ti does scale better from 4K to 1440p, but it's not a significant difference. The margin does widen when going from 1440p to 1080p, but we are starting to approach the hard limits of the CPU.

The RTX 2080 Ti scales considerably better in Horizon Zero Dawn. We see that from 4K to 1440p the Turing GPU enjoys an 83% performance boost, and then a 46% boost from 1440p to 1080p. The RTX 3080 sees a 70% performance increase when lowering the resolution from 4K to 1440p, and then just 21% from 1440p to 1080p despite not running into a CPU bottleneck. The game simply can't keep all those CUDA cores loaded with work at these lower resolutions and therefore efficiency drops off considerably.

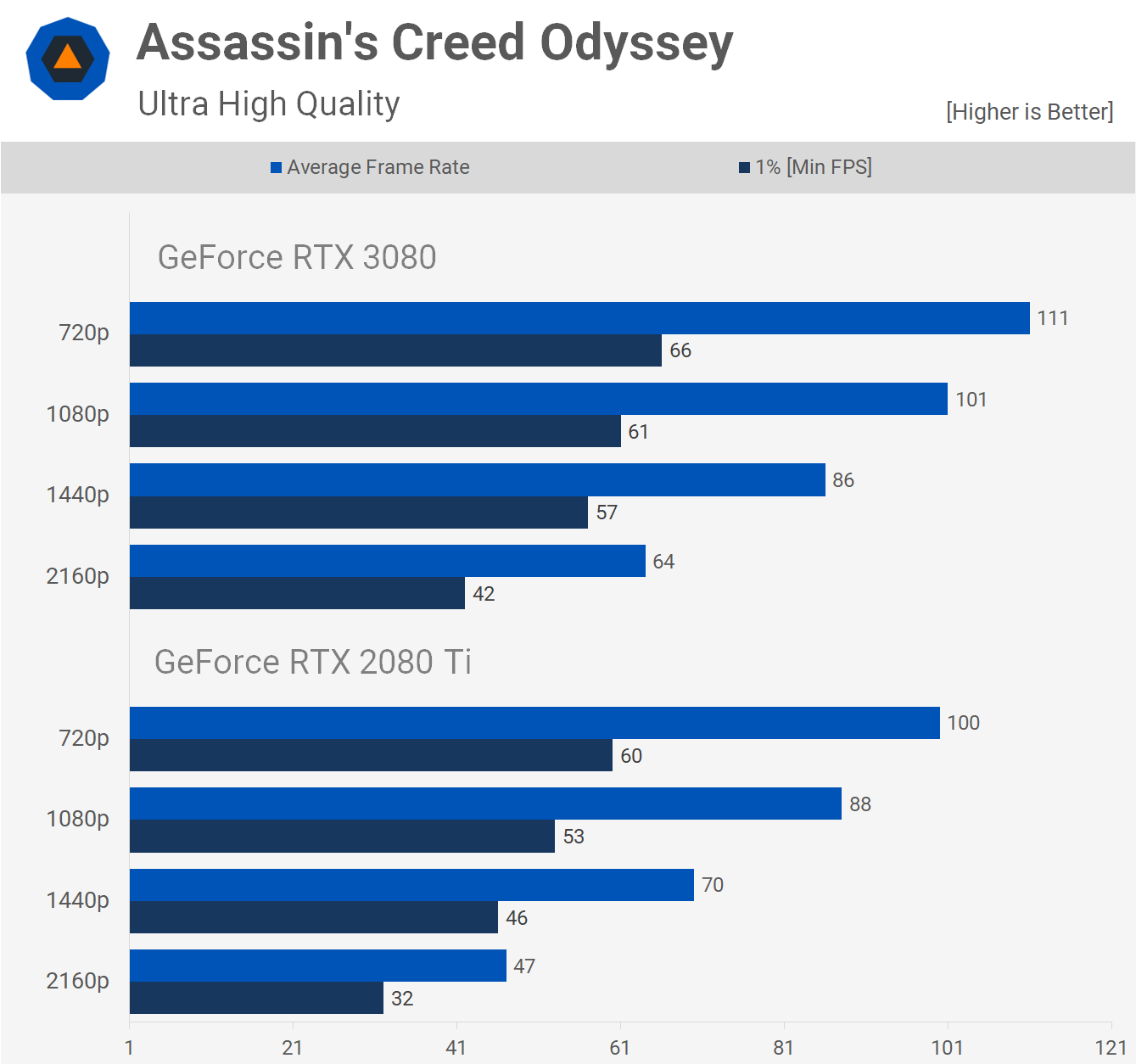

It's a similar story when testing with Assassin's Creed Odyssey. The 2080 Ti saw a 49% jump when lowering the resolution to 1440p, while the 3080 gets bumped by just 34%, and a similar thing is seen when reducing the resolution from 1440p to 1080p.

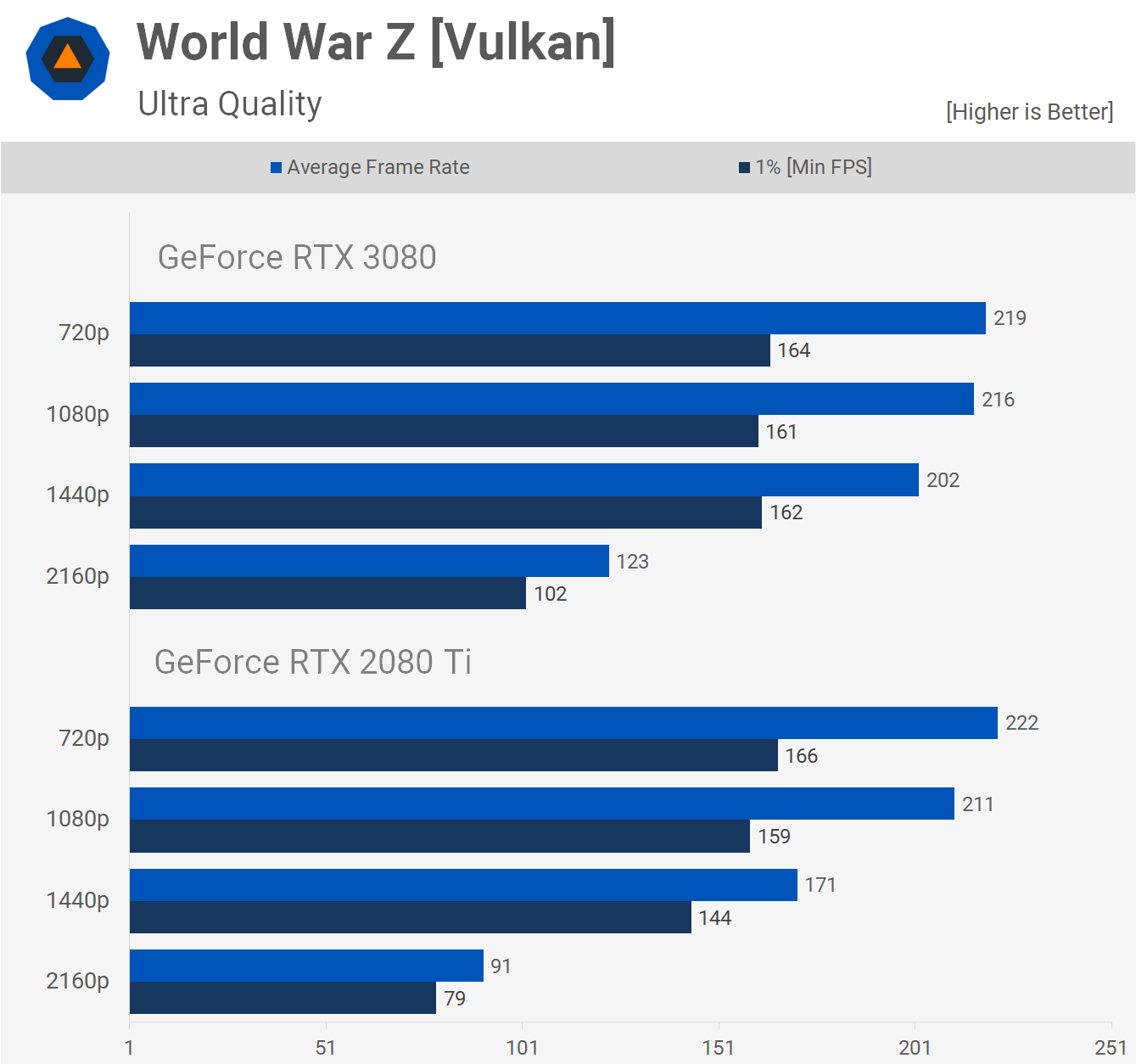

World War Z is another title based on the Vulkan API which sees good utilization for the RTX 3080, even at 1440p. In this instance we do run into a CPU bottleneck, evidenced by the 1% low results. These results are not an example of the Ampere architecture scaling poorly across lower resolutions, but rather an example of a game that does become CPU limited at 1440p even with a fast Core i9 CPU when coupled with a high performance GPU.

Metro Exodus is a bit strange when it comes to performance, though we are talking about frame rates up around 200 fps. There's some kind of bottleneck going on with the RTX 3080. We don't think it's necessarily the CPU, but rather a driver overhead issue that affects scheduling for core-heavy GPUs like the RTX 3080.

Either way, we think these results are very interesting. The 2080 Ti and 3080 deliver identical performance at 1080p, while the 2080 Ti gains more performance when lowering the resolution to 720p. Of course, this 720p performance metric is irrelevant for gaming as no one is going to use it, but it does show how Turing appears to scale better at lower resolutions.

Resolution scaling performance is fairly consistent in Resident Evil 3 between the 2080 Ti and 3080. Both saw roughly a 90% performance uplift when dropping from 4K to 1440p, and then exactly 47% when going from 1440p to 1080p. It's not until we drop down to 720p that the 2080 Ti sees a larger performance gain, but we could also be reaching the limits of the CPU with the RTX 3080, which in absolute terms remains the faster GPU at all resolutions regardless.

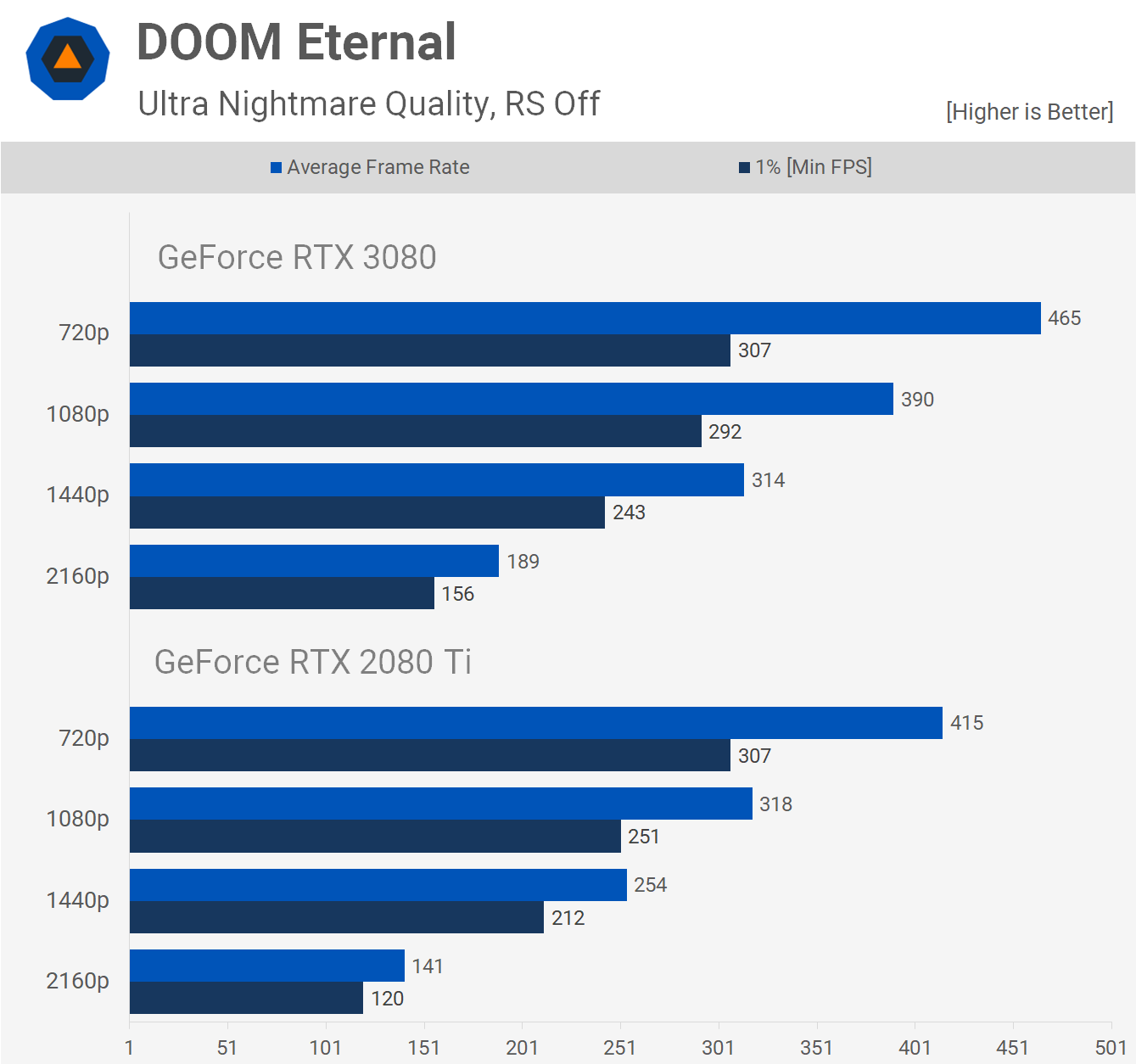

Moving on to Doom Eternal, this is a game you might have expected to scale well on the RTX 3080, at least I did. While not terrible, we're getting much better margins with the 2080 Ti, which saw an 80% performance increase when going from 4K to 1440p. The 3080, on the other hand, saw a 66% improvement. There is no chance the CPU is limiting performance at 1440p given we see almost 50% more frames when dropping right down to 720p.

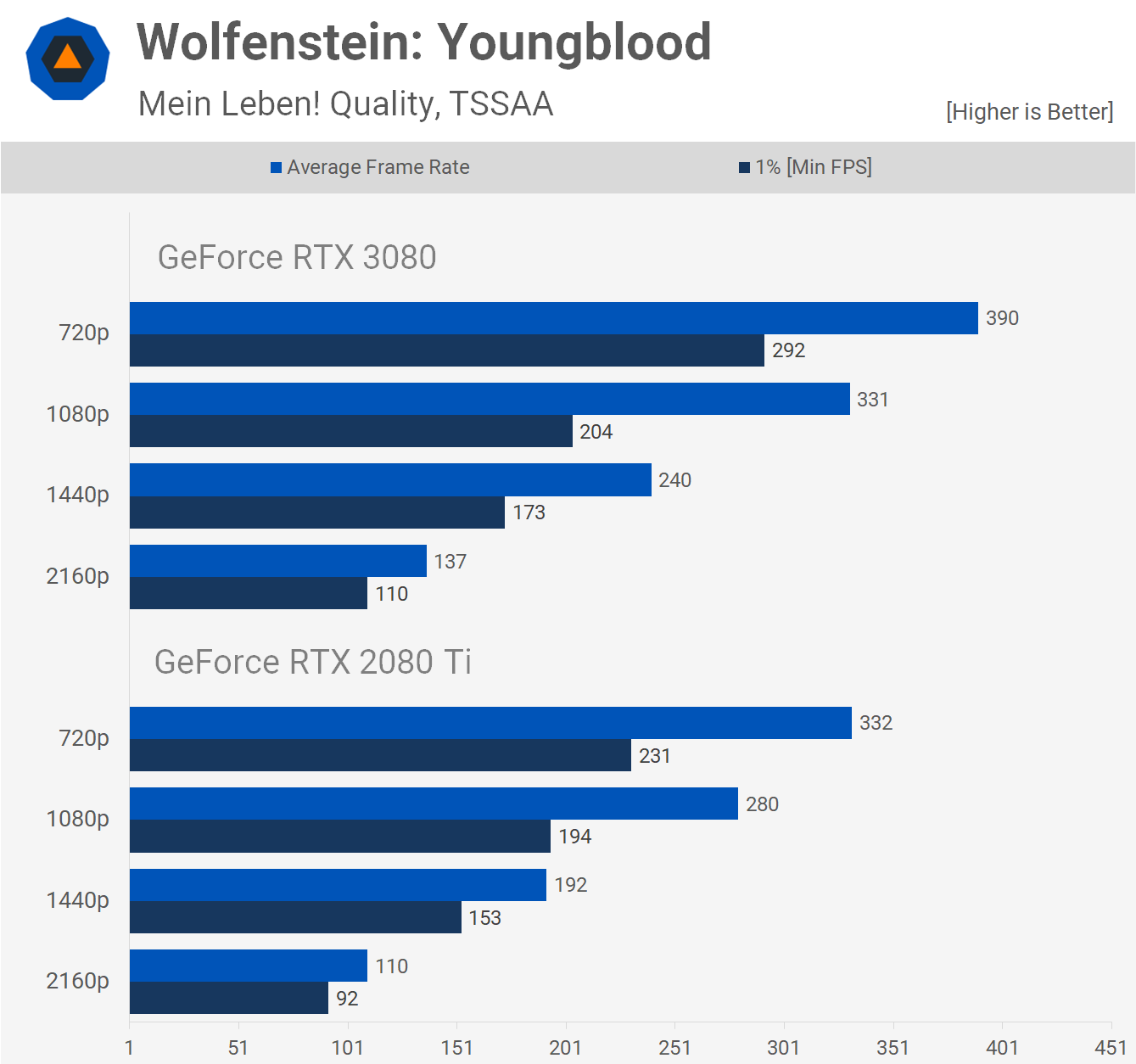

Despite what we saw in Doom Eternal, scaling between the 2080 Ti and 3080 is very consistent in Wolfenstein: Youngblood. Both saw a 75% performance boost when dropping the resolution from 4K to 1440p and frankly this is more what we expected to see in Doom.

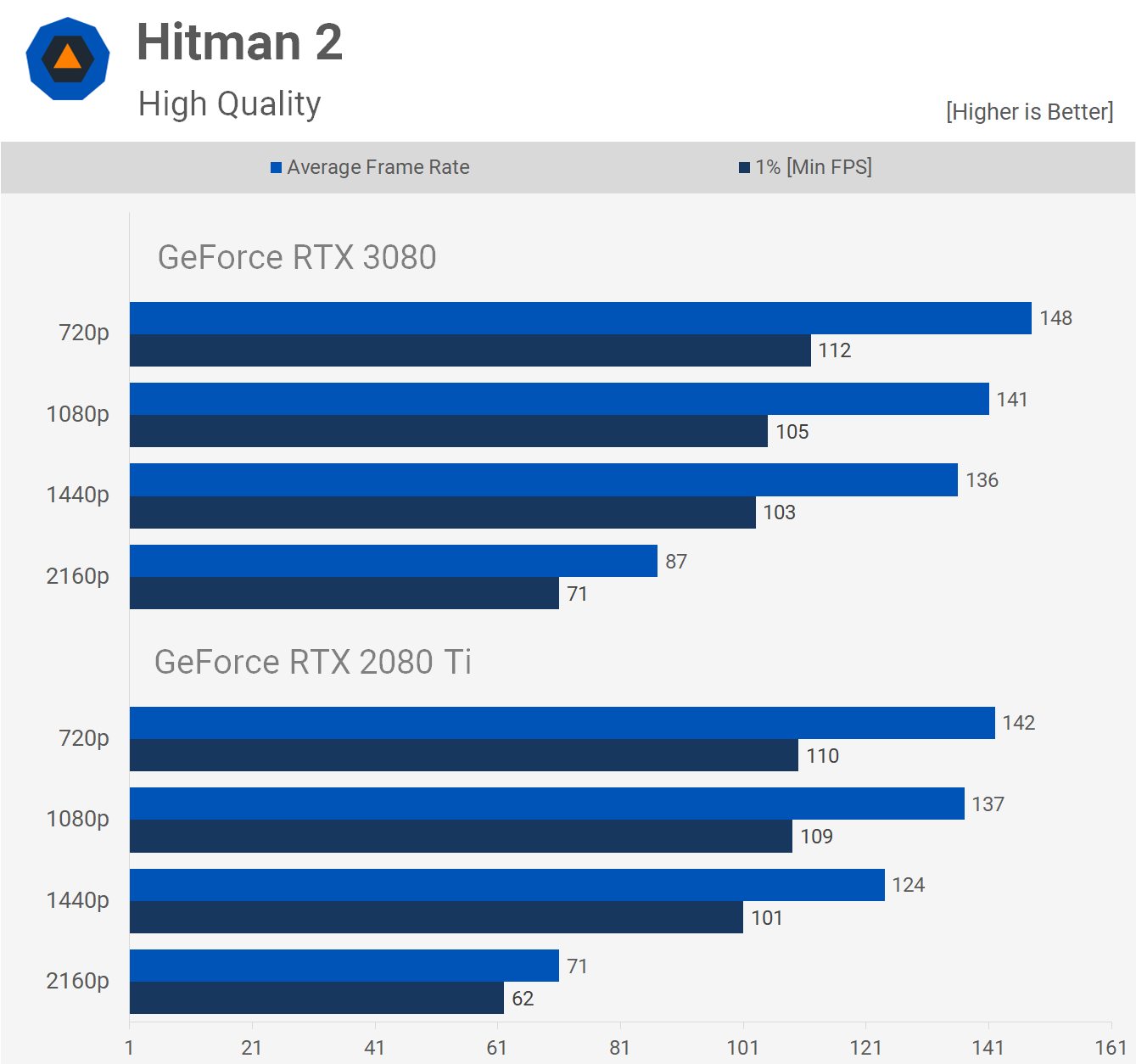

Finally we have Hitman 2 which does start to limit the performance of the RTX 3080 at 1440p due to the CPU, though we think this is down to how the game is coded.

Here's a look at the 14-game average, which lines up exactly with what we saw in our GeForce RTX 3080 review using the Ryzen 9 3950X. The 2080 Ti scales ~10% better from 4K to 1440p on average, with a similar margin seen when going from 1440p to 1080p.

What We Learned

Based on the results we just saw, when performance doesn't scale at lower resolutions as well as it did on previous architectures, we can't always blame Ampere, but equally we can't always blame a CPU bottleneck. It will depend on the game and the hardware used for testing.

It's clear though that in games like Assassin's Creed Odyssey, Doom Eternal, F1 2020, Horizon Zero Dawn and Rainbow Six Siege, the Ampere architecture is what limits 1440p performance relative to what's seen at 4K. In those games there was no CPU bottleneck at the 1440p resolution, and the same was true for even 1080p. Death Stranding is another game that didn't run into a CPU bottleneck at 1440p, but we did start to find the CPU limitations at 1080p. A similar situation was seen with Gears 5 and then some weirdness in Metro Exodus that was likely the result of a combination of things.

Then we found just three examples where you could blame the CPU for limiting 1440p performance, or at least it was right on the verge of doing so: Hitman 2, Microsoft Flight Simulator 2020 and World War Z.

We also saw three examples where the Ampere architecture scaled very well, at least relative to the 2080 Ti: Resident Evil 3, Shadow of the Tomb Raider, and Wolfenstein: Youngblood. So it's not always the case that 1440p performance is limited relative to the 4K throughput, and this was seen in our day-one review where the RTX 3080 ranged anywhere from ~70% faster than the vanilla RTX 2080 to as little as 40% when not CPU limited.

Our overall outlook for the RTX 3080 has not changed, and that means the GPU is on average about 70% faster than the 2080 at 4K, and 50% faster at 1440p which is awesome.

It'll be interesting to see if AMD can deliver a gaming-focused GPU with RTX 3080/3090-like performance at 4K and if so, if they can deliver a performance advantage at lower resolutions like 1440p where Ampere is often less impressive.

If you claim the RTX 3080 is CPU limited at 1440p and you're using a Core i7-8700K or better, chances are you'll be wrong on that call more often than not. But this really wasn't about right or wrong, but rather about working out what's going on, as that helps you decide what you do or don't need to upgrade.

On that note, we'll have a follow-up benchmark test with the RTX 3080 using the $200 Ryzen 5 3600, both running at stock and overclocked with manually tuned memory, then we'll take those results and pit them against the 3950X and 10900K. Stay tuned for that comparison soon.