Today's comparison uses fresh data for the GeForce RTX 2080 Ti, GTX 1080 Ti and GTX 980 Ti. We're currently in the process of updating all our GPU data in anticipation of Nvidia's soon to be released GeForce 30 series. So we thought, why not compare five years of flagship GeForce GPUs while we're at it?

Before we jump into all the benchmark data, let's compare these three GPUs on paper. Looking at this basic side-by-side spec breakdown we see that back in 2015 the most you were paying for a GeForce GPU was $650, a price that no doubt seemed steep at the time. Then two years later the GTX 1080 Ti pumped that price point up to $700, which seemed reasonable given how significant that upgrade was in every conceivable way. Then 18 months after that we got the RTX 2080 Ti and this is where things seemed to get out of hand.

Pricing for the flagship GeForce GPU jumped by ~43% to $1,000, or at least that's what Nvidia told us upon release. The plan was for Nvidia to charge a fat premium for their Founders Edition model, flogging them off for $1,200. To our knowledge, the $1,000 models never really happened, at least not for long if they did and certainly not without some kind of special deal.

So we're not going to pretend the RTX 2080 Ti is or was a $1,000 product, when $1,200 is far more realistic. That's about a 70% increase in price over the previous generation flagship part. Nvidia justified this premium with the promise of ray tracing and DLSS, the latter of which is now delivering some great results, despite the very limited game support. In our opinion ray tracing was a massive bust for this generation, but let's not get bogged down by all that in this article, let's just focus on raw performance.
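The price jumps quoted above are simple percentage changes on the launch prices. A minimal Python sketch of that arithmetic follows; the helper name `pct_increase` and the constant names are ours, chosen for illustration only.

```python
def pct_increase(old: float, new: float) -> float:
    """Percentage increase when going from `old` to `new`."""
    return (new - old) / old * 100.0


# Launch prices in USD, as quoted in the article.
PRICE_980_TI = 650
PRICE_1080_TI = 700
PRICE_2080_TI_MSRP = 1000  # Nvidia's stated starting price
PRICE_2080_TI_FE = 1200    # Founders Edition price, treated here as the realistic one

print(f"980 Ti -> 1080 Ti:          {pct_increase(PRICE_980_TI, PRICE_1080_TI):.1f}%")       # 7.7%
print(f"1080 Ti -> 2080 Ti ($1000): {pct_increase(PRICE_1080_TI, PRICE_2080_TI_MSRP):.1f}%")  # 42.9%
print(f"1080 Ti -> 2080 Ti ($1200): {pct_increase(PRICE_1080_TI, PRICE_2080_TI_FE):.1f}%")    # 71.4%
```

Rounded, those are the ~43% and ~70% figures used throughout this article.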

| | GeForce GTX 980 Ti | GeForce GTX 1080 Ti | GeForce RTX 2080 Ti |
|---|---|---|---|
| Price (at release) | $650 | $700 | $1,200 |
| Release Date | June 2015 | March 2017 | September 2018 |
| Process | TSMC 28HP | TSMC 16FF | TSMC 12FFN |
| Transistors (billion) | 8 | 12 | 18.6 |
| Die Size (mm²) | 601 | 471 | 754 |
| Core Config (shaders / TMUs / ROPs) | 2816 / 176 / 96 | 3584 / 224 / 88 | 4352 / 272 / 88 |
| Core Clock (base / boost) | 1000 / 1075 MHz | 1480 / 1582 MHz | 1350 / 1545 MHz |
| Memory Capacity | 6 GB | 11 GB | 11 GB |
| Memory Speed | 7 Gbps | 11 Gbps | 14 Gbps |
| Memory Type | GDDR5 | GDDR5X | GDDR6 |
| Bus Width | 384-bit | 352-bit | 352-bit |
| TDP | 250 W | 250 W | 250 W |

We've established that the RTX 2080 Ti cost ~70% more than the 1080 Ti, but looking at the specs it's hard to justify why, at least when comparing specifications that translate into tangible performance gains. Yes, the die size increased by 60%, but a lot of that space was occupied by wider cores supporting stuff like accelerated ray tracing.

The core count was expanded by 21% and while they include added functionality, we don't think we've seen that translate into performance gains that come anywhere near justifying the price increase. The memory spec is also somewhat underwhelming as Nvidia only offered an 11 GB memory buffer, the same capacity seen with the 1080 Ti which was an 83% upgrade over its predecessor, the 980 Ti.

Moreover, whereas the 1080 Ti's memory speed was boosted by almost 60% over the 980 Ti (7 to 11 Gbps), the 2080 Ti's memory was just shy of 30% faster than what we saw with the 1080 Ti (11 to 14 Gbps).
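The spec table lists per-pin memory speed and bus width separately. Peak theoretical bandwidth combines the two: data rate times bus width, divided by 8 to convert bits to bytes. The sketch below works that out from the table's figures. The class and function names are illustrative, and the result is a theoretical peak rather than a measured number.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemorySpec:
    name: str
    data_rate_gbps: float  # per-pin data rate ("Memory Speed" in the table)
    bus_width_bits: int    # memory bus width from the table

    @property
    def bandwidth_gb_s(self) -> float:
        # Gbit/s per pin * number of pins, divided by 8 to get GB/s.
        return self.data_rate_gbps * self.bus_width_bits / 8


def pct_gain(old: float, new: float) -> float:
    """Percentage gain going from `old` to `new`."""
    return (new / old - 1) * 100.0


cards = [
    MemorySpec("GTX 980 Ti", 7, 384),
    MemorySpec("GTX 1080 Ti", 11, 352),
    MemorySpec("RTX 2080 Ti", 14, 352),
]

for card in cards:
    print(f"{card.name}: {card.bandwidth_gb_s:.0f} GB/s")

# Compare each card with its predecessor.
for prev, cur in zip(cards, cards[1:]):
    rate = pct_gain(prev.data_rate_gbps, cur.data_rate_gbps)
    bw = pct_gain(prev.bandwidth_gb_s, cur.bandwidth_gb_s)
    print(f"{prev.name} -> {cur.name}: data rate +{rate:.0f}%, bandwidth +{bw:.0f}%")
```

Because the bus narrowed from 384-bit to 352-bit, the 1080 Ti's bandwidth gain over the 980 Ti (about 44%) is smaller than its gain in per-pin speed (about 57%). The 1080 Ti and 2080 Ti share a bus width, so both measures rise by the same 27%.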

In the end, the GTX 1080 Ti was a huge upgrade over the 980 Ti, offering ~70% more performance upon release. The 2080 Ti on the other hand was on average 30% faster than the 1080 Ti at 4K, and 23% faster at 1440p. Essentially, Nvidia made no improvement to the price to performance ratio of their GeForce products with the release of Turing.
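To put a rough number on the price to performance point, the sketch below divides the performance ratio by the price ratio for each generational step. It uses only the launch-era averages and release prices quoted above. The function name `perf_per_dollar_change` is a hypothetical helper, and treating a single average uplift as "performance" is a simplifying assumption.

```python
def perf_per_dollar_change(perf_gain_pct: float, old_price: float, new_price: float) -> float:
    """Percentage change in performance per dollar between two cards.

    perf_gain_pct: how much faster the new card is, in percent.
    """
    perf_ratio = 1 + perf_gain_pct / 100.0
    price_ratio = new_price / old_price
    return (perf_ratio / price_ratio - 1) * 100.0


# GTX 980 Ti ($650) -> GTX 1080 Ti ($700), ~70% faster at launch.
pascal_step = perf_per_dollar_change(70, 650, 700)

# GTX 1080 Ti ($700) -> RTX 2080 Ti ($1,200), 30% faster at 4K, 23% at 1440p.
turing_step_4k = perf_per_dollar_change(30, 700, 1200)
turing_step_1440p = perf_per_dollar_change(23, 700, 1200)

# Same 4K comparison at the nominal $1,000 price that rarely materialized.
turing_step_4k_msrp = perf_per_dollar_change(30, 700, 1000)

print(f"980 Ti -> 1080 Ti:               {pascal_step:+.0f}%")        # about +58%
print(f"1080 Ti -> 2080 Ti (4K):         {turing_step_4k:+.0f}%")     # about -24%
print(f"1080 Ti -> 2080 Ti (1440p):      {turing_step_1440p:+.0f}%")
print(f"1080 Ti -> 2080 Ti (4K, $1,000): {turing_step_4k_msrp:+.0f}%")
```

On these launch-time figures, performance per dollar went backwards with Turing whichever price you pick, while Pascal improved it substantially over Maxwell.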

Nvidia did suggest that performance would improve as games better utilized the new architecture. A key architectural change between Pascal and Turing was the addition of two extra blocks in each SM unit. Where Pascal was essentially just a massive collection of floating point units, Turing introduced Tensor cores and integer units in addition to the standard floating point units. Although this made the core larger, Nvidia claimed it was worth it as the concurrent FP32 and INT32 execution would enable a handy performance boost.

The idea is that integer instructions that previously ran on the floating point units could now be offloaded to the integer pipeline in Turing and run concurrently with floating point instructions. Nvidia claimed that on average, 36 integer instructions could be offloaded and run on the integer cores per 100 floating point instructions, though this would vary depending on the game. In short, Nvidia claimed that concurrent execution helps improve shading performance by 50% or more in some situations.
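As a back-of-the-envelope illustration of that claim, consider a toy model in which every instruction costs one issue slot, the integer and floating point streams overlap perfectly, and nothing else limits throughput. These are our assumptions, not Nvidia's methodology, and real shaders will not behave this cleanly.

```python
def ideal_speedup(fp_instr: int, int_instr: int) -> float:
    """Upper-bound speedup from running INT and FP streams concurrently.

    Toy model: one issue slot per instruction and perfect overlap.
    """
    # Shared pipeline: integer work queues up behind floating point work.
    serial_slots = fp_instr + int_instr
    # Separate pipelines: the longer of the two streams sets the run time.
    concurrent_slots = max(fp_instr, int_instr)
    return serial_slots / concurrent_slots


# Nvidia's quoted average mix: 36 integer instructions per 100 floating point.
print(f"36 INT per 100 FP: {ideal_speedup(100, 36):.2f}x")
# Mix that this toy model would need to reach a 1.5x gain.
print(f"50 INT per 100 FP: {ideal_speedup(100, 50):.2f}x")
```

Under these assumptions the average mix caps out at a 36% gain. The 50% figure would require a more integer-heavy workload or other improvements that this model does not capture.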

Two years down the track and we're about to see the RTX 2080 Ti become a thing of the past... so has the margin between it and the 1080 Ti grown, or are we still looking at about a 30% increase on average? To find out we'll be testing both GPUs along with the 980 Ti in our new Ryzen 9 3950X GPU test system.

Benchmarks

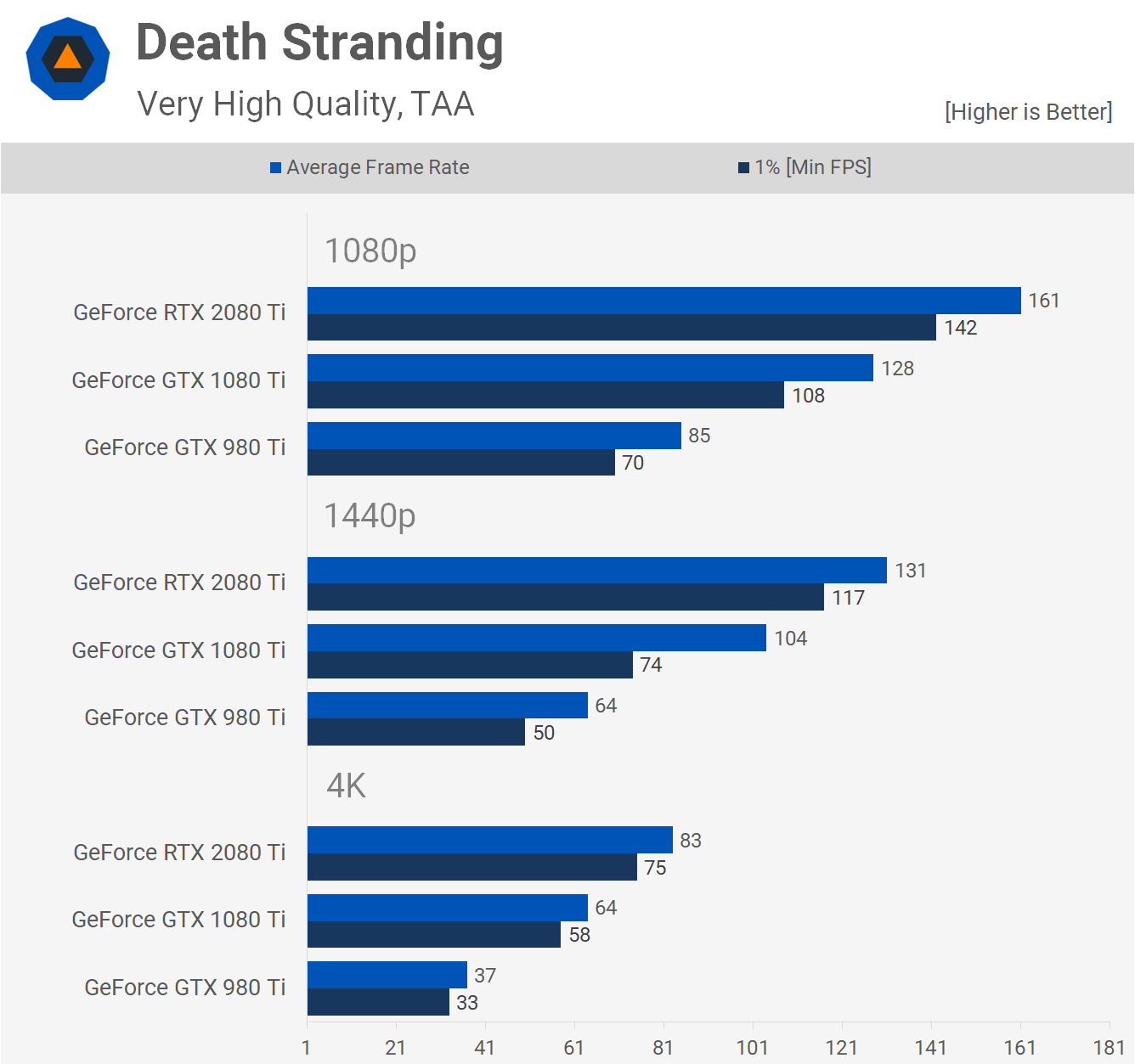

Starting with Death Stranding at 1440p, we can see the 1080 Ti is 63% faster than the 980 Ti. Quite a massive performance boost there. The 2080 Ti is 26% faster than the 1080 Ti and that margin grows to ~30% at 4K. So in one of the newest and most visually impressive games released to date, the margins are pretty much bang on with what we saw two years back.

Next up we have Microsoft Flight Simulator 2020, where we see a strong CPU bottleneck at 1080p, though this also happens with the Core i9-10900K as the game only supports DX11 at this point. Anyway, we're mostly interested in the 1440p and 4K results where the CPU is not a performance limiting component.

At 1440p the 2080 Ti was 22% faster than the 1080 Ti while the 1080 Ti was 80% faster than the 980 Ti. The margin between the 2080 Ti and 1080 Ti did grow considerably at 4K as here the Turing GPU was 35% faster.

Shadow of the Tomb Raider sees the 2080 Ti beating the 1080 Ti by a 20% margin at 1440p, which is disappointing given the 1080 Ti is seen to be a whopping 81% faster than the 980 Ti. Those margins are replicated at 4K as well, again the 2080 Ti was just 20% faster than the 1080 Ti. Granted, that's an important improvement at 4K, taking the average frame rate from 56 fps up to 67 fps, but still a disappointing result overall.

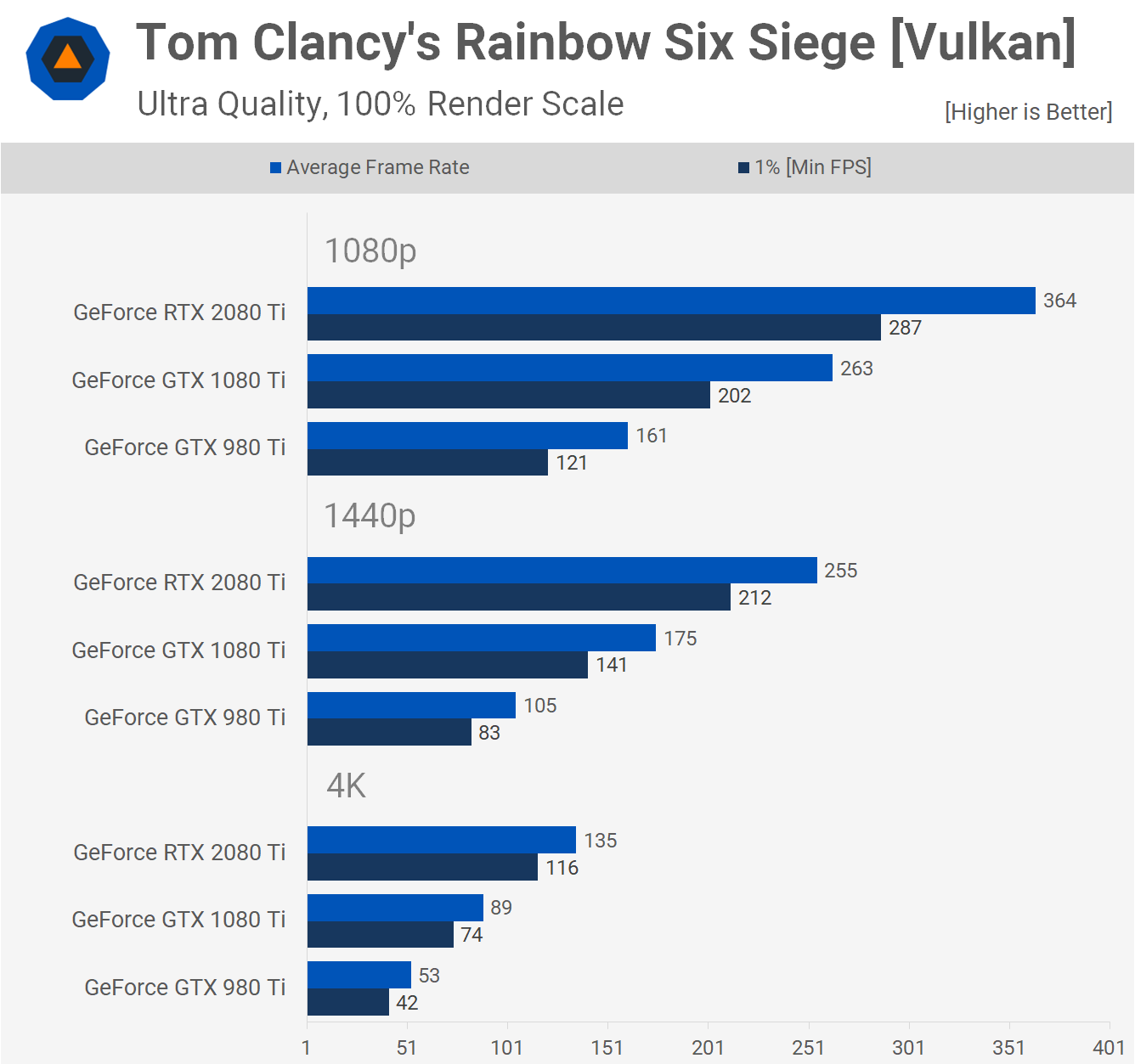

A game where Turing has been very successful is Rainbow Six Siege, especially when using the Vulkan API which enables AsyncCompute. This is a hardware capability that allows the GPU to execute tasks in parallel and Ubisoft has used it to optimize graphics techniques such as ambient occlusion and screen space reflections.

Turing introduced hardware support for AsyncCompute and this has enabled larger than expected performance gains in Rainbow Six Siege. Here the 2080 Ti was 46% faster than the 1080 Ti at 1440p and 52% faster at 4K. This is a much more impressive margin.

The 1080 Ti was also 67% faster than the 980 Ti at 1440p and 68% faster at 4K, so we're still seeing a bigger generational leap there nonetheless.

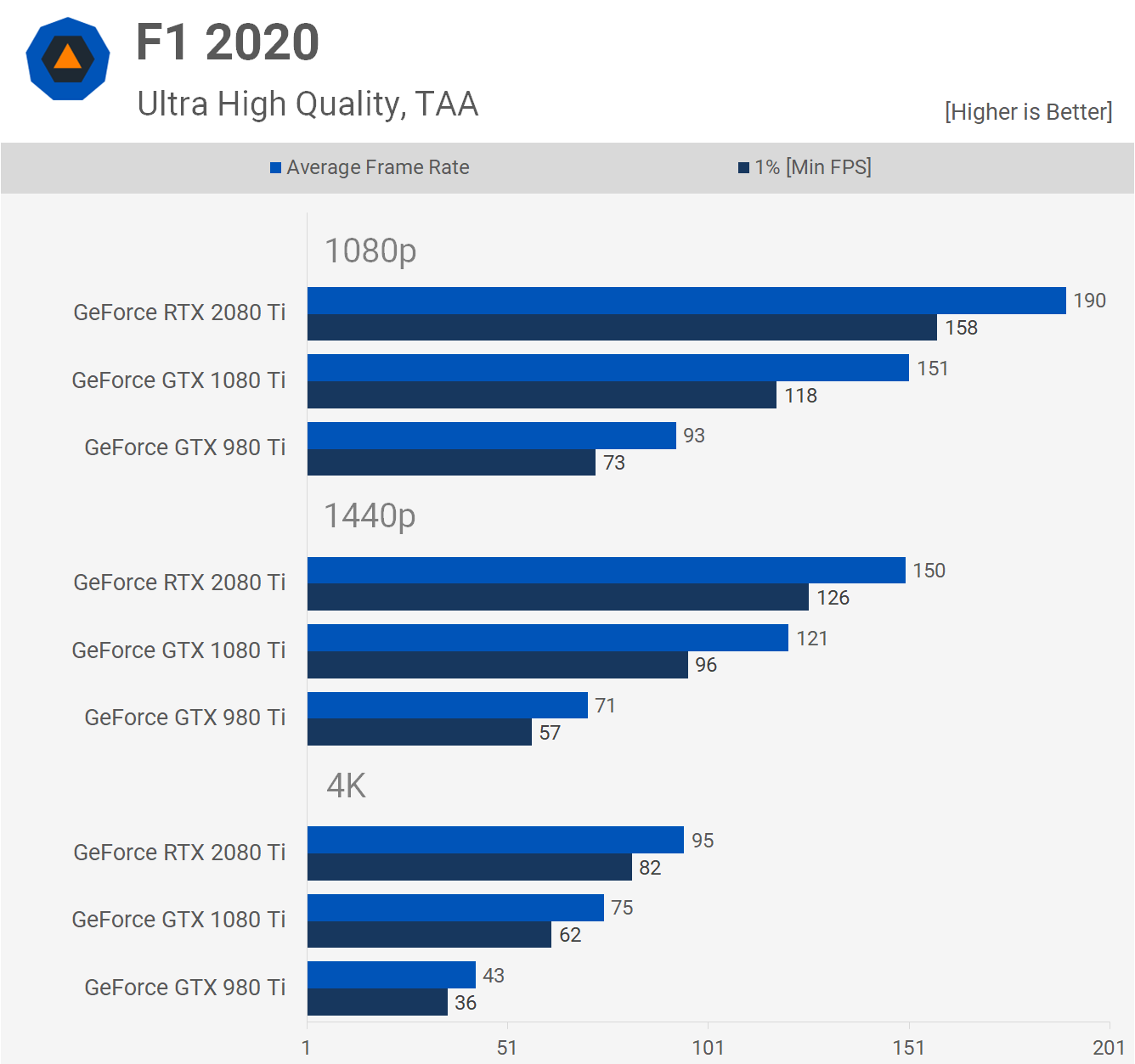

F1 2020 was tested using DirectX 12 and we're seeing mild performance gains for the 2080 Ti. At 1440p it's just 24% faster than the 1080 Ti and while that is usually what we'd consider a big margin, it's not when you factor in the 70% increase in price. Moreover, the 1080 Ti was 70% faster than the 980 Ti.

Similar margins were seen again at 4K. The 2080 Ti was 27% faster than the 1080 Ti, while the 1080 Ti was 74% faster than the 980 Ti.

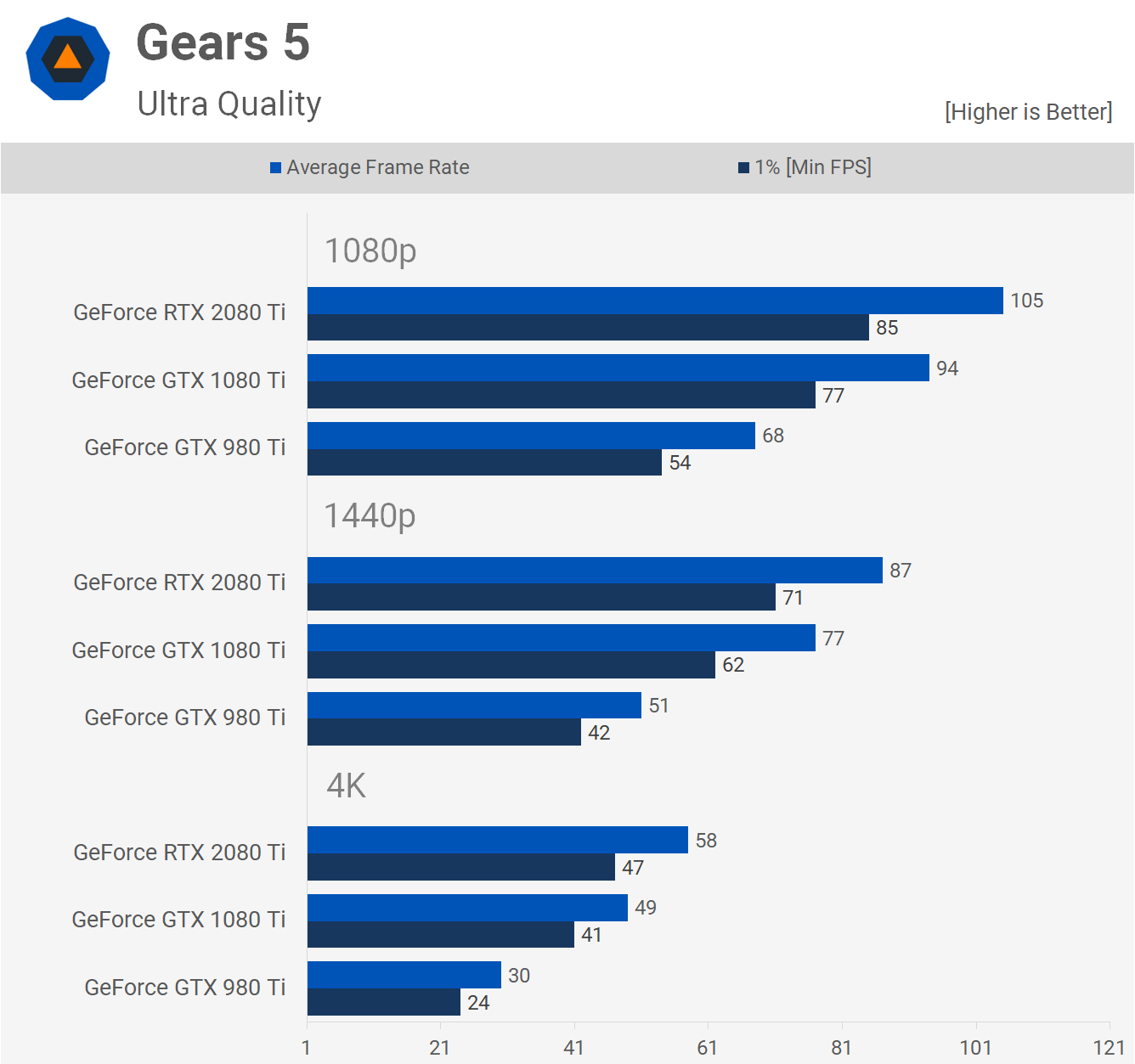

The Gears 5 performance is particularly disappointing for the 2080 Ti, as it was just 13% faster than the 1080 Ti at 1440p and 18% faster at 4K. Those margins pale in comparison to what we see when going from the 980 Ti to the 1080 Ti, where the Pascal based GPU was 51% faster at 1440p and 63% faster at 4K.

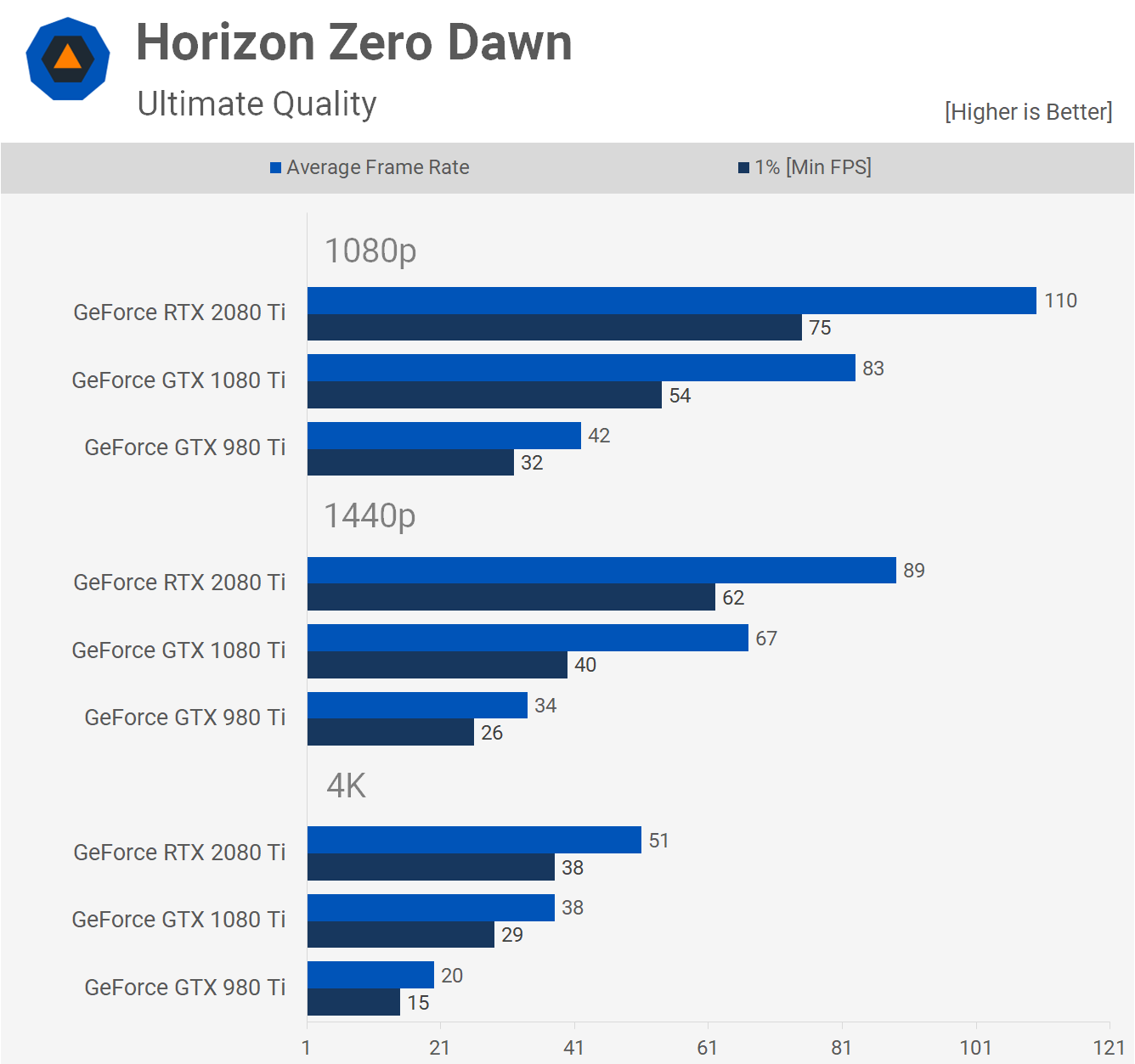

Moving on to Horizon Zero Dawn, we have one of the newest and most visually impressive games to be released this year. We're seeing a 33% performance improvement for the 2080 Ti at 1440p over the 1080 Ti, so one of the better margins seen so far.

The 1080 Ti was a stunning 97% faster than the 980 Ti. Some of that margin is probably due to a lack of driver optimization for the Maxwell generation, but a lot of it also has to do with the aging architecture and the limited VRAM buffer.

Then at 4K the 2080 Ti was 34% faster than the 1080 Ti, and more crucially it took the average frame rate from 38 fps up to a much more playable 51 fps.
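The two 4K results where we quoted actual frame rates (Shadow of the Tomb Raider and Horizon Zero Dawn) make it easy to see how an fps pair maps to a percentage margin and to frame time. A small sketch, with helper names of our own choosing:

```python
def uplift_pct(old_fps: float, new_fps: float) -> float:
    """How much faster the new result is, in percent."""
    return (new_fps / old_fps - 1) * 100.0


def frame_time_ms(fps: float) -> float:
    """Average time spent on each frame, in milliseconds."""
    return 1000.0 / fps


# 4K average frame rates quoted in the text: (GTX 1080 Ti, RTX 2080 Ti).
results_4k = {
    "Shadow of the Tomb Raider": (56, 67),
    "Horizon Zero Dawn": (38, 51),
}

for game, (fps_1080_ti, fps_2080_ti) in results_4k.items():
    print(
        f"{game}: +{uplift_pct(fps_1080_ti, fps_2080_ti):.0f}%, "
        f"{frame_time_ms(fps_1080_ti):.1f} ms -> {frame_time_ms(fps_2080_ti):.1f} ms per frame"
    )
```

The same percentage margin is worth more at low frame rates: going from 38 to 51 fps trims about 6.7 ms off every frame, whereas going from 56 to 67 fps trims about 2.9 ms.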

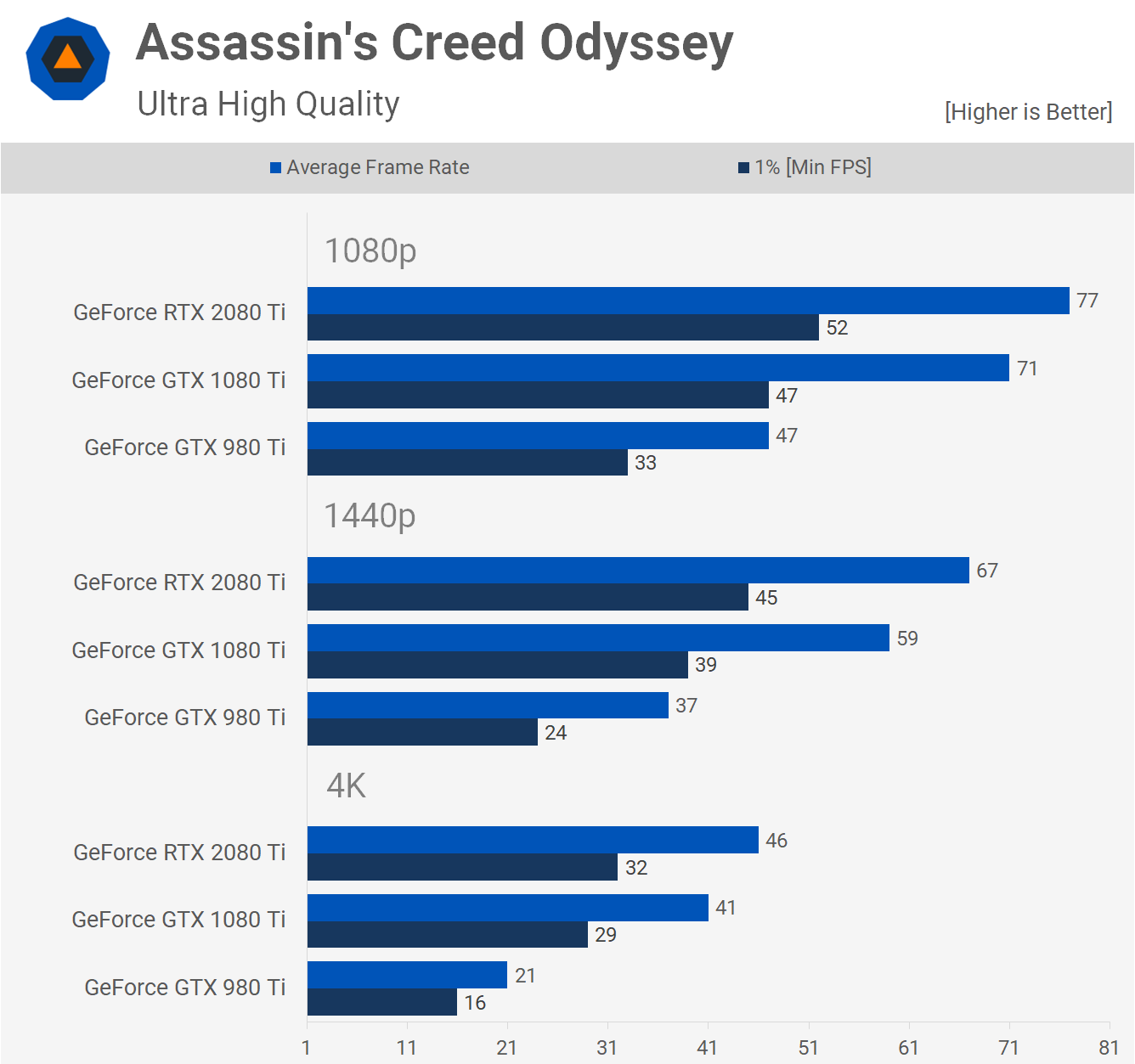

Assassin's Creed Odyssey is one of the older games included in this benchmark, released in late 2018. The game hasn't seen any major updates to include low-level API support and it's a good example of just how small the margin between the 1080 Ti and 2080 Ti often is in older titles.

Here the 2080 Ti was 14% faster at 1440p and 12% faster at 4K when compared to the 1080 Ti. Then the 1080 Ti was almost 60% faster than the 980 Ti at 1440p and 95% faster at 4K.

World War Z utilizes the Vulkan API and here we're back up to a 33% margin in favor of the 2080 Ti at 1440p and 4K, making this another of the larger performance gains over the 1080 Ti. We're also seeing a fairly typical 62% performance boost for the 1080 Ti over the 980 Ti at 1440p and 65% at 4K.

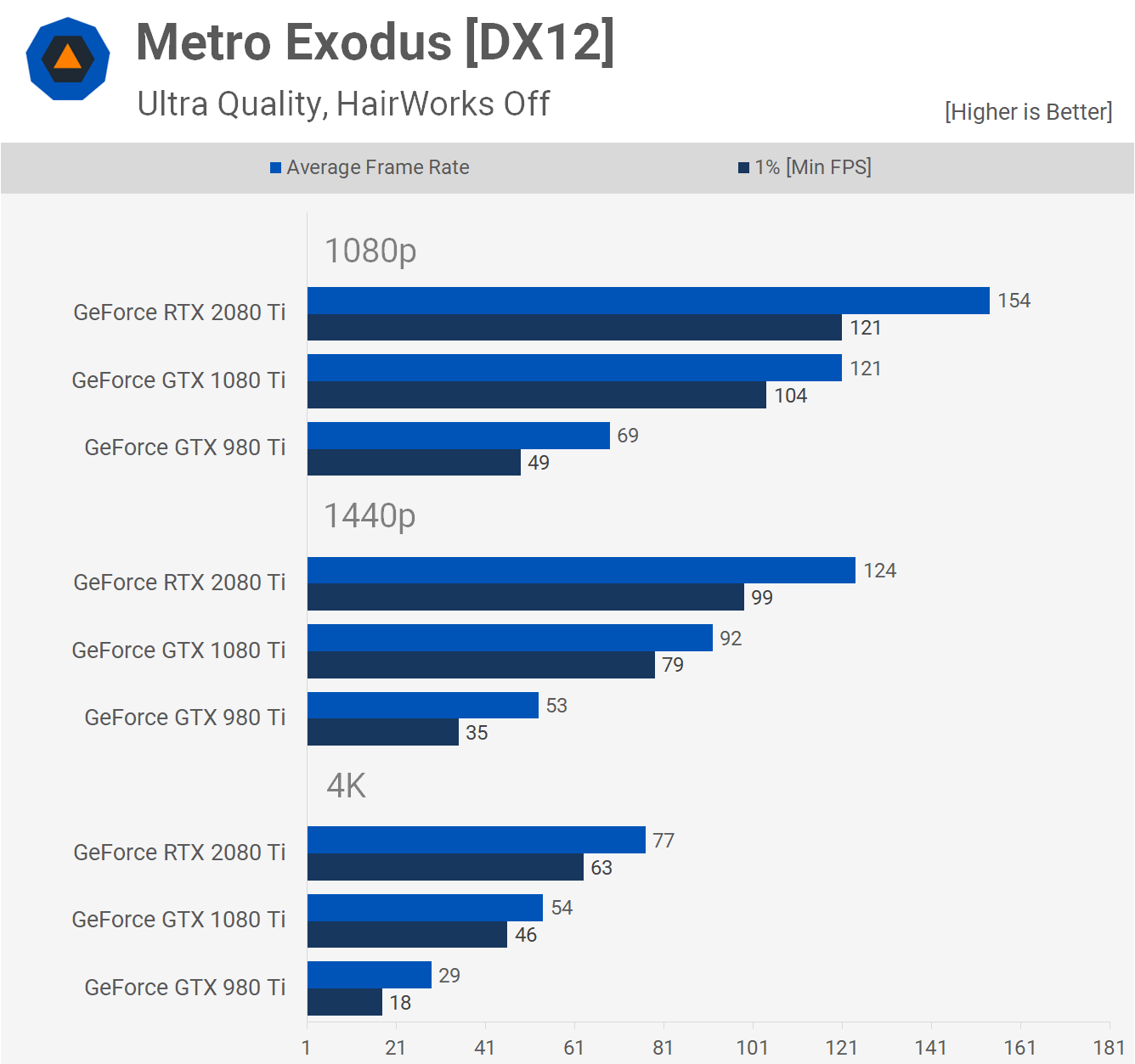

Metro Exodus was one of the first games to implement DLSS and ray tracing, so it's not surprising to see a 35% performance boost for the 2080 Ti over the 1080 Ti at 1440p. It's not the biggest performance increase that we've seen, but it's certainly on the larger side. Still, it's nothing like the 74% performance increase we see when comparing the 1080 Ti and 980 Ti at the same resolution.

In Resident Evil 3 we're seeing what we've come to recognize as pretty typical margins. The 2080 Ti was just 27% faster than the 1080 Ti at 1440p, though that margin did grow to 36% at 4K. The 1080 Ti on the other hand was 66% faster than the 980 Ti at 1440p and 64% faster at 4K.

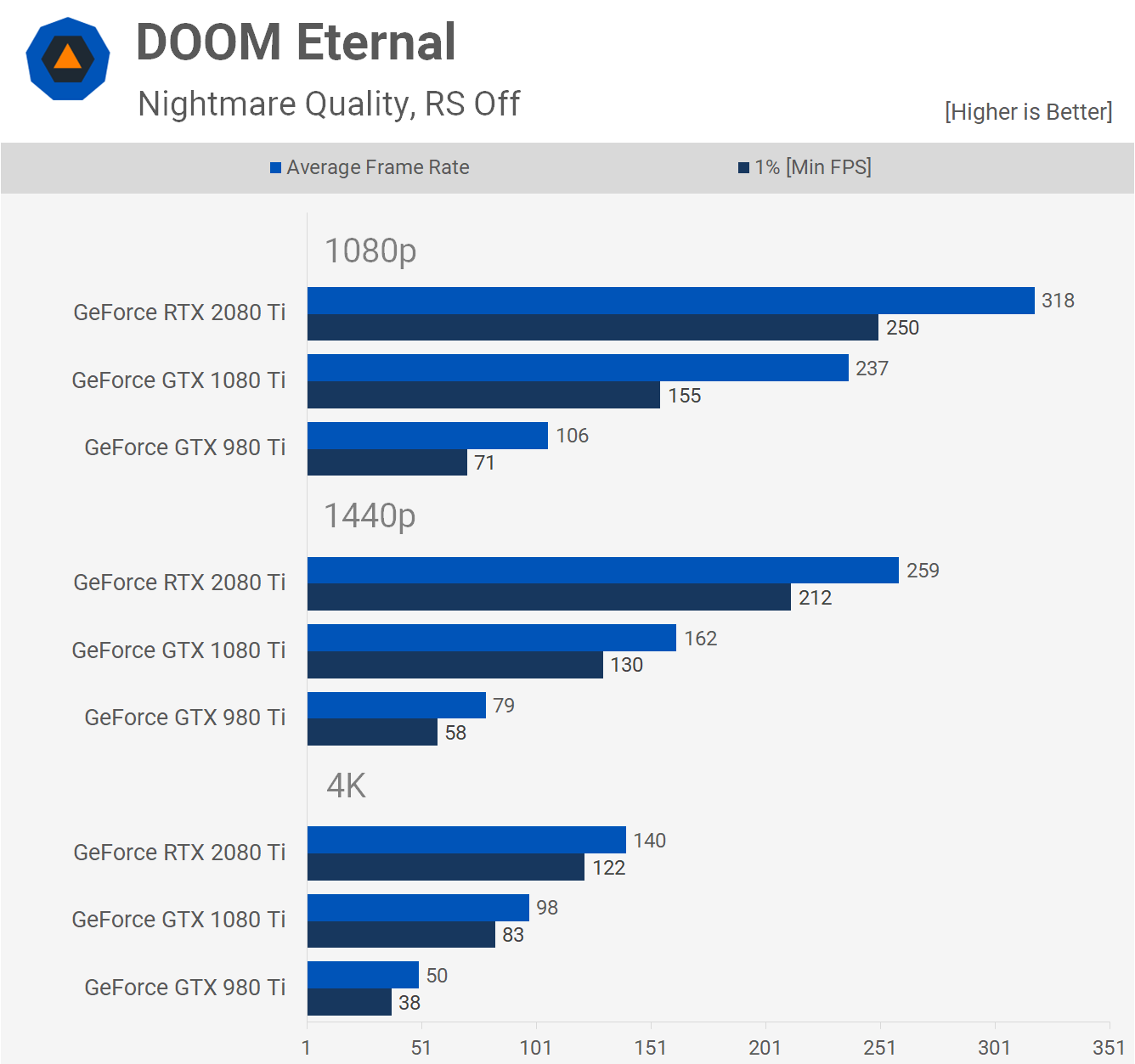

DOOM Eternal, like Rainbow Six Siege, is another example of a game that makes heavy use of AsyncCompute and this results in much stronger performance gains for the 2080 Ti than you'd normally expect to see. For example, at 1440p it was 60% faster than the 1080 Ti, though that margin was reduced to 43% at 4K.

The 1080 Ti was also significantly faster than the 980 Ti, at times doubling the performance, though we suspect VRAM capacity and driver optimization are the two main issues for the now five year old Maxwell part.

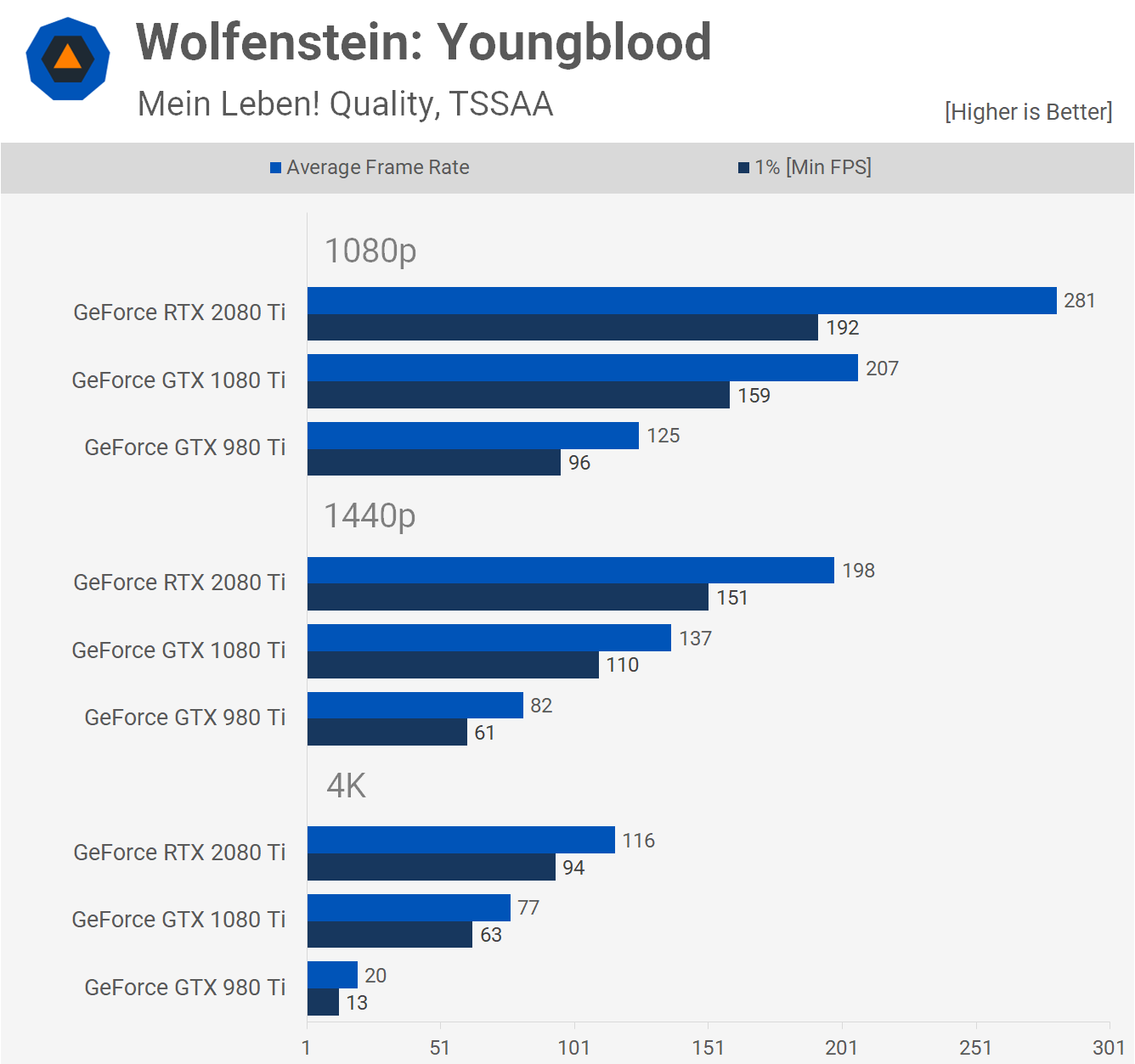

The performance gains offered by the 2080 Ti were also very impressive in Wolfenstein: Youngblood. Here the mighty Turing GPU was 45% faster than the 1080 Ti at 1440p and 51% faster at 4K.

Meanwhile, the 1080 Ti was 67% faster than the 980 Ti at 1440p and we see that margin blow out massively at 4K, as the 6GB VRAM buffer of the 980 Ti simply doesn't cut it here.

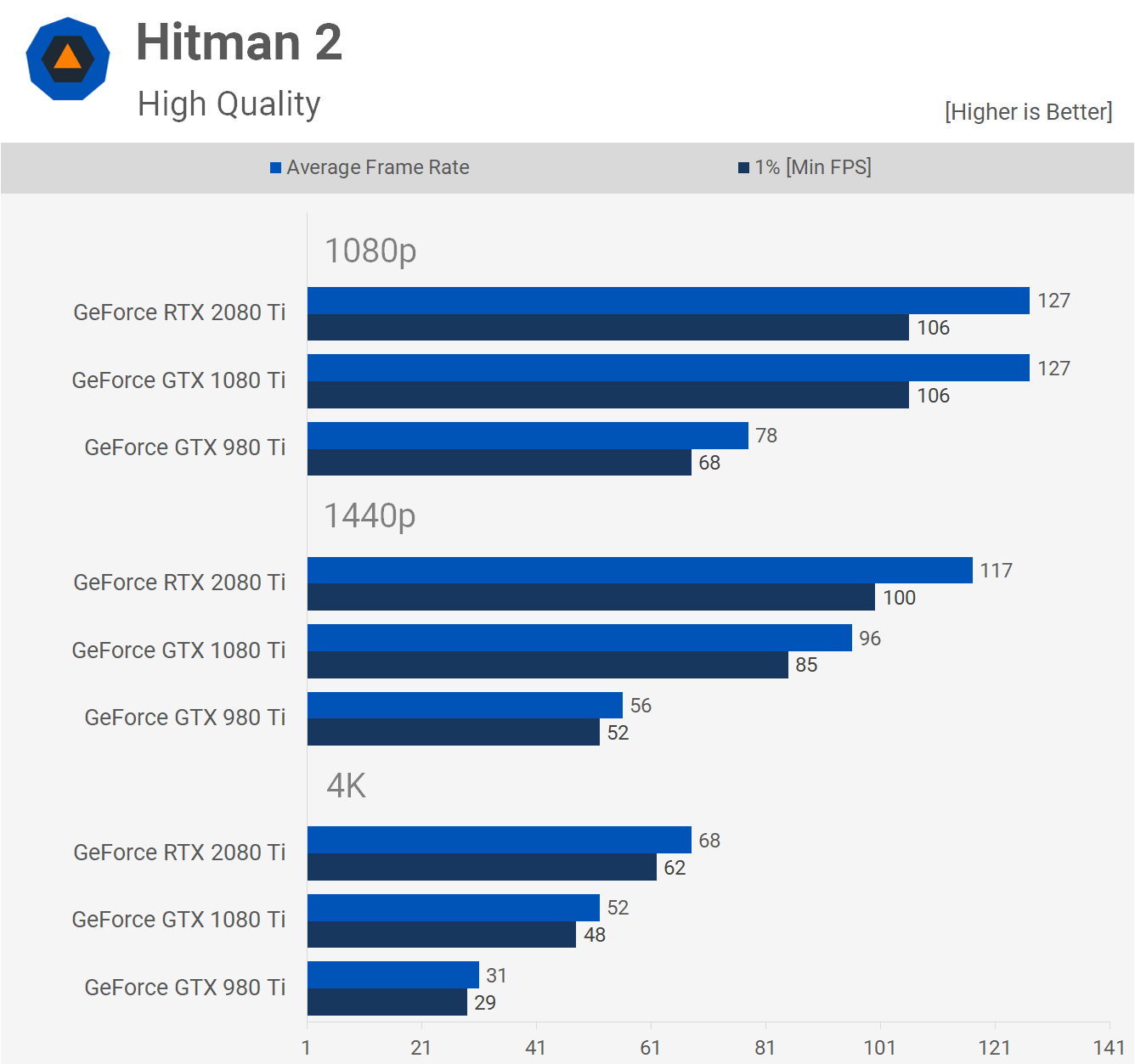

Finally we have Hitman 2, using DirectX 12. Here the 2080 Ti was just 22% faster than the 1080 Ti at 1440p and 31% faster at 4K. Then as you'd typically expect to see, the 1080 Ti was 71% faster than the 980 Ti at 1440p.

Performance Summary

There were no real surprises here. Two years ago we found the biggest gains for the 2080 Ti over the 1080 Ti were seen in Rainbow Six Siege and Wolfenstein, though in both instances we tested slightly different versions of those games. Back then we compared both GPUs in over 30 games and found the 2080 Ti was on average 23% faster at 1440p and 30% faster at 4K. Let's check out how they compare in today's 14 game sample...

Based on our new data, the RTX 2080 Ti was 33% faster than the 1080 Ti at 1440p on average. That's 10 percentage points more than what we saw two years ago at launch, although that earlier test used a larger sample of games, many of which were older titles, even at that time.

At 4K the gap between the two has grown to 35%. Even at 1080p where we were at times CPU limited, the margin was still 26%, very similar to what was seen in the previous test.
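For readers who want to sanity check an average like this, the sketch below averages the 1440p margins quoted for each game in the benchmark section. This is an illustration of the method only. It works from the rounded percentages in the text rather than the underlying frame rates, so it need not match the summary figure exactly. Both a simple mean and a geometric mean are shown, and the choice between them is ours.

```python
from math import prod

# RTX 2080 Ti lead over the GTX 1080 Ti at 1440p, in percent,
# as quoted per game in the benchmark section.
margins_1440p = {
    "Death Stranding": 26,
    "Microsoft Flight Simulator 2020": 22,
    "Shadow of the Tomb Raider": 20,
    "Rainbow Six Siege": 46,
    "F1 2020": 24,
    "Gears 5": 13,
    "Horizon Zero Dawn": 33,
    "Assassin's Creed Odyssey": 14,
    "World War Z": 33,
    "Metro Exodus": 35,
    "Resident Evil 3": 27,
    "DOOM Eternal": 60,
    "Wolfenstein: Youngblood": 45,
    "Hitman 2": 22,
}

values = list(margins_1440p.values())
n = len(values)

# Simple mean of the percentage margins.
arithmetic_mean = sum(values) / n

# Geometric mean of the performance ratios, converted back to a percentage.
# This is less sensitive to a couple of outlier titles.
geometric_mean = (prod(1 + m / 100 for m in values) ** (1 / n) - 1) * 100

print(f"Games: {n}")
print(f"Arithmetic mean margin: {arithmetic_mean:.1f}%")
print(f"Geometric mean margin:  {geometric_mean:.1f}%")
```

The simple mean of the quoted margins works out to 30%, a little under the 33% summary figure. That gap presumably comes from rounding and from the summary being calculated on the charted frame rates, which aren't reproduced in the text.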

Something to Look Forward to

In terms of performance, not a lot has changed since the release of GeForce RTX two years ago. We've seen few games come along where Turing GPUs are much faster than their Pascal equivalents, and we've also seen very few games that support ray tracing and almost no examples where it works well, at least in our opinion.

The initial implementation of DLSS died a slow and blurry death, but to Nvidia's credit they were able to reinvent the technology and make it something quite special with DLSS 2.0. It's unfortunate that the list of supported games remains very limited. It's our opinion that ray tracing and DLSS are nice bonus features, but not key selling points of the RTX product line. That's not how Nvidia sold them to gamers, though, and the result was a case of over promising and under delivering.

We don't want to get bogged down in how good ray tracing looks or how well it works, but regardless of your opinion, there's no denying that the game support list is quite limited. There's therefore a good chance that none of the games you've wanted to play on your GeForce RTX graphics card actually supported any RTX features.

In that case, it's really all about raw performance, and how much bang for your buck Turing delivered. When compared to the previous Pascal-based GeForce 10 series, the answer hasn't changed: not much. For the most part the RTX 2080 and GTX 1080 Ti are evenly matched and both command a $700 MSRP.

While we could observe a few instances where the RTX 2080 Ti was ~50-60% faster than the 1080 Ti, that's not quite enough to justify the 70% increase in price, especially when talking about GPUs of different generations. In most games that margin is closer to 20-30%, and frankly we'd have hoped that would be a worst case for Turing vs. Pascal at the same price point.

Is it fair to say Turing was a disappointment? That was my opinion upon release and it's still my opinion two years later, and I'd have loved to be proven wrong. At one point we thought Nvidia would be forced to replace the GeForce 20 series much sooner than they have, but our mistake was placing too much trust in AMD's ability to hit them with a range of Radeon 7nm GPUs. Radeon VII, anyone?

Ultimately, the RTX 2080 Ti was a justifiable purchase for gamers looking for the fastest graphics card money could buy, and it did enable a level of performance that was previously unseen at 4K. In a number of the games we tested today, the 2080 Ti was only 30 to 40% faster than the 1080 Ti at 4K, but that was the difference between laggy frame rates and silky smooth gameplay.

We're now at the end of the GeForce 20 series chapter. It's time to start a new one, and it looks like Nvidia has some pretty incredible GPUs in store for us. Fingers crossed this upcoming release is as exciting as we think it's going to be, and here's hoping for fiercer competition from AMD, and eventually Intel, in the high performance space.