Today we're revisiting the battle between the most reasonable previous-gen high-end GPUs, the AMD Radeon RX 6800 XT and Nvidia GeForce RTX 3080 10GB, and we'll be doing so in 50 games at 1440p and 4K.

Before we get into the blue bar graphs, a quick refresher on this GPU battle:

Back in early 2021, we compared them in a 30-game benchmark and found that the Radeon 6800 XT was on average 5% faster at 1080p, identical at 1440p, and 6% slower at 4K. Then in 2022 we re-tested with 50 games and found the GeForce RTX 3080 was 3% slower at 1080p, 1% faster at 1440p and 7% faster at 4K, margins fairly similar to those of the 2021 test.

However, both comparisons came with a few caveats. For example, if you're interested in ray tracing performance, the RTX 3080 is generally much faster than the averages would suggest. The Nvidia GPU also supports DLSS, the superior upscaling technology.

On the other hand, the Radeon 6800 XT packs more VRAM (16GB), though to date the RTX 3080 has come away almost unscathed with its 10GB buffer.

This 2023 comparison again includes 50 games, but this time with more ray tracing configurations, which should swing things further in favor of the GeForce RTX 3080. For those of you not interested in ray tracing performance, we'll also make a comparison using just the rasterized data. We've also explored VRAM usage on the RTX 3080, and we'll get into that towards the end of this review.

We should also note that this review isn't intended to be a buying guide. Rather, the point of this revisit is to see how well each product has aged.

If you're in the market for either of these products, the Radeon 6800 XT is still available new at around $530, whereas the RTX 3080 is mostly out of stock or inexplicably expensive new, and only approaches the Radeon's pricing on the second-hand market.

As a general reference, the RTX 3080's MSRP was $700, while the Radeon 6800 XT's MSRP was $650. It's crazy to note that when we last compared these two GPUs in early 2022, both were priced around $1,200, so things have certainly improved since the cryptocurrency boom. That said, you could also argue that the GPU market never fully recovered, and if AMD and Nvidia get their way, it never will, so keep that in mind, gamers.

For today's testing, we used a Ryzen 9 7950X3D with its second CCD disabled to ensure maximum performance in all games. The CPU was installed on a Gigabyte X670E Aorus Master motherboard with 32GB of DDR5-6000 CL30 memory.

As usual, we'll go over the data for around a dozen titles before jumping into the big breakdown graphs. The resolutions of interest are 1440p and 4K, so let's get into it…

Benchmarks

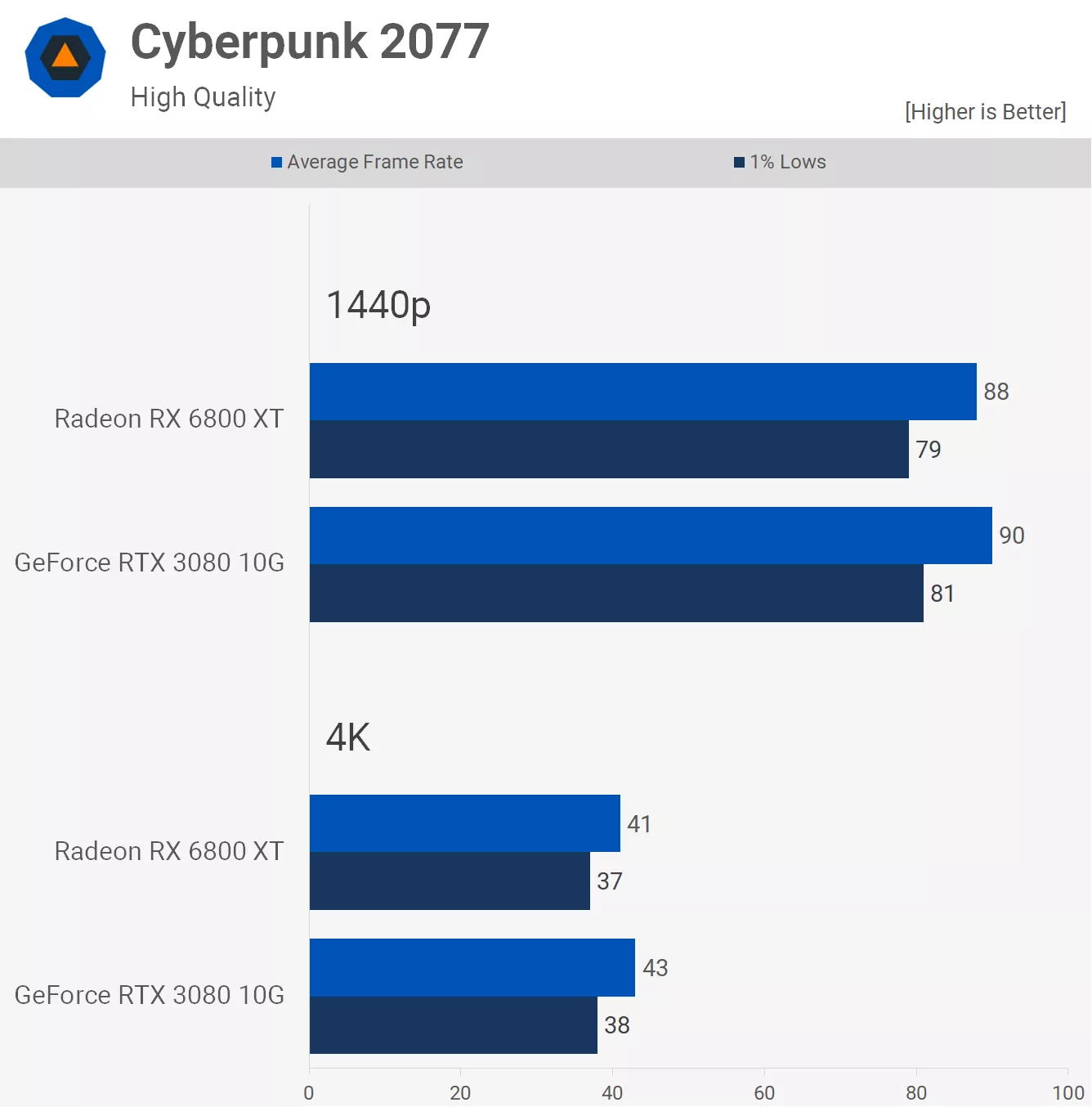

First up we have Cyberpunk 2077 using the high preset with ray tracing disabled, and as you can see, performance between these two GPUs is basically identical. The RTX 3080 is 2% faster at 1440p and 5% faster at 4K, a difference of just a few frames at each tested resolution.

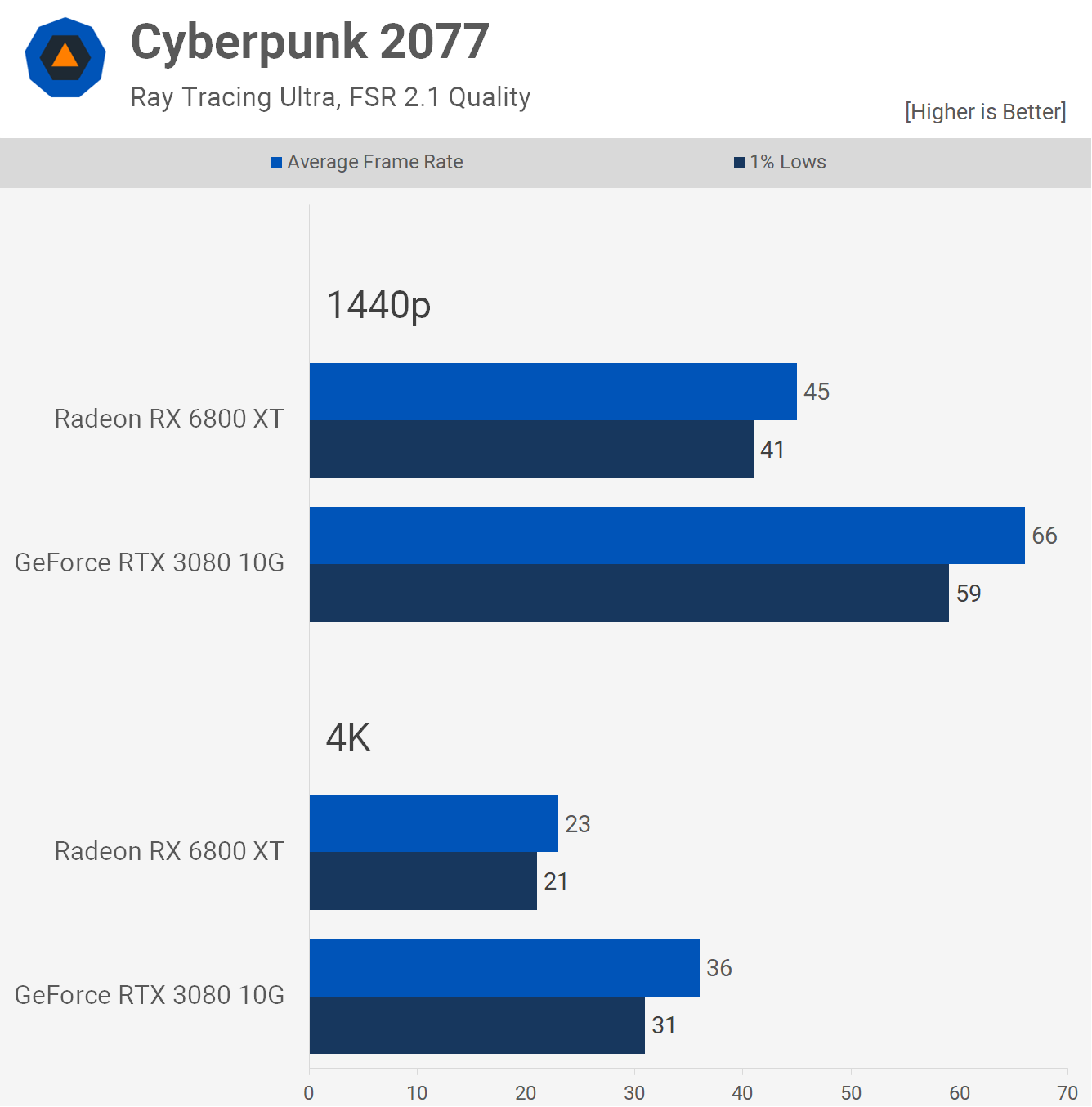

If we enable ray tracing, the RTX 3080 finds itself comfortably ahead, rendering 47% more frames at 1440p and 57% more at 4K, both massive wins. However, we'd only consider the 1440p data relevant, as the RTX 3080 averaged 66 fps there, which is highly playable. Also note that while FSR 2 was used here, scaling with DLSS on the RTX 3080 is identical.
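The margins quoted throughout are relative differences in average frame rate. Below is a minimal Python sketch, assuming the usual definition (the faster card's fps divided by the slower card's fps, minus one); the review doesn't spell out its exact formula, and the helper names are hypothetical. It uses the Cyberpunk 2077 figures above to back out the 6800 XT's implied 1440p average, which is an estimate derived from rounded numbers, not a measured result.

```python
def percent_faster(fps_a: float, fps_b: float) -> float:
    """How much faster GPU A is than GPU B, in percent (negative means slower)."""
    return (fps_a / fps_b - 1.0) * 100.0


def implied_fps(fps_faster: float, margin_percent: float) -> float:
    """Back out the slower card's average fps from the faster card's fps and the quoted margin."""
    return fps_faster / (1.0 + margin_percent / 100.0)


# Cyberpunk 2077 with ray tracing at 1440p: RTX 3080 averaged 66 fps with a 47% lead.
rx6800xt_estimate = implied_fps(66.0, 47.0)
print(f"Implied RX 6800 XT average: {rx6800xt_estimate:.1f} fps")
print(f"RTX 3080 vs RX 6800 XT: {percent_faster(66.0, rx6800xt_estimate):.0f}%")
print(f"RX 6800 XT vs RTX 3080: {percent_faster(rx6800xt_estimate, 66.0):.0f}%")
```

Note the asymmetry this definition creates: if the RTX 3080 renders 47% more frames, the 6800 XT is about 32% slower, not 47% slower, so "X% faster" and "X% slower" aren't interchangeable.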

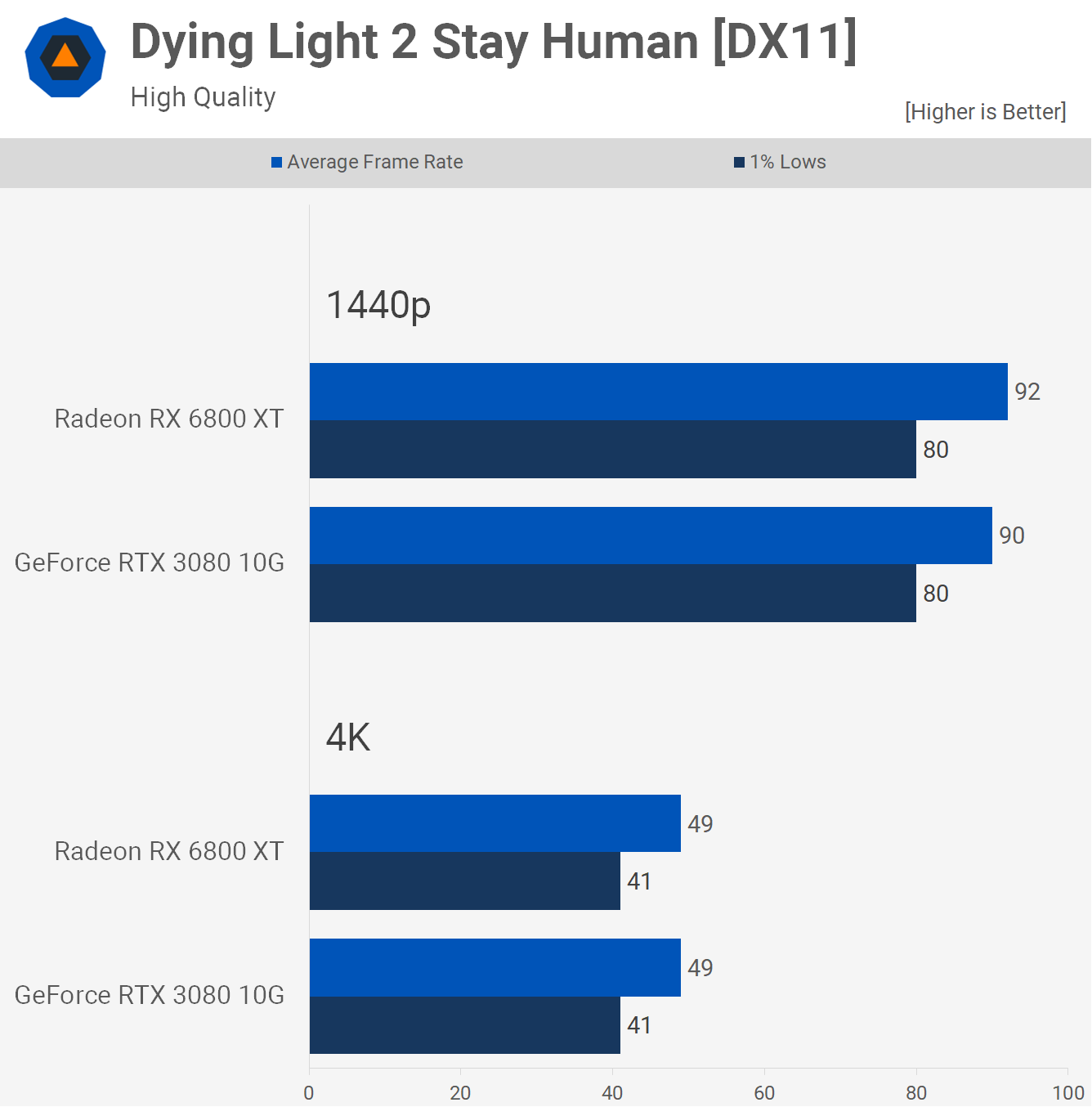

Dying Light 2 is another title tested with and without ray tracing. First up is the high quality preset, which doesn't use ray tracing and forces the DX11 API. Here performance between these two GPUs is essentially identical: the 6800 XT was just 2% faster at 1440p, and both rendered 49 fps at 4K.

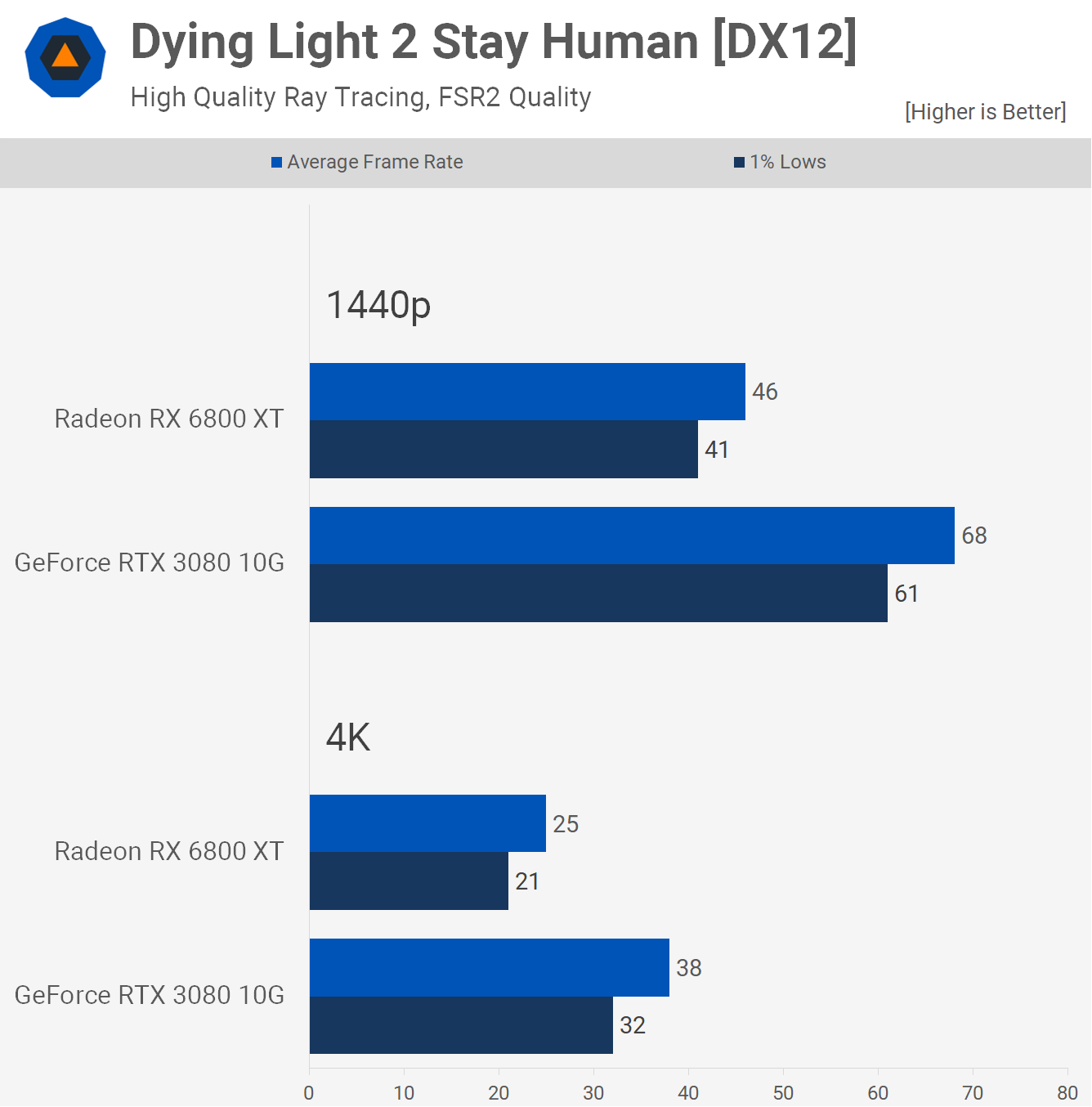

As we found in Cyberpunk 2077, enabling ray tracing hands the RTX 3080 a huge performance advantage thanks to its more mature RT support. The RTX 3080 was 48% faster at 1440p and 52% faster at 4K.

In our opinion, only the 1440p data is worth noting, as the RTX 3080 delivered a playable 68 fps there, compared to just 38 fps at 4K. Also, you wouldn't enable RT on the Radeon 6800 XT in this title.

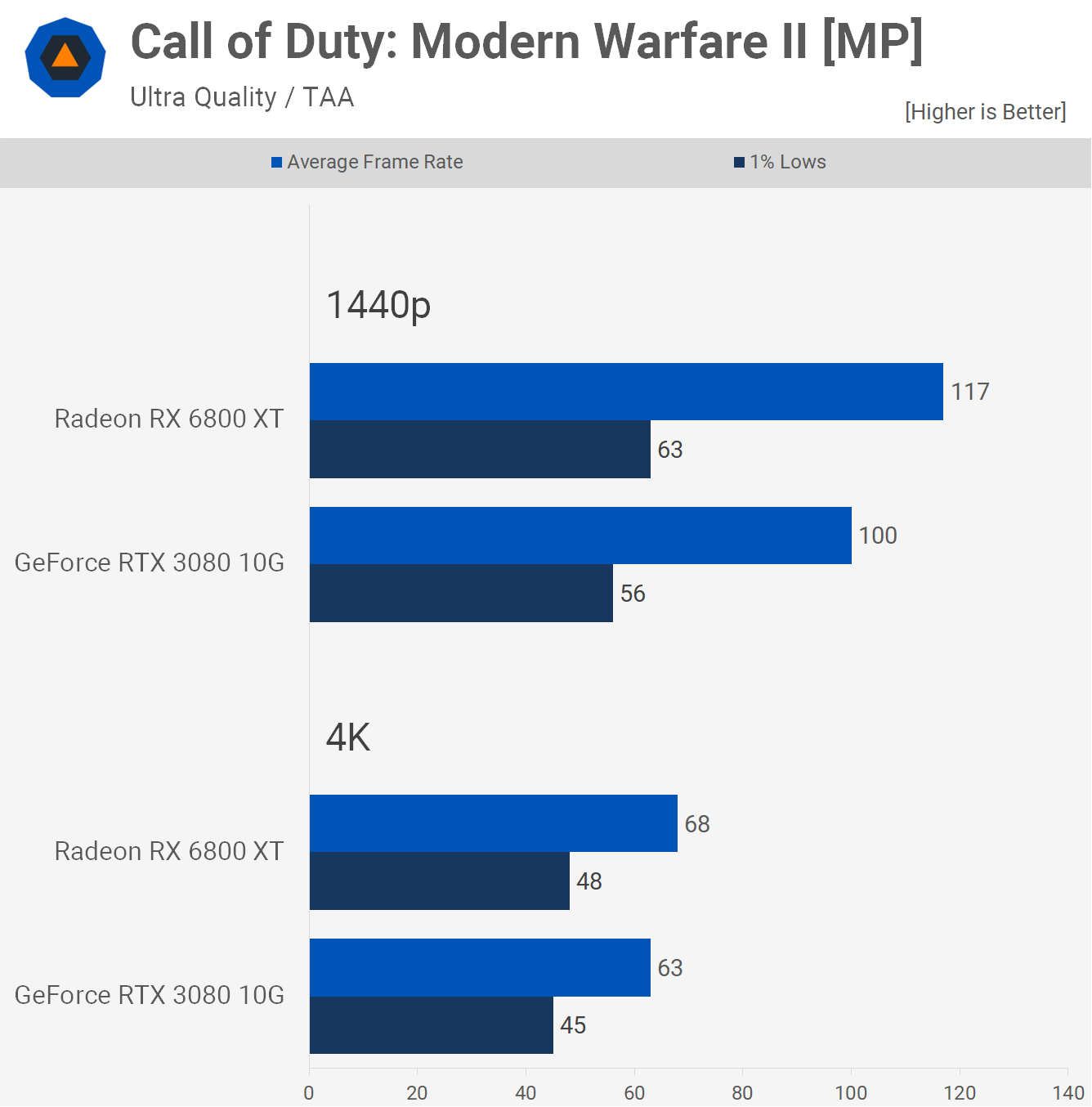

Next up is Call of Duty: Modern Warfare II, a title that favors the Radeon GPU: using the ultra high preset, the 6800 XT delivered 17% more frames at 1440p and 8% more at 4K, and as we've found in the past, scaling is much the same with the lower quality presets.

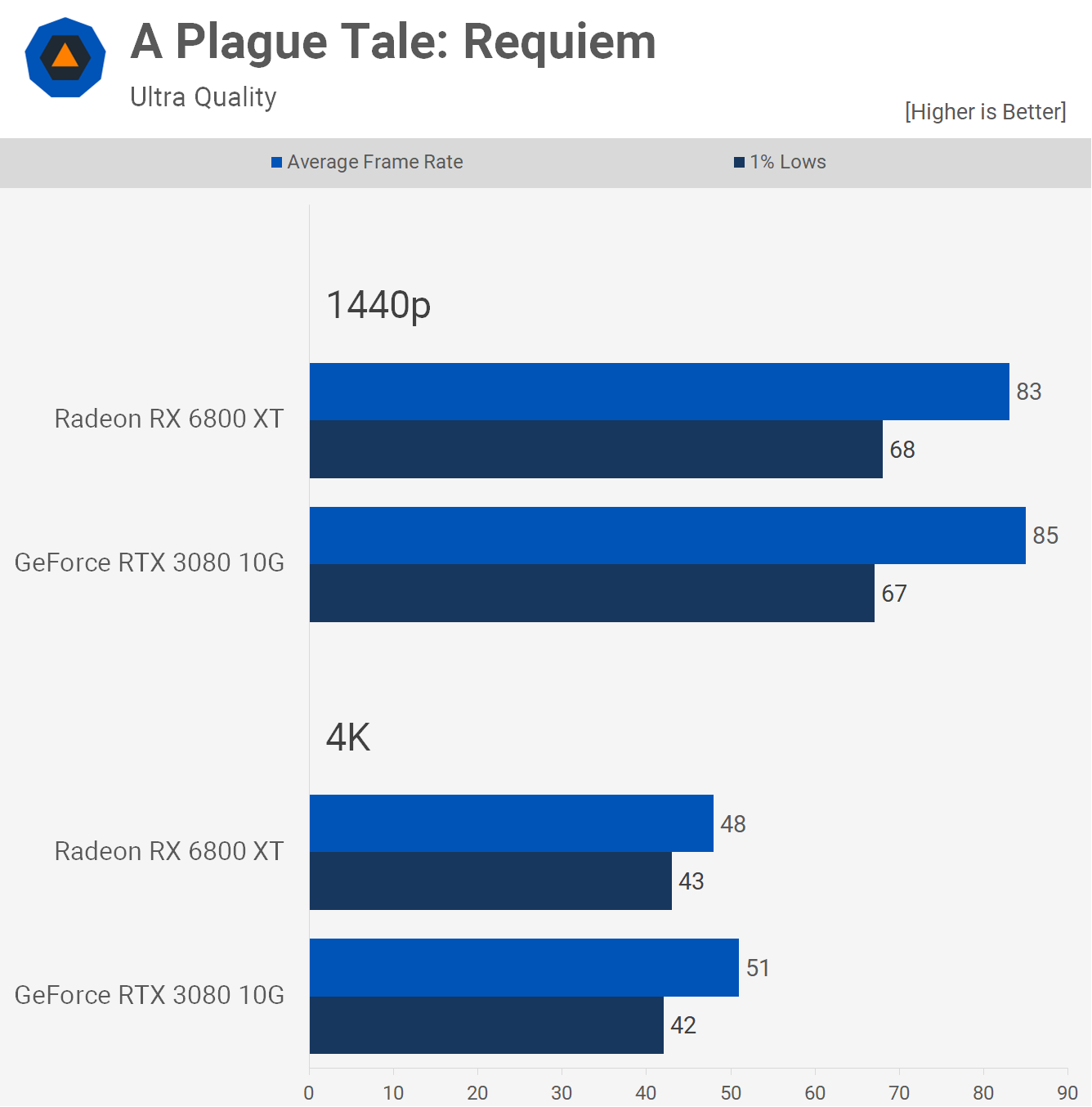

A Plague Tale: Requiem was also tested using the ultra quality preset, and performance is much the same between these two GPUs. The RTX 3080 was a few frames faster at each tested resolution, but overall performance and the gaming experience were the same.

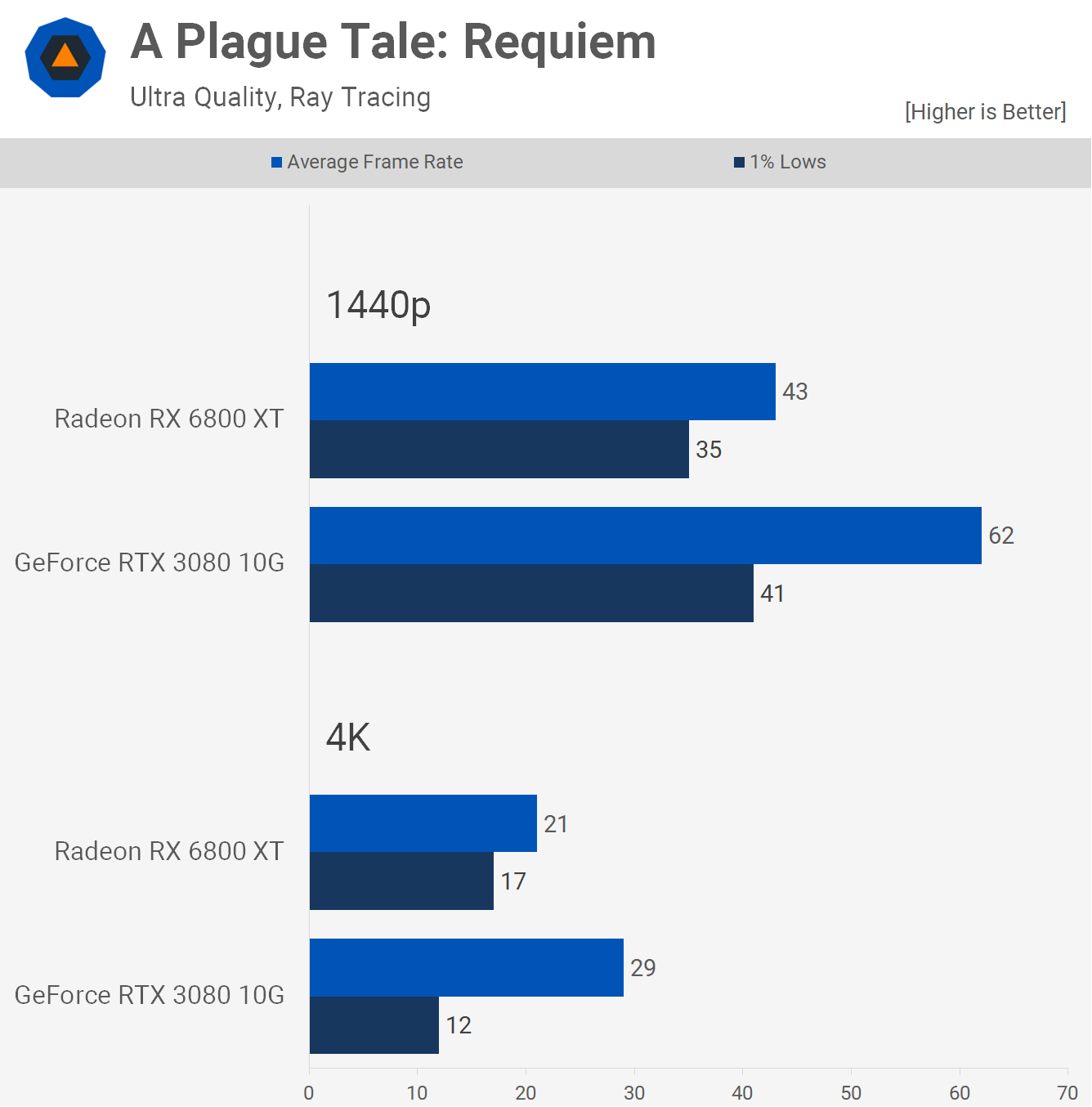

We went back and checked ray tracing performance to see whether the game fit within the RTX 3080's 10GB VRAM buffer. At 1440p it used 9.6GB and played at 60-70 fps, but 4K was too much, requiring 13GB, which resulted in stuttering on the 3080.

That said, this was of no real consequence for this matchup, as the Radeon 6800 XT didn't have enough horsepower to render playable frame rates anyway, despite having enough VRAM.

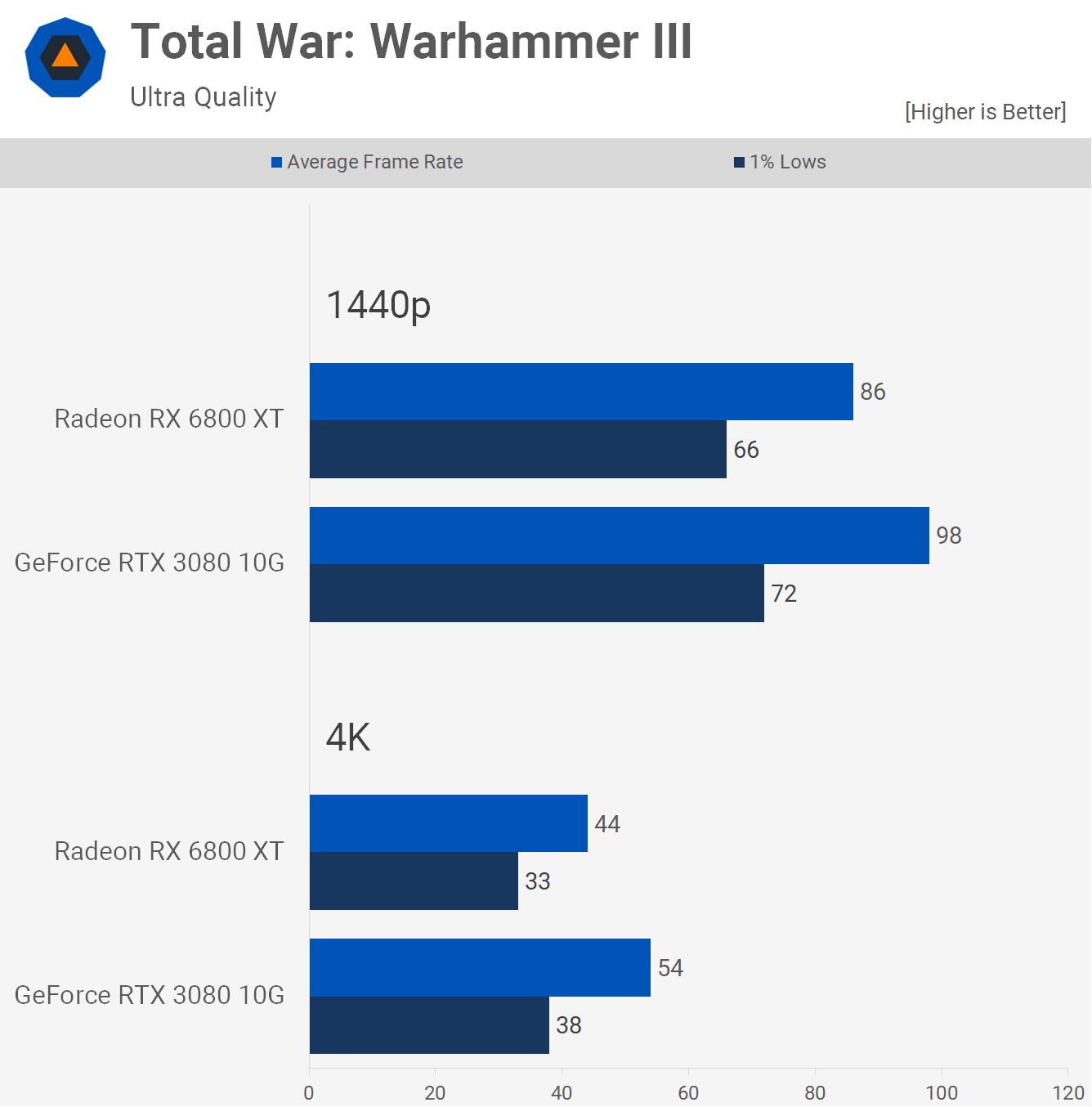

Next up is Total War: Warhammer III, which has never been a good title for Radeon GPUs. Here the GeForce RTX 3080 was 14% faster at 1440p and 23% faster at 4K, both substantial margins and clear wins for the Nvidia GPU.

The 4K performance will be particularly noticeable as the RTX 3080 delivers a reasonable experience here, whereas the 44 fps on average from the Radeon 6800 XT will feel very laggy.

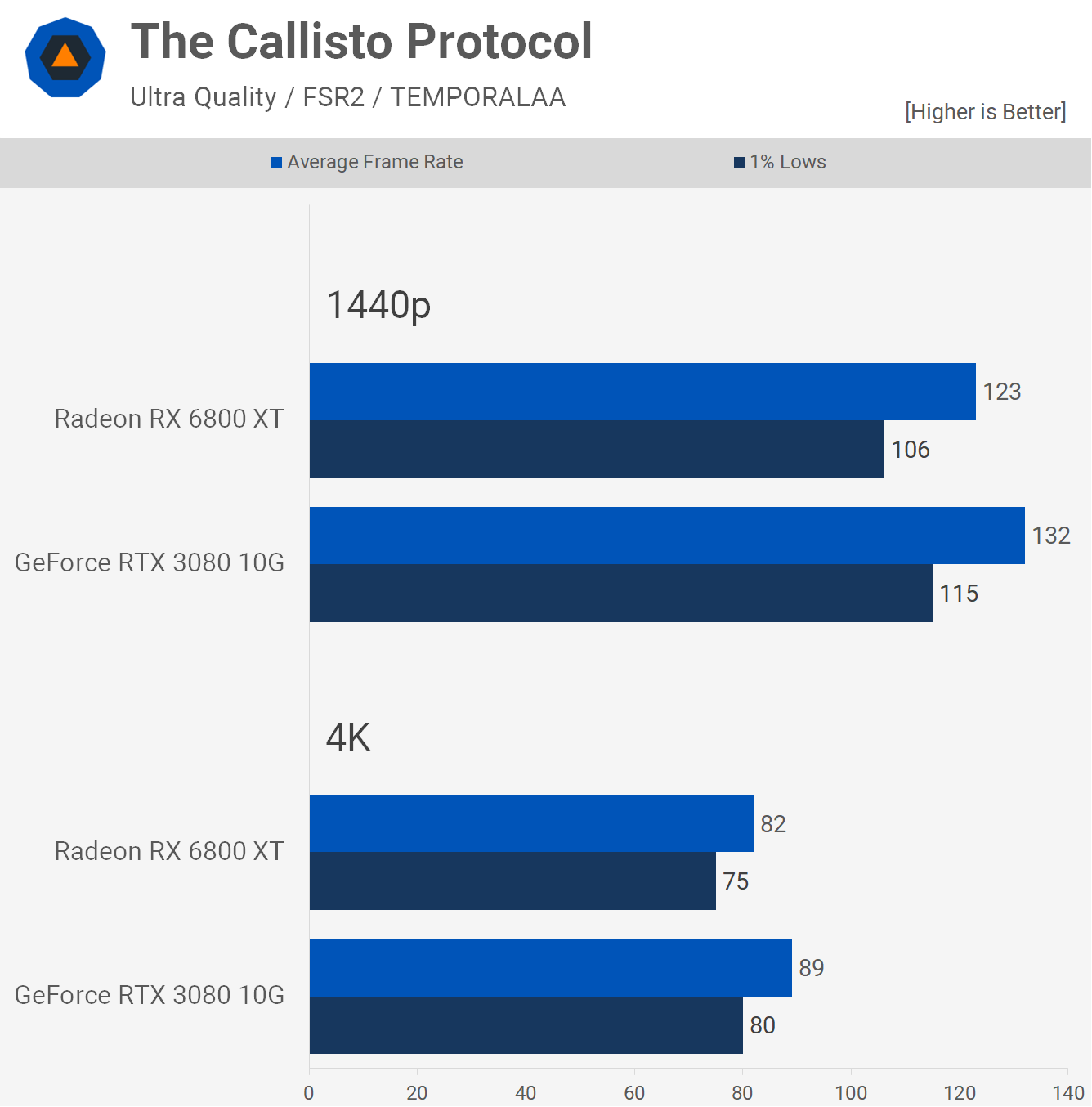

In The Callisto Protocol, the GeForce RTX 3080 was 7% faster than the Radeon 6800 XT at 1440p and 9% faster at 4K. Not a massive margin, but the GeForce was clearly faster at both tested resolutions.

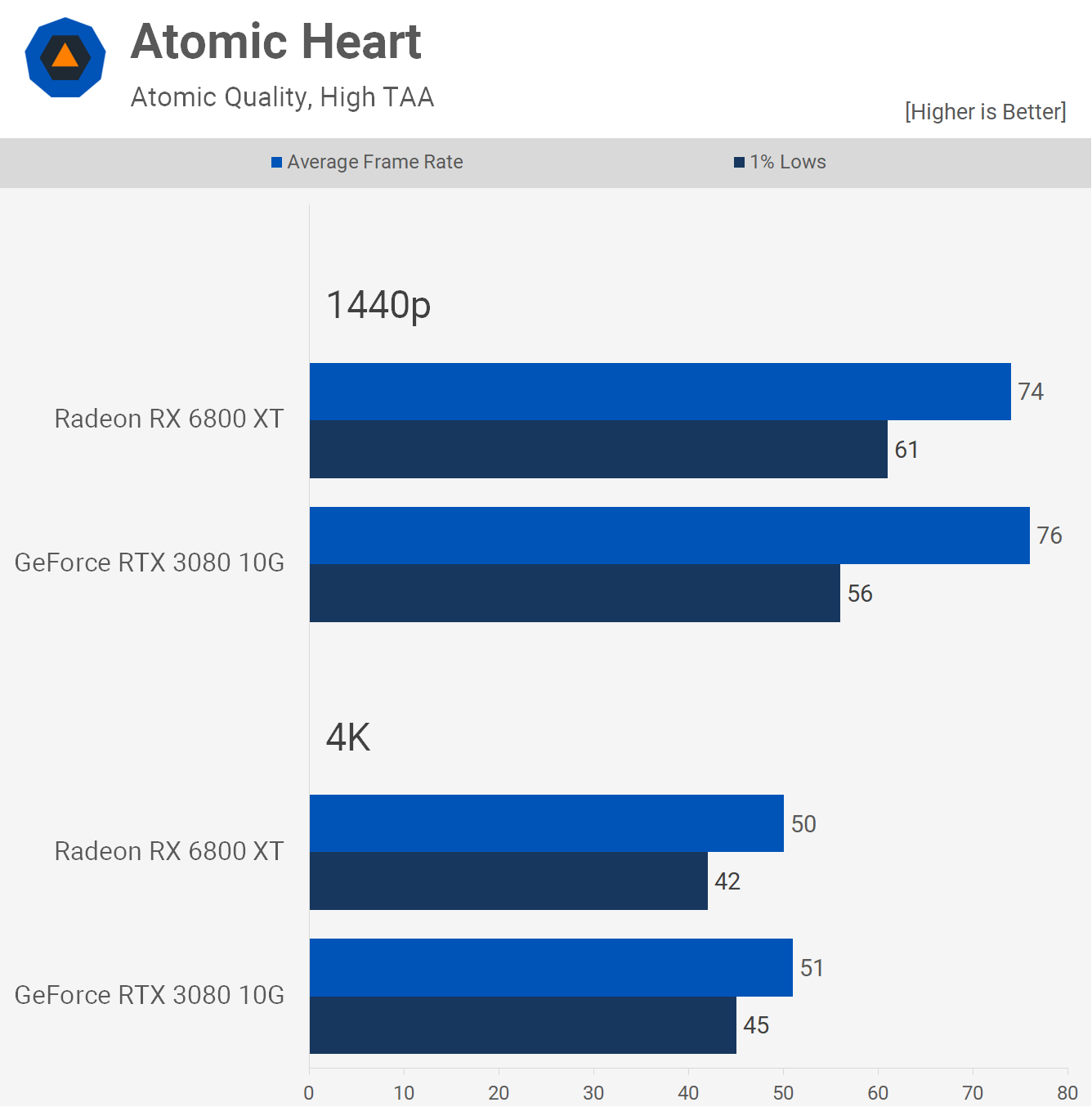

One of the newest games included in our 50 game benchmark is Atomic Heart and here the Radeon 6800 XT and GeForce RTX 3080 are evenly matched, with the GeForce nudging ahead by just a frame or two.

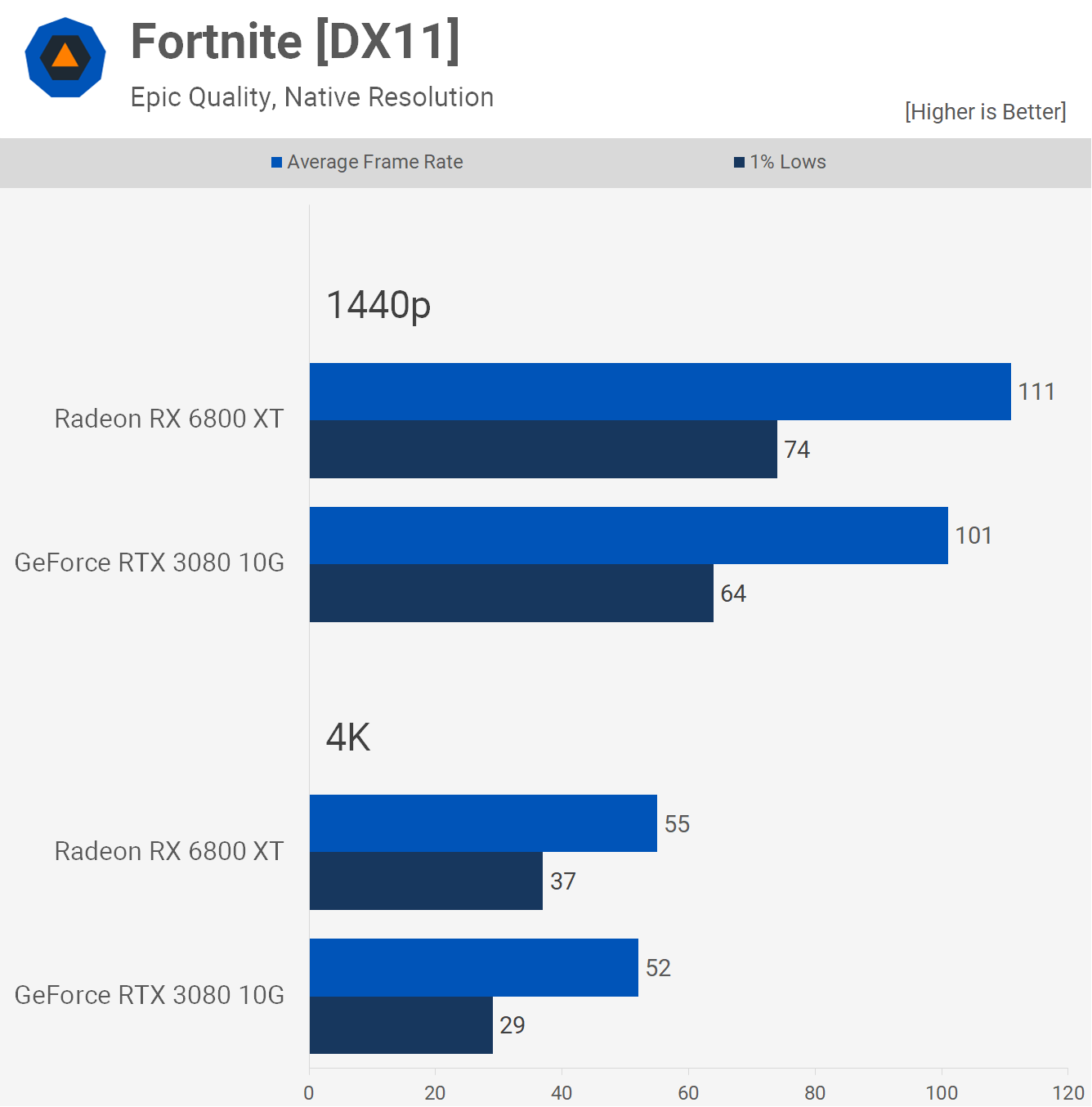

Next we have Fortnite, starting with the DirectX 11 mode, which generally delivers the best results if you're not heavily CPU limited. The game's performance mode also uses the DX11 API.

Under these conditions, the Radeon 6800 XT was 10% faster at 1440p and 6% faster at 4K, though its 1% lows were almost 30% better.

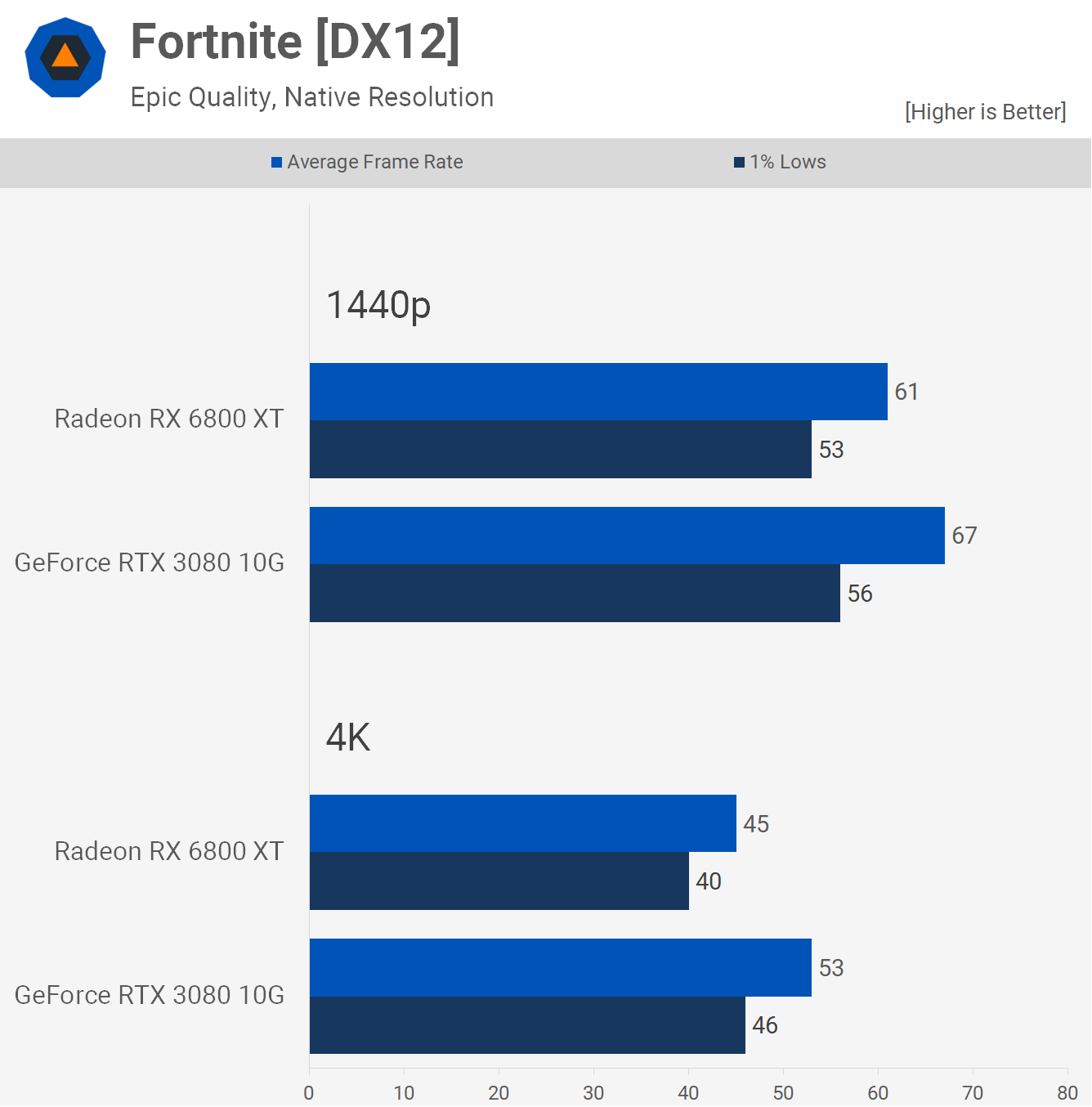

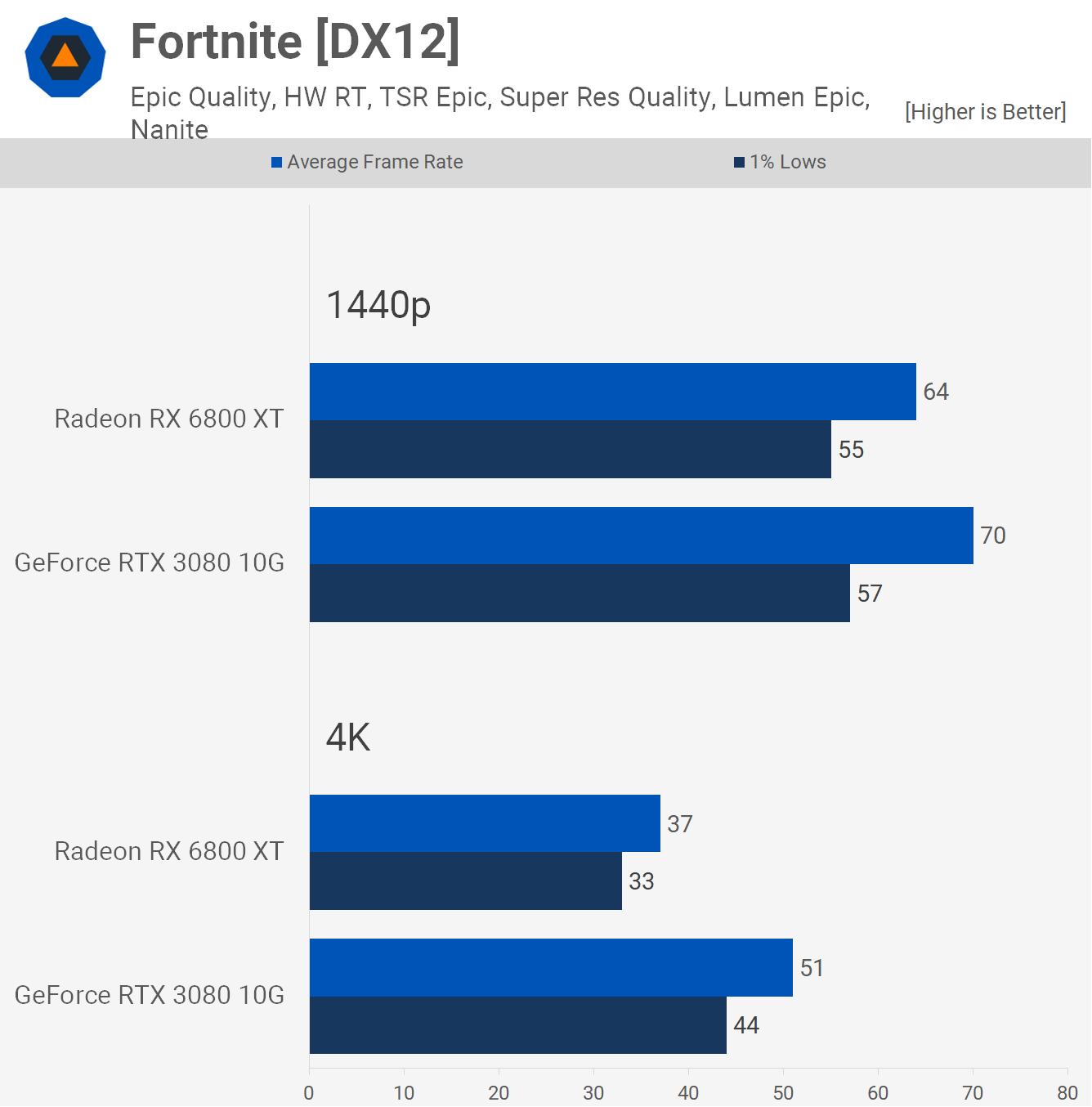

Switching to DX12 reduces performance, and now the GeForce RTX 3080 is 10% faster at 1440p and 15% faster at 4K.

With hardware ray tracing, Nanite and Lumen enabled, the GeForce RTX 3080 was 9% faster at 1440p and 38% faster at 4K. Both GPUs delivered reasonable performance at 1440p, while only the GeForce is usable at 4K.

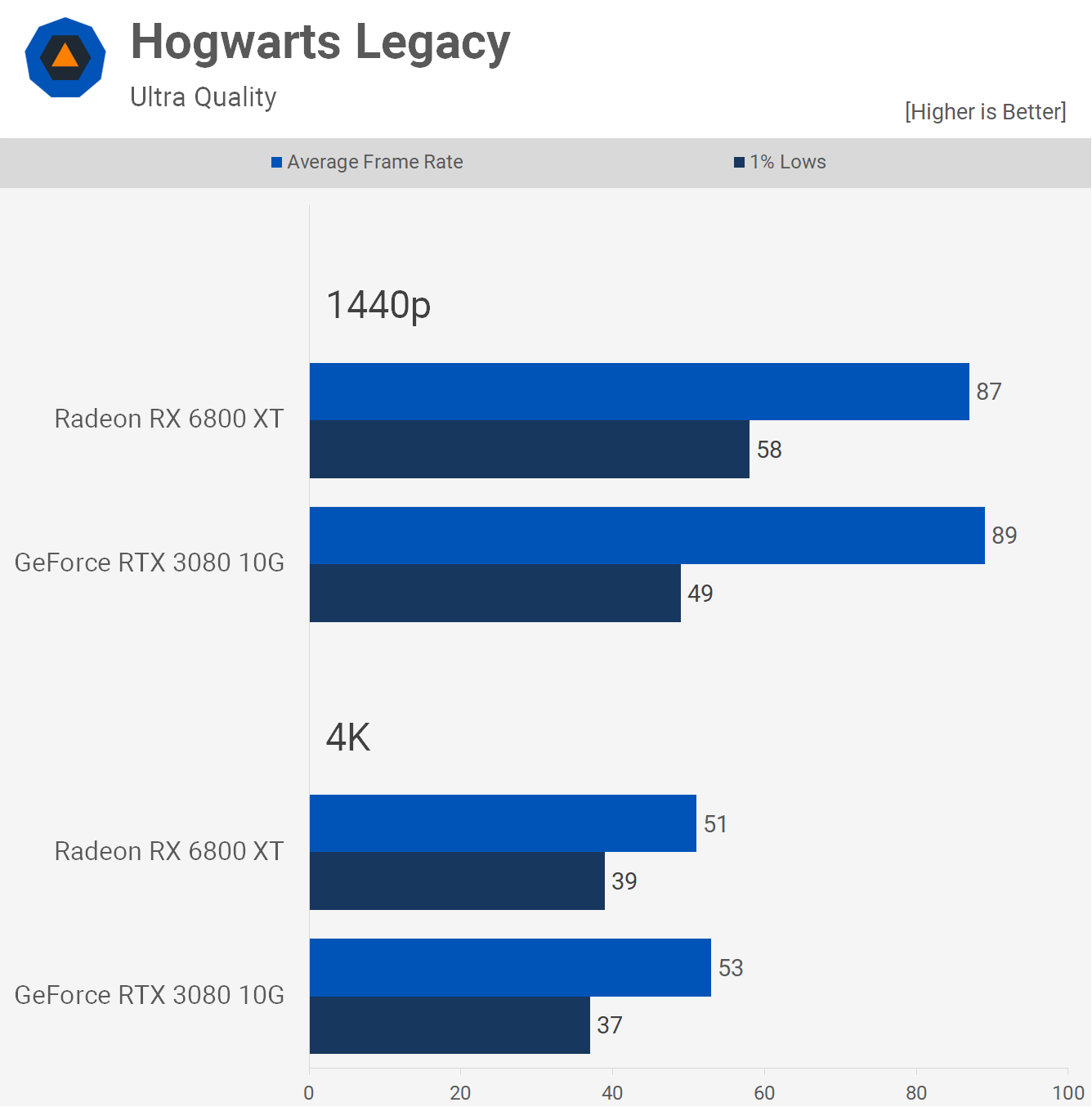

Moving on to Hogwarts Legacy, we find similar performance between the two GPUs at 1440p using the ultra quality preset. The GeForce RTX 3080 was consistently a few frames faster in average frame rate, but slower in 1% lows.

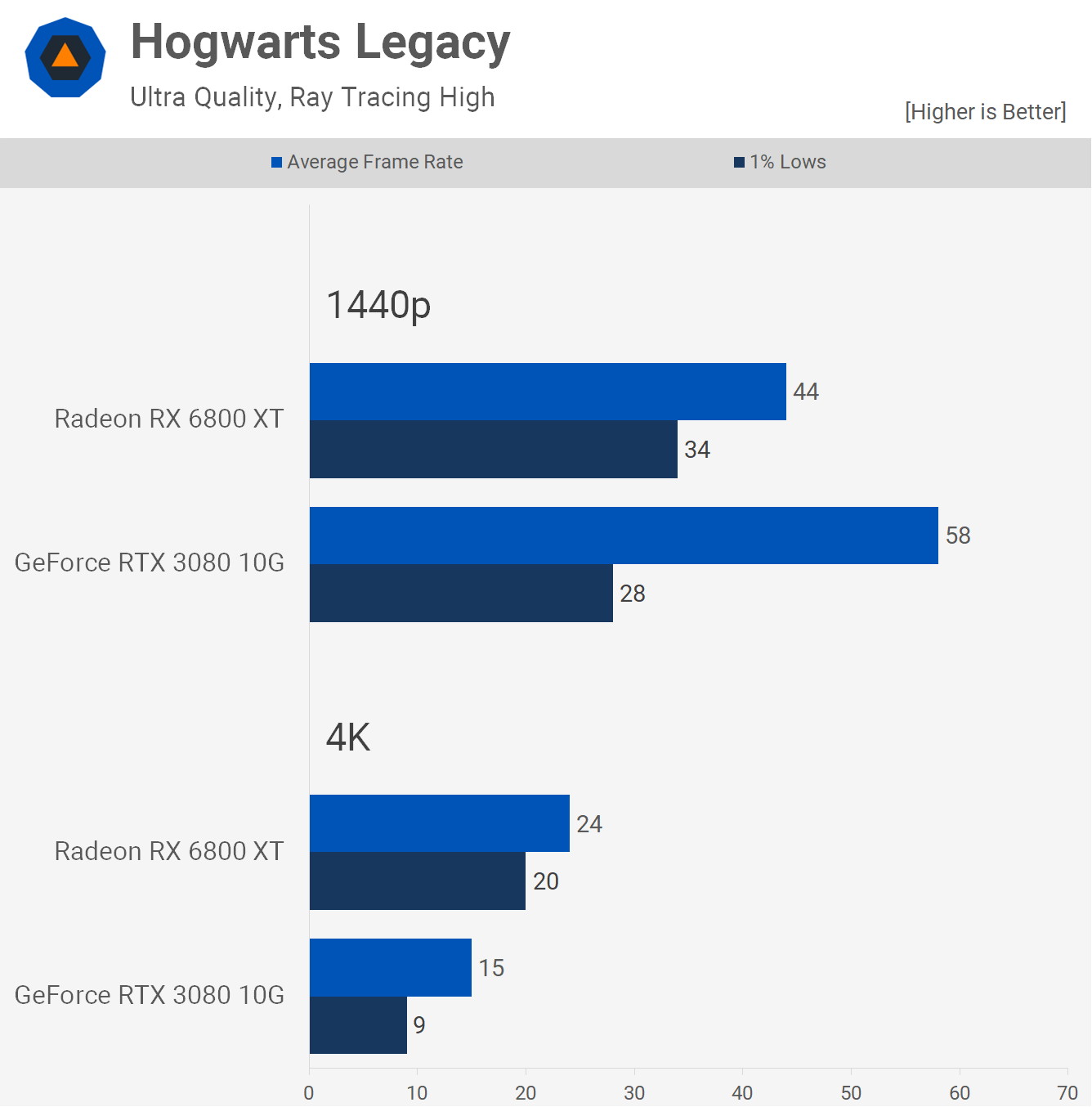

With ray tracing enabled, the GeForce RTX 3080 was 32% faster on average at 1440p, yet the 6800 XT delivered a smoother experience with fewer frame stutters. At 4K, the RTX 3080 was completely broken, and while the 6800 XT also suffered from frame stuttering, its overall performance was poor, with sub-30 fps making for an unplayable gaming experience.

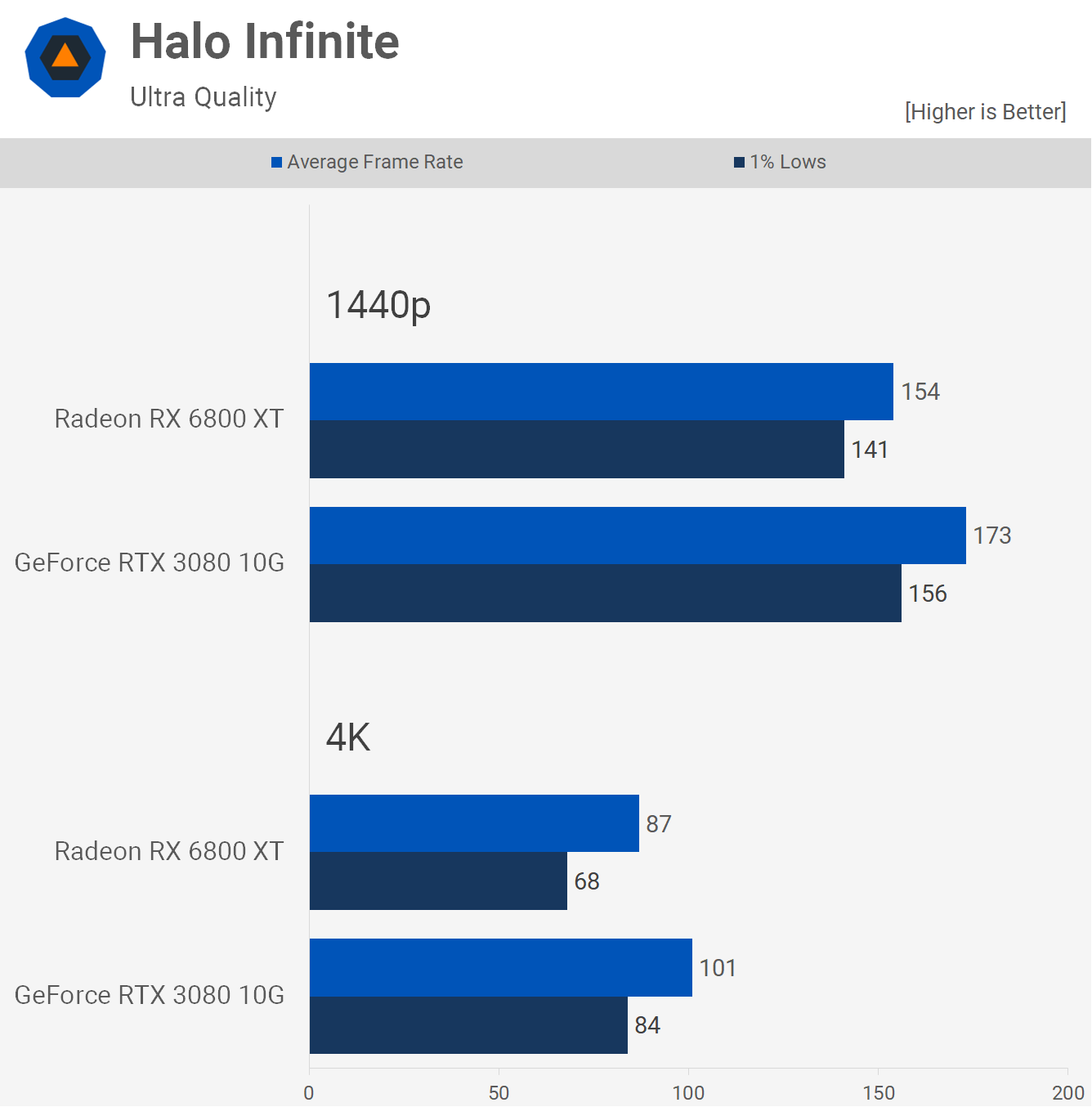

The GeForce RTX 3080 came out comfortably on top in our Halo Infinite testing, delivering 12% more performance at 1440p and 16% more at 4K.

To be fair, both GPUs are exceptionally fast in this title using ultra settings, but if you're after maximum performance, the 3080 has the edge over the 6800 XT here.

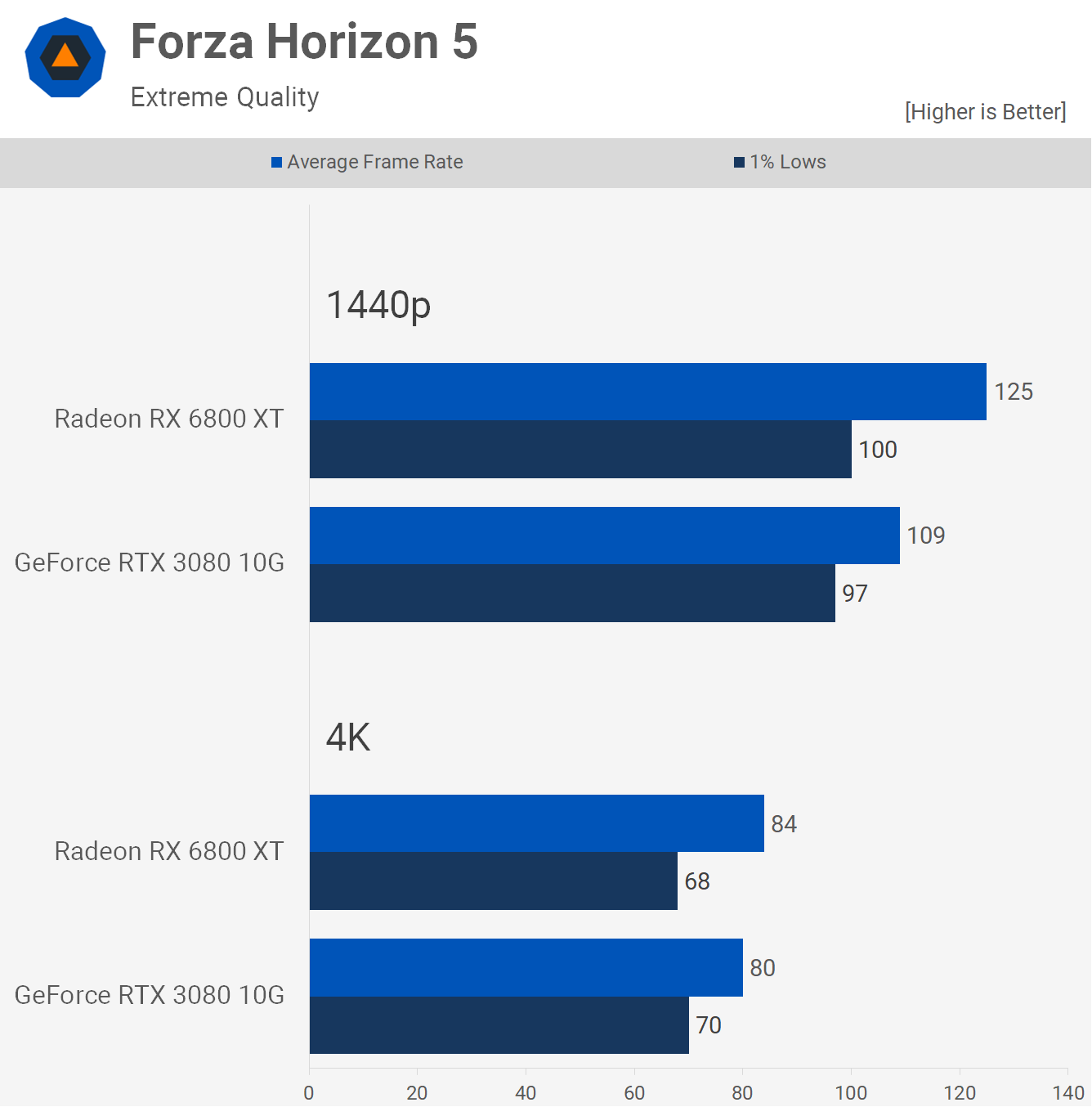

Forza Horizon 5 gives the nod to the 6800 XT at 1440p, where the Radeon was 15% faster, though 1% lows were very similar. At 4K, performance is very comparable: the 6800 XT delivered a 5% higher average frame rate, while the RTX 3080's 1% lows were 3% better.

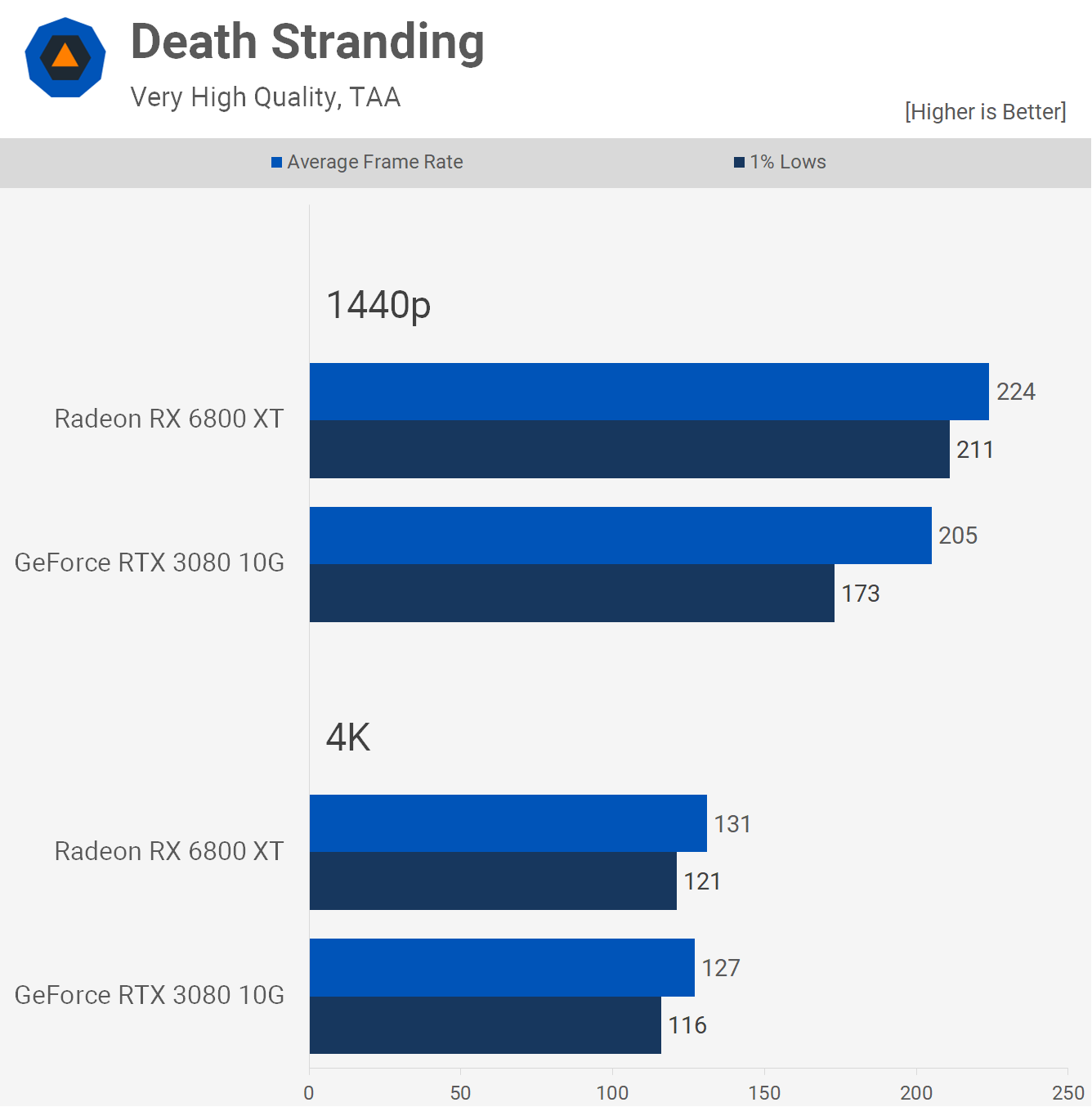

AMD enjoys a win in Death Stranding, but as with Halo, frame rates are excessively high on either GPU, so the Radeon 6800 XT's 9% victory at 1440p isn't as significant. At 4K, the 6800 XT was just 3% faster, so performance is effectively identical.

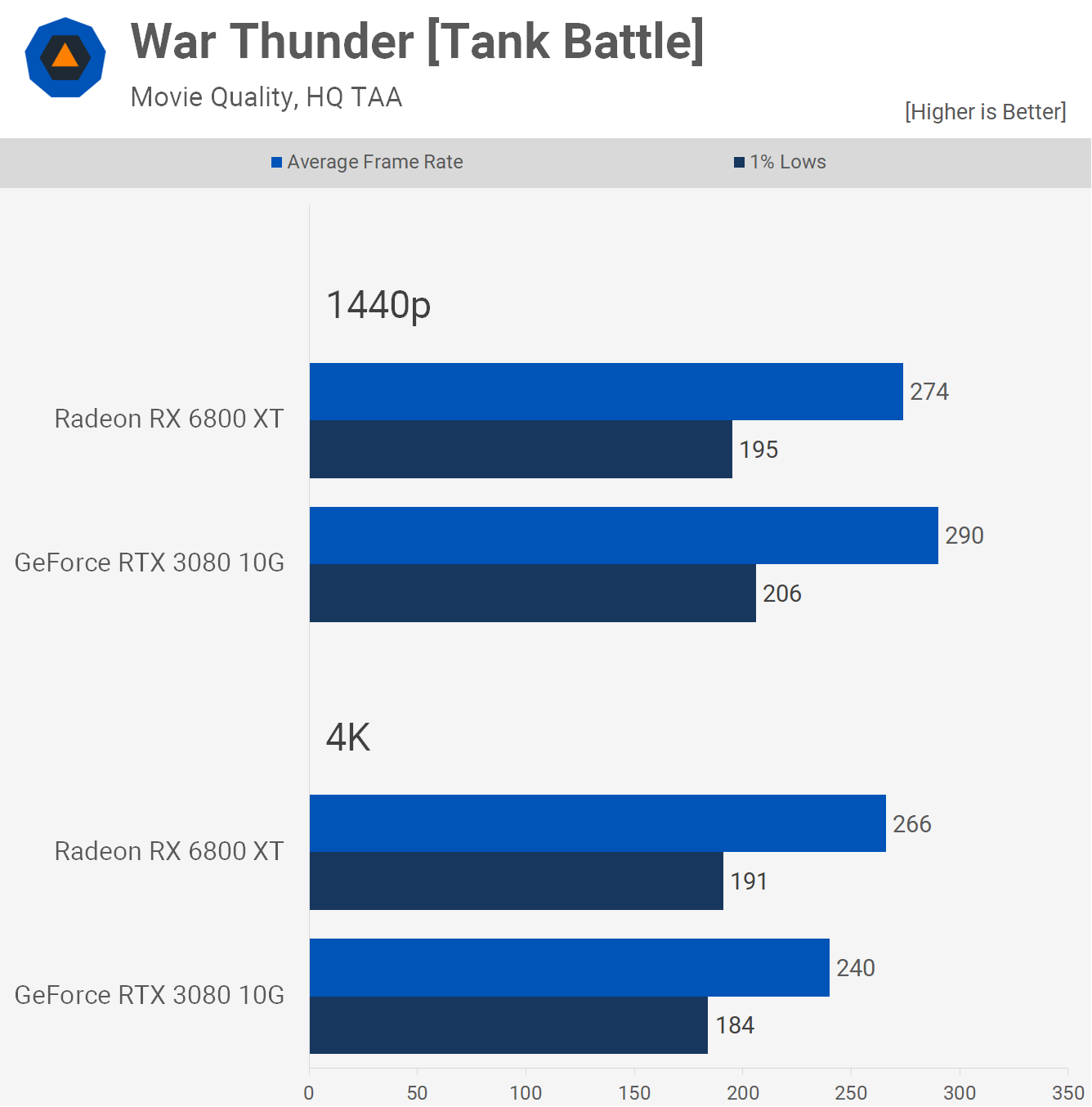

In War Thunder, the RTX 3080 was 6% faster at 1440p but, oddly, 10% slower at 4K. That's some strange scaling, but with both GPUs pushing well past 200 fps, it doesn't really matter.

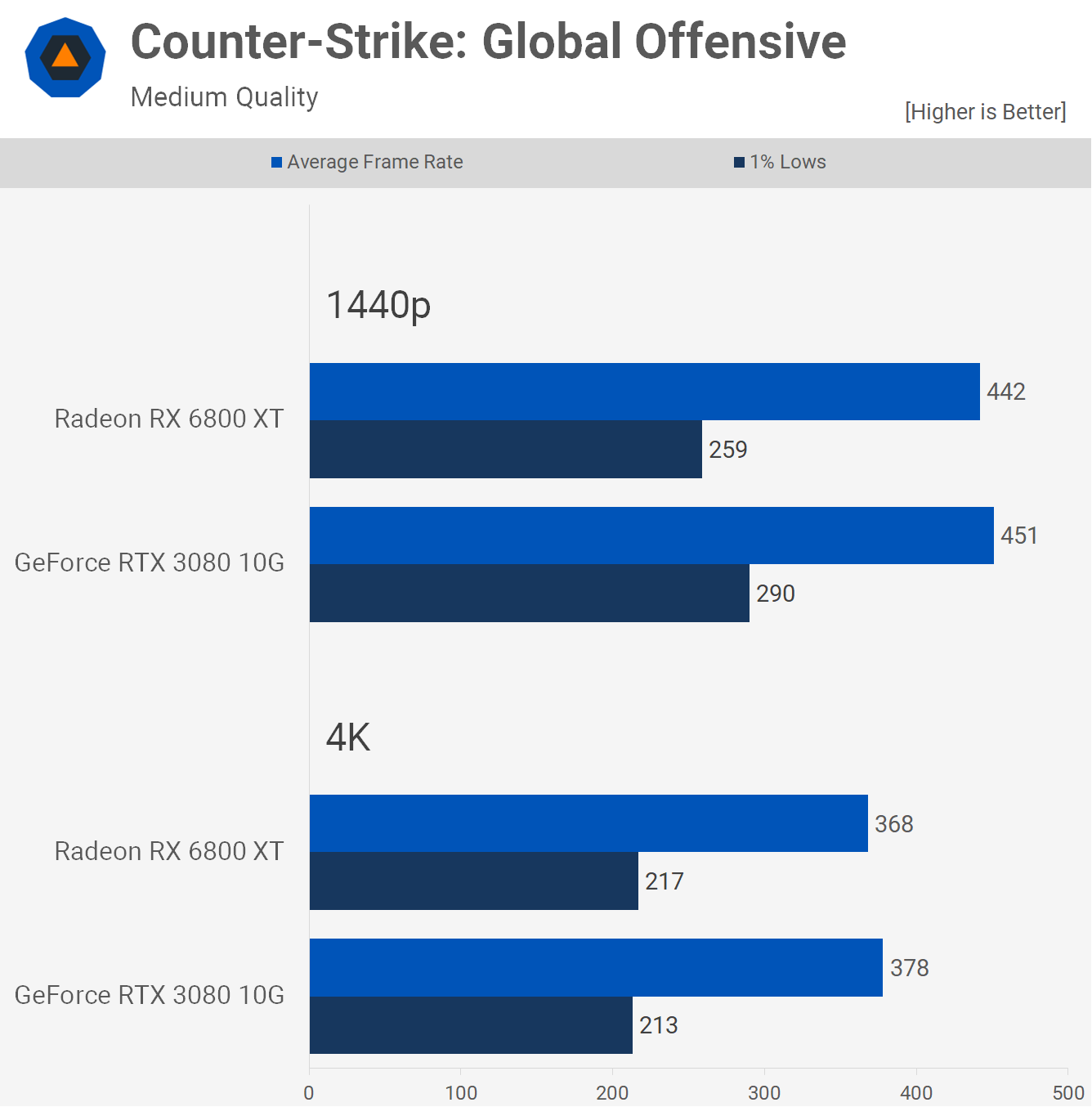

Finally, we have the ever-popular Counter-Strike, where both GPUs delivered near-identical performance at both resolutions. Note that we're not using the workshop benchmark for this testing; instead, we're using a replay from a professional match, as it more accurately represents actual gameplay performance.

Performance Summary

That was a preliminary look at 14 of the 50 games we tested, some with ray tracing effects enabled and some without. Now it's time to see how these two GPUs compare across all the games tested. Starting with the 1440p data…

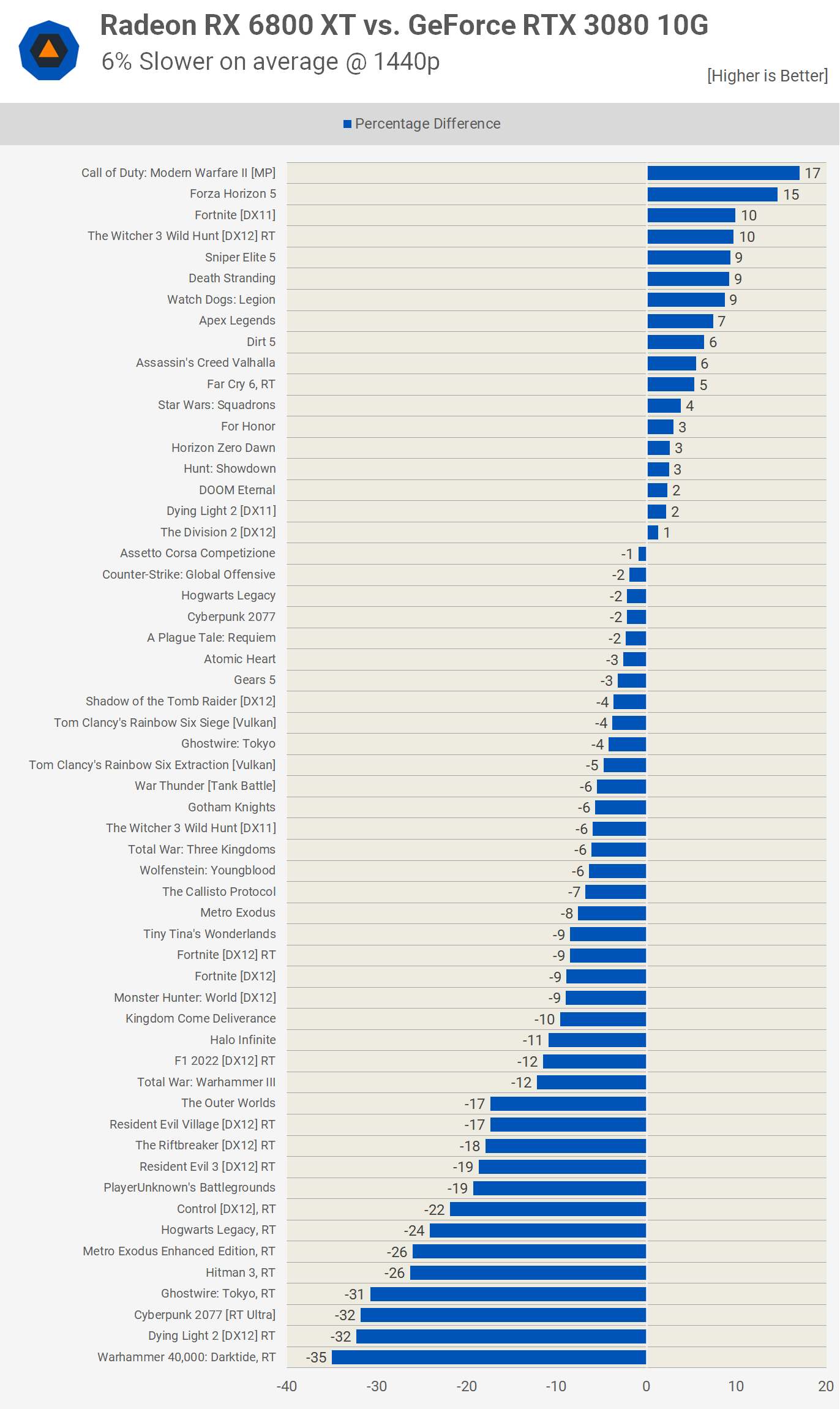

In previous tests we've found performance between these two GPUs to be very similar, and that still holds true, but with more ray tracing tests in our 50-game suite, the Radeon 6800 XT is now 6% slower on average at 1440p.

As the graph shows, the eight worst results for AMD all had ray tracing enabled. Meanwhile, there were just four games where the 6800 XT beat the RTX 3080 by 10% or more.

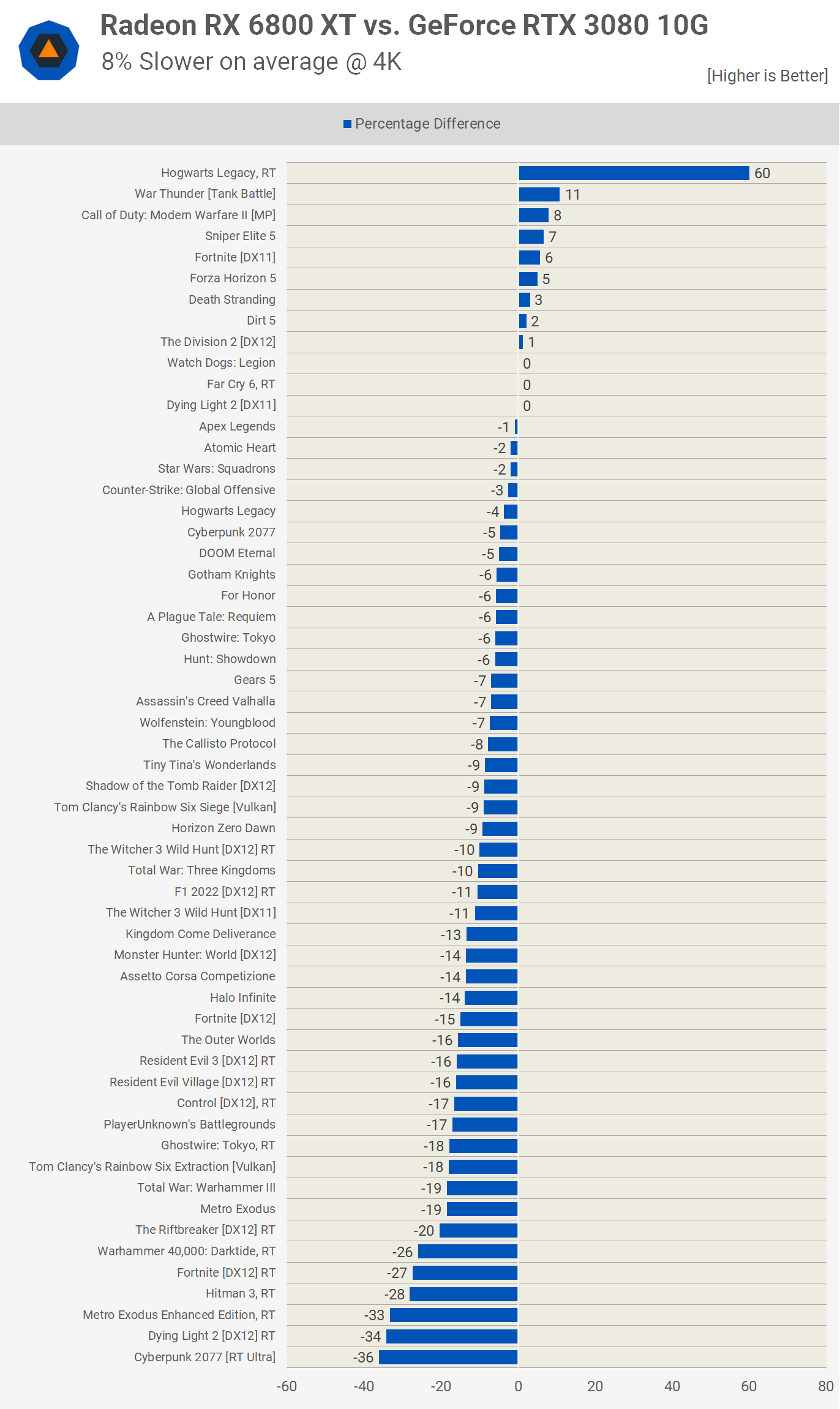

As you'd expect based on the previous data, increasing the resolution to 4K further favors the GeForce RTX 3080, and the Radeon 6800 XT is now 8% slower on average. The only real outlier is Hogwarts Legacy, where the RTX 3080 runs out of VRAM. However, the 6800 XT wasn't able to provide playable performance there either, so that result is effectively void, and if we remove it, the 6800 XT becomes 10% slower on average.
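How per-game results are rolled into a single average matters, especially when one title is an outlier. The review doesn't state its averaging method; a geometric mean of per-game fps ratios is one common choice, and the sketch below assumes it. The game names and frame rates are hypothetical placeholders, not the review's data, and only illustrate how excluding a title like the VRAM-limited Hogwarts Legacy result shifts the average.

```python
import math


def geomean_ratio(results: dict[str, tuple[float, float]],
                  exclude: frozenset[str] = frozenset()) -> float:
    """Geometric mean of per-game fps ratios (GPU A / GPU B), skipping excluded titles."""
    ratios = [a / b for game, (a, b) in results.items() if game not in exclude]
    # Averaging the logs and exponentiating gives the geometric mean of the ratios.
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


# Hypothetical 4K results as (RX 6800 XT fps, RTX 3080 fps); NOT the review's data.
results_4k = {
    "Game A": (60.0, 66.0),
    "Game B": (90.0, 99.0),
    "Game C": (40.0, 48.0),
    "Outlier (3080 out of VRAM)": (25.0, 12.0),
}

all_games = geomean_ratio(results_4k)
without_outlier = geomean_ratio(
    results_4k, exclude=frozenset({"Outlier (3080 out of VRAM)"})
)
print(f"6800 XT vs 3080, all games: {(all_games - 1) * 100:+.1f}%")
print(f"6800 XT vs 3080, outlier removed: {(without_outlier - 1) * 100:+.1f}%")
```

With the hypothetical outlier included, the Radeon appears ahead; excluding it shows the Radeon trailing. In a 50-game suite, a single title carries far less weight than in this four-game toy example.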

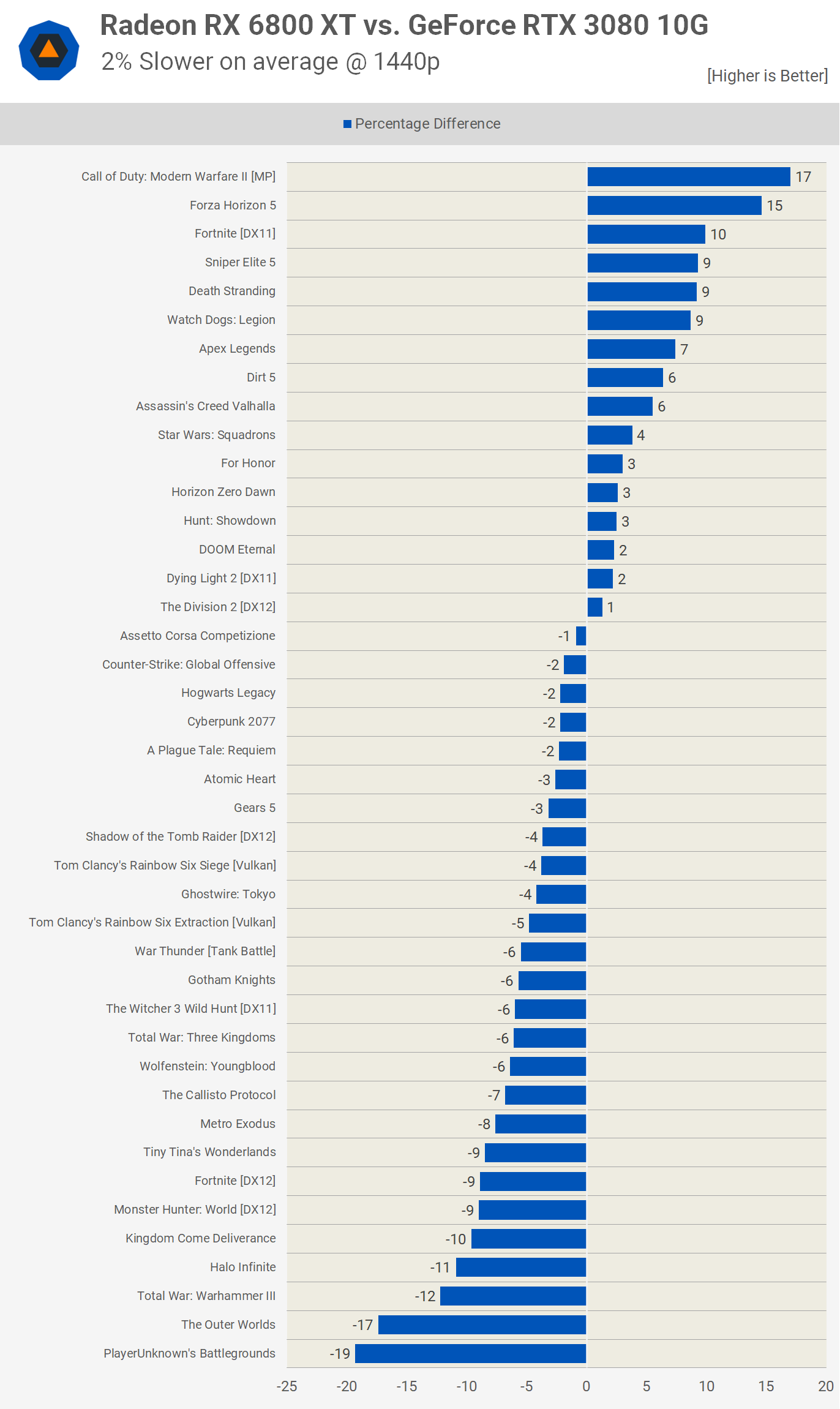

If we remove the ray tracing data from our 1440p results, which reduces the number of test configurations from 57 to 42, the Radeon 6800 XT is just 2% slower than the RTX 3080.

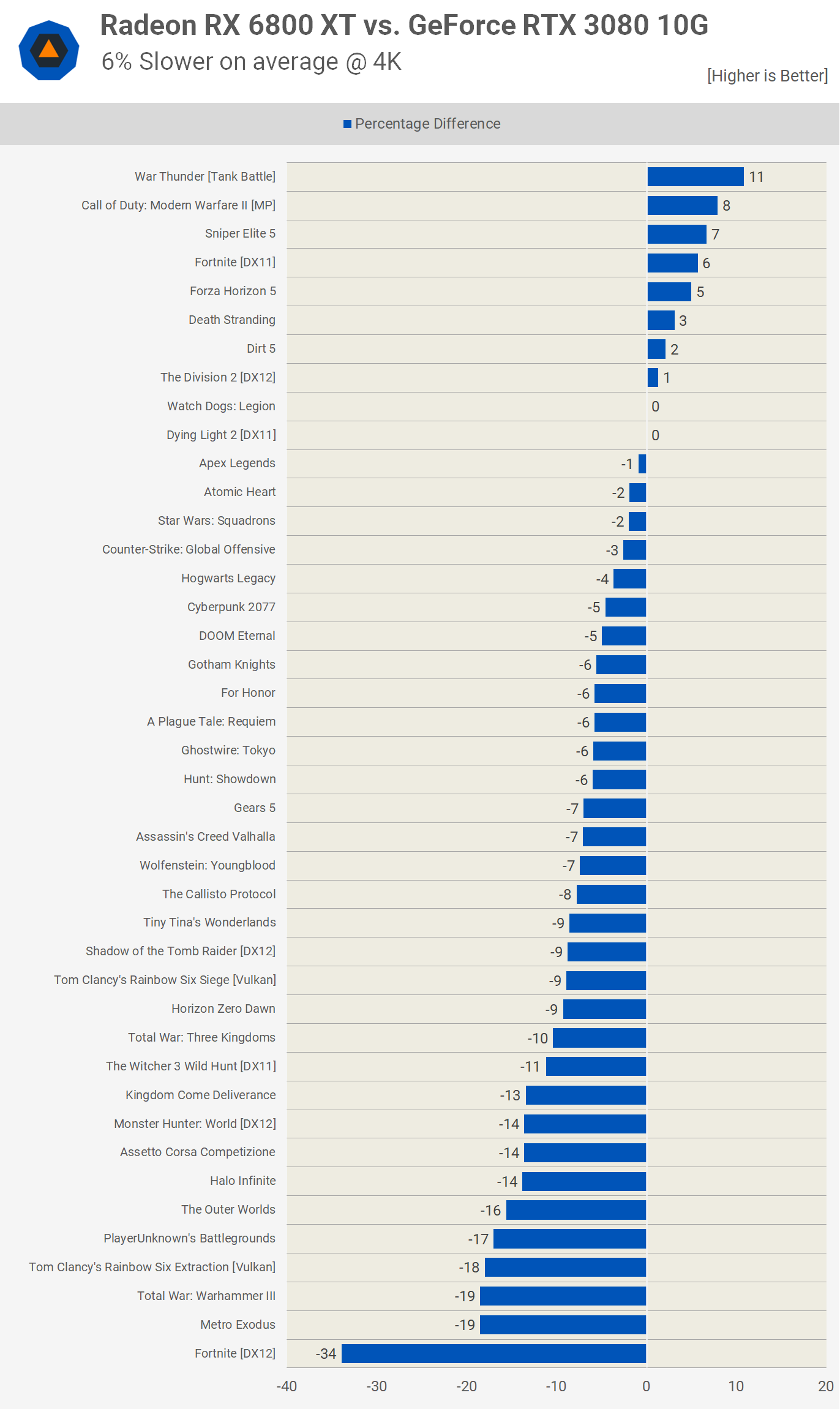

At 4K, the Radeon 6800 XT is 6% slower than the RTX 3080, with the only real outlier being Fortnite DX12. This aligns perfectly with our 2021 data, which also found the Radeon 6800 XT to be 6% slower at 4K, and that data set included very few ray tracing configurations.

What We Learned

So there you have it. For standard rasterization performance, the Radeon RX 6800 XT and GeForce RTX 3080 keep trading blows. However, as we've also concluded since day one, if you care at all about ray tracing performance, then the RTX 3080 remains the obvious choice.

As things stand today, the RTX 3080's 10GB buffer is still just enough to enable ray tracing in the latest games. You can break performance in some instances, but small tweaks can get it working.

Games where the 8GB RTX 3070 is completely broken, such as The Callisto Protocol at 1440p with ray tracing enabled, worked just fine on the RTX 3080, as just shy of 9GB is required. We also found that all textures loaded in Forspoken, even at 4K using the ultra high preset, which enables ray tracing by default. So the 10GB buffer is currently right on the edge with today's games.

That means the RTX 3080 remains the better choice for ray tracing, and in that sense it has aged quite well; it's certainly worlds better than the RTX 3070/3070 Ti.

Another feature that has aged well, really well in fact, is DLSS. DLSS support and image quality are excellent today, and this is truly the key benefit of GeForce GPUs. We've made this known for some time now, basically ever since DLSS got good with its second iteration.

Thus, not a lot has changed since our previous two revisits. Last time, we concluded it was very easy to argue in favor of the RTX 3080 given those features, and we'd only go for the Radeon 6800 XT if we weren't interested in ray tracing and it was more affordable.

As it happens, if you want a new graphics card today, the Radeon RX 6800 XT is still available in some regions at around $530, while the GeForce RTX 3080 is mostly out of stock or selling new at absurd prices. On the second-hand market, the RTX 3080 10GB currently goes for around $500 on eBay, but that comes with all the caveats of buying a used GPU.

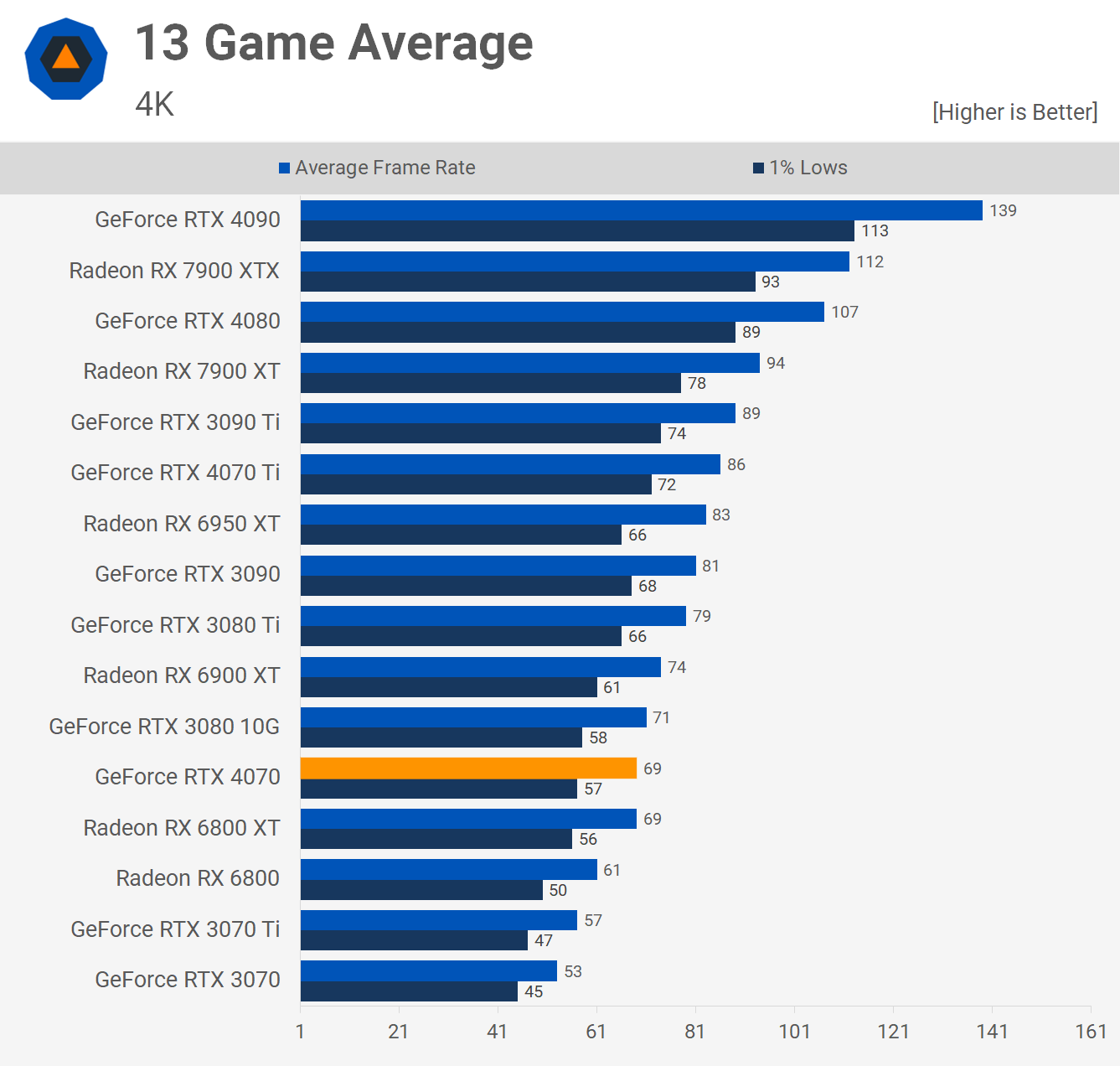

The newly launched GeForce RTX 4070, which performs similarly (check out our full review), offers interesting value at $600 compared to this generation's other launches, which were all far more expensive. It also has a 12GB VRAM buffer, so it's at least worth considering if you haven't upgraded your GPU in the last two generations.