Today we're revisiting graphics card performance in Fortnite. The new Chapter 2 Season 1 update has improved the game's visuals, and since we include Fortnite in our big benchmark features, we thought a large-scale benchmark update was in order. The last time we ran a dedicated Fortnite benchmark article was back in Chapter 1 Season 2, nearly two years ago, and since then the game's popularity has only risen.

The new season features significant changes in almost all elements of gameplay: a new map with new locations, new weapons and items, an overhaul of the game's challenge system and improved visuals. The environment still looks very Fortnite-ish, but trees, grass and water have all been given a visual upgrade. The lighting also seems more impressive now, so for those who aren't playing with competitive settings, the game actually looks very nice.

For today's session we have gathered 28 GPUs from current and previous generation graphics families from AMD and Nvidia. We've tested using our Core i9-9900K test rig clocked at 5 GHz with 16GB of DDR4-3400 memory. Radeon cards were tested with driver version 19.10.1 and Nvidia GPUs with driver version 436.48. As for the quality settings, testing takes place at 1080p, 1440p and 4K using both the 'Epic' and 'Low' quality presets, so we have maximum visual quality performance as well as competitive settings performance.

We based the benchmark pass on a simple run that we found accurately measured performance under very demanding conditions. For this we used the "Team Rumble" 20 v 20 game mode, waited until the second final circle, and then measured a 60-second passage of gameplay. The pass includes quite a few fast mouse flicks left and right to check for enemies, which heavily reduces the 1% low performance.
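For readers unfamiliar with the two figures reported in the charts, here is a minimal sketch of how an average frame rate and a 1% low can be derived from a list of frame times. It assumes one common definition of the 1% low (the average frame rate across the slowest 1% of frames); capture tools differ on this, and the article does not state which definition or tool was used. The function names and the sample data are hypothetical.

```python
from typing import Sequence


def average_fps(frame_times_ms: Sequence[float]) -> float:
    """Average fps over the run: total frames divided by total seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def one_percent_low_fps(frame_times_ms: Sequence[float]) -> float:
    """Average fps across the slowest 1% of frames (an assumed definition)."""
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)  # at least one frame
    worst = slowest_first[:count]
    return 1000.0 * len(worst) / sum(worst)


# Hypothetical capture, not measured data:
# 990 smooth frames at 5 ms plus 10 slow frames at 20 ms (the mouse flicks).
sample = [5.0] * 990 + [20.0] * 10
print(round(average_fps(sample), 1))
print(round(one_percent_low_fps(sample), 1))
```

A handful of slow frames barely moves the average but sets the 1% low entirely, which is why brief stutters during quick camera turns show up so clearly in that metric.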

Benchmarks

Epic Quality Settings

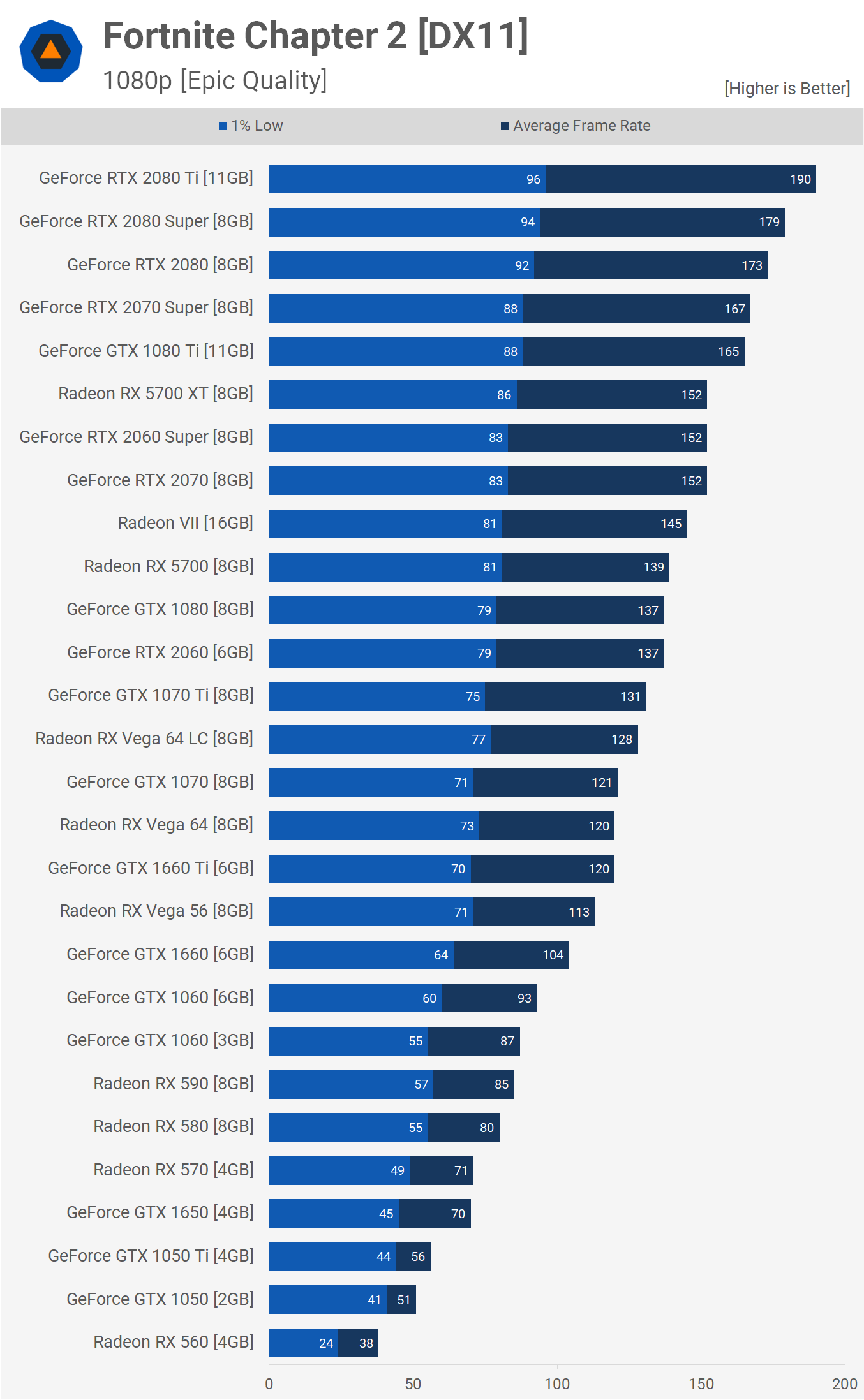

We'll start with the Epic quality results at 1080p. It doesn't take much to play Fortnite at 1080p with max quality settings. A lowly GTX 1650 will deliver 70 fps on average, and the same goes for an RX 570. Typically the RX 570 is a good bit faster than the 1650, but with Fortnite being an Unreal Engine game, it does tend to favor Nvidia hardware. This is more evident when comparing the GTX 1050 and RX 560. A while back we compared both GPUs in 30+ games and the RX 560 was ~2% slower on average, but in Fortnite it's a full 25% slower.

For around 120 fps you'll need either the GTX 1660 Ti, 1070 or Vega 64 – again, a weak showing from AMD.

For around 140 fps on average the GTX 1080, RTX 2060, RX 5700 or Radeon VII will work quite well. In fact, the newer Navi-based RX 5700 series GPUs perform very well. The Radeon 5700 XT, for example, matched the RTX 2060 Super and RTX 2070. Beyond that, you're looking at over 160 fps.

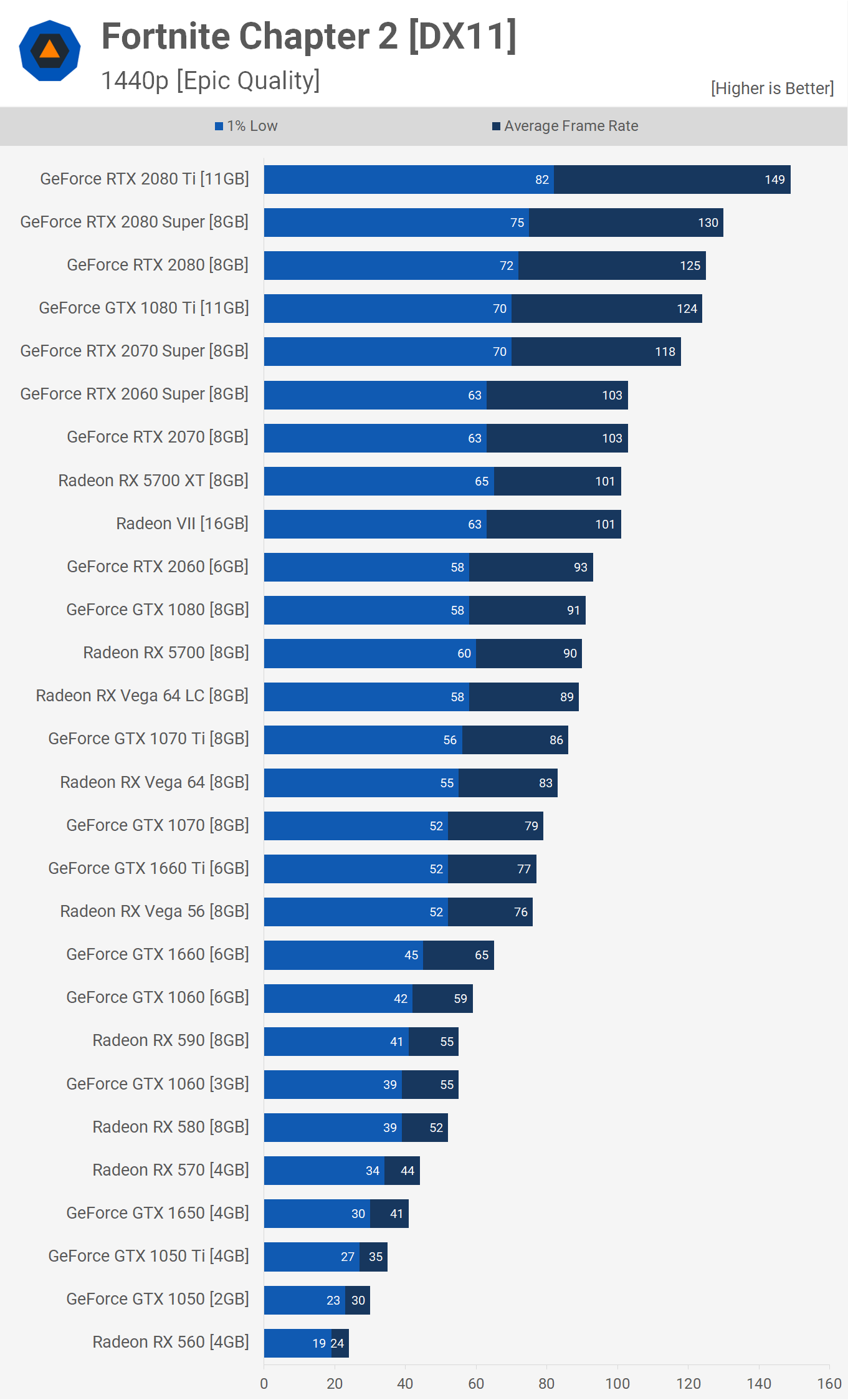

Increasing the resolution to 1440p, we can see you'll need a powerful GPU to break 100 fps.

The 5700 XT hangs in there nicely with the RTX 2060 Super and RTX 2070, while the standard 5700 matched the RTX 2060 and GTX 1080. The Navi GPUs are far more efficient in this type of setting and engine than the GCN models such as the Radeon VII and Vega 64.

Vega 56, for example, only managed to match the GTX 1660 Ti, while the RX 590 came in behind the GTX 1060, a GPU it typically beats by a comfortable margin in modern AAA titles.

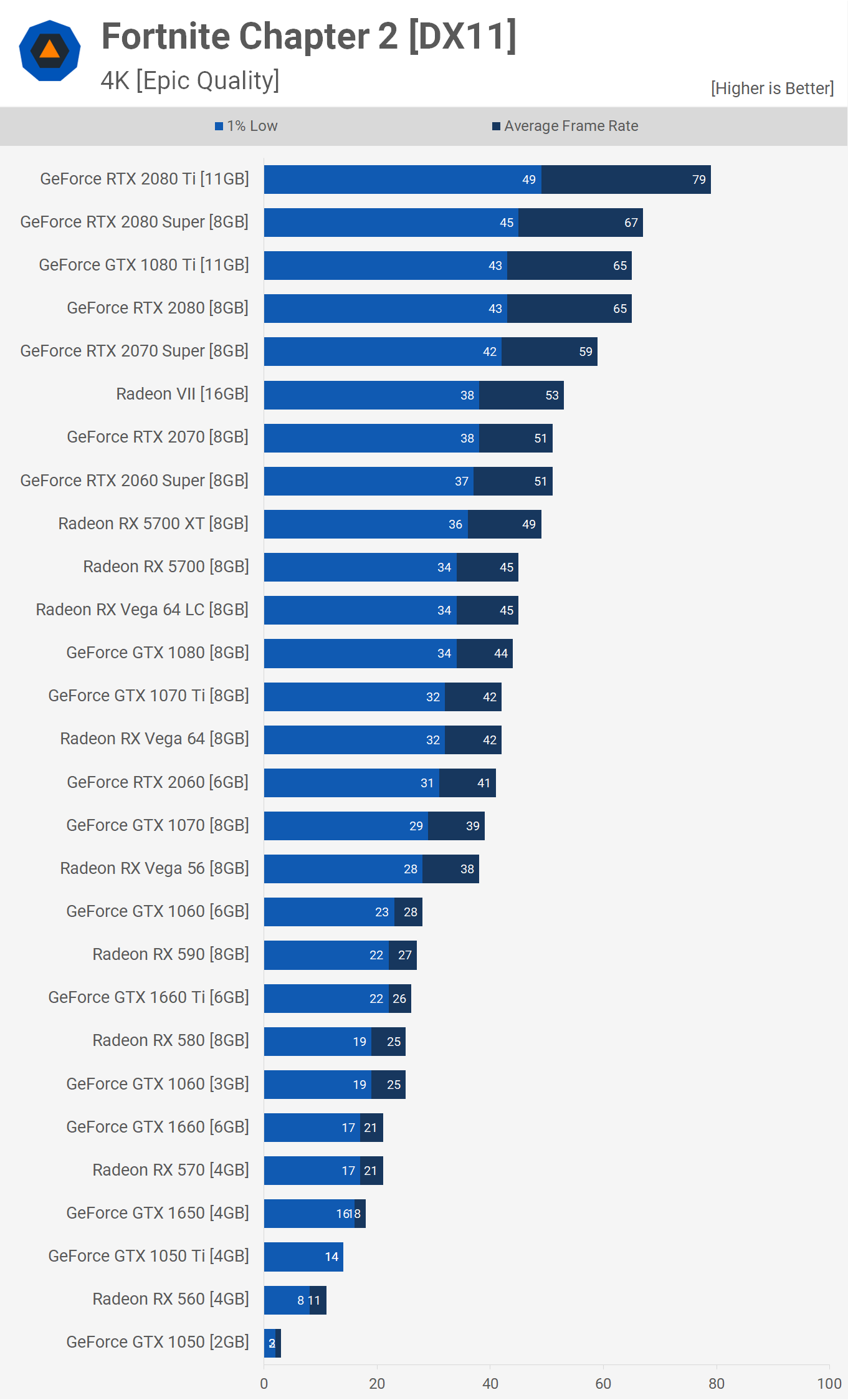

Now for those of you who play Fortnite at 4K, and I suspect there aren't many of you, you'll unsurprisingly want an RTX 2080 Ti to push over 60 fps. You can still achieve a 60 fps average with the standard RTX 2080 or the older GTX 1080 Ti, so that's not too bad.

Down to Low Quality Settings

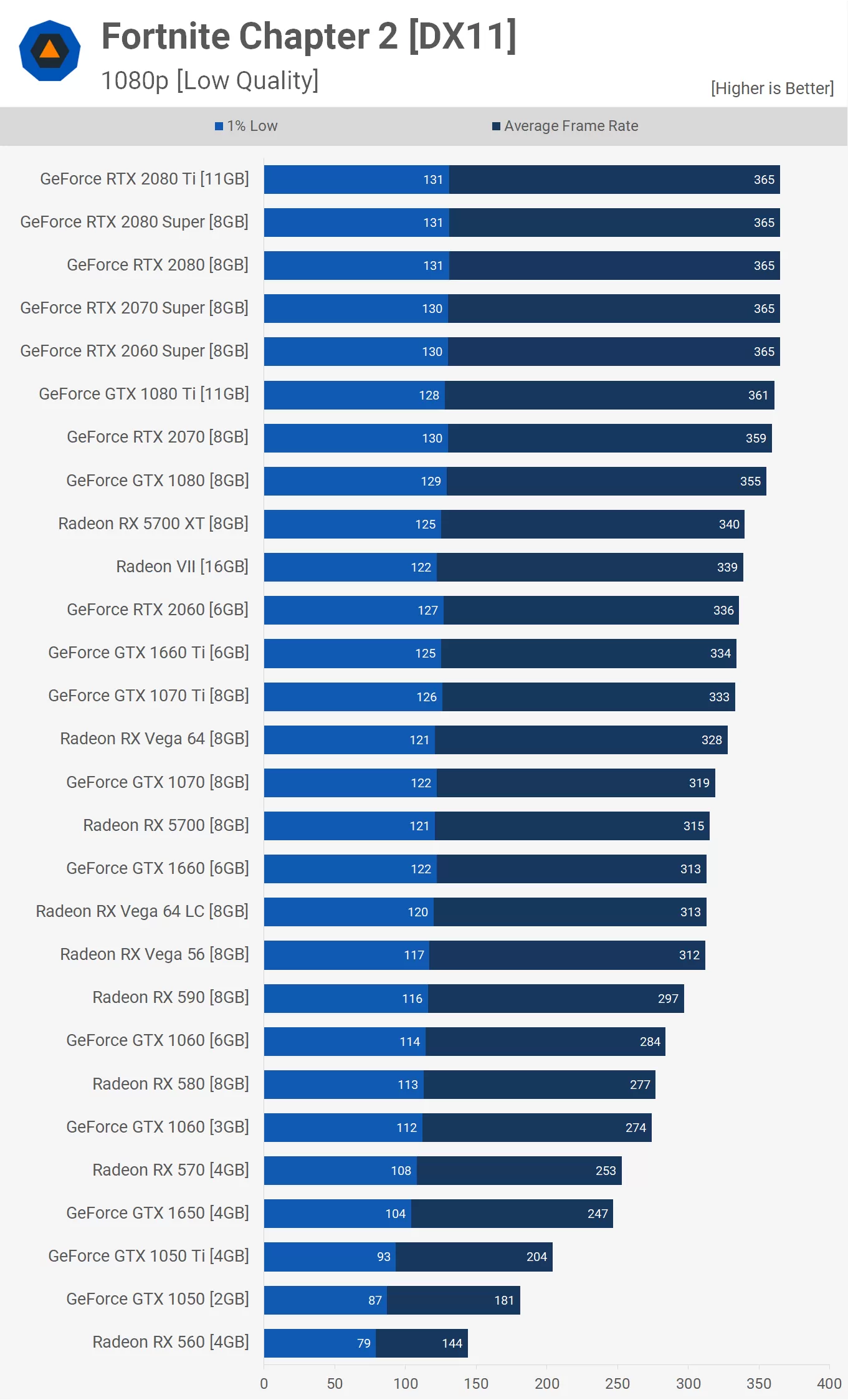

Testing with low quality settings sees some pretty extreme frame rates at 1080p using the Core i9-9900K. Even the RX 560 allowed for 144 fps on average and we have to say once you push past the GTX 1050 Ti it all seems a bit pointless. All you need here is an RX 570 or GTX 1060 – both maintained over 100 fps at all times with an average of almost 300 fps.

Something we noticed was that regardless of which GPU you use, those quick peeks where you check for enemy players around you tank the frame rate for a fraction of a second. As you can see, the 1% low performance doesn't vary that much from the RX 570 to the RTX 2080 Ti. Sure, we're still looking at a 21% performance increase, but that's nothing given how much more powerful the $1,000+ GeForce is.

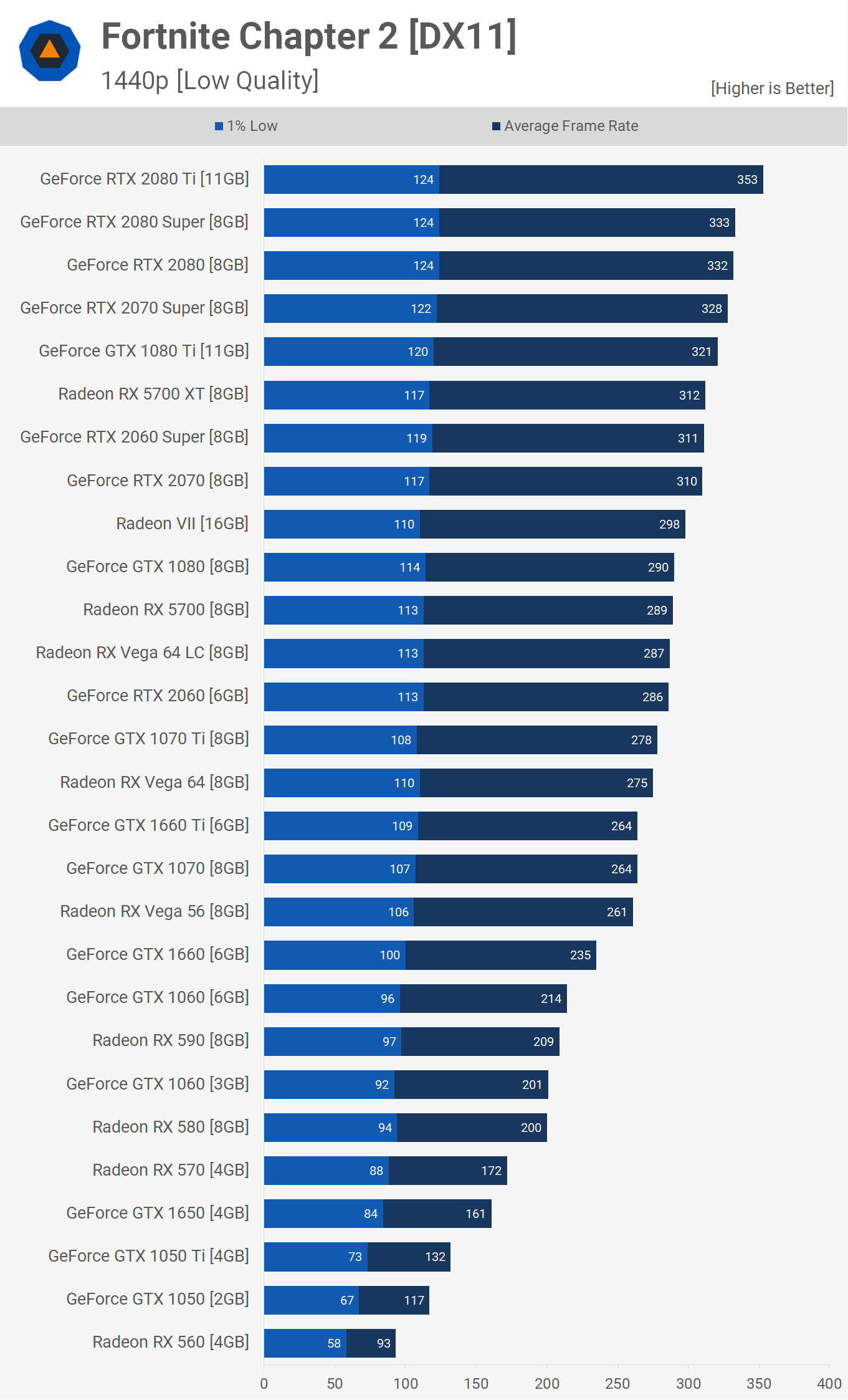

The move to 1440p does widen the gap in 1% low performance. Now the RTX 2080 Ti is 41% faster than the RX 570, though that's nothing compared to the 105% increase you'll see in the average frame rate.
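Percentages like these depend on which card is treated as the baseline, so here is a small sketch of the arithmetic. The helper names are hypothetical and the frame rates are placeholder values chosen to make the math easy to follow, not figures taken from the charts.

```python
def percent_faster(fast_fps: float, slow_fps: float) -> float:
    """How much faster the quicker card is, relative to the slower card."""
    return (fast_fps / slow_fps - 1.0) * 100.0


def percent_slower(slow_fps: float, fast_fps: float) -> float:
    """How much slower the weaker card is, relative to the quicker card."""
    return (1.0 - slow_fps / fast_fps) * 100.0


# Placeholder frame rates, not measured results.
print(round(percent_faster(141.0, 100.0), 1))  # 141 fps vs 100 fps
print(round(percent_slower(75.0, 100.0), 1))   # 75 fps vs 100 fps
print(round(percent_faster(100.0, 75.0), 1))   # same pair, other baseline
```

The last two lines describe the same pair of cards: a card that is 25% slower than another leaves that other card roughly 33% faster, so it helps to check which direction a quoted percentage runs before comparing figures.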

Even at 1440p for those seeking over 144 fps on average, all you'll need is a GTX 1650, while models such as the GTX 1060 or RX 580 provide loads of headroom.

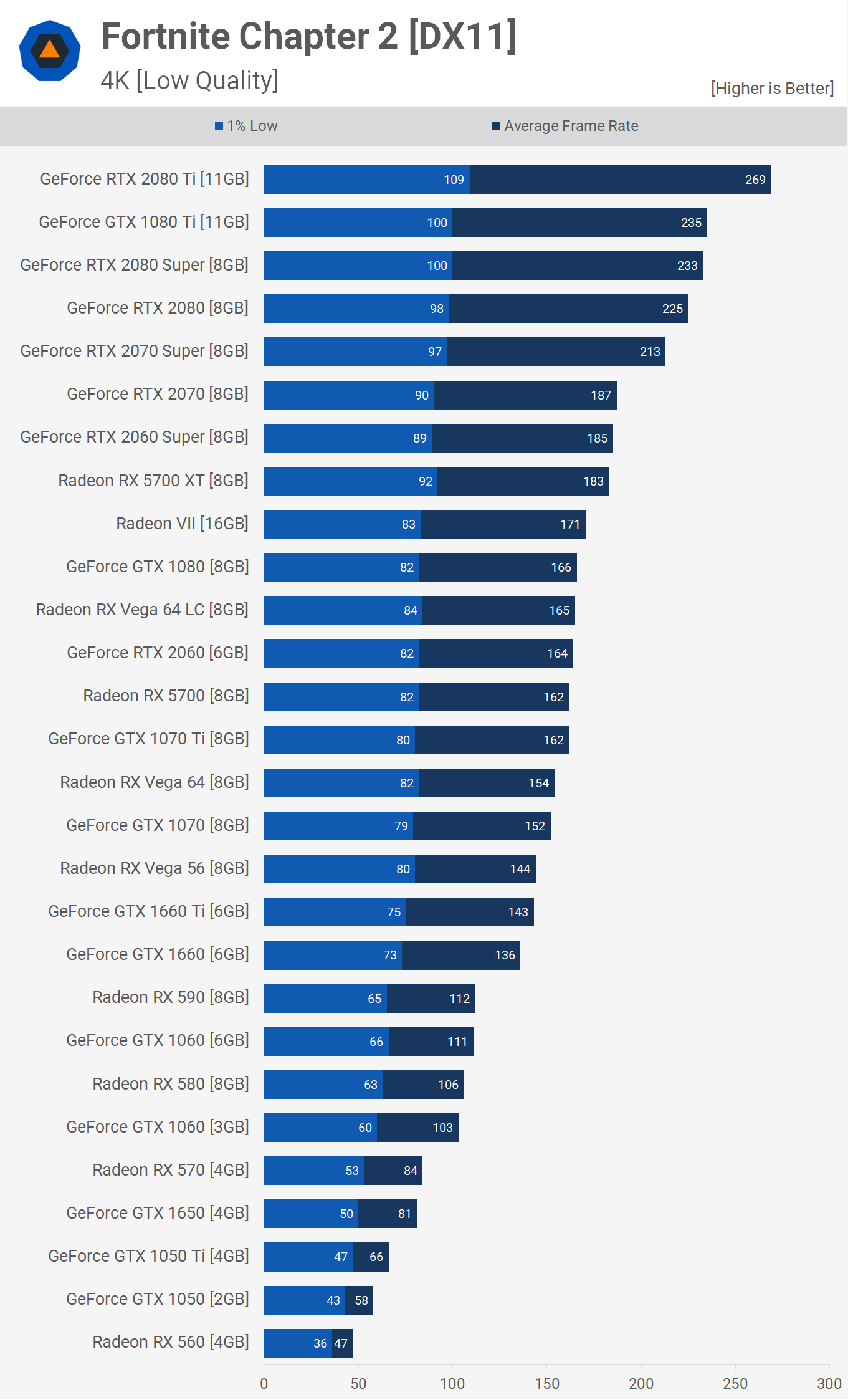

Even at 4K, using the low quality settings sees respectable performance from GPUs such as the RX 580 and GTX 1060. For 144 Hz gamers wanting to max out their refresh window, the GTX 1660 Ti, Vega 56 or the GTX 1070 are about as slow as you'd want to go at this resolution.

Beyond that though you'll be looking at over 160 fps on average, so plenty of frame rate performance with something like the RX 5700 or RTX 2060.

What We Learned

Fortnite Chapter 2 delivers a small but very noticeable visual upgrade, yet the game still runs very well on modest hardware, especially if you plan on using competitive quality settings. I personally play with almost everything on low, with the exception of the draw distance, which is maxed out on the Epic setting, and the results aren't too different from what you see here. It's the shadows that seem to impact performance the most, and if you're serious about playing Fortnite, this is probably the first setting we'd recommend turning off entirely.

For budget builders keen to run Fortnite smoothly on lower visual settings, we'd recommend either the GTX 1650 or RX 570, with an emphasis on the RX 570 as it's not only cheaper, but will offer a superior gaming experience in most modern titles. For example, in our day-one GTX 1650 review the GeForce GPU was 10% slower on average.

If you can afford the RX 580 or GTX 1060, they'll offer a reasonable performance increase. As an example, we saw a 16% frame rate improvement over the RX 570 and GTX 1650 at 1440p with the low quality preset. They're also suitable for use with 144 Hz monitors using those competitive quality settings.

Now, for gamers seeking to play Fortnite in all of its glory at 1080p, you'll require an RX 570 or GTX 1650 for just over 60 fps. Then for around 144 fps on average, be prepared to shell out $400 for either the RTX 2060 Super or 5700 XT.

Fortnite is a massive title on PC and for quite some time GeForce owners had the edge, so it's surprising that we can now recommend Radeon GPUs for those primarily looking to play Fortnite, too, thanks to Navi and driver optimization improvements. About two years ago when we benchmarked the game, the GTX 1070 Ti was almost 30% faster than Vega 56 at 1440p using the Epic quality preset. Today that's down to just 13% faster, and this is entirely down to the work AMD's done to optimize their drivers for Fortnite.
