Final Thoughts

Although AMD is entering the market second with its version of the technology, with FreeSync it has laid the groundwork for the ideal adaptive sync standard going forward. The company has delivered on its promise of a cheaper, more flexible, open standard for variable refresh, and compared with Nvidia's closed G-Sync implementation, that makes FreeSync the better choice for gamers.

The core experience delivered by adaptive sync is inherently the same whether you choose FreeSync or G-Sync. Wherever possible, the display refreshes the instant the GPU finishes rendering a frame, removing the stuttering, tearing and general jank present on fixed-refresh displays. The result is smoother, more responsive gameplay that makes playing at 40 FPS feel just as good as 60 FPS.
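To make the pacing difference concrete, here is a simplified model, assuming a GPU that finishes a frame exactly every 25 ms (a steady 40 FPS), a 60 Hz fixed-refresh panel with v-sync, and an adaptive sync panel with a 75 Hz ceiling like the LG 34UM67. It ignores scanout time and render queues, and the function names are illustrative only, not part of any driver API.

```python
import math


def fixed_refresh_present_times(frame_done_ms, refresh_hz):
    """Fixed refresh with v-sync: each finished frame waits for the next refresh tick.

    Simplification: ignores scanout time and assumes frames never queue up.
    """
    period = 1000.0 / refresh_hz
    # The small epsilon keeps a frame that finishes exactly on a tick from
    # being pushed to the following tick by floating-point rounding.
    return [math.ceil(t / period - 1e-9) * period for t in frame_done_ms]


def adaptive_present_times(frame_done_ms, max_hz):
    """Adaptive sync: refresh as soon as a frame is done, but no faster than max_hz.

    Assumes the frame rate never drops below the panel's minimum refresh.
    """
    min_interval = 1000.0 / max_hz
    shown, last = [], -math.inf
    for t in frame_done_ms:
        present = max(t, last + min_interval)
        shown.append(present)
        last = present
    return shown


def on_screen_intervals(present_times):
    """How long each frame stays on screen, in milliseconds (rounded to 0.1)."""
    return [round(b - a, 1) for a, b in zip(present_times, present_times[1:])]


# A GPU rendering a steady 40 FPS finishes a frame every 25 ms.
frames = [25.0 * k for k in range(1, 7)]
fixed = on_screen_intervals(fixed_refresh_present_times(frames, 60))
adaptive = on_screen_intervals(adaptive_present_times(frames, 75))
print(fixed)     # [16.7, 33.3, 16.7, 33.3, 16.7]
print(adaptive)  # [25.0, 25.0, 25.0, 25.0, 25.0]
```

On the fixed 60 Hz panel, frames alternate between one and two refresh periods on screen, which is the uneven pacing perceived as stutter; with adaptive sync every frame is held for the same 25 ms.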

Short of adding more performance to your system, buying an adaptive sync monitor is the single biggest improvement you can make to your PC gaming experience. If you're a gamer considering a display upgrade, make sure the one you choose supports variable refresh rates, especially if you have a high-resolution monitor in your sights.

So why is FreeSync a better option than G-Sync? Isn't the main benefit of adaptive sync provided by both technologies?

Yes, whether you choose FreeSync or G-Sync, you'll get the crucial variable refresh feature that significantly improves your gaming experience. But FreeSync offers some extra features that G-Sync doesn't, most notably the ability to choose whether v-sync is enabled or disabled for refresh rates outside the variable range. This is especially handy when frame rates fall below the minimum refresh rate: G-Sync forces v-sync on, which introduces stutter and an extra performance hit below the minimum refresh, whereas on FreeSync you can avoid both by disabling v-sync, at the cost of reintroducing tearing.
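The choice described above can be summarized as a small decision function. This is a hypothetical model of the behavior the text describes, not actual AMD or Nvidia driver logic; `refresh_behavior` and its parameters are illustrative names.

```python
def refresh_behavior(fps, min_hz, max_hz, vsync_outside_range):
    """Classify how the display handles a given frame rate.

    Hypothetical model: inside [min_hz, max_hz] the panel refreshes when each
    frame is ready; outside it, the panel falls back to fixed refresh and the
    v-sync setting decides between waiting (v-sync on) and tearing (v-sync off).
    """
    if min_hz <= fps <= max_hz:
        return "variable refresh"
    side = "below" if fps < min_hz else "above"
    mode = "v-sync on" if vsync_outside_range else "v-sync off"
    return f"{side} range, {mode}"


# 35 FPS on a 40-144 Hz panel falls below the variable range.
print(refresh_behavior(35, 40, 144, vsync_outside_range=True))   # below range, v-sync on
print(refresh_behavior(35, 40, 144, vsync_outside_range=False))  # below range, v-sync off
print(refresh_behavior(55, 40, 144, vsync_outside_range=True))   # variable refresh
```

In the text's terms, "below range, v-sync on" is where the stutter and performance hit appear, and it is the only option G-Sync offers there; FreeSync additionally allows "below range, v-sync off".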

FreeSync also provides more flexibility on the display side. Monitor manufacturers can include extra inputs (although DisplayPort is required for adaptive sync), a fully functional on-screen display, and color adjustment options. And with a wider range of supported refresh rates (9 to 240 Hz), OEMs are free to design displays that are both faster and slower than their G-Sync equivalents.

But the main advantage, at least right now, is price. Because FreeSync is built on the VESA Adaptive-Sync standard and doesn't require a proprietary chip like G-Sync does, FreeSync monitors are simply cheaper. Both 27-inch 1440p 144 Hz FreeSync monitors, the $499 Acer XG270HU and the $599 BenQ XL2730Z, are cheaper than the only G-Sync equivalent, Asus' $779 ROG PG278Q. That's a huge saving of $180 to $280.

Another way to look at this is to compare the FreeSync Acer XG270HU with the G-Sync Acer XB270HA. Both are 27-inch 144 Hz monitors priced at $499, but the FreeSync model comes with a free upgrade from 1080p to 1440p. That's about 78% more pixels (roughly 3.7 million versus 2.1 million) for the same price.
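The price and resolution comparisons above reduce to simple arithmetic. The sketch below uses the US prices quoted in the text and assumes the standard 2560×1440 and 1920×1080 resolutions for 1440p and 1080p; the helper names are illustrative.

```python
def pixel_count(width, height):
    """Total pixels on a panel of the given resolution."""
    return width * height


def savings(reference_price, prices):
    """Dollar savings of each alternative relative to a reference price."""
    return [reference_price - p for p in prices]


# Asus ROG PG278Q (G-Sync, $779) vs Acer XG270HU ($499) and BenQ XL2730Z ($599).
print(savings(779, [499, 599]))  # [280, 180]

# Acer XG270HU (1440p) vs Acer XB270HA (1080p), both $499.
ratio = pixel_count(2560, 1440) / pixel_count(1920, 1080)
print(f"{ratio:.2f}x the pixels")  # 1.78x the pixels
```

The exact ratio is 16/9, since each dimension grows by a factor of 4/3.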

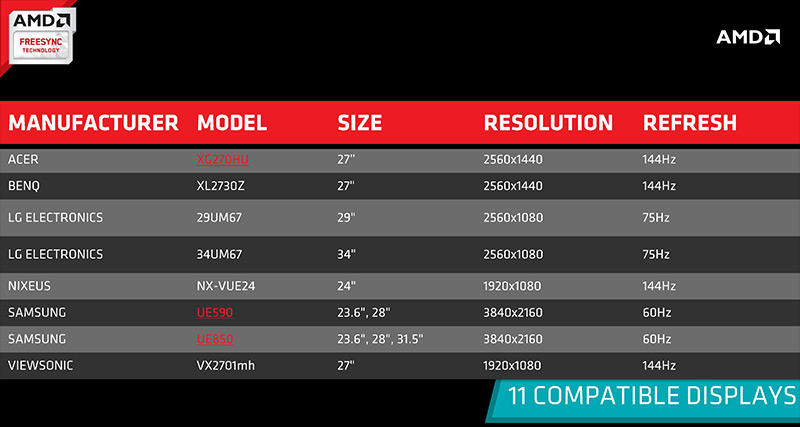

Other options include LG's 29UM67, a 29-inch IPS ultrawide monitor priced at $449, the same as the cheapest G-Sync monitor, a 24-inch 1080p 144 Hz AOC model. The display I used for this review is LG's 34UM67, which retails for $649. Then there's a selection of displays that haven't been released yet, including a 27-inch 1080p/144 Hz model from ViewSonic, a 24-inch 1080p/144 Hz model from Nixeus, and five Ultra HD monitors in a variety of sizes from Samsung. There are certainly more monitors to come, but I'd expect all of these to be at least $100 cheaper than their G-Sync counterparts.

While FreeSync is a very promising technology that I expect will eventually become the leading adaptive sync spec on the market (once Nvidia supports VESA Adaptive Sync), its early implementations have some drawbacks. For this reason, I would hold off on purchasing a FreeSync monitor until the technology has had more time to mature.

The main problem is manufacturers settling for minimum refresh rates that are too high. Although the LG 34UM67 is otherwise a great monitor, its 48 Hz minimum refresh doesn't give users the full benefit of adaptive sync, whose golden zone is 40-60 Hz. You'll still have a better gaming experience on a 48-75 Hz FreeSync monitor than on a fixed-refresh one, but monitors with a 40 Hz minimum (like the aforementioned models from Acer and BenQ) are better options. Ideally, adaptive sync monitors would go down to 30 Hz: that's the minimum for G-Sync, and about the lowest frame rate gamers will put up with.
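One way to quantify the argument above is to measure how much of the 40-60 FPS golden zone each monitor's variable refresh range covers. This is a rough sketch that treats the zone as a continuous interval; the 30-144 Hz entry is a hypothetical panel, and the function name is illustrative.

```python
GOLDEN_ZONE = (40, 60)  # FPS band the text calls adaptive sync's "golden zone"


def golden_zone_coverage(min_hz, max_hz, zone=GOLDEN_ZONE):
    """Fraction of the golden zone that falls inside a monitor's variable refresh range."""
    lo, hi = zone
    overlap = max(0, min(max_hz, hi) - max(min_hz, lo))
    return overlap / (hi - lo)


monitors = {
    "LG 34UM67 (48-75 Hz)": (48, 75),
    "Acer XG270HU / BenQ XL2730Z (40-144 Hz)": (40, 144),
    "Hypothetical 30-144 Hz panel": (30, 144),
}
for name, (lo, hi) in monitors.items():
    print(f"{name}: {golden_zone_coverage(lo, hi):.0%} of 40-60 FPS covered")
# LG 34UM67 (48-75 Hz): 60% of 40-60 FPS covered
# Acer XG270HU / BenQ XL2730Z (40-144 Hz): 100% of 40-60 FPS covered
# Hypothetical 30-144 Hz panel: 100% of 40-60 FPS covered
```

Coverage alone can't separate a 40 Hz minimum from a 30 Hz one; the lower floor matters when frame rates dip below 40 FPS, which is the extra headroom the text argues for.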

As such, and because monitors are infrequently upgraded pieces of hardware, I'd recommend waiting until manufacturers release great FreeSync monitors with minimum refresh rates of 30 Hz. This will give FreeSync time to mature, and you'll be left with the best adaptive sync experience possible. AMD has laid great foundations; it's now up to display OEMs to deliver.

What about Nvidia GPU owners? At this stage, with G-Sync on the market and working as intended, it's hard to recommend switching to AMD's ecosystem just for cheaper adaptive sync monitors. But this also creates a tricky predicament. What if Nvidia decides to support VESA Adaptive Sync, and therefore FreeSync monitors, in the future? G-Sync early adopters would be locked into Nvidia's ecosystem, unable to switch to AMD without also changing monitors. Meanwhile, AMD GPU owners who've bought into FreeSync would suddenly be free to switch to Nvidia whenever they like, and Nvidia owners who waited would be greeted with cheaper adaptive sync monitors.

This leads me to advise caution when buying a G-Sync monitor. If you really want adaptive sync today and you own an Nvidia GPU, G-Sync is your only option, and in the short term it'll suit you just fine. But in the long run the market might change, Adaptive Sync might become the standard, and owning a G-Sync monitor might feel restrictive. It's hard to predict the future, but it's definitely something to consider.