The big picture: Facebook fights fake accounts on three fronts. It blocks accounts from being created before they ever make it onto Facebook, its detection systems look for potential fakes as soon as they sign up, and it can delete those that make it past the first two defenses.

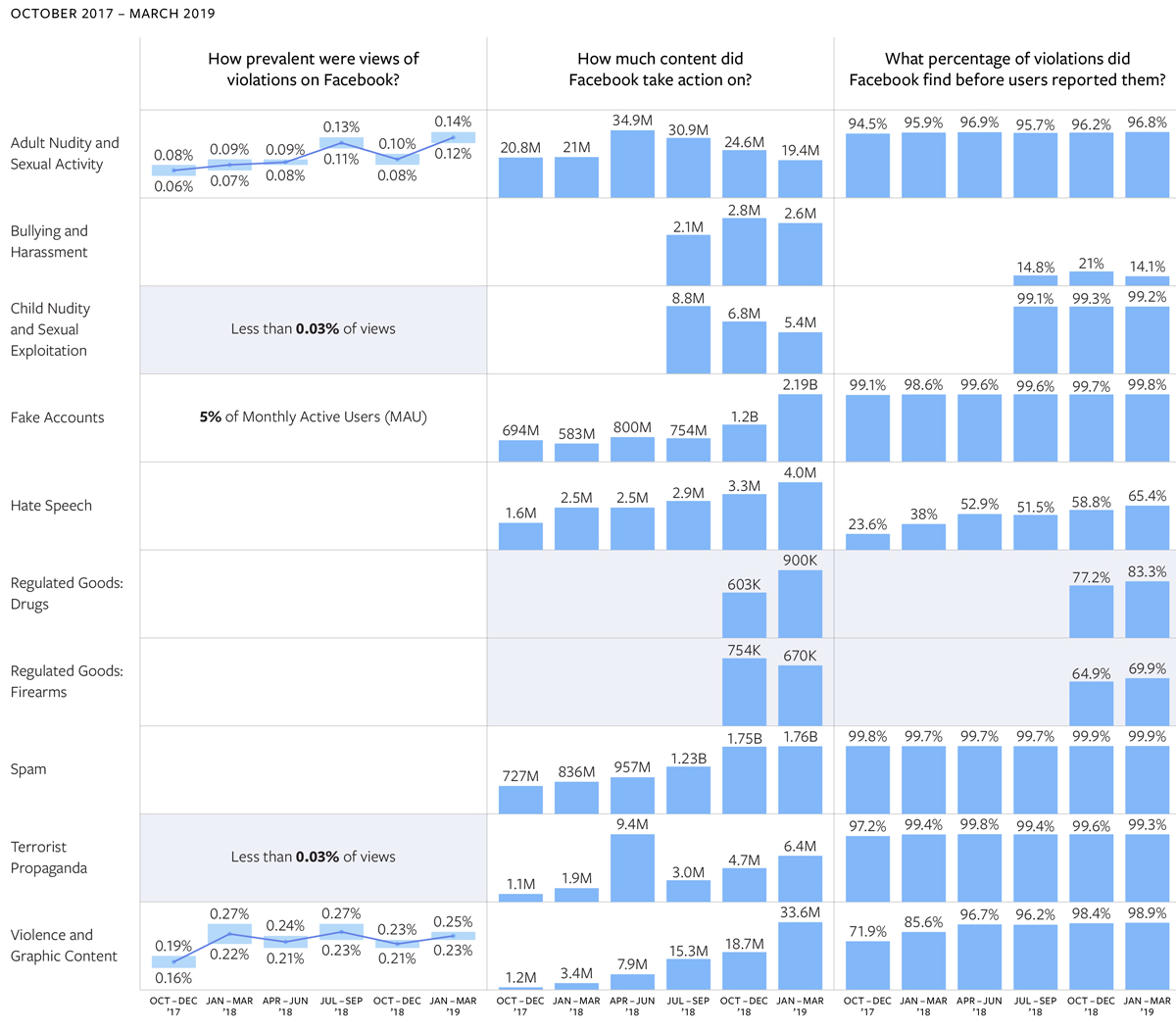

Facebook disabled a staggering 2.19 billion fake accounts in the first quarter of 2019, up from 1.2 billion bogus accounts in the previous quarter. The admission came on Thursday as part of Facebook’s third Community Standards Enforcement Report. The increase is the result of automated attacks from bad actors attempting to create large volumes of accounts at once.

To put the figures into perspective, Facebook as of March 31, 2019, had 2.38 billion monthly active users on its platform.

Alex Schultz, VP of Analytics at Facebook, cautioned against putting too much stock in the figure.

“The number for fake accounts actioned is very skewed by simplistic attacks, which don’t represent real harm or even a real risk of harm. If an unsophisticated, bad actor tries to mount an attack and create a hundred million fake accounts – and we remove them as soon as they are created – that’s one hundred million fake accounts actioned. But no one is exposed to these accounts and, hence, we haven’t prevented any harm to our users.”

Instead, Schultz said prevalence – the frequency at which content that violates Facebook’s standards is viewed – is a better metric to go by. “While content actioned describes how many things we took down, prevalence describes how much we haven’t identified yet and people may still see,” said Guy Rosen, VP of Integrity.

As it relates to fake accounts, Facebook estimates that five percent of monthly active accounts – or around 119 million accounts – are fake.

Facebook also shared prevalence metrics for adult nudity and sexual activity as well as violence and graphic content. The company estimated that for every 10,000 times people viewed content, 11 to 14 views contained material that violated its adult nudity and sexual activity policy. And for every 10,000 views, an estimated 25 featured content that breached the violence and graphic content policy.
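For readers who want to check the arithmetic behind these figures, here is a minimal Python sketch. It assumes prevalence is simply violating views divided by total views, scaled to 10,000; how Facebook actually samples and labels views is not described here, and the function name and sample counts are hypothetical.

```python
def prevalence_per_10k(violating_views: int, total_views: int) -> float:
    """Return violating views per 10,000 content views."""
    if total_views <= 0:
        raise ValueError("total_views must be positive")
    return 10_000 * violating_views / total_views


# Hypothetical sample (not Facebook data): 2,500 violating views
# found among 1,000,000 sampled views.
sample_rate = prevalence_per_10k(2_500, 1_000_000)

# Fake-account estimate from the article's figures:
# five percent of 2.38 billion monthly active users.
monthly_active_users = 2_380_000_000
estimated_fakes = monthly_active_users * 5 // 100

print(f"{sample_rate:.0f} violating views per 10,000")
print(f"{estimated_fakes:,} estimated fake accounts")
```

The hypothetical sample works out to 25 views per 10,000, the same rate reported for violence and graphic content, and the fake-account estimate reproduces the 119 million figure.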

Facebook additionally shared, for the first time, prevalence metrics for global terrorism and for child nudity and sexual exploitation. The numbers in both of these categories were too low to measure using standard techniques, Rosen said, but for every 10,000 times people viewed content, fewer than three views featured material that violated each policy.

https://www.techspot.com/news/80211-facebook-removed-staggering-219-billion-fake-accounts-first.html