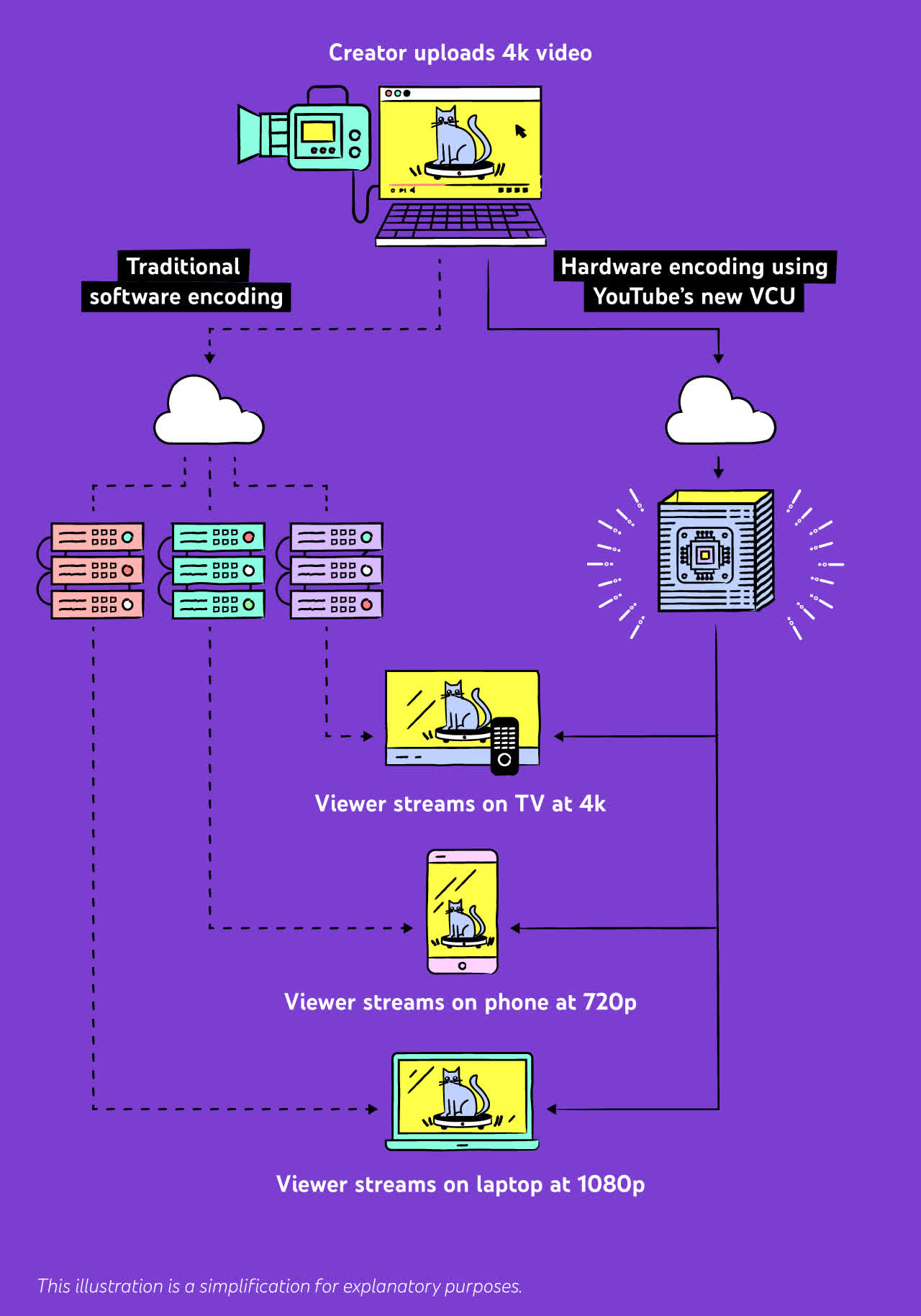

The big picture: We consume so much video on YouTube, yet we seldom stop to think of the magic happening behind the scenes that makes it easy for us to watch videos and livestreams on a variety of devices. For the past six years, Google has been busy improving the process of taking a video uploaded to YouTube and turning it into 10 to 15 variations with different resolutions and compression formats.

Last month, Google said it was "doubling down" on custom silicon in an effort to "boost performance and efficiency now that Moore’s Law no longer provides rapid improvements for everyone." To that end, the company hired former Intel head of Core & Client Development Group Uri Frank to lead a server chip design team in Israel.

Uri is bringing 25 years of experience in custom CPU design and delivery, which will prove useful in a number of Google projects, including a custom SoC for its Pixel smartphones. The company says it's interested in deeper integration between CPU, networking, storage, memory, and various accelerators for video transcoding, machine learning, encryption, compression, secure data summarization, and remote communication.

The end goal is to gain higher performance in various workloads with less power consumption and lower total cost of ownership, something that's even more apparent at the data center level than in, say, a consumer device. For instance, Google in 2018 started employing "Argos" Video Coding Units (VCUs) to accelerate the transcoding of videos uploaded to YouTube, and we now know more about these new systems.

Transcoding is no small task for the company, as casual users and creators upload more than 500 hours of video content per minute, and people are spending more time watching videos and livestreams with every passing year. Traditionally, YouTube's infrastructure relied on CPU-based transcoding to compress videos so that the smallest possible amount of data was sent to your device at the highest possible quality, an approach that was costly, slow, and inefficient.
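
To put those numbers in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the figures quoted in this article (500 hours uploaded per minute, 10 to 15 variations per upload); the function names are made up for illustration, and the real pipeline almost certainly does not produce every variation for every upload.

```python
# Back-of-the-envelope ingest arithmetic using figures quoted in the article.
# Function names are illustrative, not anything Google has published.

UPLOAD_HOURS_PER_MINUTE = 500           # "more than 500 hours ... per minute"
VARIANTS_MIN, VARIANTS_MAX = 10, 15     # "10 to 15 variations" per upload


def realtime_multiple(upload_hours_per_minute: int) -> int:
    """Minutes of video arriving per minute of wall-clock time."""
    return upload_hours_per_minute * 60


def output_hours_per_minute(upload_hours_per_minute: int, variants: int) -> int:
    """Hours of encoded output per minute if every upload got `variants` encodes."""
    return upload_hours_per_minute * variants


ingest_multiple = realtime_multiple(UPLOAD_HOURS_PER_MINUTE)
low = output_hours_per_minute(UPLOAD_HOURS_PER_MINUTE, VARIANTS_MIN)
high = output_hours_per_minute(UPLOAD_HOURS_PER_MINUTE, VARIANTS_MAX)

print(f"Uploads arrive at about {ingest_multiple:,}x real time")
print(f"Encoded output: roughly {low:,} to {high:,} hours per minute")
```

In other words, video arrives about 30,000 times faster than it plays back, before counting the multiple encodes each upload may need.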

By comparison, the introduction of Argos VCUs brought a performance boost of between 20 and 33 times compared to Google's previous solution, which relied on well-tuned software transcoding on traditional servers. The company's VCU is a full-length PCIe card that hosts two Argos chips cooled by a rather large aluminum heatsink, and seems to need only one 8-pin power connector for additional power, similar to what we typically see on mid-range graphics cards.

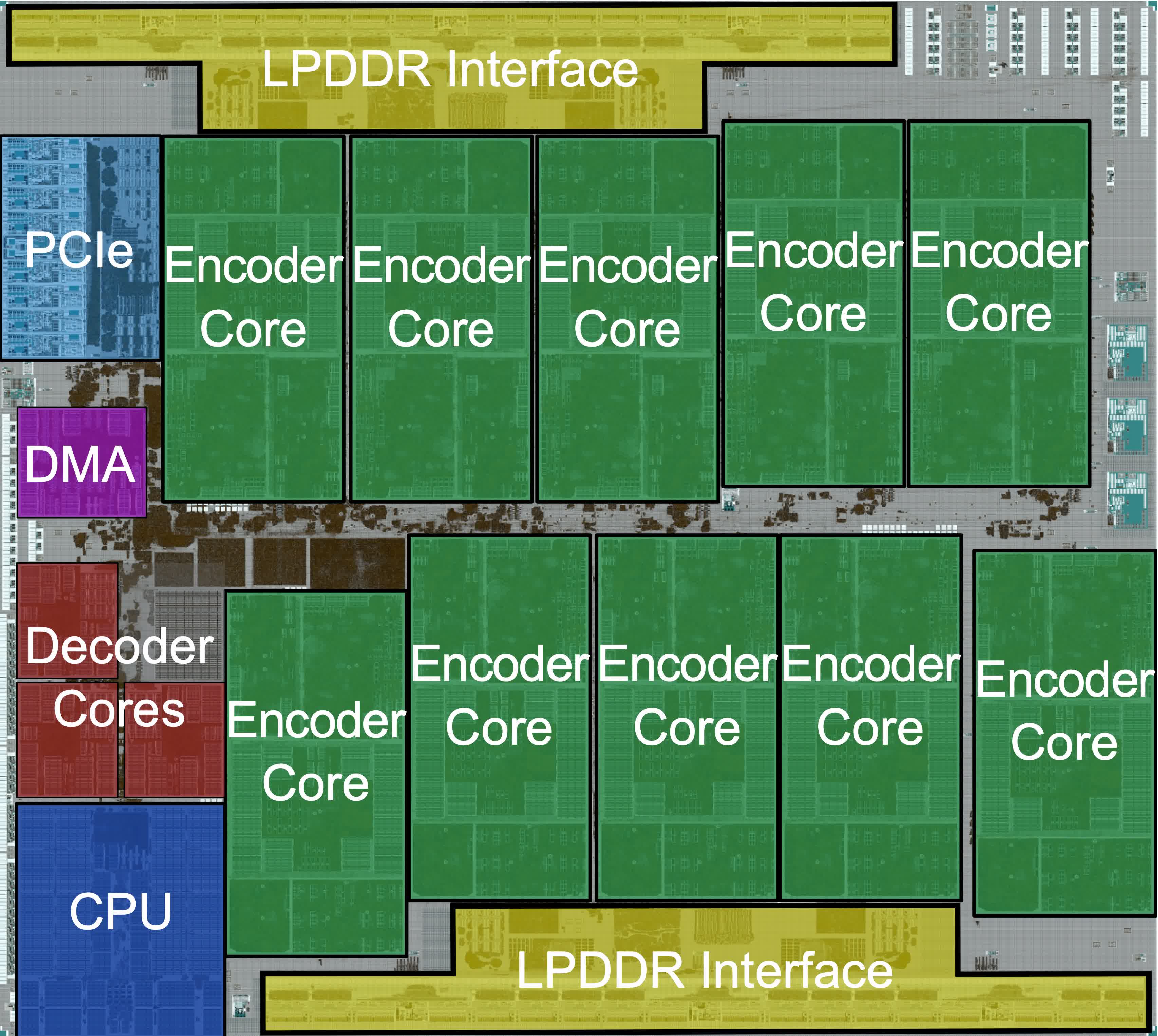

Image: Google's Argos ASIC

Each Argos chip has 10 cores, which Google says are "off-the-shelf IP cores" that connect to six external LPDDR4-3200 RAM chips over four channels, for a total capacity of 8 GB dedicated to each Argos ASIC. Each core is capable of encoding a 2160p source in real time at up to 60 frames per second using three reference frames. Still, as powerful as the Argos VCUs are, Google is only deploying them in a relatively small cluster of dedicated machines inside YouTube's existing server system, which is based around Intel Skylake CPUs and Nvidia T4 Tensor Core GPUs.
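
As a rough illustration of what those per-core figures add up to, the Python sketch below multiplies them out to a peak pixel rate per card. It assumes "2160p" means 3840x2160 and that all 10 cores on both chips can sustain real-time encoding at once, neither of which is confirmed here, so treat the result as an upper bound rather than a measured figure.

```python
# Peak encode throughput implied by the article's per-core figures.
# Assumptions (not stated by Google): 2160p = 3840x2160, and every core
# can sustain real-time 60 fps encoding simultaneously.

WIDTH, HEIGHT, FPS = 3840, 2160, 60
CORES_PER_CHIP = 10     # "Each Argos chip has 10 cores"
CHIPS_PER_CARD = 2      # the PCIe card "hosts two Argos chips"


def pixels_per_second(width: int, height: int, fps: int) -> int:
    """Raw pixel rate of a single video stream."""
    return width * height * fps


per_core = pixels_per_second(WIDTH, HEIGHT, FPS)
per_chip = per_core * CORES_PER_CHIP
per_card = per_chip * CHIPS_PER_CARD

print(f"Per core: {per_core / 1e9:.2f} gigapixels/s")
print(f"Per chip: {per_chip / 1e9:.2f} gigapixels/s")
print(f"Per card: {per_card / 1e9:.2f} gigapixels/s "
      f"({CORES_PER_CHIP * CHIPS_PER_CARD} concurrent 2160p60 streams)")
```

Under these assumptions a single card tops out at about 20 simultaneous 2160p60 encodes, or just under 10 gigapixels per second.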

Image: H264 (left) vs VP9 (right)

Google never wastes an occasion to extol the virtues of its VP9 codec, and how it compares favorably in terms of video quality against H264. This time is no different, but the company did admit that VP9 takes five times more hardware resources to encode, which is why it has spent the last six years improving YouTube's infrastructure with custom transcoding accelerators.

A second-generation Argos VCU is already in the works, with support for the AV1 codec, which offers up to 30 percent more compression than VP9 with an imperceptible quality loss. AV1 requires even more computing power to encode (as well as to decode for playback), but it enjoys support from several companies including Amazon, Netflix, Microsoft, Mozilla, Qualcomm, MediaTek, and even Apple, which has been relatively slow with codec adoption and only recently added support for VP9 on macOS Big Sur 11.3.

Google hopes that providing more AV1 encodes on YouTube will encourage hardware manufacturers to include hardware-accelerated decoding in new chips, potentially solving the chicken and egg problem in the case of this codec.

https://www.techspot.com/news/89468-google-building-custom-silicon-youtube-video-transcoding.html