At the start of Nvidia’s annual GPU Technology Conference, CEO Jen-Hsun Huang used his keynote, which ran for more than two hours, to announce the company’s first GPU based on its seventh-generation Volta architecture: the Tesla V100.

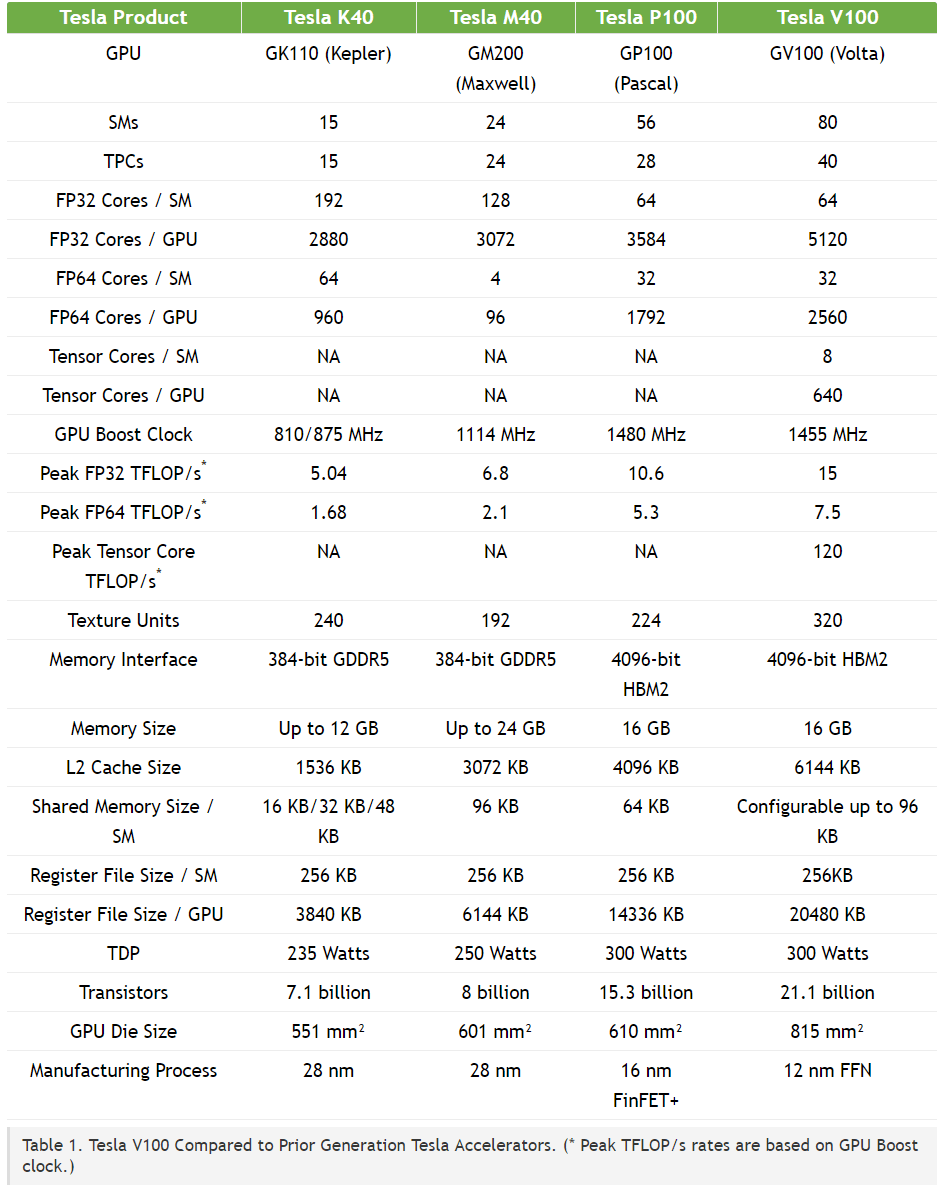

Built on a 12-nanometer FFN process, the GPU is a big leap forward from the Pascal-based Tesla P100. It features 21 billion transistors and 5120 CUDA cores with a boost clock of 1455MHz, along with 16GB of HBM2 memory (from Samsung) on a 4096-bit interface delivering 900GB/s of bandwidth, all packed into an 815 square millimeter die.
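The memory figures above are linked by simple arithmetic: peak bandwidth equals bus width times per-pin data rate, divided by eight bits per byte. The sketch below works backward from the article's 4096-bit and 900GB/s numbers to the per-pin data rate they imply. The function name is illustrative only, and it assumes decimal gigabytes (1GB = 10^9 bytes).

```python
def implied_pin_rate_gbps(bandwidth_gb_s: float, bus_width_bits: int) -> float:
    """Per-pin data rate (Gbit/s) implied by a peak bandwidth and a bus width.

    Hypothetical helper for illustration; assumes decimal units (1 GB = 1e9 bytes).
    """
    # GB/s * 8 bits per byte = Gbit/s across the whole bus; divide by pin count.
    return bandwidth_gb_s * 8 / bus_width_bits


def peak_bandwidth_gb_s(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Inverse calculation: peak bandwidth (GB/s) from bus width and pin rate."""
    return bus_width_bits * pin_rate_gbps / 8


rate = implied_pin_rate_gbps(900, 4096)
print(f"{rate:.3f} Gbit/s per pin")  # 1.758 Gbit/s per pin
```

In other words, each of the 4096 data pins would need to move roughly 1.76 gigabits per second to reach the quoted 900GB/s.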

Huang claims the V100, which cost $3 billion in R&D, is the most complex (and largest) chip that can be built with current semiconductor physics. It features a redesigned streaming multiprocessor (SM) architecture that improves FP32 and FP64 performance and is 50 percent more energy efficient than Pascal. It also adds new Tensor Cores designed for deep learning applications.

The V100 features an updated NVLink 2.0 interface that offers up to 300GB/s of bandwidth, which Huang said is 10 times faster than standard PCIe connections.
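Huang's "10 times" comparison can be roughly checked with back-of-the-envelope arithmetic. The sketch below assumes that "standard PCIe" means a PCIe 3.0 x16 slot (8 GT/s per lane with 128b/130b encoding) and that the 300GB/s NVLink figure is total bandwidth counting both directions. Neither assumption is stated in the article.

```python
# Assumption: "standard PCIe" means PCIe 3.0 x16; not specified in the article.
PCIE3_GT_PER_S = 8.0       # raw transfer rate per lane, GT/s
PCIE3_ENCODING = 128 / 130  # 128b/130b line-code efficiency
PCIE_LANES = 16

# Assumption: the article's 300GB/s counts traffic in both directions.
NVLINK2_TOTAL_GB_S = 300.0


def pcie3_bidirectional_gb_s(lanes: int) -> float:
    """Usable PCIe 3.0 bandwidth in GB/s, summed over both directions."""
    per_direction = PCIE3_GT_PER_S * PCIE3_ENCODING * lanes / 8  # bits -> bytes
    return 2 * per_direction


pcie = pcie3_bidirectional_gb_s(PCIE_LANES)
ratio = NVLINK2_TOTAL_GB_S / pcie
print(f"PCIe 3.0 x16: {pcie:.1f} GB/s, NVLink 2.0 advantage: {ratio:.1f}x")
# PCIe 3.0 x16: 31.5 GB/s, NVLink 2.0 advantage: 9.5x
```

Under these assumptions the ratio comes out a little under 10, which is consistent with the rounded claim.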

The V100’s peak performance figures are as follows:

- 7.5 TFLOP/s of double precision floating-point (FP64) performance;

- 15 TFLOP/s of single precision (FP32) performance;

- 120 Tensor TFLOP/s of mixed-precision matrix-multiply-and-accumulate.
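These peak numbers follow from the formula units × operations per clock × clock speed. The sketch below reproduces them from the article's 5120 CUDA cores and 1455MHz boost clock. It also relies on details the article does not give, so treat them as assumptions: a fused multiply-add (FMA) counts as two floating-point operations, there are 2560 FP64 units (half the FP32 core count), and there are 640 Tensor Cores that each perform 64 FMAs per clock (one 4×4×4 matrix operation).

```python
BOOST_CLOCK_GHZ = 1.455    # from the article (1455MHz)
FP32_CORES = 5120          # "CUDA cores", from the article
FP64_UNITS = 2560          # assumption: half as many FP64 units as FP32 cores
TENSOR_CORES = 640         # assumption: not stated in the article
FMA_PER_TENSOR_CORE = 64   # assumption: one 4x4x4 matrix FMA per clock
FLOPS_PER_FMA = 2          # an FMA counts as a multiply plus an add


def peak_tflops(units: int, fma_per_unit_per_clock: int, clock_ghz: float) -> float:
    """Peak throughput in TFLOP/s, assuming every unit issues every clock."""
    gflops = units * fma_per_unit_per_clock * FLOPS_PER_FMA * clock_ghz
    return gflops / 1000  # GFLOP/s -> TFLOP/s


fp64 = peak_tflops(FP64_UNITS, 1, BOOST_CLOCK_GHZ)
fp32 = peak_tflops(FP32_CORES, 1, BOOST_CLOCK_GHZ)
tensor = peak_tflops(TENSOR_CORES, FMA_PER_TENSOR_CORE, BOOST_CLOCK_GHZ)
print(f"FP64 {fp64:.2f}, FP32 {fp32:.2f}, Tensor {tensor:.1f} TFLOP/s")
# FP64 7.45, FP32 14.90, Tensor 119.2 TFLOP/s
```

Rounded, these match the 7.5, 15 and 120 TFLOP/s figures quoted above. They are theoretical ceilings, not measured throughput.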

Nvidia also revealed that eight V100 chips will power an updated version of its DGX-1 supercomputer. Huang said the machine has the power to replace 400 servers, which explains its $149,000 price tag. The DGX-1V will arrive in Q3. Those on a tighter budget may want to consider Nvidia’s "personal AI supercomputer", the DGX Station, which contains four Tesla V100 GPUs and costs $69,000.
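Combining the per-GPU Tensor figure with the system prices yields a rough cost comparison. The sketch below uses only numbers from the article. It naively assumes that aggregate peak throughput is simply the GPU count times 120 Tensor TFLOP/s, whereas real workloads rarely scale that cleanly. The `System` class is a hypothetical helper for illustration.

```python
from dataclasses import dataclass

TENSOR_TFLOPS_PER_V100 = 120  # peak figure quoted in the article


@dataclass
class System:
    """Hypothetical container for a system's GPU count and list price."""
    name: str
    gpus: int
    price_usd: int

    def peak_tensor_tflops(self) -> int:
        # Naive aggregate: assumes every GPU runs at its quoted peak at once.
        return self.gpus * TENSOR_TFLOPS_PER_V100

    def usd_per_tensor_tflop(self) -> float:
        return self.price_usd / self.peak_tensor_tflops()


systems = [System("DGX-1V", 8, 149_000), System("DGX Station", 4, 69_000)]
for s in systems:
    print(f"{s.name}: {s.peak_tensor_tflops()} Tensor TFLOP/s, "
          f"${s.usd_per_tensor_tflop():.2f} per Tensor TFLOP/s")
# DGX-1V: 960 Tensor TFLOP/s, $155.21 per Tensor TFLOP/s
# DGX Station: 480 Tensor TFLOP/s, $143.75 per Tensor TFLOP/s
```

On this crude peak-only measure, the two systems cost about the same per unit of Tensor throughput. The DGX-1V's premium buys twice the GPUs in one box.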

As noted by PCWorld, the V100’s reveal doesn’t necessarily mean Volta-based GeForce cards will arrive in the next couple of months, but it does give an idea of what we can expect to see in future consumer GPUs.

You can find out more about the Tesla V100 here. Check out the table below to see how it stacks up against the previous five years of Tesla accelerators.

https://www.techspot.com/news/69275-nvidia-unveils-first-volta-gpu-tesla-v100.html