In the technology-based world we live in today, it's easy to overlook the fact that computers have doubled their performance roughly every one and a half years since the 1970s. That pace is usually credited to Moore's law, which states that the number of transistors that can be placed on an integrated circuit doubles about every two years. What many people don't pay attention to, however, is the electrical efficiency of computing and how it has changed over the years.

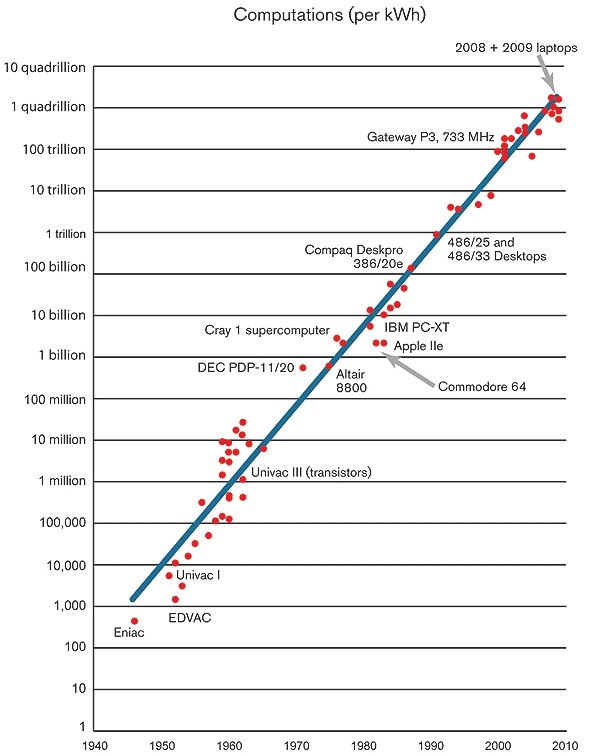

Electrical efficiency is measured by the number of computations that can be performed per kilowatt-hour of electricity consumed. As performance doubles every one and a half years, so too does electrical efficiency - it's one of the key reasons we are able to have notebook computers and, more recently, extremely powerful smartphones. An investigative piece by Technology Review states that this trend of improving efficiency will continue at the same rate for years to come.
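To make the doubling claim concrete, here is a minimal sketch of the arithmetic. It assumes a clean 1.5-year doubling period, as stated above; the function names `efficiency_gain` and `energy_per_task` are made up for illustration and don't come from the Technology Review piece.

```python
def efficiency_gain(years: float, doubling_period: float = 1.5) -> float:
    """Factor by which computations per kWh grow after `years`,
    assuming efficiency doubles once every `doubling_period` years."""
    return 2.0 ** (years / doubling_period)


def energy_per_task(initial_kwh: float, years: float,
                    doubling_period: float = 1.5) -> float:
    """Energy needed for the same fixed workload after `years`,
    given that it cost `initial_kwh` at the start."""
    return initial_kwh / efficiency_gain(years, doubling_period)


# Two doublings in three years: four times the work per kilowatt-hour.
print(efficiency_gain(3.0))

# Ten doublings in fifteen years: the same task needs 1/1024 of the energy.
print(energy_per_task(1.0, 15.0))
```

Put another way, a task that drains a battery in a given year would, under this assumption, use about a thousandth of that energy fifteen years later, which is why the trend matters so much for mobile devices.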

One good example of this trend in action is a set of battery-free wireless sensors that transmit data from a weather station to a display every five seconds. Developed by Joshua R. Smith, these sensors capture stray energy from television and radio signals to power the device, which requires only 50 microwatts on average.

A 1985 study by physicist Richard Feynman showed that computing efficiency could be improved by a factor of at least a hundred billion. Data from Technology Review shows that between 1985 and 2009 efficiency improved by a factor of only 40,000, which means there's still a massive amount of potential to tap into.
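For a rough sense of how much room is left, the figures above can be plugged into a quick back-of-the-envelope calculation. This is only a sketch: it treats Feynman's "at least a hundred billion" as exactly 10^11, and it assumes the 1.5-year doubling pace simply continues, which nothing guarantees. The constant names are illustrative.

```python
import math

FEYNMAN_HEADROOM = 1e11        # possible improvement estimated in 1985 (lower bound)
ACHIEVED_1985_2009 = 4e4       # improvement actually seen between 1985 and 2009
DOUBLING_PERIOD_YEARS = 1.5    # assumed pace, taken from the article

# Doubling period implied by a 40,000x gain over 24 years (sanity check).
implied_period = (2009 - 1985) / math.log2(ACHIEVED_1985_2009)

# Improvement still available after 2009, and how long it would take to use up.
remaining_factor = FEYNMAN_HEADROOM / ACHIEVED_1985_2009
remaining_doublings = math.log2(remaining_factor)
remaining_years = remaining_doublings * DOUBLING_PERIOD_YEARS

print(f"implied doubling period: {implied_period:.2f} years")
print(f"remaining headroom:      {remaining_factor:.1e}x")
print(f"doublings left:          {remaining_doublings:.1f}")
print(f"years at current pace:   {remaining_years:.0f}")
```

Under these assumptions the 40,000-fold gain works out to a doubling roughly every 1.6 years, close to the one-and-a-half-year figure, and the remaining factor of about 2.5 million corresponds to a little over 21 further doublings, or around three more decades at the same pace.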