Robots are already replacing some human jobs, but in other scenarios, robots will be expected to work alongside their human counterparts. On cruise ships, for example, robots are being used to serve mixed drinks, while in Japan, humanoid robots stand behind the counter of a hotel to help guests check in.

For robots that are meant to connect with the public or work alongside real-life co-workers, how they handle interactions with humans (and vice versa) is something that researchers, like those at the University of Bristol and University College London, are actively trying to hash out.

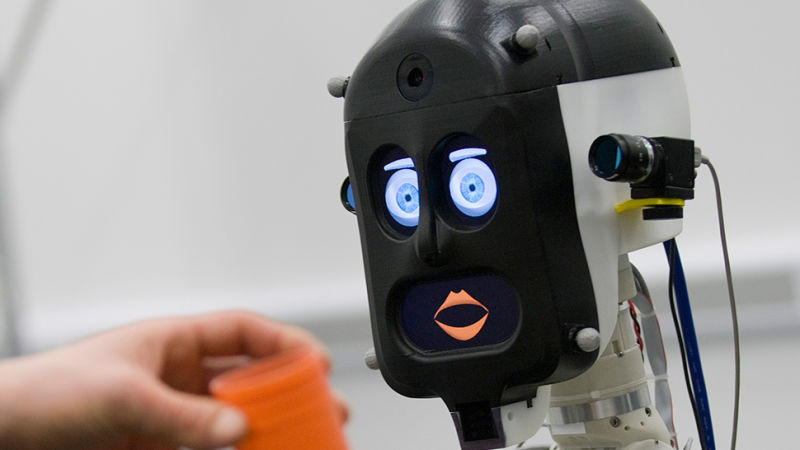

As part of a recent study, researchers from the two schools asked participants to take part in a mock cooking scenario (making an omelet) with one of three versions of their BERT2 robot: one that didn't communicate and didn't make any mistakes; one that didn't communicate but was programmed to make a mistake and then try to rectify it; and one that could communicate and show expressions (via a display serving as its face) while also being programmed to make a mistake and try to correct it.

Interestingly enough, the majority of participants preferred the third robot, the one that talked but also made mistakes, even though it was 50 percent slower than the mistake-free robot.

At the end of the test, the talkative robot would ask the participant whether it had gotten the job as a kitchen assistant. Having earlier seen the robot drop an egg and display human-like emotion, some participants were reluctant to answer because they didn't want to hurt the robot's feelings. One person even lied outright to the robot, while another complained of emotional blackmail.

The research will be presented at the IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN) later this week. A pre-print copy of the paper is also available.