From movies such as Blade Runner to shows like CSI, zooming in on a photo or video and enhancing a tiny, pixelated image to near-clarity is a trope that's been around for years. While it remains a fictional piece of technology, Google has come up with a system that gets surprisingly close to what we've seen on screen.

The lack of detail in images containing a small number of pixels makes it nearly impossible to create a clearer picture than what's already there. But the Google Brain team has managed to partly get around this problem by using neural networks that fill in the blanks.
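To see why a handful of pixels can't be "enhanced" by simple math, here is a minimal Python sketch. It assumes a 32x32 grayscale image and plain block averaging as the shrinking step; both are illustrative choices, not details taken from Google's system. Two different high-res images end up with exactly the same 8x8 version, so the small image alone can't tell you which one it came from.

```python
import numpy as np

def downsample(img, factor=4):
    """Average-pool a square grayscale image by `factor` in each dimension.

    Block averaging is an assumed stand-in for whatever shrinking step
    produced the 8x8 source images.
    """
    h, w = img.shape
    return img.reshape(h // factor, factor, w // factor, factor).mean(axis=(1, 3))

rng = np.random.default_rng(0)

# A made-up 32x32 "high-res" image (the size is an assumption for illustration).
high_a = rng.random((32, 32))

# Build a second image that differs only by detail that averages to zero
# inside every 4x4 block. Values may leave [0, 1]; that is fine for the demo.
noise = rng.normal(size=(32, 32))
noise -= np.kron(downsample(noise), np.ones((4, 4)))  # remove each block's mean
high_b = high_a + 0.1 * noise

low_a = downsample(high_a)
low_b = downsample(high_b)

print(low_a.shape)                   # size of the shrunken image
print(np.allclose(low_a, low_b))     # do the two small images match?
print(np.allclose(high_a, high_b))   # do the two large images match?
```

Because many different originals collapse to the same 64 pixels, any system that produces a sharper picture has to guess the missing detail from what it has learned elsewhere.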

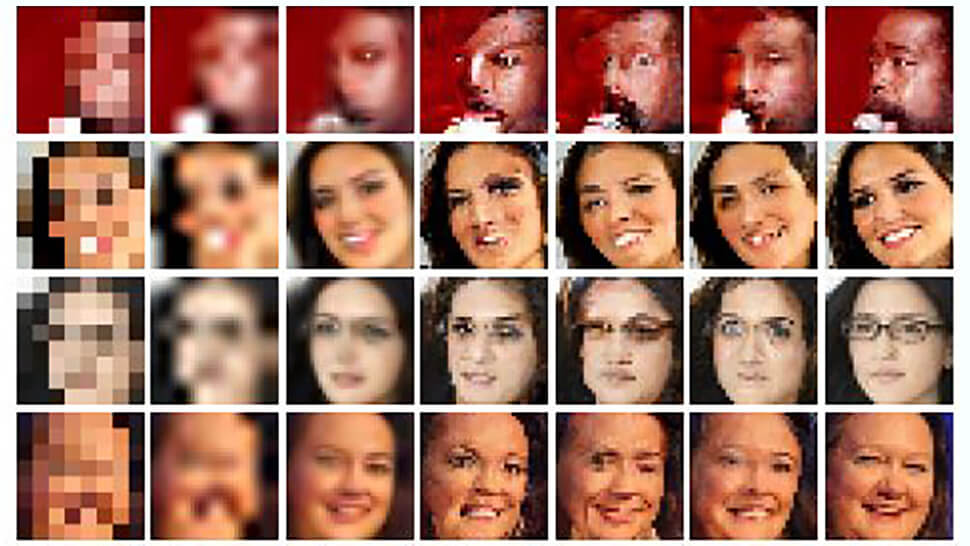

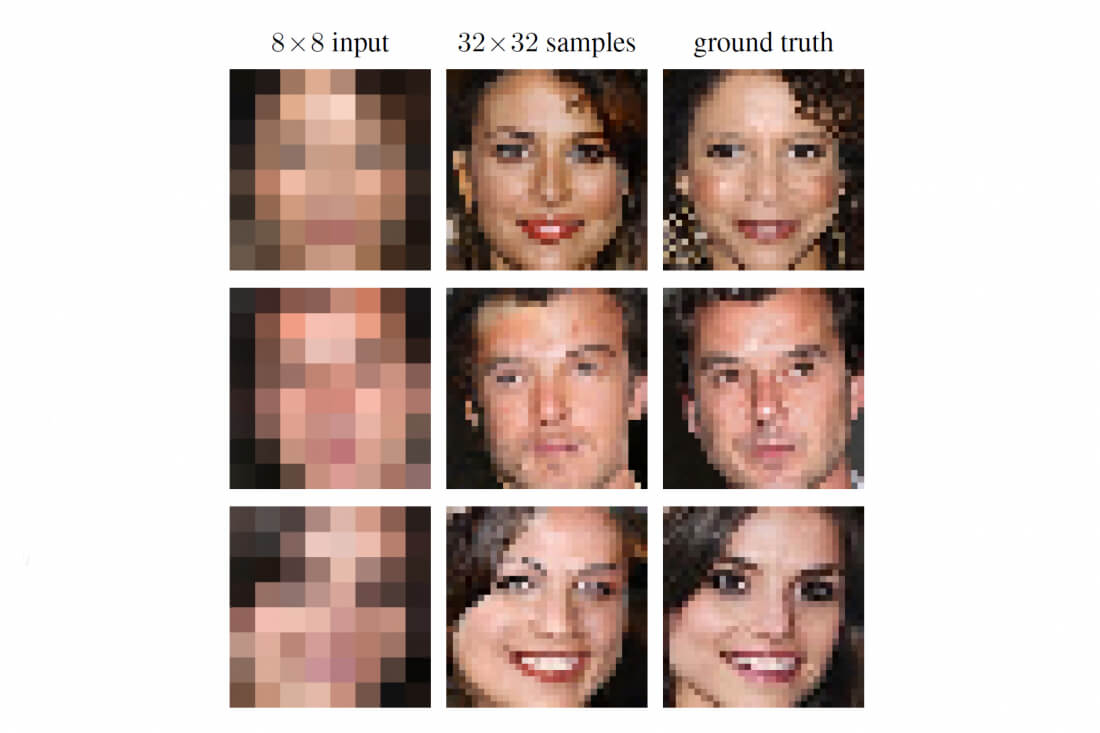

The images above are arranged in three columns. The left column holds the 8x8 source images, which are unidentifiable. The center column shows what Google's system was able to come up with from those 64 source pixels. While the accuracy of the results varies, you can see how close they are to the real images, which are in the right column.

Ryan Dahl, Mohammad Norouzi, and Jonathon Shlens came up with this super-resolution technique by combining two neural networks. The first, the "condition" network, attempts to map the 8x8 source against similar high-res photos to get an idea of what the image should look like.

It's then the turn of the "prior" network, which adds realistic details to create the final image. It does this by checking a large number of similar high-res samples, such as faces, then adding new pixels to the source material using what it's learned from that category of image.
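The article doesn't spell out how the two networks' outputs are brought together. One plausible approach, assumed here purely for illustration, is to have each network score every possible value of a pixel, add the two sets of scores, and turn the sum into probabilities with a softmax. In the sketch below the scores are random placeholders, and the names `condition_logits`, `prior_logits`, and `combine` are hypothetical.

```python
import numpy as np

def softmax(logits):
    """Turn raw scores into probabilities along the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)  # for numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

def combine(condition_logits, prior_logits):
    """Assumed mixing rule: add the two networks' scores, then normalize."""
    return softmax(condition_logits + prior_logits)

rng = np.random.default_rng(0)

# Placeholder scores for the 256 possible intensities of one pixel.
# Real networks would compute these from the 8x8 source (condition)
# and from the pixels generated so far (prior).
condition_logits = rng.normal(size=256)
prior_logits = rng.normal(size=256)

probs = combine(condition_logits, prior_logits)
pixel_value = int(probs.argmax())  # pick the most likely intensity

print(probs.shape, pixel_value)
```

Adding scores means a pixel value only ends up likely when both networks favor it: it has to fit the low-res source and also look like a realistic detail.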

When the results of the two neural networks are combined, you're left with a pretty amazing final result, especially considering the source material the system has to work with. It's still not accurate enough to be used as a way of identifying suspects, and, as noted by TNW, the images can't serve as evidence in criminal cases because they're computed approximations. But the system could still one day assist police with their work.