Turning an audio clip into a realistic video of a person speaking those words usually doesn't turn out well, but researchers at the University of Washington have developed a system that produces some amazing results.

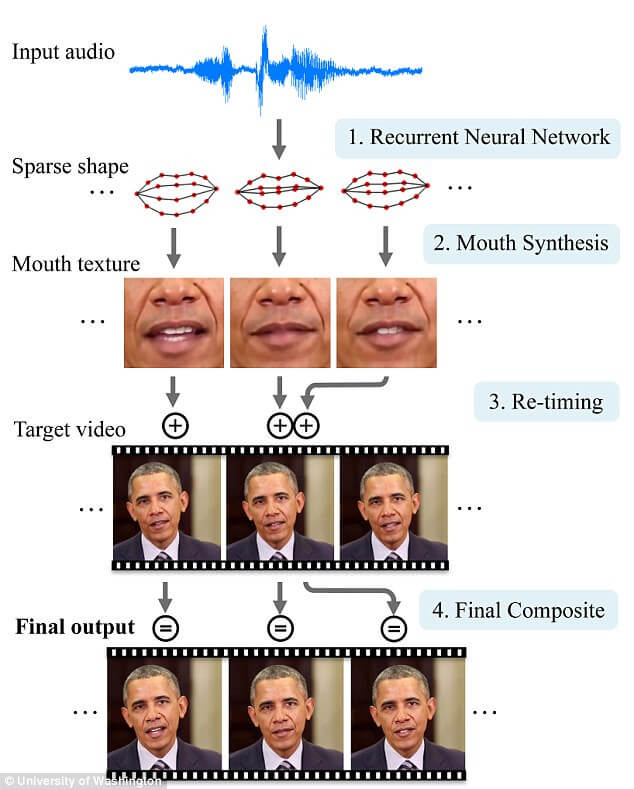

The team demonstrated their work by generating a surprisingly lifelike video of Barack Obama. They trained a neural network by feeding it 14 hours of the former President's weekly address videos. It was then able to create mouth shapes that synced with the audio clips of Obama talking about different topics. Finally, these were superimposed and blended over a clip of his face from another video (not the same one as the audio source).
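The article doesn't say how the final superimpose-and-blend step is carried out. As a rough illustration only, the sketch below uses plain alpha blending, a common compositing technique that may differ from the researchers' actual method. The function name `blend_patch`, the toy grayscale frame, and the mask values are all hypothetical.

```python
def blend_patch(frame, patch, mask, top, left):
    """Alpha-blend `patch` into a copy of `frame` at (top, left).

    frame, patch: 2-D lists of grayscale values (floats).
    mask: 2-D list, same shape as patch, with weights in [0, 1];
          1.0 keeps the patch pixel, 0.0 keeps the frame pixel.
    This is an assumed stand-in for the blending step, not the paper's method.
    """
    out = [row[:] for row in frame]  # copy so the input frame is untouched
    for r, (patch_row, mask_row) in enumerate(zip(patch, mask)):
        for c, (p, a) in enumerate(zip(patch_row, mask_row)):
            background = out[top + r][left + c]
            out[top + r][left + c] = a * p + (1.0 - a) * background
    return out


# Toy 4x4 "face" frame and a 2x2 synthesized "mouth" patch (made-up values).
frame = [[10.0] * 4 for _ in range(4)]
patch = [[50.0, 50.0], [50.0, 50.0]]
mask = [[1.0, 0.5], [0.5, 0.0]]  # weights fall off toward one corner

blended = blend_patch(frame, patch, mask, top=1, left=1)
```

A mask that fades toward zero at its edges is what hides the seam: pixels near the border of the mouth region stay close to the target video, which avoids a visible hard edge.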

The process is almost entirely automated, and while the end result isn't quite perfect, you can see in the video below that it's not far off.

"These type of results have never been shown before," said Dr Ira Kemelmacher-Shlizerman, an assistant professor at the UW's Paul G. Allen School of Computer Science & Engineering. "Realistic audio-to-video conversion has practical applications like improving video conferencing for meetings, as well as futuristic ones such as being able to hold a conversation with a historical figure in virtual reality by creating visuals just from audio."

Previous attempts to create realistic video from audio have run into the "uncanny valley" problem, a term that describes manufactured human likenesses that can't quite pass as a real person, making them appear a bit creepy.

"People are particularly sensitive to any areas of your mouth that don't look realistic," said lead author Dr Supasorn Suwajanakorn. "If you don't render teeth right or the chin moves at the wrong time, people can spot it right away, and it's going to look fake. So you have to render the mouth region perfectly."

While the process should make it easier to animate characters that appear in movies, TV shows, and game cutscenes, it also has the potential to be used for nefarious purposes, such as creating clips of political figures giving fake statements. Right now, though, such videos aren't easy to produce because the system requires many hours of source material, and the same system could be used to identify fake videos.