Google has long been trying to address the problem of controversial YouTube content such as conspiracy theory videos, but it hasn't enjoyed much success. Its latest tactic isn't designed to remove or stem the spread of these clips, but will instead add info and links to Wikipedia articles on the subjects, thereby providing users with alternative (as in generally accepted) viewpoints.

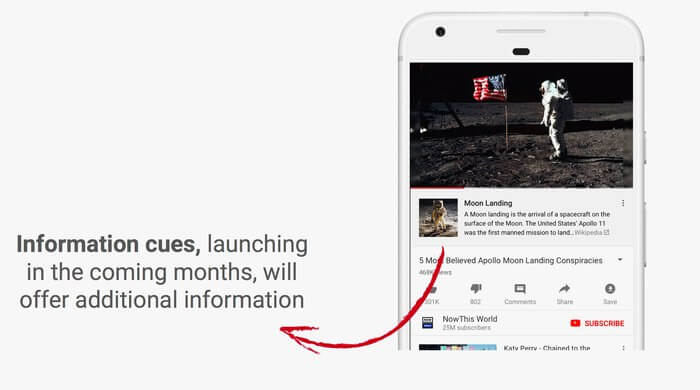

Speaking at a panel at the South by Southwest Interactive festival in Austin, YouTube CEO Susan Wojcicki said these "information cues" will appear in the form of small cards beneath YouTube videos.

Wojcicki added that the feature wouldn't be limited to conspiracy videos; it will also appear on videos about topics and events that have inspired significant debate. One of the examples used was a video about the moon landings. A short extract from the Wikipedia description of the event appears directly below the clip, with a link to the Wikipedia page included. Wojcicki said information cues would appear alongside both documentaries and conspiracy videos on these subjects.

"People can still watch the videos, but then they have access to additional information," she said, according to Wired.

Expect to see the feature appear on other popular internet conspiracy theory videos, including those that look at chemtrails, vaccinations, 9/11, and flat earth claims.

"If there is an important news event, we want to be delivering the right information," Wojcicki said. She did add that "we are not a news organization," even though 18 percent of those who use YouTube rely on the platform for their news.

YouTube faced criticism for promoting content in its search results that claimed both the Las Vegas and Florida shootings were hoaxes. In the case of the latter tragedy, a clip alleging that one of the students was a "crisis actor" became the site's top trending video before being removed.

YouTube has tried adding more human moderators, removing flagged videos, and de-monetizing the channels that create these controversial clips, but its algorithm continues to surface them as it looks for videos that already have a high number of views.

Although Wikipedia entries are written by volunteers and aren't 100 percent reliable, their inclusion should help balance the conspiracy videos' arguments. But it's unclear how the information cues will work for breaking news events that may not have a Wikipedia page.