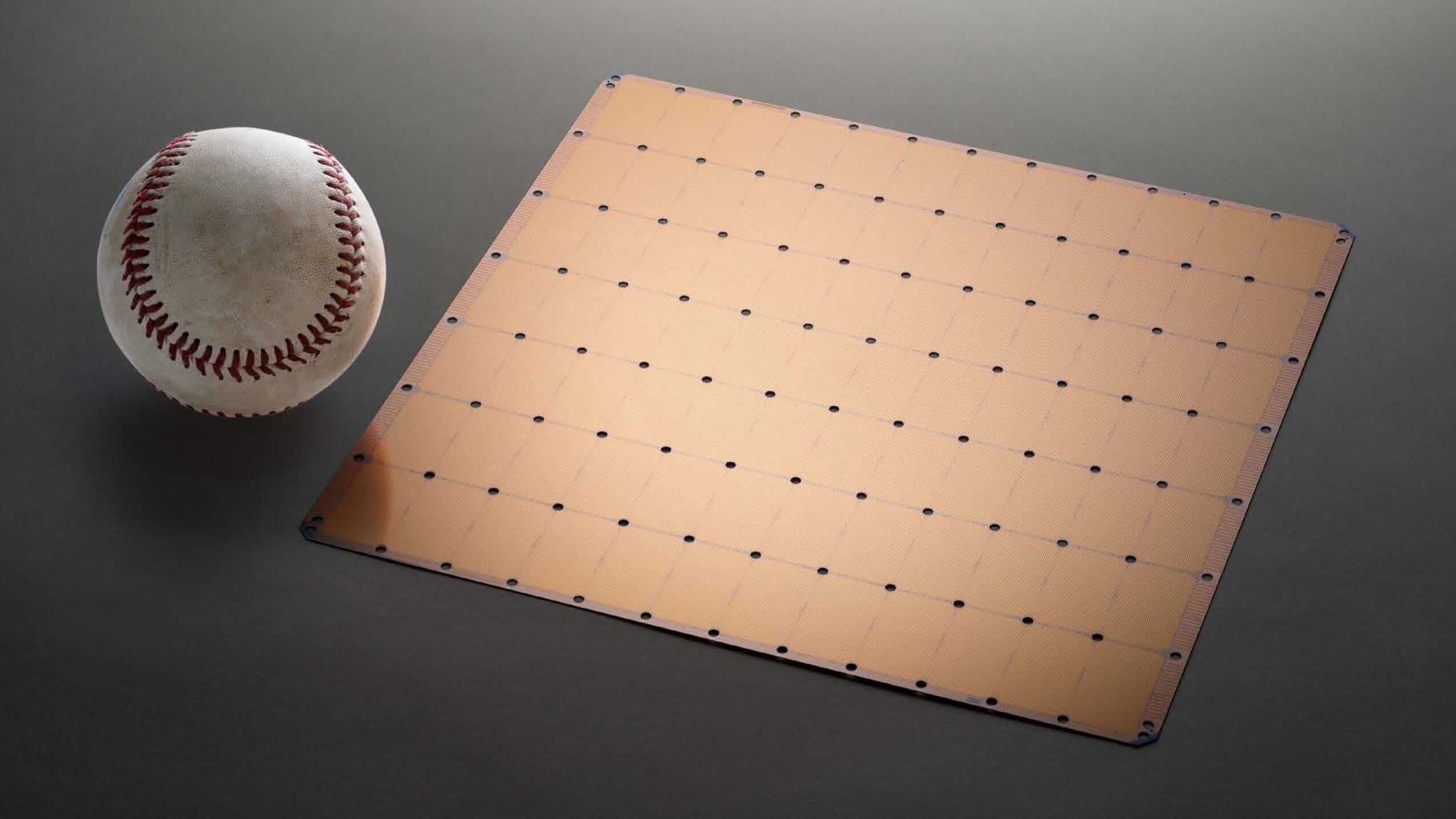

What just happened? Over the summer, Cerebras Systems unveiled the Cerebras Wafer Scale Engine, an absolutely massive chip with 1.2 trillion transistors on board. It is the largest chip in the world, and it now has a home in the company's latest system designed to accelerate deep learning.

Unveiled at the Supercomputing 2019 conference this week, the new CS-1 measures roughly 26 inches tall, meaning three can fit in a single rack. It consumes 20kW of power: 15kW is supplied to the enormous chip, 4kW is dedicated to the cooling subsystem and the remaining 1kW is lost to power supply inefficiencies.
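For readers who want to sanity-check the power figures above, here is a minimal Python sketch. The numbers come straight from the article; the `summarize` helper and the dictionary keys are made up for illustration, and the per-rack total simply assumes three fully loaded systems share one rack, which Cerebras has not confirmed as a supported configuration.

```python
# Power figures as reported in the article, in kilowatts.
TOTAL_KW = 20
budget_kw = {"chip": 15, "cooling": 4, "psu_loss": 1}


def summarize(total_kw, parts_kw):
    """Return each part's share of the total, after checking the parts add up."""
    if sum(parts_kw.values()) != total_kw:
        raise ValueError("power breakdown does not add up to the stated total")
    return {name: kw / total_kw for name, kw in parts_kw.items()}


shares = summarize(TOTAL_KW, budget_kw)
for name, share in shares.items():
    print(f"{name}: {share:.0%}")

# Assumption: three systems per rack, each drawing the full 20kW.
SYSTEMS_PER_RACK = 3
rack_kw = SYSTEMS_PER_RACK * TOTAL_KW
print(f"per-rack draw: {rack_kw}kW")
```

By this reckoning, three quarters of the power goes to the chip itself, a fifth to cooling and the rest to conversion losses, with a fully populated rack drawing 60kW under the stated assumption.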

The Cerebras Wafer Scale Engine powering the CS-1 is 56 times larger than the biggest GPU ever made, has 78 times more cores and 3,000 times more on-chip memory, and affords 33,000 times as much bandwidth. In other words, it is ridiculously fast. Plus, it works with open-source ML frameworks such as PyTorch and TensorFlow for increased flexibility.

Details on hardware specifics like clock speeds will be shared in the near future, the company said.

The CS-1 is also incredibly expensive. Specifics haven't yet been revealed, but a spokesperson told Tom's Hardware that it'll cost "several million." Still, that isn't deterring everyone: Argonne National Laboratory already has one, which it is using for cancer research and basic science experiments.