Why it matters: The discovery of SARS-CoV-2 has sparked many conspiracy theories. The 5G theory, in particular, has become increasingly popular, leading to some 5G towers being burned. Facebook is attempting to reduce the impact of Coronavirus fake news by launching an anti-misinformation campaign that redirects users to reputable news sources. Despite those measures, it will be difficult to ensure that all 2 billion people on the site even see legitimate news sources.

There has been a plethora of conspiracy theories regarding the origins of Covid-19 and how it spreads. They range from the claim that the virus was created in a lab (a possibility the US is seriously considering) to the idea that 5G cellular towers somehow spread the virus. Facebook is trying to combat these theories with new measures meant to curb the spread of false information about the Coronavirus.

The first of these measures is to start showing News Feed messages to people who have interacted with misinformation. This means people who have liked, reacted to, or commented on fake news links may see a message linking them to myths that have been debunked by the World Health Organization (WHO) and other reputable fact-checking sources.

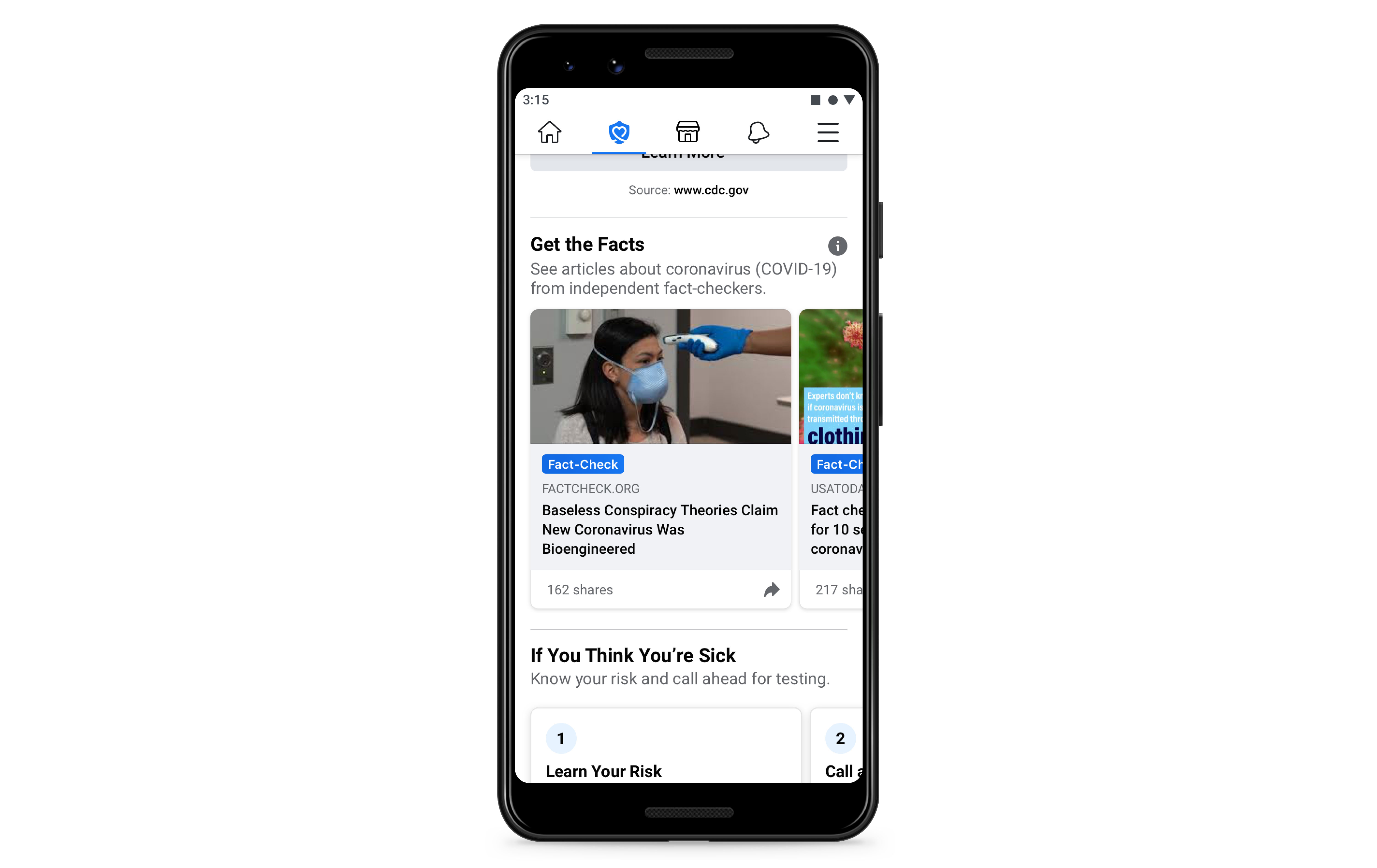

The second measure is a new section in Facebook's Covid-19 Information Center called Get the Facts. This is essentially a list of fact-check articles that debunk popular myths about the Coronavirus. These articles are updated every week and are available now in the United States.

Facebook also released additional stats on its fight against Coronavirus misinformation, along with a peek into how it has been trying to slow the distribution of those stories:

"Once a piece of content is rated false by fact-checkers, we reduce its distribution and show warning labels with more context. Based on one fact-check, we're able to kick off similarity detection methods that identify duplicates of debunked stories. For example, during the month of March, we displayed warnings on about 40 million posts related to COVID-19 on Facebook, based on around 4,000 articles by our independent fact-checking partners. When people saw those warning labels, 95% of the time they did not go on to view the original content. To date, we've also removed hundreds of thousands of pieces of misinformation that could lead to imminent physical harm. Examples of misinformation we've removed include harmful claims like drinking bleach cures the virus and theories like physical distancing is ineffective in preventing the disease from spreading."

The non-profit group Avaaz published findings that blasted Facebook for being an "epicenter" of misinformation and for delaying the implementation of anti-misinformation policies. The report is pretty telling and indicative of the massive uphill battle that Facebook and other social media outlets face in stopping the spread of misinformation.