In a nutshell: If you like adding captions to your Instagram photos and videos, be prepared for a warning if they’re a bit risky. The Facebook-owned social network is using artificial intelligence to identify language in captions that could be considered offensive or classed as bullying.

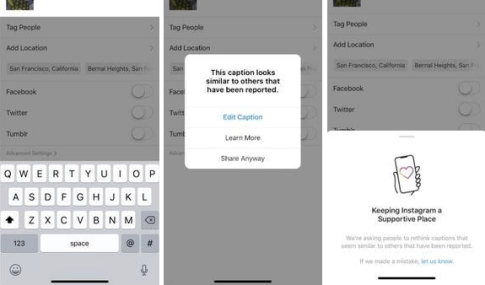

With the new system, users posting a caption that Instagram believes could be offensive will see a message informing them that it looks similar to others that have been reported. The company says this will give people a chance to pause and reconsider their words before posting.

Earlier this year, Instagram introduced a similar AI-based feature that identifies toxic comments. That filter is powered by machine learning that has been trained using a set of test data, and it takes context and relationships into consideration.

Instagram only warns users that their words are potentially offensive or bullying, so people can still ignore the prompts and post the captions or comments if they wish. But the company hopes the notifications will help reduce the number of cyberbullying incidents while educating people on what is and isn’t allowed on the platform.

“We can do more to prevent bullying from happening on Instagram, and we can do more to empower the targets of bullying to stand up for themselves,” said Adam Mosseri, head of Instagram.

“It’s our responsibility to create a safe environment on Instagram. This has been an important priority for us for some time, and we are continuing to invest in better understanding and tackling this problem.”

The AI-powered feature will launch in “select countries” first before expanding globally over the coming months.

https://www.techspot.com/news/83212-instagram-ai-warn-users-when-they-post-offensive.html