We are approaching the limits, but they're still some way off. TSMC are currently working on improving N7, while developing N5 and N3 at the same time. N3 is targeted to have a logic density 3 times that of N7, although it won't be ready for volume production for another 2 to 3 years.

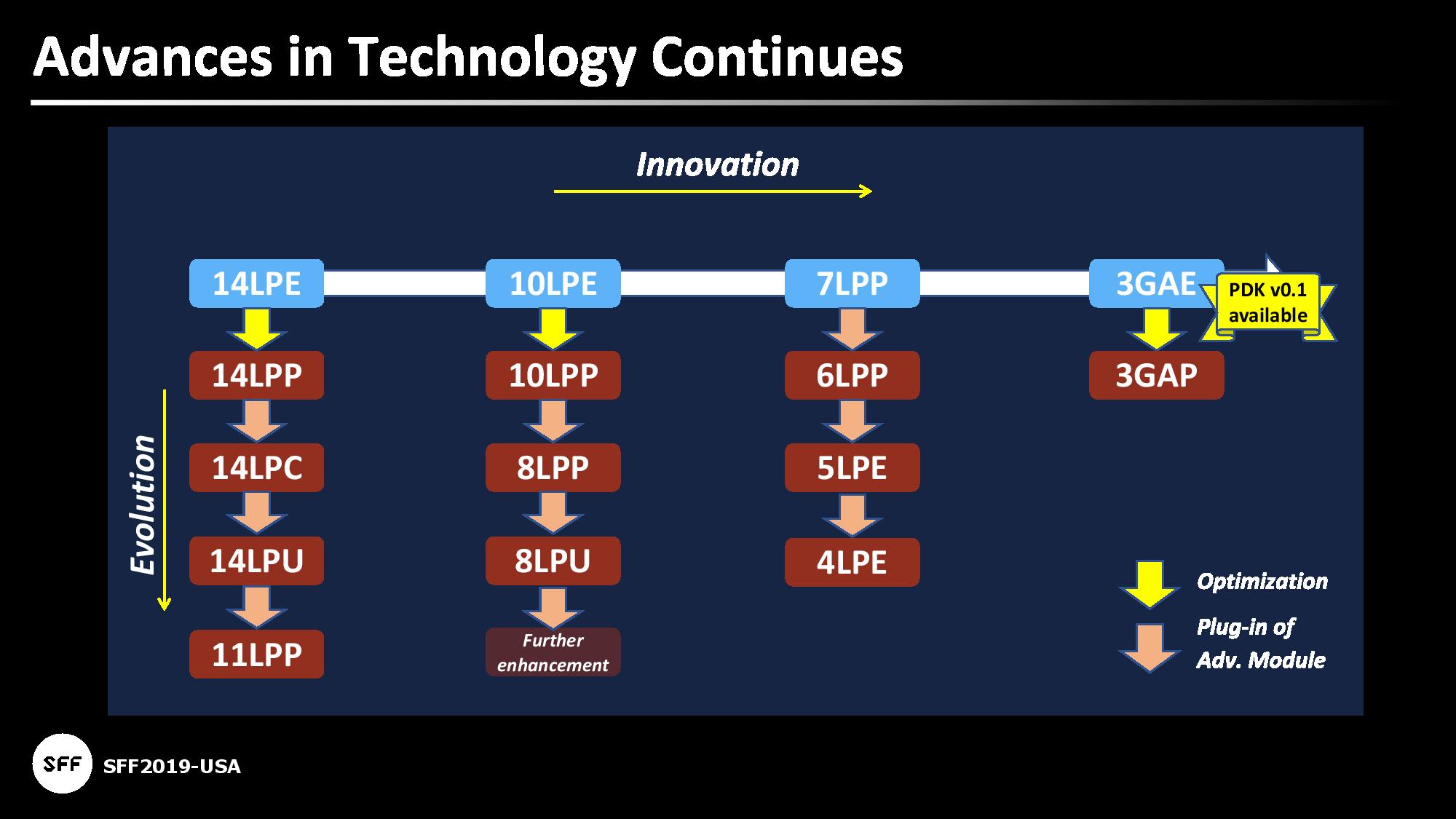

Samsung are also working on similar improvements:

Nvidia's GA102 is made on the 8LPP node, so if they plan on sticking with Samsung, there's clear scope for future monolithic designs to continue the current trend of 'more of everything.'

What kind of chip could one have with 3 times the logic density of the GA102? For the same sized die, that would give you a transistor count of roughly 85 billion (the GA102 has 28.3 billion, the GA100 has 54.2 billion). We're not going to get anywhere near that level anytime soon, but it shows that the limits are still a comfortable distance away.
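The arithmetic behind that estimate can be written out as a quick back-of-the-envelope sketch. The function name `scaled_transistor_count` is purely illustrative, and the calculation assumes the density gain applies evenly across the whole die, which is an idealization since not every part of a chip shrinks equally well.

```python
# Transistor counts quoted in the text, in billions.
GA102_TRANSISTORS_B = 28.3
GA100_TRANSISTORS_B = 54.2


def scaled_transistor_count(base_count_b: float, density_multiplier: float) -> float:
    """Estimate the transistor count for the same die area at a higher density.

    Assumption: the density multiplier applies uniformly to the entire die.
    """
    return base_count_b * density_multiplier


# A hypothetical GA102-sized die built at 3 times the logic density.
projected = scaled_transistor_count(GA102_TRANSISTORS_B, 3.0)

print(f"Projected count at 3x density: {projected:.1f} billion")
print(f"Ratio to GA100: {projected / GA100_TRANSISTORS_B:.2f}x")
```

With the figures quoted above, this works out to about 84.9 billion transistors, or a little over one and a half times the GA100's count.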