A hot potato: Would you let someone trawl through years of social media activity if it increased your chances of getting a job? That's the basic premise of Predictim, an AI technology that analyzes babysitters' Facebook, Instagram, and Twitter accounts to come up with a "risk rating." Not too surprisingly, it's being shut out by the sites it relies on.

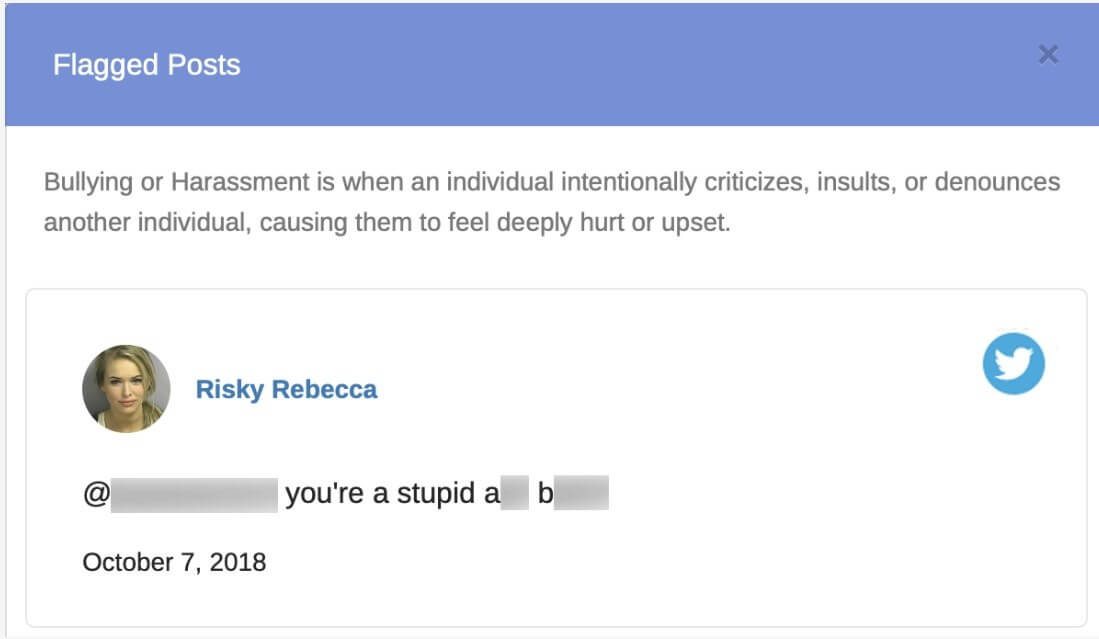

Predictim, which was funded by the University of California at Berkeley's Skydeck accelerator, uses natural language processing and computer vision algorithms to search through years of social media posts. The system then generates a report containing a risk assessment score, flagged posts, and an assessment of four different personality categories: drug abuse, bullying and harassment, explicit content, and disrespectful attitude.

Babysitters must consent to parents' request that Predictim access their social media accounts, but the system has received a lot of criticism since it launched last month. Not only is it invasive, but letting an algorithm decide if somebody is employable is concerning. A couple of tweets showing a "disrespectful attitude" shouldn't get someone blacklisted from an industry. Moreover, there's always the chance a post could be taken out of context, and even if someone were a risky babysitter, it's unlikely they'd be bragging about their heroin use and major crimes on Facebook.

As reported by the BBC, Facebook revoked most of Predictim's access to users last month after determining that the firm had violated its policies on the use of personal data. The social network is now deciding whether to block the firm entirely from the platform; Predictim says it continues to scrape public Facebook data for its reports.

Twitter has gone one step further, telling the BBC it had recently blocked Predictim's access to site users. "We strictly prohibit the use of Twitter data and APIs for surveillance purposes, including performing background checks," a spokeswoman said. "When we became aware of Predictim's services, we conducted an investigation and revoked their access to Twitter's public APIs."

Predictim's black-box algorithm analyzes babysitters' social media accounts, reducing them to a single fit score. Beyond disgusting. Downright evil.

--- DHH (@dhh) 24 November 2018

Social media algorithms prod you to be the worst/fake you can be, hiring algorithms reject you for it. https://t.co/fi0NlZsTBV

Predictim said it uses human reviewers to check flagged posts and help prevent false positives. It charges $25 for a single scan, $40 for two, and $50 for three. The company says it is in talks with major "shared economy" companies to provide vetting for rideshare drivers or accommodation service hosts.

The scraping of profile data is a controversial topic. LinkedIn remains in a legal battle with hiQ Labs, which used the network members' public information so companies could monitor employees, thereby determining "skills gaps or turnover risks months ahead of time."

Image credit: Shutterstock / Pindyurin Vasily