What just happened? Advances in AI are outpacing the hardware they run on. In hopes of keeping up, technology giants like IBM are looking to alternative types of computing for future performance boosts.

In modern computing hardware, data is shuffled from memory to processors as needed. As IBM explains, this back-and-forth transfer costs a lot of time and energy. With in-memory computing, however, memory units moonlight as processors, handling both storage and computation.

Eliminating the data shuffle between traditional memory and processor can reduce energy demand by as much as 90 percent.

At the International Electron Devices Meeting (IEDM) and the Conference on Neural Information Processing Systems (NeurIPS) this week, IBM is reporting 8-bit precision - the highest yet - for an analog chip, which the company says roughly doubles accuracy compared to previous analog chips. The new approach consumes 33x less energy than a digital architecture of similar precision, we're told.
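To give a rough feel for why precision matters when the memory itself does the math, here is a minimal Python sketch. It assumes, purely for illustration, that "n-bit precision" means each stored weight is snapped to one of 2^n evenly spaced levels, and that the in-memory operation is a matrix-vector product. The names `quantize`, `matvec` and `max_error` are hypothetical, and nothing here models IBM's actual devices.

```python
def quantize(weights, bits):
    """Snap each weight in [-1, 1] to the nearest of 2**bits evenly spaced levels."""
    levels = 2 ** bits - 1  # number of steps between -1 and +1
    return [
        [round((w + 1) / 2 * levels) / levels * 2 - 1 for w in row]
        for row in weights
    ]


def matvec(matrix, vector):
    """Multiply a matrix by a vector, the core operation of a neural-network layer."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def max_error(weights, vector, bits):
    """Largest output error caused by storing the weights at the given precision."""
    exact = matvec(weights, vector)
    approx = matvec(quantize(weights, bits), vector)
    return max(abs(a - b) for a, b in zip(exact, approx))


# Illustrative values only (assumption: weights already scaled into [-1, 1]).
weights = [[0.3, -0.7], [0.12, 0.9]]
inputs = [1.0, 0.5]

for bits in (4, 8):
    print(bits, "bits -> max output error", max_error(weights, inputs, bits))
```

In this toy model, going from 4 to 8 bits shrinks the spacing between storable levels from 2/15 to 2/255, so the worst-case error in the result drops accordingly.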

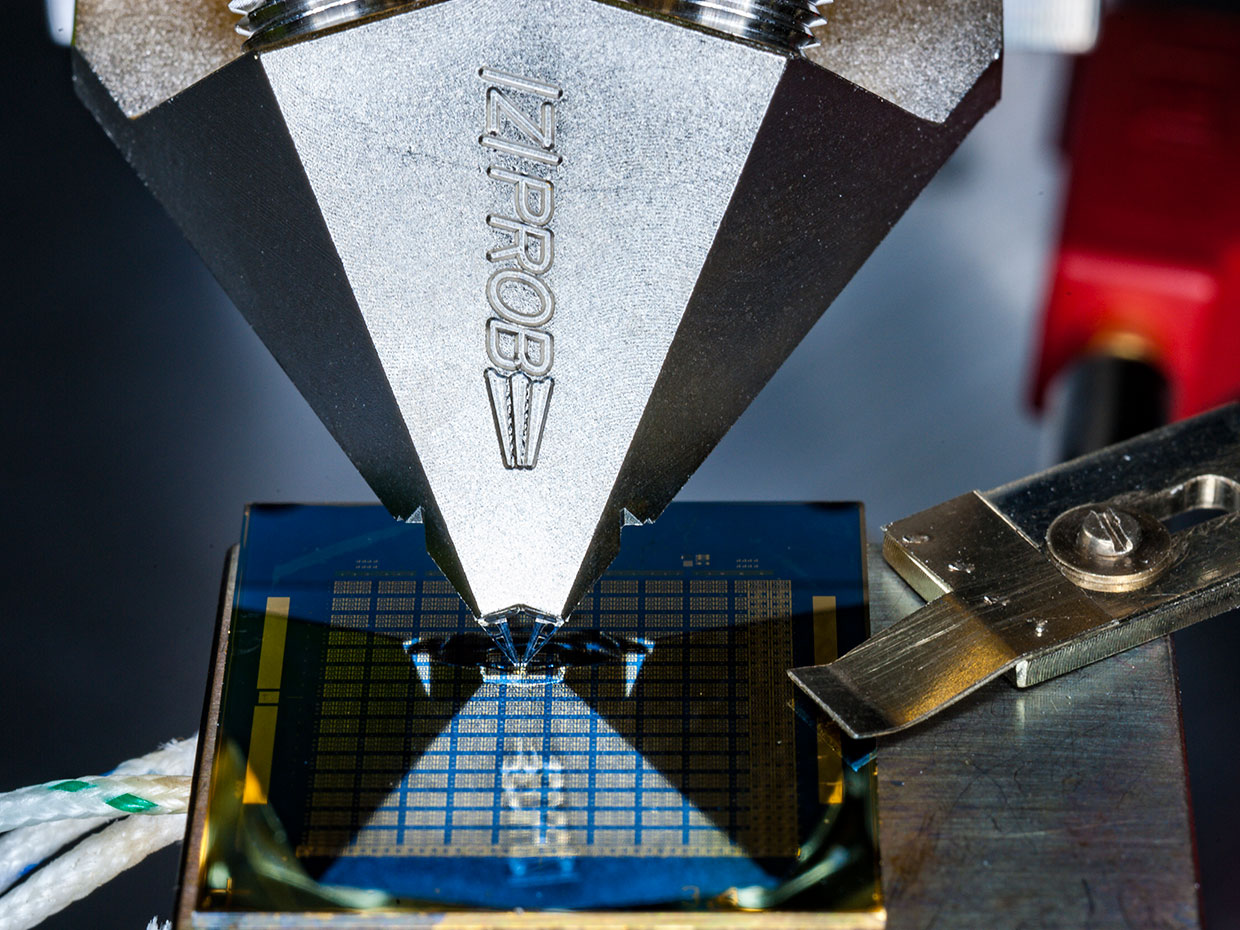

IBM's solution uses a new approach called projected phase-change memory (PCM), or Proj-PCM for short.

> [In Proj-PCM], we insert a non-insulating projection segment in parallel to the phase-change segment. During the write process, the projection segment has minimal impact on the operation of the device. However, during read, conductance values of programmed states are mostly determined by the projection segment, which is remarkably immune to conductance variations. This allows Proj-PCM devices to achieve much higher precision than previous PCM devices.

IBM says the improved precision suggests in-memory computing could one day be used to achieve high-performance deep learning in low-power environments like IoT and edge applications.

IBM is also introducing new approaches on the digital side that make it possible to train deep learning models with 8-bit precision while preserving model accuracy across image, speech and text dataset categories. These breakthroughs will be presented in a paper titled "Training Deep Neural Networks with 8-bit Floating Point Numbers."
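For a sense of how coarse an 8-bit floating point number is, the following Python sketch rounds an ordinary float to the nearest value a small float format could hold. The split of 1 sign bit, 5 exponent bits and 2 mantissa bits is an assumption made for illustration, since the article does not specify the format. The helper `round_to_float` is hypothetical and ignores infinities and NaN.

```python
import math


def round_to_float(x, exp_bits=5, man_bits=2):
    """Round x to the nearest value of a small float format.

    Assumed layout (not taken from the article): 1 sign bit, exp_bits exponent
    bits, man_bits mantissa bits. Values too large are clamped to the largest
    finite value; values below the smallest normal exponent are rounded on
    that exponent's grid.
    """
    if x == 0.0:
        return 0.0
    bias = 2 ** (exp_bits - 1) - 1
    _, e = math.frexp(abs(x))              # abs(x) = m * 2**e with 0.5 <= m < 1
    exp = max(min(e - 1, bias), 1 - bias)  # exponent in 1.f form, clamped to range
    step = 2.0 ** (exp - man_bits)         # gap between neighbouring values here
    q = round(abs(x) / step) * step        # round to nearest, ties to even
    largest = (2 - 2.0 ** -man_bits) * 2.0 ** bias
    return math.copysign(min(q, largest), x)


for value in (1.0, 1.1, 1.2, -3.3, 1e6):
    print(value, "->", round_to_float(value))
```

Under these assumptions there are only four representable values between 1 and 2, so `round_to_float(1.1)` lands back on 1.0 and a small weight update simply vanishes. That is the kind of loss a training scheme has to work around to preserve accuracy at 8 bits.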