We've tested Turing GPUs of nearly all flavors, and the latest graphics card to hit the market is the GTX 1650, which brings Nvidia's new graphics architecture to $150. Unlike the GTX 1660s, which left us enthused about their prospects, the 1650 didn't overly impress us.

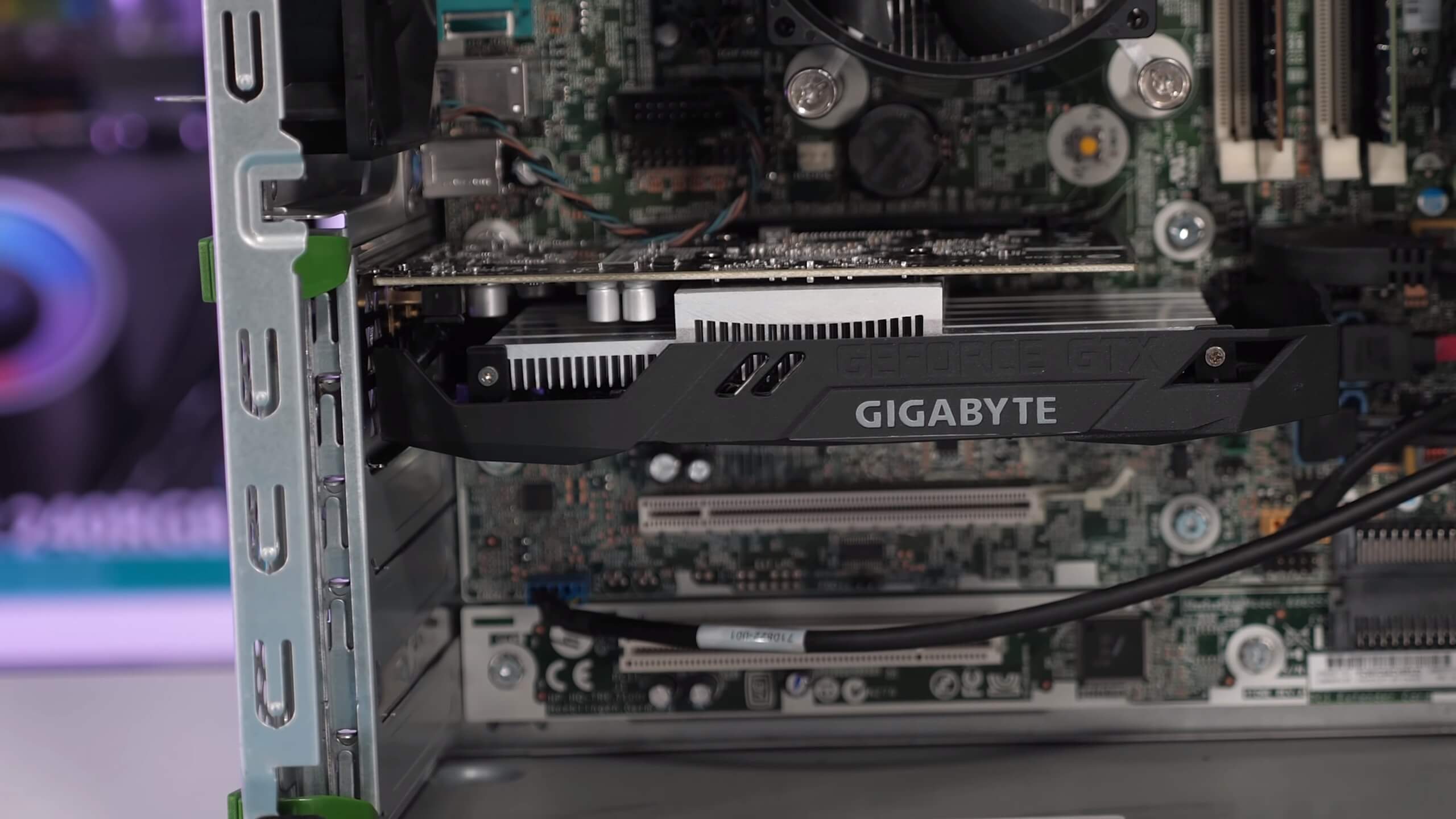

The key argument to be made for the GTX 1650 is power consumption. The card does consume less power, and models without a 6-pin PCIe power connector can be used in older OEM machines, providing a cost-effective upgrade that gives them a new lease on life. However, we questioned just how many of these older systems could take advantage of the GTX 1650 and seriously doubted how cost effective the upgrade would really be.

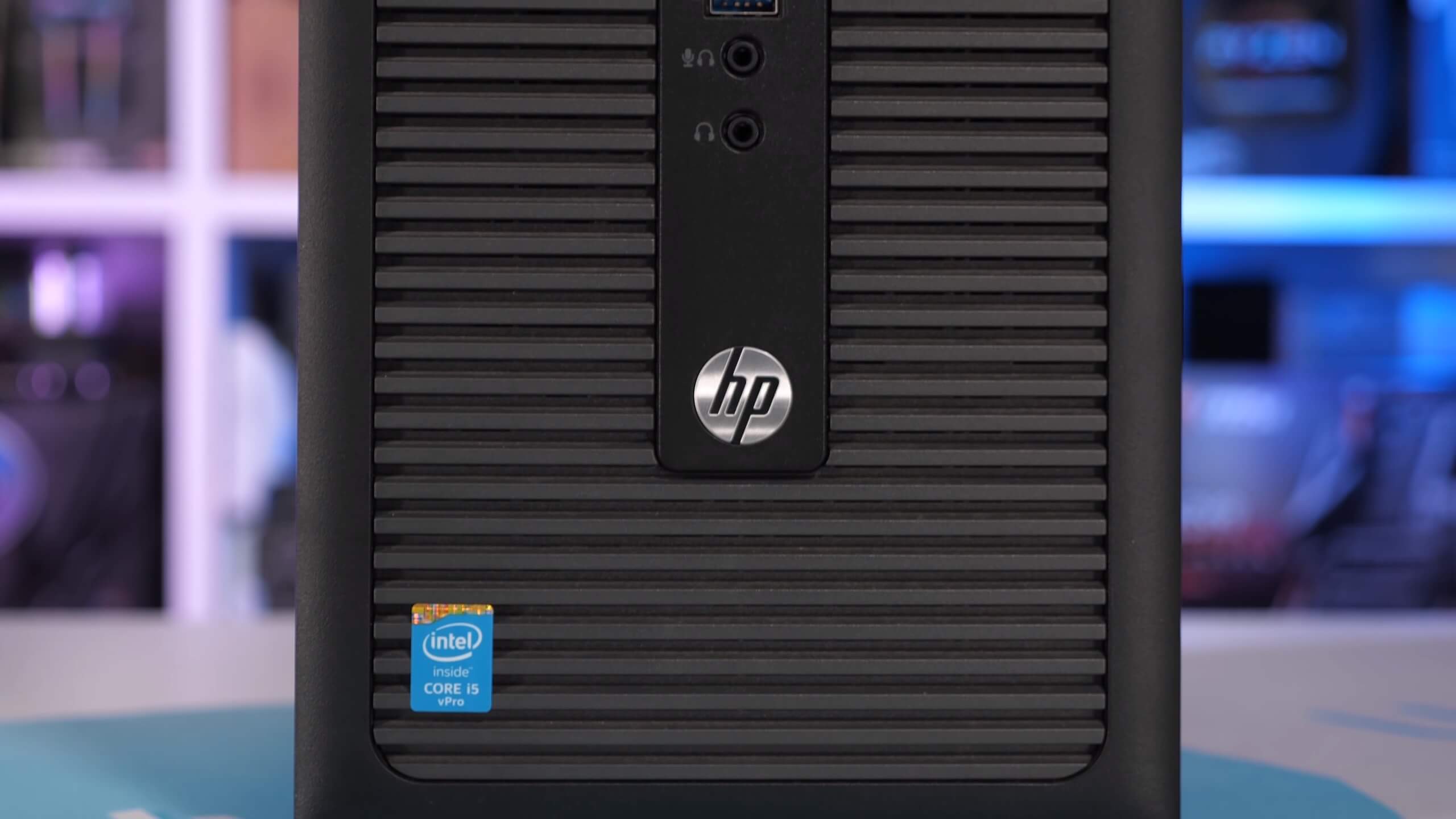

Therefore, we pledged to track down a popular OEM PC that didn't have a 6-pin PCIe power connector and whose power supply couldn't be easily upgraded. This led us to the HP Elitedesk 800 G1, a computer that most who were in favor of the GTX 1650 recommended we test with. We went shopping on eBay and found a non-SFF version of the system that cost approximately $250. We're talking a mid-tower sporting a Core i5-4690, 8GB of DDR3-1600 memory and a 128GB SSD.

Now the plan was simple: get the HP Elitedesk 800 G1, stick in the GTX 1650, 1050 Ti and RX 560 for testing and benchmark with a dozen games. Then compare those results with our high-end i9-9900K test system for good measure, to see if the margins change at all. We were expecting the slower Core i5 CPU with its DDR3 memory to create a more CPU-bound situation that would see the margin reduced between the 1650 and 1050 Ti and, spoiler alert, this is exactly what we found for the most part.

Thing is, and you're going to have to bear with us, this didn't go exactly as planned.

We got our shiny old OEM PC and the second it arrived we threw in the GTX 1650, stripped out the 128GB SSD, replaced it with a 2TB SSD and installed a fresh copy of Windows 10. We transferred across all the games we were going to test with overnight. The next day we were set up and ready to test. However, right away we noticed this thing was really slow, really sluggish to load games, and then super stuttery for the first 30-60 seconds we ran a test. After that it was okay, but not great. Not what you'd expect from a Haswell quad-core that runs at an all-core clock speed of 3.7 GHz.

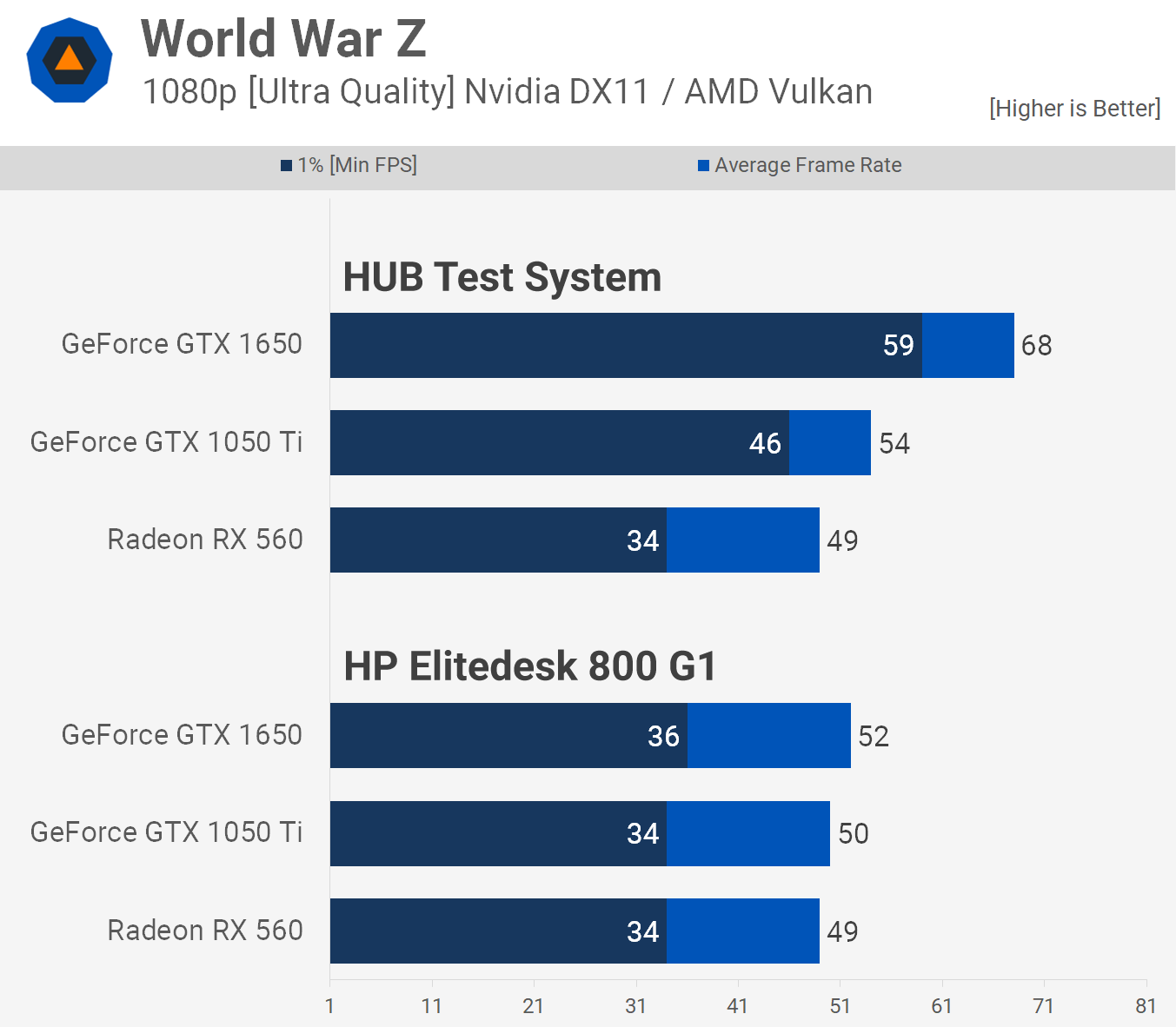

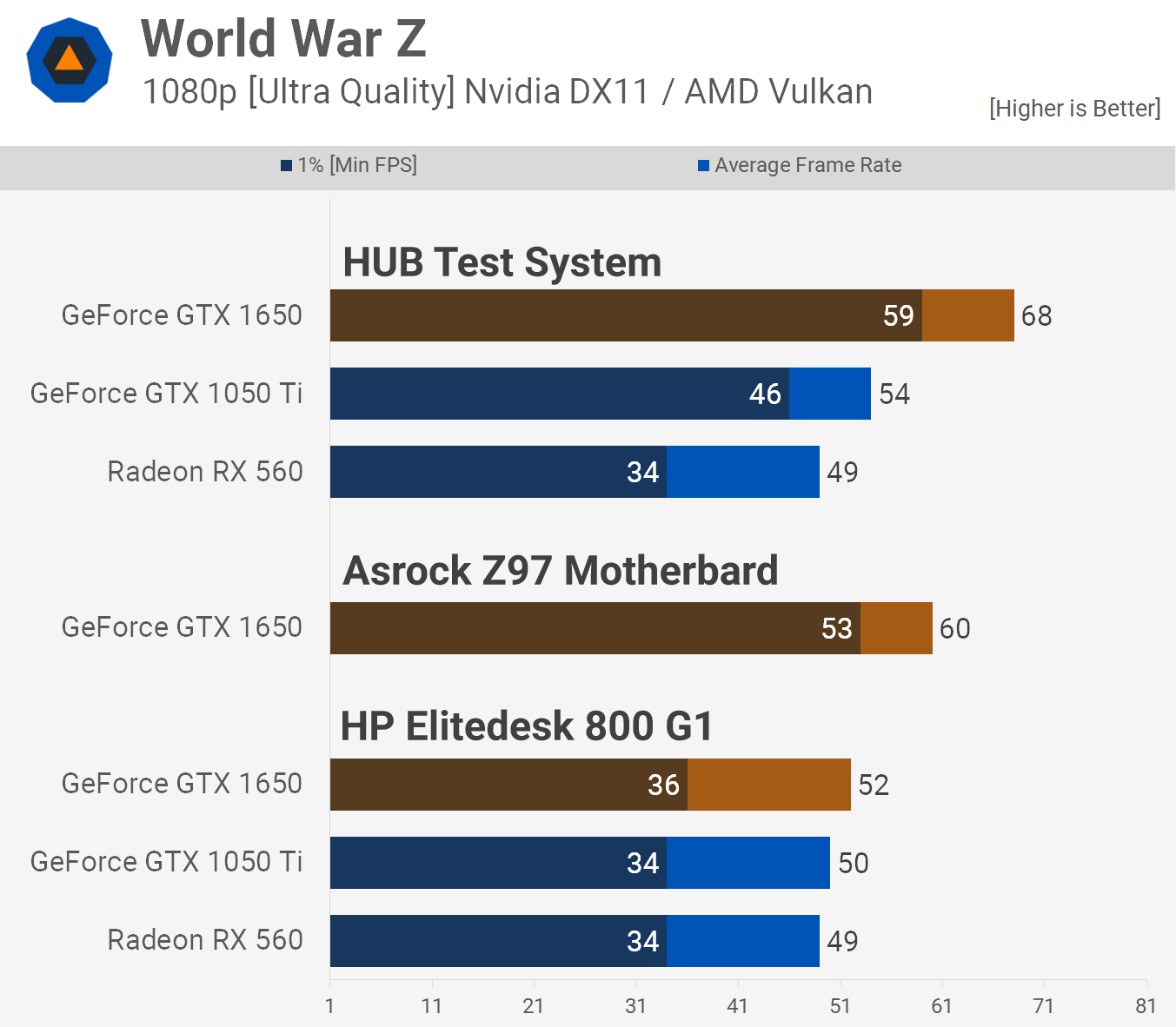

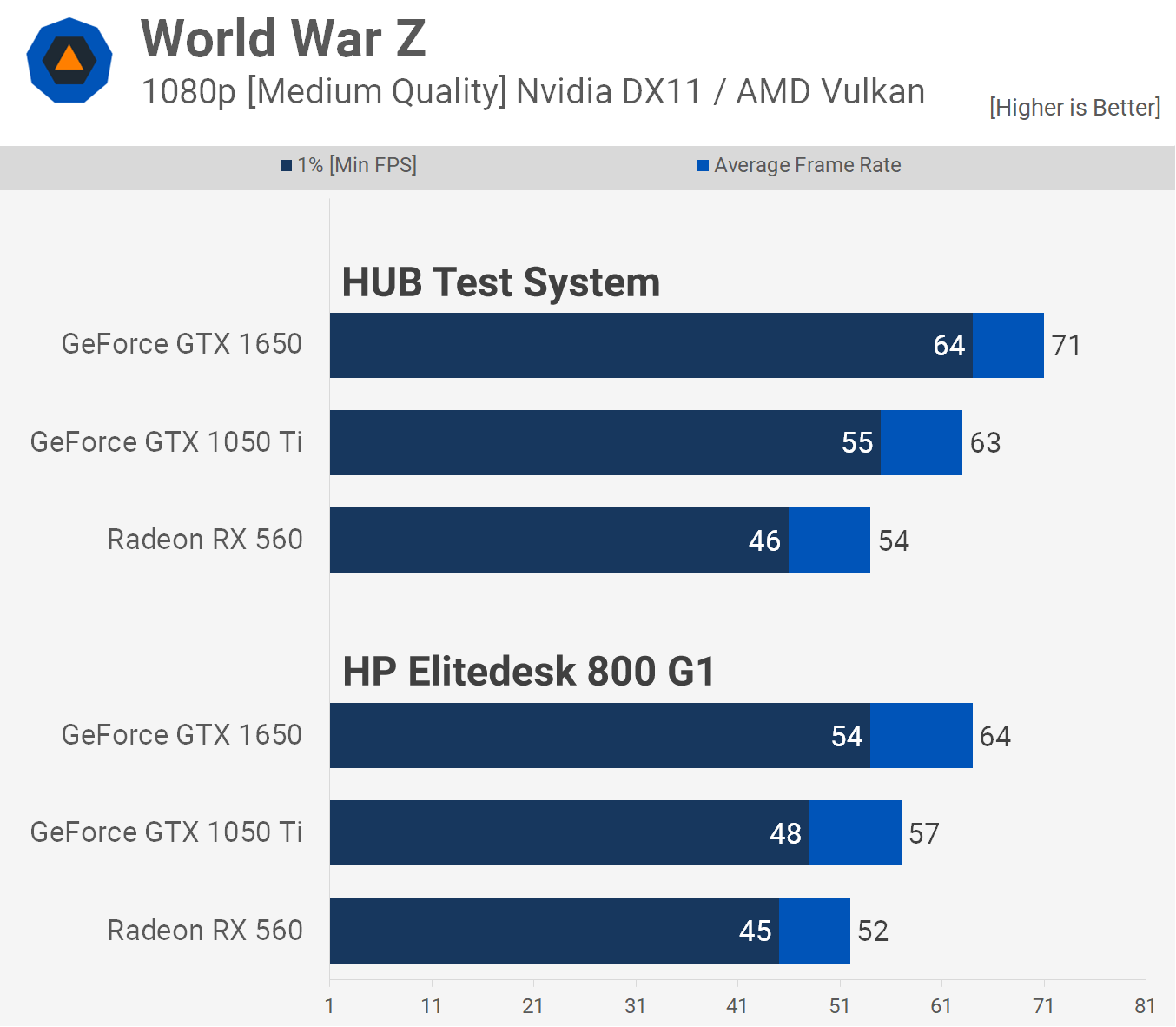

Here is one of the worst examples we came across, which also happens to be the first game we tested. World War Z is very demanding on the CPU due to all the NPCs running around like mad. It basically had the Core i5-4690 pegged at 100% for the entire test, though we saw this in a lot of the games tested, including a few where we weren't expecting to see high CPU utilization, even with an older Haswell quad-core.

In this worst case scenario we saw 1% low performance jump by 64% when going from the OEM PC to our high-end test system, though with a 1650 you'd get the same numbers with a Ryzen 5 2400G or modern Core i3... hell, you shouldn't be that far off with a Haswell quad-core either.

We knew something wasn't right. To summarize, in our test system the 1650 was on average 26% faster than the 1050 Ti, not an amazing performance uplift but fairly decent all the same. In the OEM PC the GTX 1650 was able to offer just a 4% performance increase...

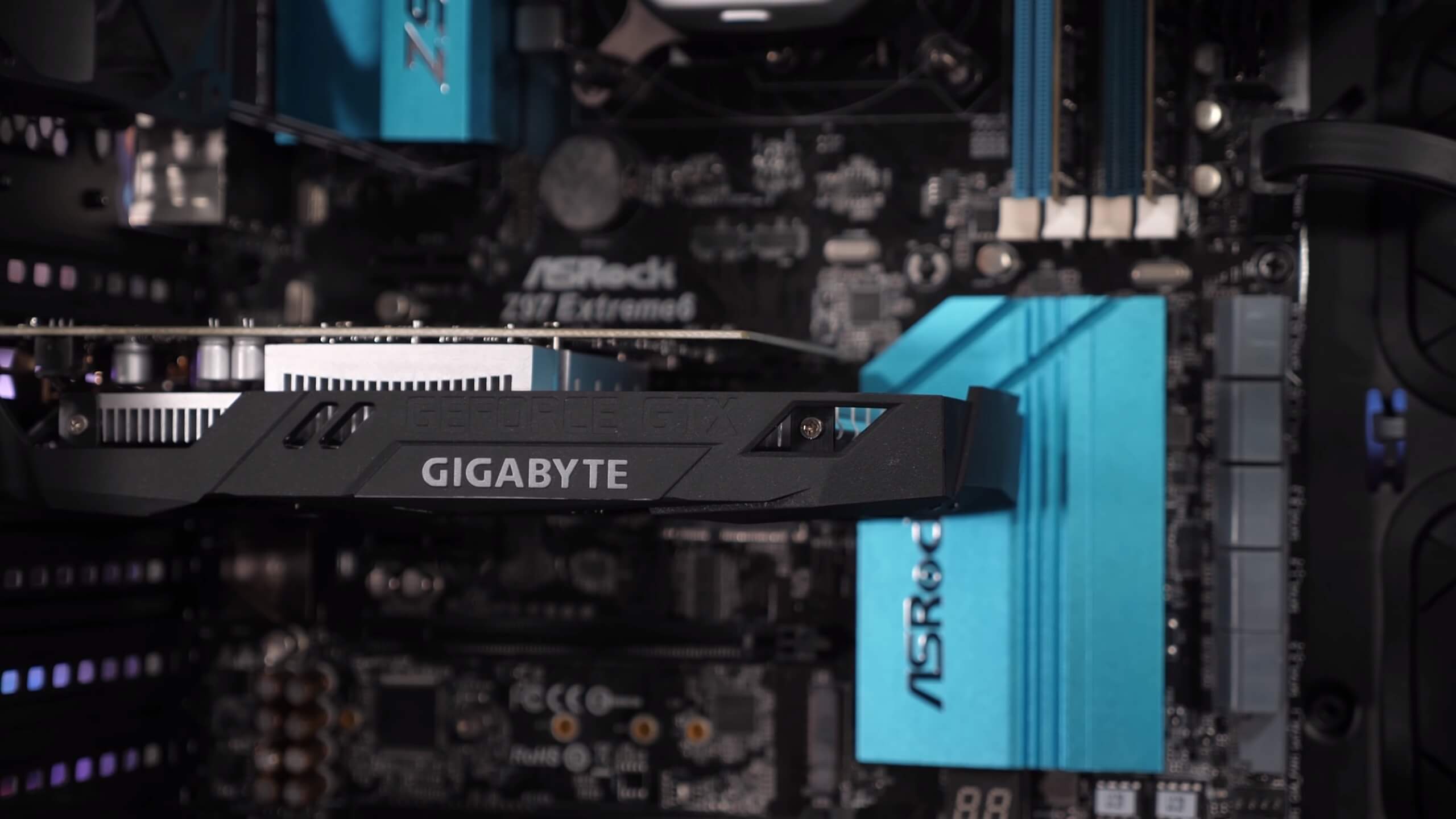

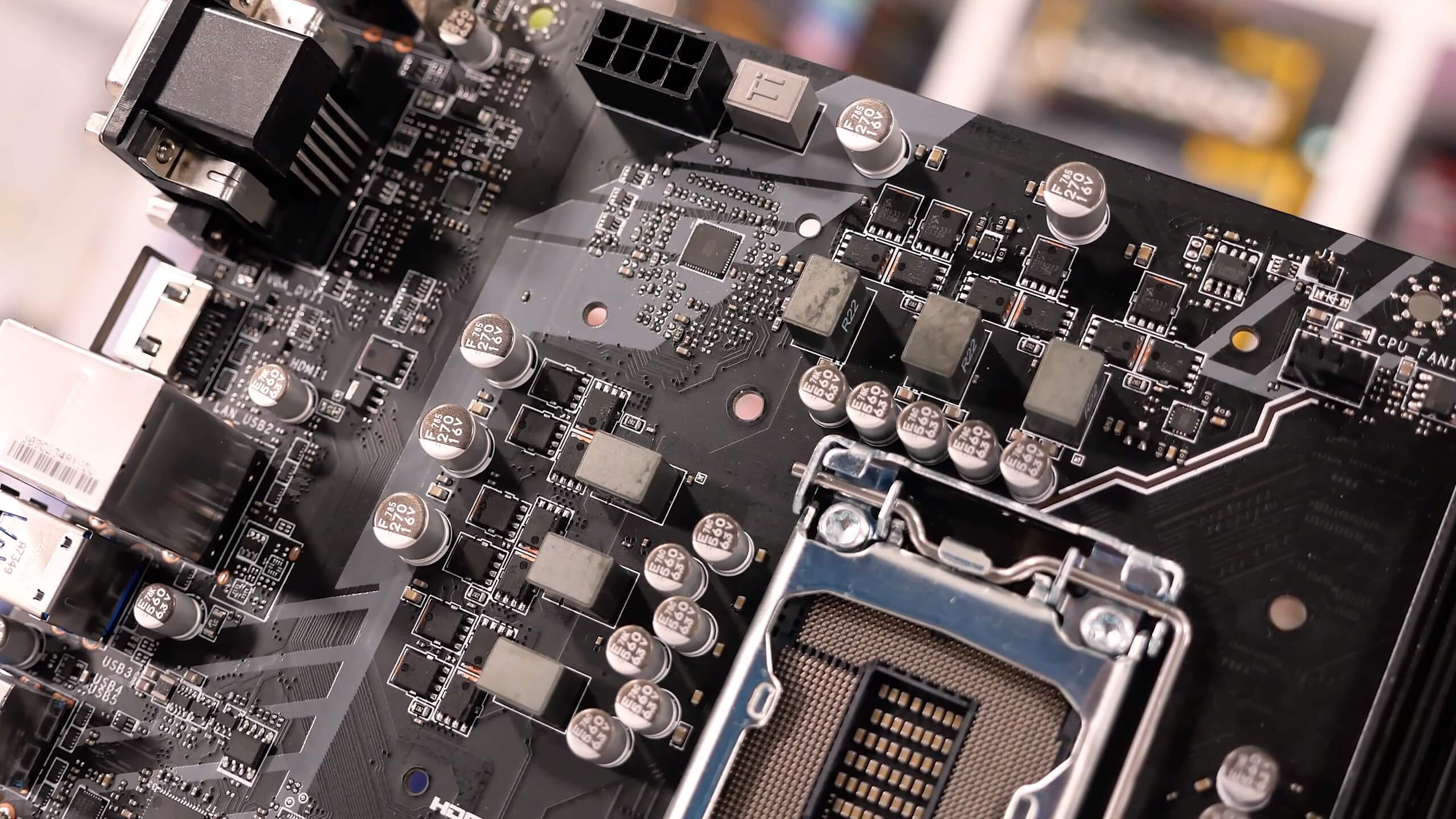

Rather than mess around with the OEM PC, we decided to take the CPU, SSD and memory out and stick them on a Z97 motherboard. It was late and rather than investigate what was going on, we wanted to make sure we weren't dealing with defective hardware. Sure enough, moving to a different motherboard still resulted in a performance hit compared to the 9900K, but nothing like what we saw in the OEM PC. This was the kind of performance we were expecting from the Haswell quad-core.

Whereas our high-end test system was 64% faster than the HP Elitedesk when comparing 1% low performance, it was just 11% faster when using the same CPU and memory on a Z97 motherboard.

At this point we knew it was either a software or a power delivery issue with the OEM system, and our money was on the latter. Taking some more time to investigate, we rebuilt the OEM rig, opened up HWiNFO and ran a Blender workload to stress the CPU. We suspected it was a CPU throttling issue as the performance hit in World War Z was extreme, while in other less CPU intensive titles such as Forza Horizon 4 the margins weren't nearly as bad.

With HWiNFO open we noticed the CPU was only hitting an all-core frequency of 3.5 GHz, whereas on the Z97 board it was hitting 3.7 GHz, a 6% increase. But that didn't explain things entirely. Then we noticed that the Core i5's sustained CPU package power was 28% higher on the Z97 motherboard. Now this is partly down to the fact that the Z97 board increased the vcore by 5%, but we didn't think that accounted for all of the power increase. Rather, we believed the OEM system's VRM was throttling.

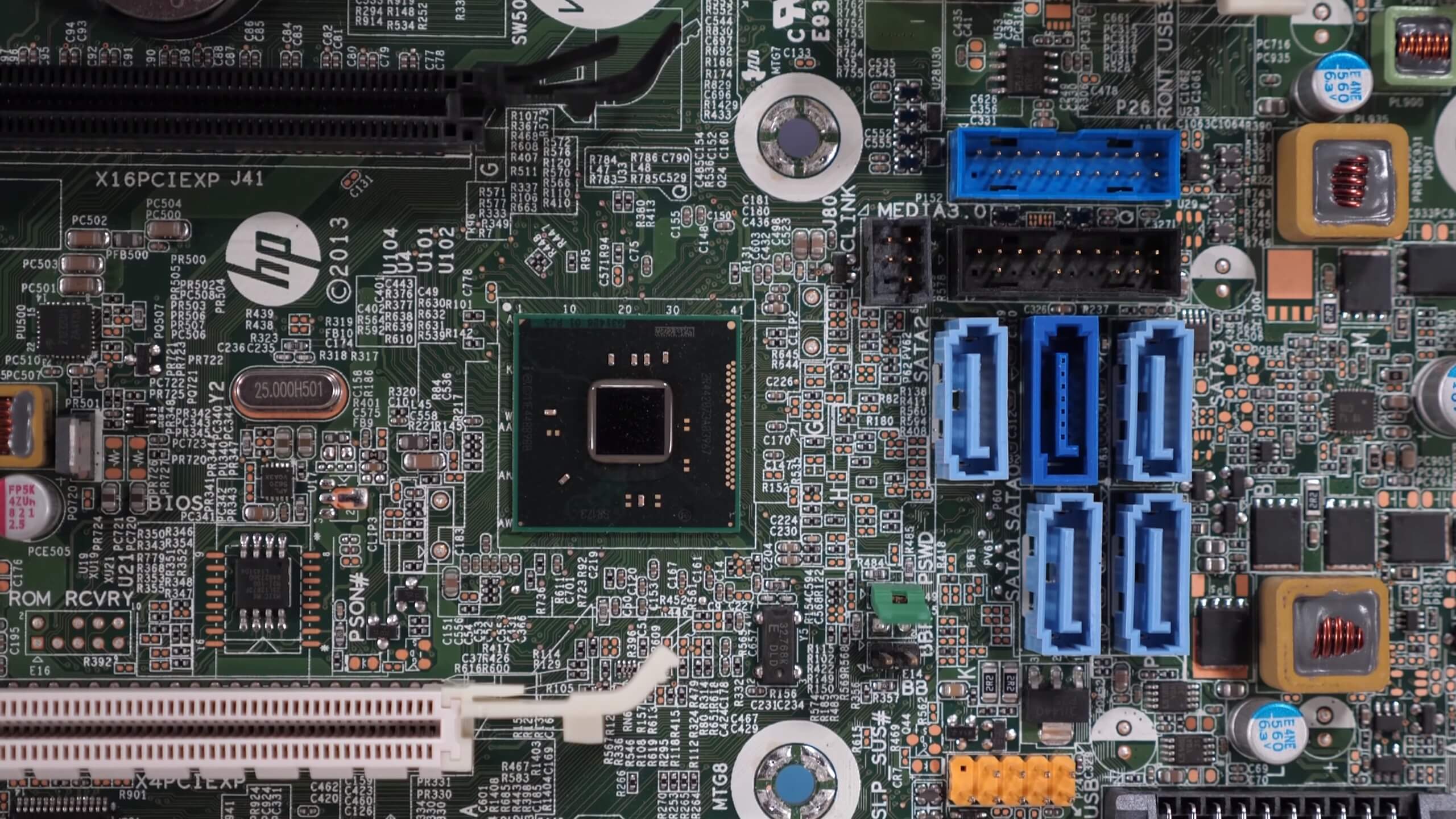

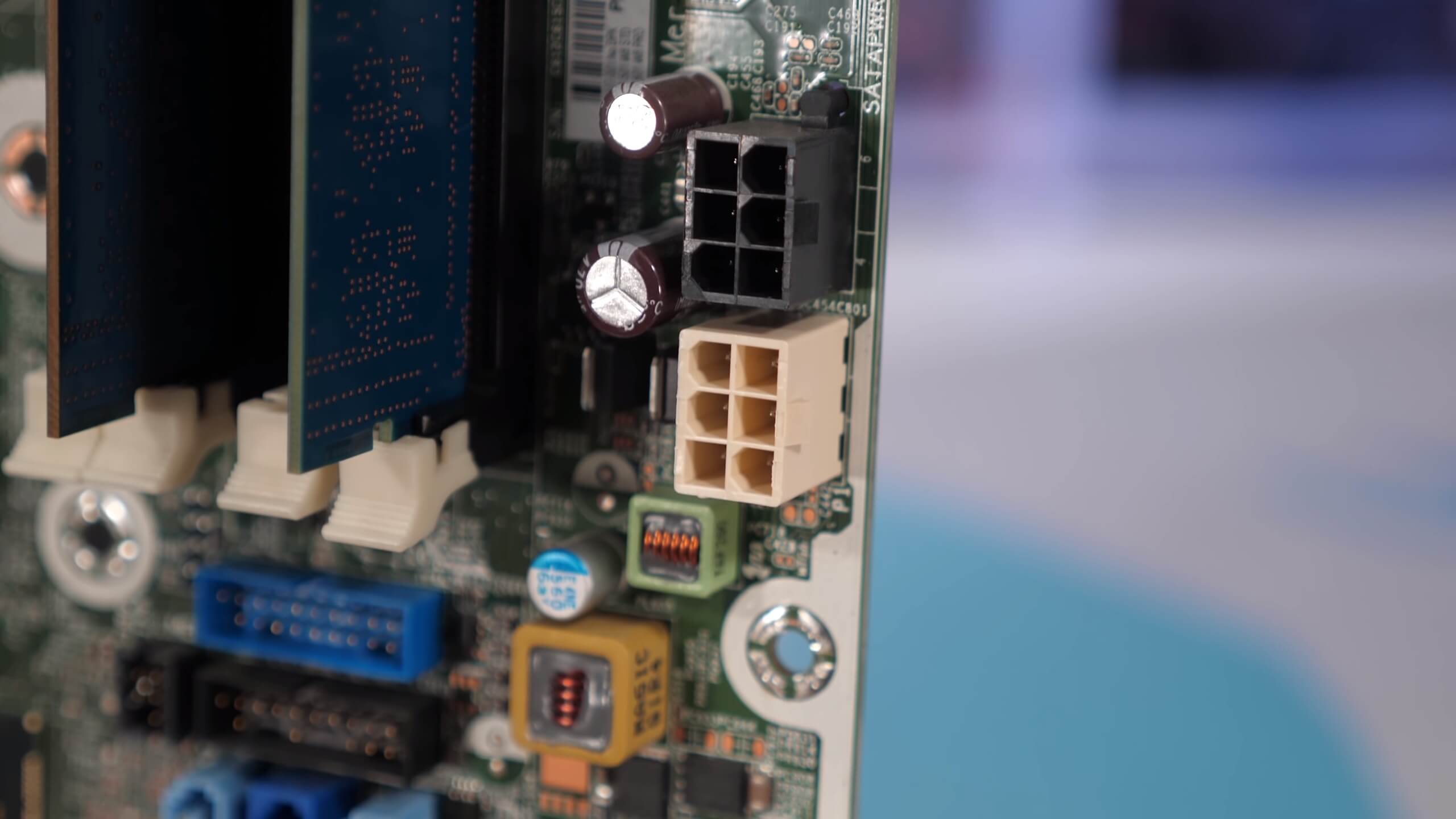

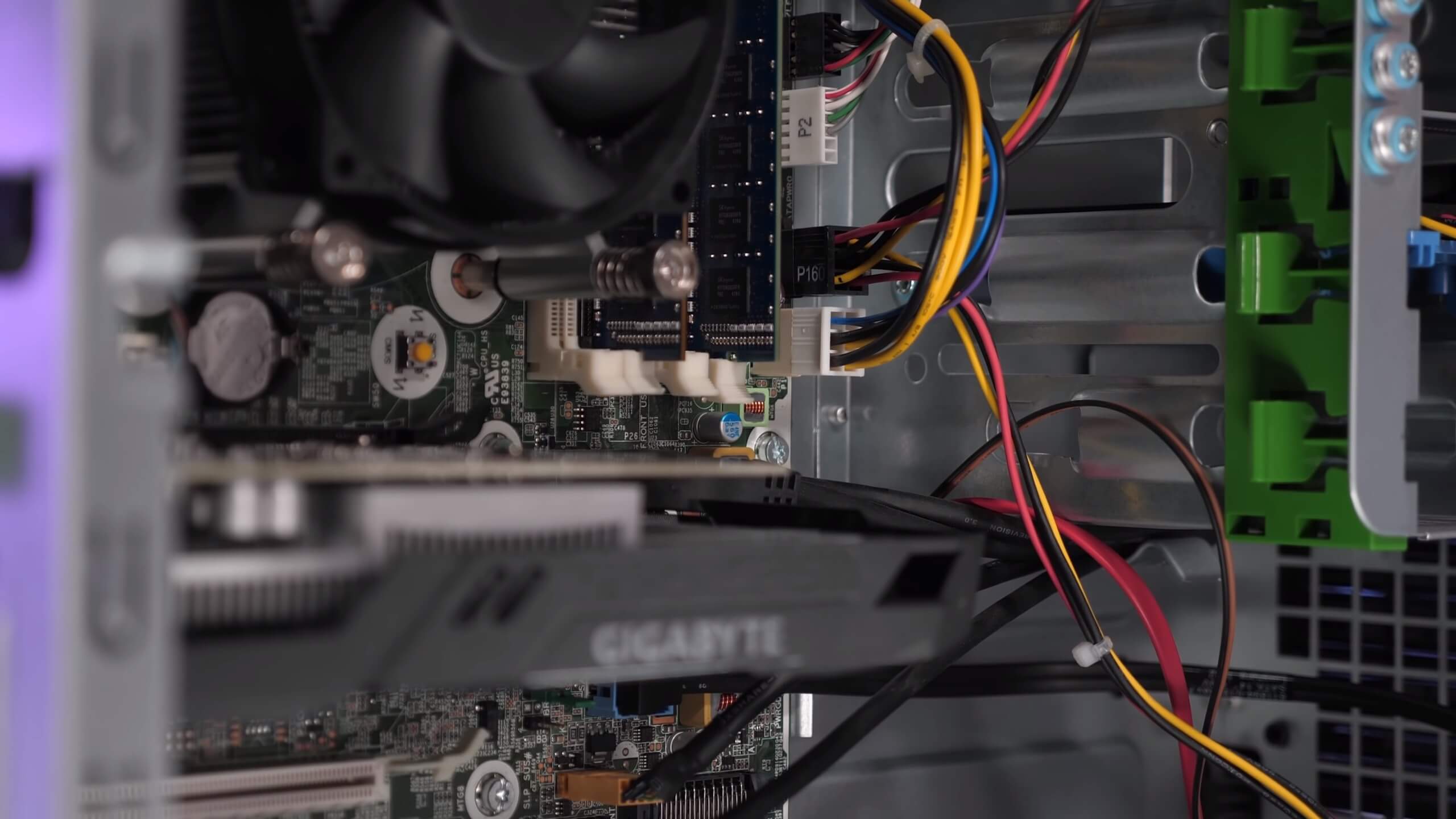

What's interesting about this HP system is that the proprietary power supply only has two power connectors coming out of it: a 6-pin and a 4-pin, that's it. No 20/24-pin ATX connector, no 8-pin, no Molex connectors, no SATA power connectors, just a 6-pin and a 4-pin power connector. Then from the motherboard there's a 6-pin power output which connects to the SATA drives. In other words, the entire PC, the motherboard, CPU, graphics card and storage all get their power from a 4-pin and 6-pin power cable.

The motherboard packs a pathetic 3-phase vcore VRM with no form of cooling, it's completely naked. Aware of this we knew the system had to be heavily power limited. So we opened up Intel Extreme Tuning Utility and found all the settings met the Intel spec, the board was limited to a Turbo Boost power max of 84 watts with a short power max of 105 watts for 8 seconds.

However, when we ran Cinebench R20 we found these limits were well out of reach of the OEM PC. Within two seconds of hitting the 'Run' button the XTU software detected 'current throttling', at a package TDP of just 38 watts. Now normally you can adjust the current limit – on the Z97 board it was set to 100 amps – yet, for the OEM system this option didn't exist. It's a hard lock to protect the motherboard and power supply.

In the end we saw a peak package TDP of just 49 watts and again a maximum all-core frequency of 3.5 GHz. In contrast, the aftermarket Z97 motherboard imposed no limits and allowed the Core i5-4690 to hit 3.7 GHz at a package TDP of 58 watts, 18% higher than that of the OEM system.

Now what's really interesting is that despite only a 6% clock speed advantage and a 28% increase in sustained CPU package power, the Cinebench R20 CPU score was boosted by 38%. Although the CPU is reported to have all cores working at 3.5 GHz in the OEM system, it's not operating at full capacity, and this is why we need to look at actual performance when a processor is limited by thermals, power or current.
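For anyone wanting to check the percentages, here is a minimal Python sketch that derives them from the raw readings quoted above. The helper name `pct_increase` is our own, and only the clock and peak package power figures are used since those are the only raw values given in the text.

```python
def pct_increase(baseline: float, value: float) -> float:
    """Return how much larger `value` is than `baseline`, in percent."""
    return (value / baseline - 1.0) * 100.0

# Readings quoted in the article: HP Elitedesk (OEM) vs. aftermarket Z97 board.
clock_gain = pct_increase(3.5, 3.7)  # all-core frequency in GHz
power_gain = pct_increase(49, 58)    # peak package power in watts

print(f"Clock speed: +{clock_gain:.1f}%")
print(f"Peak package power: +{power_gain:.1f}%")
```

Both results round to the 6% and 18% figures quoted, and both are far smaller than the 38% Cinebench R20 gap, which is why the reported frequency alone understates the throttling.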

In short, this is pretty scummy. HP sold businesses a Core i5-4690 system that performed nowhere near as well as it should have. Intel is not to blame for this, rather it's a choice that rests with OEM builders. Bashing HP over their poorly designed Elitedesk 800 G1 wasn't the point of this feature; the point was to see how the GTX 1650 performs in a popular OEM system that doesn't have a PCIe power connector. Let's just say you could be in for a surprise if you take it for granted that all OEM systems will perform adequately once you throw in a discrete GPU.

With all of that said, let's check out a few more games to get a broader picture of how this thing performs with the fastest discrete GPU you can get that doesn't require external power.

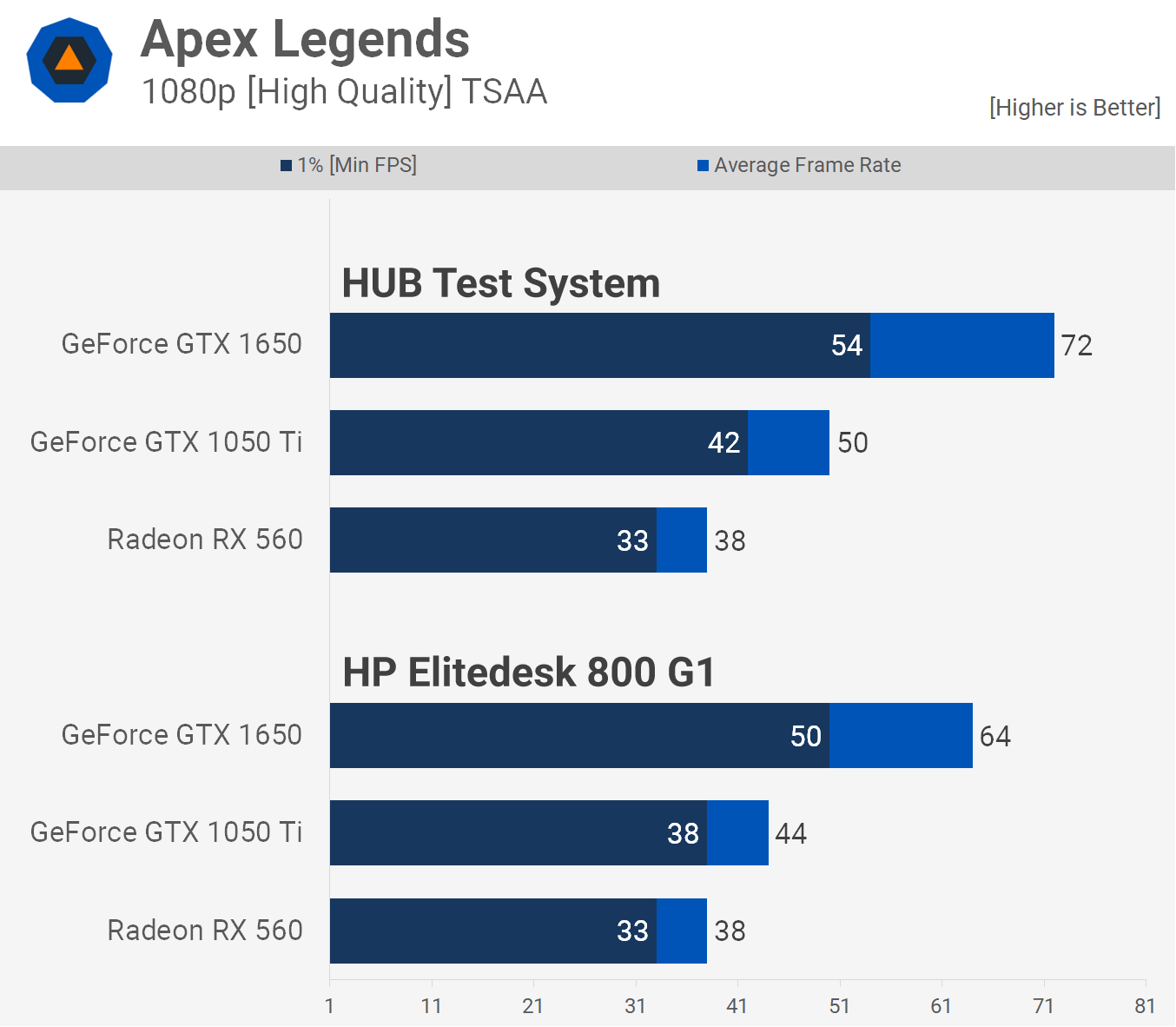

We've already seen a worst case scenario with World War Z, but here we see a much better showing for the OEM system with Apex Legends.

Here the desktop test system was just 13% faster on average when comparing the GTX 1650 numbers and, more importantly, the 1650 still offered a notable performance boost over the 1050 Ti and a huge performance boost over the RX 560. So here the upgrade has worked well. Whether or not it's worth investing $150 in a graphics card for this computer is another story and we'll discuss that later on.

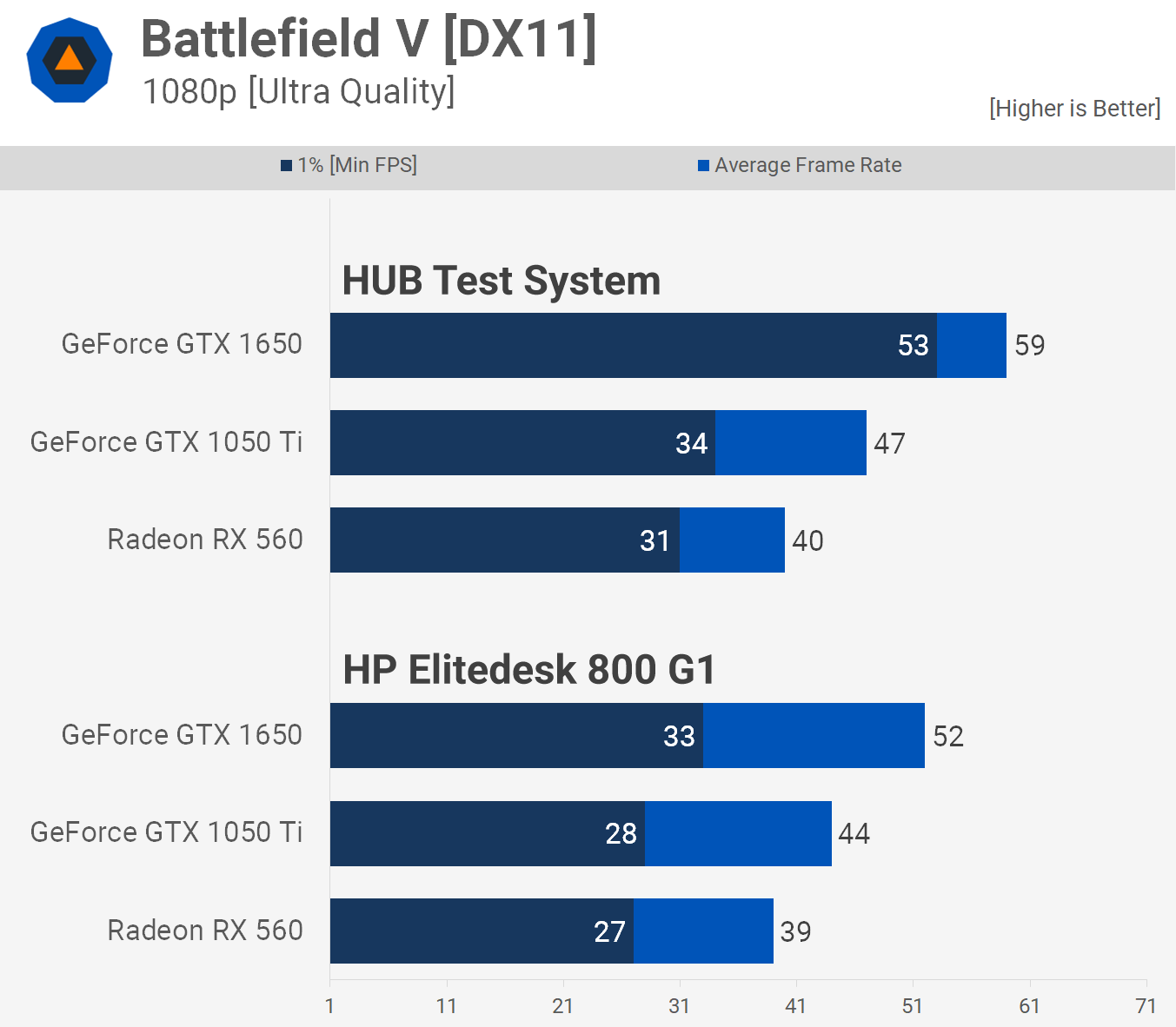

Now these results are pretty typical of what we saw in CPU intensive titles. In our test system the 1650 was 26% faster than the 1050 Ti on average and 56% faster for the 1% low result. However, when we move to the OEM system the 1650 is just 18% faster for both the average and 1% low. 18% is nothing to sneeze at but it does really hurt the value proposition of the GTX 1650.

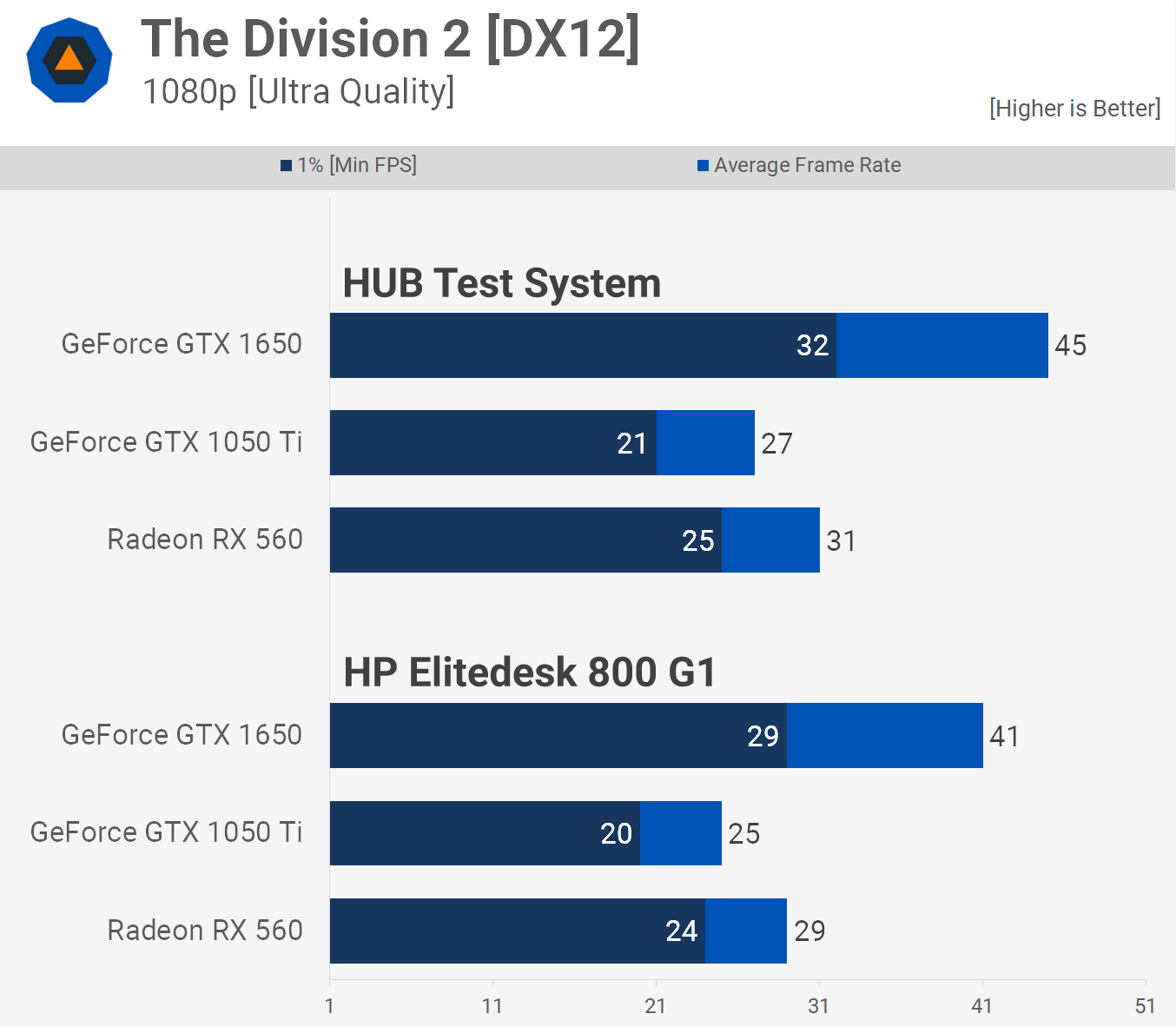

The Division 2 isn't massively CPU demanding so the performance drop is not so bad. We're not testing a terribly demanding section of the game as we typically use this for GPU testing as it's easy to replicate for accurate results. The OEM system will likely fall away in the more demanding sections, especially when playing with friends.

Far Cry New Dawn had the Core i5-4690 pegged at 100% throughout our testing and as a result we saw a 23% performance uplift when going from the OEM system to our test system for the 1650. The 1650 offered a nice 23% performance boost over the 1050 Ti in our test system, but this was down to 7% faster in the HP Elitedesk. In this instance the 1650 is really no better than the 1050 Ti and only 23% faster than the RX 560.

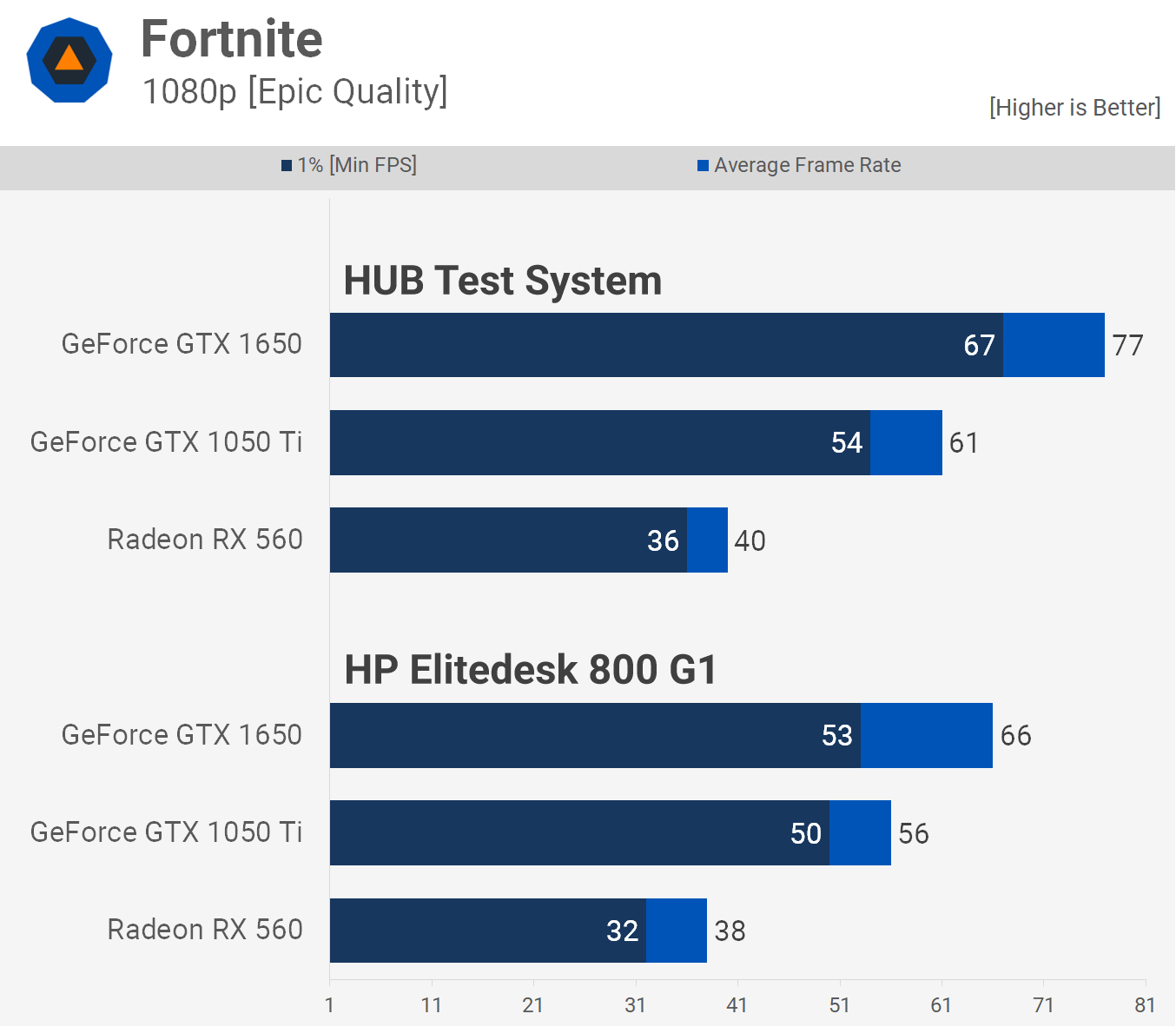

We have to say the Fortnite results are surprising. We expected to see similar margins to what we saw in Apex Legends. However, the high-end test system did considerably better, particularly when you look at the 1% low.

In the OEM system the GTX 1650 was 18% faster than the 1050 Ti for the average, but only 6% faster for the 1% low. This means the overall gaming experience was similar and here the 1650 didn't prove to be a worthwhile upgrade.

Now this is how the two systems will compare in heavily GPU bound titles. Forza Horizon 4 will play just fine with a dual-core, so the restricted Core i5-4690 makes out just fine in the HP Elitedesk.

Testing with Just Cause 4 tells a similar story to that of titles such as Battlefield V and Fortnite. Here the test system saw the 1650 beat the 1050 Ti by 31% when comparing the average frame rate and 30% for the 1% low. However in the OEM system the 1650 was just 17% faster than the 1050 Ti for the average frame rate and a mere 3% faster for the 1% low.

With Metro Exodus the OEM system cripples 1% low performance to the point that the 1650 isn't an upgrade over the 1050 Ti.

Although the 1% low performance also takes a big hit in Resident Evil 2 the GTX 1650 did offer noticeably better performance than the GTX 1050 Ti when installed in the HP Elitedesk.

With Rainbow Six Siege we only use the single player portion of the game as there's no real way to test multiplayer with accuracy. In this less demanding part of the game the 1% low performance is still pretty weak, but as was the case with Resident Evil 2, the 1650 is still able to deliver a noticeably better experience than the 1050 Ti.

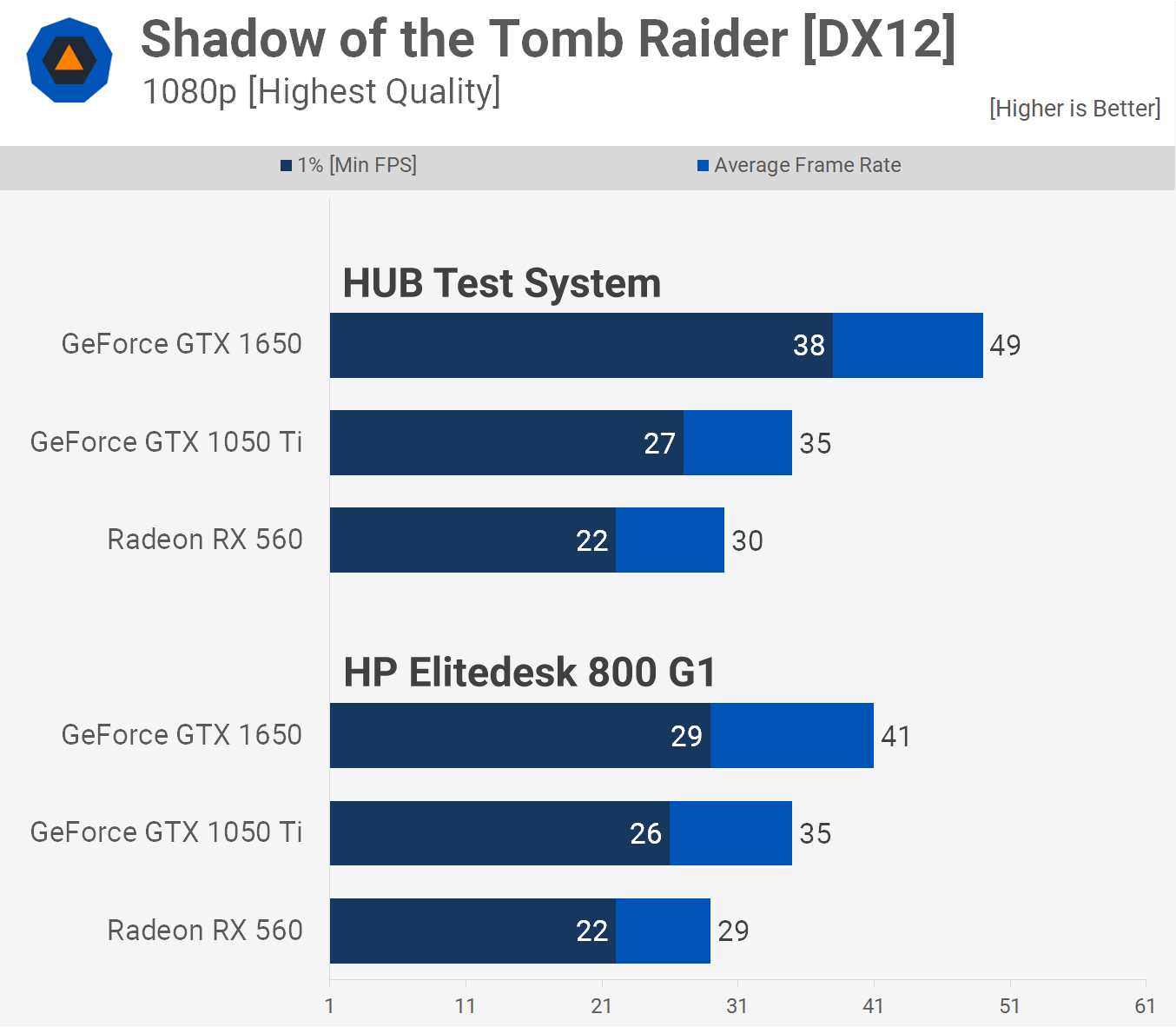

Finally we have Shadow of the Tomb Raider and again we see a noticeable downgrade in performance for the GTX 1650 on the OEM system.

Testing with Medium Settings

Before wrapping up the benchmarks we thought it would be worth looking at how the graphics cards stacked up in each system using the medium quality preset. So we re-tested with Fortnite and World War Z.

Using the medium quality preset in Fortnite allowed the GTX 1650 to deliver well over 100 fps at all times in our test system. Meanwhile, in the OEM system performance was still decent, however the 1650 is no longer a worthwhile upgrade over the 1050 Ti. Here the 1% low performance was nearly identical, while the 1650 was 14% faster when comparing average frame rates.

In World War Z, we previously saw near identical performance between the 1050 Ti and 1650 in the OEM system using ultra quality settings. The medium settings reduce CPU load and here the 1650 was able to pull ahead, though it was only 12% faster on average.

Performance Summary

Not much changed with the medium quality settings, at least the margins didn't. We would even argue that using medium to low quality settings plays into the 1050 Ti's favor as the GPU bound games become a little more CPU bound.

Our 12 game average graph paints a pretty accurate picture of the situation. The GTX 1650 was 34% faster than the 1050 Ti in our test system and 32% faster for the 1% low. Then in the OEM system it was 24% faster on average but more crucially just 12% faster for the 1% low, making for a less impressive upgrade coming from a 1050 Ti.
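To put that shrinkage in perspective, the rough Python sketch below works out how much of the test-system uplift survives in the OEM PC. It uses only the four 12-game figures quoted above; the `retained_share` helper and the dictionary layout are our own illustration.

```python
# 12-game average uplift of the GTX 1650 over the GTX 1050 Ti, in percent,
# as quoted in the article.
uplift = {
    "average fps": {"test_system": 34, "oem_pc": 24},
    "1% low": {"test_system": 32, "oem_pc": 12},
}

def retained_share(test_system: float, oem_pc: float) -> float:
    """Share of the test-system uplift that survives in the OEM PC, in percent."""
    return oem_pc / test_system * 100.0

retained = {metric: retained_share(**values) for metric, values in uplift.items()}
for metric, share in retained.items():
    print(f"{metric}: {share:.1f}% of the uplift retained")
```

By this measure roughly 70% of the average frame rate advantage carries over to the HP Elitedesk, but well under half of the 1% low advantage does.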

dGPU + OEM PC: Does It Make Sense?

Let's play out a few scenarios.

The first scenario is the 'hand me down': you were given an HP Elitedesk 800 G1 or similar OEM system with a proprietary power supply and motherboard. You don't have money to build a new system, so you've gotta make do with this thing. What's the best way to get gaming? Now remember this system owes you nothing, so spending a bit on it isn't too much of a big deal, at least within reason.

Do you really want to spend $150 to put a graphics card in it? We wouldn't, especially when in a decent computer that graphics card doesn't represent particularly good value. The GTX 1050 Ti seems to be a better fit, but we certainly wouldn't buy one of those new either. However, you can get them for less second hand. The average selling price on eBay at the start of the year was $105 but today we're seeing a few going for $80. That seems like a reasonable investment to get this system gaming.

Alternatively, a Radeon RX 560 for ~$50 is a real possibility and probably the way we'd go. Using medium to low quality settings will enable very playable frame rates. It's a small investment and frankly that makes sense for this scenario.

Second scenario: you're after a cheap PC and considering buying an HP Elitedesk 800 G1 or a similar OEM system with a proprietary power supply and motherboard... Don't! Do your research and avoid any PC with a proprietary power supply and motherboard, it's that simple. These things are typically selling for $180 on eBay and if you were to spend that kind of money and then another $150 on a GTX 1650 to stick in it, well, quite frankly you're beyond our help.

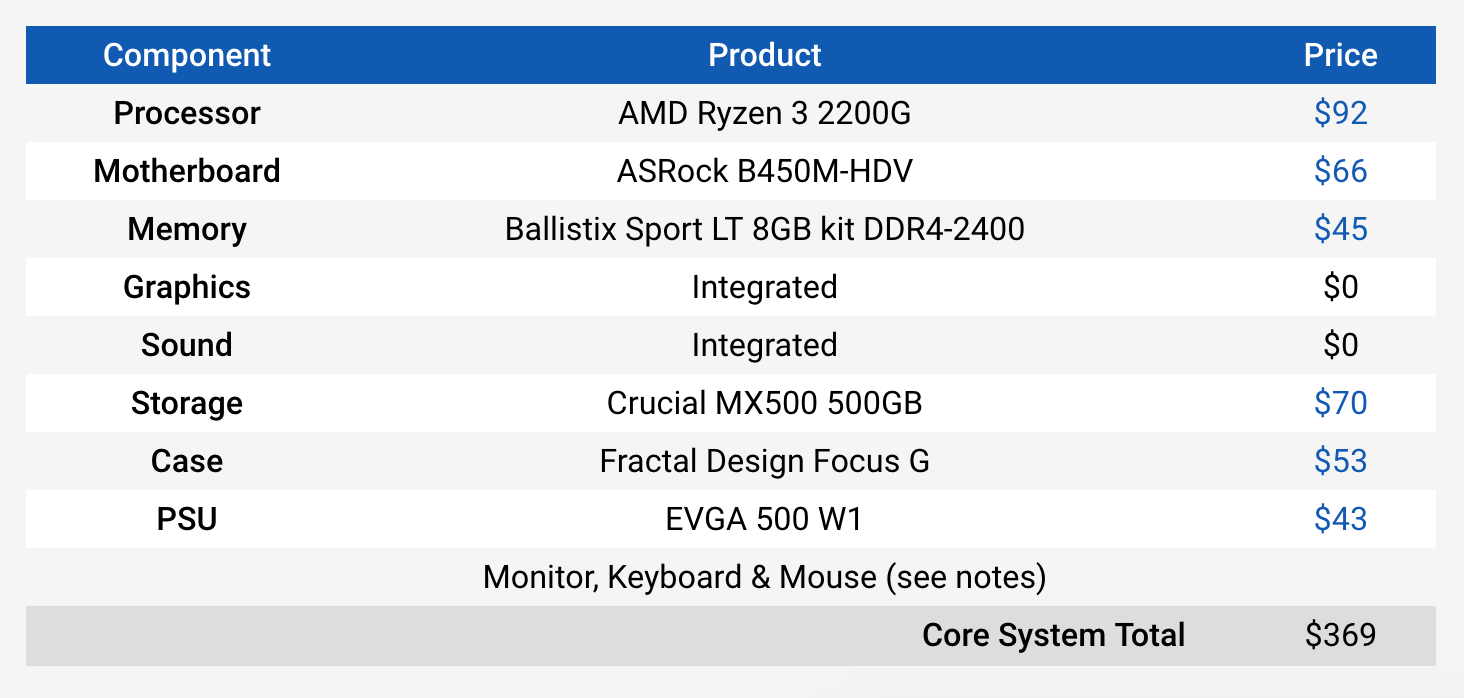

As a side note, we're currently working on updating TechSpot's PC Buying Guide and below is a preview of what the Budget Box looks like. Add a Radeon RX 570 for $130 and essentially for $500 you can build a decent machine that will decimate the HP Elitedesk 800 G1 with a GTX 1650.

Now moving on to our third scenario: you manage to buy an OEM system that's not a steaming pile of trash and won't strangle a GTX 1650 to death, but it has a power supply without a 6-pin PCIe power connector. Does it make sense to spend $150 to put a 1650 in it and enjoy around a 30% performance boost over the 1050 Ti?

That's a tough one. Again, a second-hand 1050 Ti can easily be had for $80. That makes the GTX 1650 almost 90% more expensive. Also when using medium quality settings at 1080p the experience is going to be very much the same. At this point we should say, you should watch a quick tutorial on how to change an ATX power supply. You've got the case door off, you're halfway there. Throw in a $35 power supply and chances are you're improving the system overall and now you open yourself up to a myriad of options that include new and used RX 570s that are faster.
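The value argument can be sketched in a few lines of Python. The prices are the ones quoted in this article; treating the 1050 Ti as a 1.0 baseline and the 1650 as 1.30 is our simplification of the "around 30%" uplift seen in a system that doesn't throttle.

```python
# Prices quoted in the article, in USD.
PRICE_GTX_1650_NEW = 150
PRICE_GTX_1050_TI_USED = 80

# How much more the new GTX 1650 costs than a used 1050 Ti, in percent.
premium = (PRICE_GTX_1650_NEW / PRICE_GTX_1050_TI_USED - 1) * 100

# Relative performance with the 1050 Ti as 1.0 (assumed simplification:
# 1.30 stands in for the "around 30%" uplift in a non-throttling system).
perf = {"GTX 1050 Ti (used)": 1.00, "GTX 1650 (new)": 1.30}
price = {
    "GTX 1050 Ti (used)": PRICE_GTX_1050_TI_USED,
    "GTX 1650 (new)": PRICE_GTX_1650_NEW,
}

# Dollars paid per unit of relative performance; lower is better value.
cost_per_perf = {card: price[card] / perf[card] for card in perf}

print(f"Price premium: {premium:.1f}%")
for card, cost in cost_per_perf.items():
    print(f"{card}: ${cost:.2f} per unit of performance")
```

Even with the full 30% uplift, the 1650 works out to about $115 per unit of performance against $80 for the used 1050 Ti, and the gap only widens in a system that throttles.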

Now getting back to our horrible HP Elitedesk 800 G1 for a moment. For the grand total of $7 shipped, we've ordered a 24-pin to 6-pin power supply adapter which will allow us to install a standard ATX power supply in the thing. Once that arrives we can throw in a cheap bronze-rated PSU and hook up an RX 570 to see how it goes. Overall performance won't be stellar, but for basically the same price as a GTX 1650 we'll be getting a few extra frames and titles such as Forza Horizon 4 will run even better.

It seems no matter which way you slice it, at $150 the GeForce GTX 1650 is a pointless product. At $100 it would be excellent, but at $150 it makes no sense, even if the intended purpose was to breathe new life into OEM systems.

Finally, there's a whole other conversation that goes beyond the scope of this article, but not that long ago a discussion started around Intel's TDP and how it's becoming less and less accurate. There was concern and rightfully so that this would cause trouble for OEMs who only build to the Intel spec with literally zero headroom.

We saw issues with the Intel Core i7-8700. The non-K model throttles badly with the included box cooler and there were reports of a few OEM systems that were 20% slower than results reported by those building their own PCs. This is indeed an entirely different conversation, but it's interesting to find that this is not a new issue and that it dates back well before Intel started playing fast and loose with their TDP rating. The Core i5-4690 and the rest of the locked Haswell Core i5 range featured an 84 watt TDP, yet this part never hit a package TDP of even 60 watts, so Intel had plenty of headroom there.