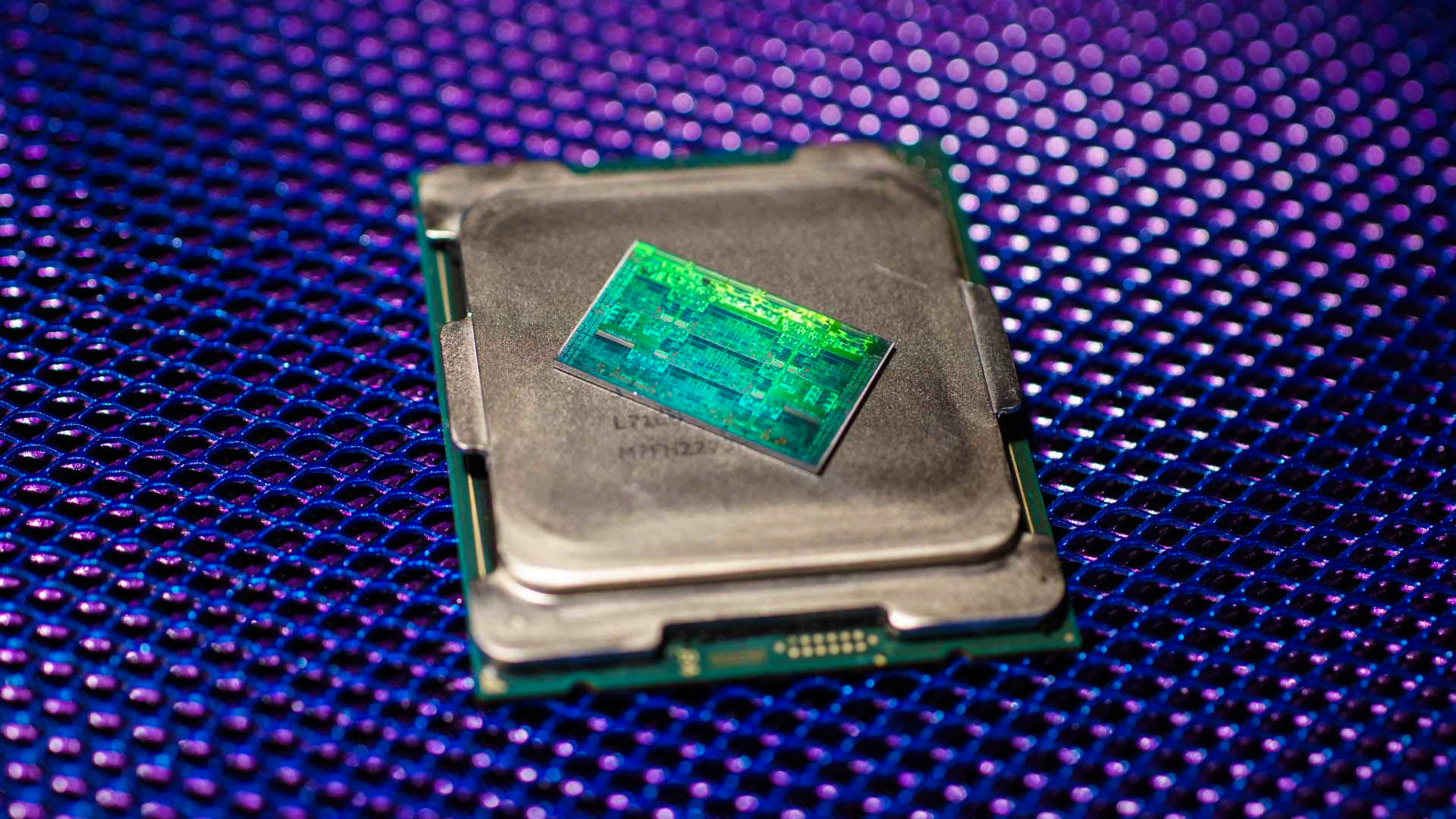

9 Years of AMD CPUs: From AMD FX to Ryzen 5000 Series, Tested

- Thread starter Steve

No, I meant the ones Intel gave them for their home systems.

The construction core was an utter disaster. I often wondered back then what would have happened if they had simply stuck with an optimized K10 instead. The Llano APU obliterated the later 4000 and 5000 series APUs, and many simply wanted AMD to take their Phenom II 1100T and put it on the 32nm node Bulldozer ran on.

Techspot has not tested Alder Lake. Nobody officially has.

zamroni111

Posts: 564 +304

But at much higher power consumption.

Interestingly, the new Intel flagship smokes them all, as tested by TechSpot. True story.

Stardock also got paid by AMD to support their first Ryzen processors. Nothing shady with either.

Of course it does. It turns out that Intel paid Stardock for the updated version that used 16 cores but ONLY 24 threads! (Someone posted the evidence of this in the comment section of the actual article about the AOTS test specifically). Stardock would never have done that on their own because before Alder Lake, there had never before been a 16-core 24-thread CPU. All 16-core CPUs had 32 threads. That leak is what is known in the industry as irrelevant.

It's about as trustworthy as SysMark, Intel's Compiler, LoserUserBenchmark and liquid cooling using a secret water chiller.

I used to really hate AMD because I had to live with an FX6300 for almost 10 years, but I am really glad I did not let that hate blind me earlier this year when I built a new PC. I almost went full blind with an Intel CPU without realizing how Intel hasn't really made any improvements and most of their CPUs were very power hungry and overpriced for a minimal performance gain compared to AMD Ryzens.

I am still not a big fan of AMD and I'd rather not use their products if I have better options, but their Ryzen line is a very respectable line of CPUs that made me change my opinion. The Ryzen 5600X I have is amazing and by far one of the best CPUs on the market.

Crinkles

Posts: 254 +222

Since when are lawsuits in the USA determined by who is right as opposed to "who just doesn't want to deal with it anymore"?

No court in some US state is going to define what a CPU core is for me when I've known damn well what a CPU core is for more than 20 years before that frivolous lawsuit case was brought forward.

CPUs are well defined (really well); might as well use Wikipedia for this.

A central processing unit (CPU), also called a central processor, main processor or just processor, is the electronic circuitry that executes instructions comprising a computer program. The CPU performs basic arithmetic, logic, controlling, and input/output (I/O) operations specified by the instructions in the program. This contrasts with external components such as main memory and I/O circuitry, and specialized processors such as graphics processing units (GPUs).

The form, design, and implementation of CPUs have changed over time, but their fundamental operation remains almost unchanged. Principal components of a CPU include the arithmetic logic unit (ALU) that performs arithmetic and logic operations, processor registers that supply operands to the ALU and store the results of ALU operations, and a control unit that orchestrates the fetching (from memory), decoding and execution of instructions by directing the coordinated operations of the ALU, registers and other components.

Throwing our years of experience out?

Anyway, this HAS BEEN settled. The Ca. case serves as a good example of the legal definition since it is the prevailing law. Might want to update your personal definition, maybe.

But at much higher power consumption

This is almost pointless; high power usage and heat production by these CPUs do not reduce performance until throttling occurs.

Techspot's 'testing', not so much testing as reporting.

Early benchmarks show how Intel's Core i9-12900K is coming after the Ryzen 9 5950X's performance crown

Intel's Alder Lake processor family is poised to be a much more exciting release than Rocket Lake, which was only an incremental upgrade over Ice Lake that...

www.techspot.com

Vanderlinde

Posts: 659 +451

You guys forget a few reasons why the FX was still very popular:

- Multithread power: it would beat the i7 on encoding and such.

- Overclocking headroom: buy a 3.2GHz model and push it towards 5GHz if you had the right motherboard, cooling and such.

Overclocking did yield more out of it, not just from the raw core clocks but also from the internal buses like the FSB or CPU/L3 northbridge.

If I remember well, my 8320 at 4.8GHz did around 767 CB, which was equal to a Ryzen 1600X.

They could take a punch: overvolting all the way to 1.6V and nothing went wrong. Unlike Ryzens today, which degrade in weeks if the overclocking is done improperly. With the birth of Ryzen, overclocking kind of died. PBO etc. is far more advanced than an all-core overclock.

Crinkles

Posts: 254 +222

Of course it does. It turns out that Intel paid Stardock for the updated version that used 16 cores but ONLY 24 threads! (Someone posted the evidence of this in the comment section of the actual article about the AOTS test specifically). Stardock would never have done that on their own because before Alder Lake, there had never before been a 16-core 24-thread CPU. All 16-core CPUs had 32 threads. That leak is what is known in the industry as irrelevant.

It's about as trustworthy as SysMark, Intel's Compiler, LoserUserBenchmark and liquid cooling using a secret water chiller.

Why wouldn't they? Release from 2018:

AMD and Stardock have announced the partnership for Star Control: Origins, which will be optimized for AMD Ryzen processors, Radeon FreeSync 2 technology, and eventually for the Vulkan API with a post-launch update. -Ritche Corpus, Head of Worldwide Content at AMD

The partnership also extends beyond the technical side, as we previously reported. Star Control: Origins will be offered as part of AMD's Raise the Game initiative to buyers of AMD Radeon RX Vega, Radeon RX 580 or RX 570 for free (from participating retailers, that is).

Star Control: Origins will launch out of Steam Early Access on September 20th. You can already purchase the game via Green Man Gaming at a sizable 25% discount, in case you're interested. -- WCCF

Money was on the table.

Sausagemeat

Posts: 1,597 +1,423

I remember when FX was out the AMD fans were all saying that it would be faster in the future because of its core count. That turned out to be utter bull lol.

For me these graphs show that Ryzen 1st and 2nd gen weren't that amazing considering there was a 5 year gap since the FX series, and how impressive the 5000 series actually is relative to everything else AMD have sold.

Still, I'm waiting for Alder Lake. I probably would have bought the Ryzen 5600X if I could have obtained a GPU, but I can't.

And I have a feeling that Alder Lake is going to eviscerate the 5000 series.

gamerk2

Posts: 1,357 +1,582

Bulldozer was a real fiasco.

Yet people bent over backward to defend it. "It'll get better once games use more cores" and all that.

I hated the design from the beginning; we already knew they wouldn't reach their target clocks from Intel's past experience with the Pentium 4, and the performance loss of a cache miss, the deep pipeline, and all the other parts of the architecture screamed "unoptimized POS".

EDIT

Bulldozer was even worse than even I (who I stress was pessimistic on it) expected. I remember when the first benchmarks of cache access times leaked; I declared them fake on the spot because, and I quote "there's no way AMD is so incompetent to design a CPU this bad." Bulldozer proved me wrong in that regard.

END EDIT

Consider that at the time AMD was trading under $2, had to sell their HQ, spin off their foundry, and had debt payments in excess of the company's total value. Had Ryzen failed, AMD wouldn't exist as it currently does.

Alder Lake is mostly for mobile. These are going to be Intel's big-little chips...

I remember when FX was out the AMD fans were all saying that it would be faster in the future because of its core count. That turned out to be utter bull lol.

For me these graphs show that Ryzen 1st and 2nd gen weren't that amazing considering there was a 5 year gap since the FX series, and how impressive the 5000 series actually is relative to everything else AMD have sold.

Still, I'm waiting for Alder Lake. I probably would have bought the Ryzen 5600X if I could have obtained a GPU, but I can't.

And I have a feeling that Alder Lake is going to eviscerate the 5000 series.

This is Intel's game plan to try to compete with AMD in the low-wattage range, as Zen scales down very well. Intel's Core tech does not. Which is why the M1 murders the Intel 15W chips yet doesn't murder AMD's 5800U in its 15W config.

Alder Lake is only a small improvement on the desktop front architecture-wise; most of the performance-per-watt gains come from the move to 10nm on the desktop. It will just be another mediocre product launch. They pushed the clocks so high on 14nm, and their IPC has only slightly improved. So a future on 10nm at lower clock speeds, without much in terms of IPC improvements, does not bode well for Intel.

Zen4 won't be too far away, with a new platform to run on. Going to really screw up intel's plans.

I'll be moving from my i7 to Zen4, that is all I know. Unless somehow Intel pulls magic out of a hat.

gamerk2

Posts: 1,357 +1,582

Of course it does. It turns out that Intel paid Stardock for the updated version that used 16 cores but ONLY 24 threads! (Someone posted the evidence of this in the comment section of the actual article about the AOTS test specifically). Stardock would never have done that on their own because before Alder Lake, there had never before been a 16-core 24-thread CPU. All 16-core CPUs had 32 threads. That leak is what is known in the industry as irrelevant.

It's about as trustworthy as SysMark, Intel's Compiler, LoserUserBenchmark and liquid cooling using a secret water chiller.

Threads do not map to cores 1:1; they get scheduled on whichever core is free at any given time. Hence why Ryzen 1 had performance issues when threads jumped across cores, leading to cache misses. Windows maximizes thread uptime, which works when you have a unified L2/L3 cache, but causes all sorts of weird performance cases in other situations.

Likewise, software engineers have been using threads for literally 50 years now; you make a thread when you have some sub-system that is not dependent on having to wait for some other part of the system in order to run. I've made programs that use hundreds of threads; Windows handles the scheduling, and everything works. I've also made programs where one thread does everything, since there was no benefit whatsoever to threading.

Anyone who thinks the number of threads must equal the number of CPU cores really has no idea about modern software design principles, let alone how CPU schedulers work.
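
To illustrate the point, here is a minimal Python sketch, not anything taken from AOTS or Windows internals. It deliberately starts four times as many threads as the machine has logical CPUs and lets the OS scheduler sort it out. The function name `run_workers` and the dummy workload are made up for the example.

```python
import os
import threading


def run_workers(thread_count: int) -> list[int]:
    """Start thread_count threads and collect one result from each."""
    results = [0] * thread_count

    def worker(index: int) -> None:
        # Each thread does a small independent job; the OS scheduler,
        # not this program, decides which core it runs on.
        results[index] = sum(range(1000))

    threads = [threading.Thread(target=worker, args=(i,)) for i in range(thread_count)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


if __name__ == "__main__":
    logical_cpus = os.cpu_count() or 1
    thread_count = logical_cpus * 4  # deliberately more threads than logical CPUs
    results = run_workers(thread_count)
    print(f"{logical_cpus} logical CPUs ran {len(results)} threads to completion")
```

Note that in CPython the global interpreter lock keeps these threads from executing Python bytecode in parallel, so this only shows that the thread count is independent of the core count. It is not a benchmark.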

gamerk2

Posts: 1,357 +1,582

The problem is that all those little performance-enhancing bits Intel has added to x86 over the years cost a lot of power. When M1/ARM scale up they'll start drawing similar power numbers for much the same reason.

Alder Lake is mostly for mobile. These are going to be Intel's big-little chips...

This is Intel's game plan to try to compete with AMD in the low-wattage range, as Zen scales down very well. Intel's Core tech does not. Which is why the M1 murders the Intel 15W chips yet doesn't murder AMD's 5800U in its 15W config.

Hence Intel's issue: to scale down they need to reduce performance, remove features (potentially breaking compatibility), and manage an entirely different product line.

Yet AMD has been able to do just that: make high-performance x86 designs scale down. Intel has not.

The problem is that all those little performance-enhancing bits Intel has added to x86 over the years cost a lot of power. When M1/ARM scale up they'll start drawing similar power numbers for much the same reason.

Hence Intel's issue: to scale down they need to reduce performance, remove features (potentially breaking compatibility), and manage an entirely different product line.

Zen 3 is pretty competitive in terms of performance per watt, even compared to the best ARM has to offer.

bluetooth fairy

Posts: 235 +166

Funny to see the FX-8350 alongside the 6900 XT. You could probably use something like a GTX 1050 to make the FX look more relevant for its day. Oh, you do remember the days of the FX, at least. And who the hell was Matt, whom your host Steve calls out there? Man, what a time it was.

You guys forget a few reasons why the FX was still very popular:

- Multithread power: it would beat the i7 on encoding and such.

- Overclocking headroom: buy a 3.2GHz model and push it towards 5GHz if you had the right motherboard, cooling and such.

Overclocking did yield more out of it, not just from the raw core clocks but also from the internal buses like the FSB or CPU/L3 northbridge.

If I remember well, my 8320 at 4.8GHz did around 767 CB, which was equal to a Ryzen 1600X.

They could take a punch: overvolting all the way to 1.6V and nothing went wrong. Unlike Ryzens today, which degrade in weeks if the overclocking is done improperly. With the birth of Ryzen, overclocking kind of died. PBO etc. is far more advanced than an all-core overclock.

Which CB? 15? Because my Ryzen 5 1600 at 3.9GHz would score around 1300.

Mr Majestyk

Posts: 2,425 +2,259

Wish the article included non-frivolous tests other than gaming. How about running a fluid sim, a MATLAB script or a video editing workload?

Avro Arrow

Posts: 3,721 +4,822

I'm not an American so no, it's NOT the prevailing law. As I said though, it leaves the window open to sue EVERY CPU manufacturer for not really selling CPUs in the pre-486DX days because NONE of them had an FPU included.

CPUs are well defined (really well); might as well use Wikipedia for this.

Throwing our years of experience out?

Anyway, this HAS BEEN settled. The Ca. case serves as a good example of the legal definition since it is the prevailing law. Might want to update your personal definition, maybe.

Avro Arrow

Posts: 3,721 +4,822

I don't have a problem with Intel doing this, I have a problem with it not being disclosed, that's all.

Why wouldn't they? Release from 2018:

Money was on the table.

Avro Arrow

Posts: 3,721 +4,822

That's true when running several programs at once, but what if you're only running one that is capable of taxing all of the CPU's resources at once, like running AOTS on a system that's been made to be so barebones that it isn't even hooked up to the web?

Threads do not map to cores 1:1; they get scheduled on whichever core is free at any given time. Hence why Ryzen 1 had performance issues when threads jumped across cores, leading to cache misses. Windows maximizes thread uptime, which works when you have a unified L2/L3 cache, but causes all sorts of weird performance cases in other situations.

Likewise, software engineers have been using threads for literally 50 years now; you make a thread when you have some sub-system that is not dependent on having to wait for some other part of the system in order to run. I've made programs that use hundreds of threads; Windows handles the scheduling, and everything works. I've also made programs where one thread does everything, since there was no benefit whatsoever to threading.

Anyone who thinks the number of threads must equal the number of CPU cores really has no idea about modern software design principles, let alone how CPU schedulers work.

I can guarantee you that to get the best numbers, the only thing running other than AOTS for that benchmark was Windows with every possible utility turned off. Under normal circumstances, of course you're correct. My PC runs hundreds of threads at once with only 6 physical cores and 6 SMT logical cores. I never meant to imply that it's all that they can do. I was implying that in this very specific situation, it could make a difference.

I'm not automatically denying that the next-gen Intel is faster than the current-gen AMD, that would be stupid because Intel has always had good CPU performance. I'm just not liking how this benchmark was done without full disclosure being provided.

K10 was never supposed to exist. It was developed because other architectures failed and AMD needed something before Bulldozer. AMD had at least two architectures that never made it before Bulldozer. How do I know? The Athlon 64 architecture was ready in 1999. The Bulldozer architecture was started around 2007 and was ready around 2009. That leaves an 8-year gap in which AMD developed only K10, which was just a little tweak to K8.

The construction core was an utter disaster. I often wondered back then what would have happened if they had simply stuck with an optimized K10 instead. The Llano APU obliterated the later 4000 and 5000 series APUs, and many simply wanted AMD to take their Phenom II 1100T and put it on the 32nm node Bulldozer ran on.

Needless to say they tried something but failed.

That is because Bulldozer was supposed to reach at least 5 GHz clock speeds with decent cooling. Due to some design flaws (the L2 cache limiting clock speed), that didn't happen.

The thing is, even when AMD discovered that Bulldozer's IPC was worse than Deneb/Thuban/Llano, they didn't do anything about it. I blame AMD for that because they had plenty of time to change their minds. As it is, I wouldn't touch a craptop with an FX CPU because they were pretty expensive for what you got, unlike the $170CAD I paid for my FX-8350 brand-new. The fact that they drank juice as fast as an alcoholic puts away rum coolers certainly didn't help them in the mobile space. AMD would have been better off sticking to Llano derivatives for mobile applications.

Exactly, settled. The court didn't decide anything. Also, the whole case was not about the number of cores; it was about "double the cores means double the performance" advertising. It was never about whether the FX has 8 cores, but whether double the cores also means double the performance. Steve is clearly wrong here.

Throwing our years of experience out?

Anyway, this HAS BEEN settled. The Ca. case serves as a good example of the legal definition since it is the prevailing law. Might want to update your personal definition, maybe.

M1 vs Ryzen 5800U is not a valid comparison since the M1 is not a CPU. In other words, it has no support for external memory.

This is Intel's game plan to try to compete with AMD in the low-wattage range, as Zen scales down very well. Intel's Core tech does not. Which is why the M1 murders the Intel 15W chips yet doesn't murder AMD's 5800U in its 15W config.

Steve

What was the exact test configuration?

Was SMT enabled or not?

With the exception of the 1800X, we limited all CPUs to 4 cores running at 4.2 GHz. The 1800X still ran with just 4 cores enabled, but they were clocked at 4.1 GHz as that was the highest stable frequency I could achieve with that part.

Also, what was the FX-8350 core configuration?

1+1+1+1

2+2+2+2

2+2+0+0

or something else? Since a Bulldozer module contains two ALUs, all but one of them can be disabled and it still works. However, you cannot disable all physical cores on Ryzen because a logical core alone (without a physical core) is useless.

The FX-8350 is no doubt an 8-core since you CAN disable half of the cores (I have tried it personally; that's how Windows 7 handles them) and still have 4 cores available. The default configuration is 4 modules with 2 cores each, that is 2+2+2+2. Now if that is a 4-core, then what is 1+1+1+1? A 2-core??

Anyway, the exact configuration is missing from the article.
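
To make the notation concrete, here is a rough Python sketch that tallies active cores and modules for each layout listed in the question. The function names and dictionary keys are invented for illustration. It only does arithmetic on the `a+b+c+d` notation and assumes nothing about how a BIOS or Windows actually exposes the cores.

```python
def parse_config(config: str) -> list[int]:
    """Turn a string like '2+2+0+0' into per-module active core counts."""
    counts = [int(part) for part in config.split("+")]
    if any(c not in (0, 1, 2) for c in counts):
        raise ValueError("each module can have 0, 1 or 2 active cores")
    return counts


def summarize(config: str) -> dict[str, int]:
    """Count active modules, active cores, and modules running both cores."""
    counts = parse_config(config)
    return {
        "active_modules": sum(1 for c in counts if c > 0),
        "active_cores": sum(counts),
        "modules_with_both_cores": sum(1 for c in counts if c == 2),
    }


if __name__ == "__main__":
    for config in ("1+1+1+1", "2+2+2+2", "2+2+0+0"):
        print(config, summarize(config))
```

Both `1+1+1+1` and `2+2+0+0` come out as 4 active cores, but the first spreads them over four modules while the second packs them into two, which is why the exact layout matters for a "4 cores at 4.2 GHz" comparison.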
