Why it matters: As predicted, one of the items AMD unveiled during its Product Premiere livestream this week was the Radeon RX 6500 XT. The card arrives on January 19 with an MSRP of $199. AMD previously said it's expected to be the most accessible and affordable card of the last two years, but the suggested selling price already looks optimistic, which is hardly surprising.

A report from French site Cowcotland claims that the Radeon RX 6500 XT will sell for 299 euros in France, making it around 50% more expensive than the European MSRP, according to VideoCardz. That markup is about the same as, or slightly lower than, the premium over MSRP that other RDNA 2 cards carry in Europe.

In an interview with PCWorld about the Radeon RX 6500 XT, AMD CEO Dr. Lisa Su said, “We’re positioning the launch such that – and I know, you guys always say, ‘Well, yeah, they’re just saying that’ – but we really are positioning the launch at a $199 price point. It is sort of affordable to the mainstream. You know, we intend to have a lot of product out there.”

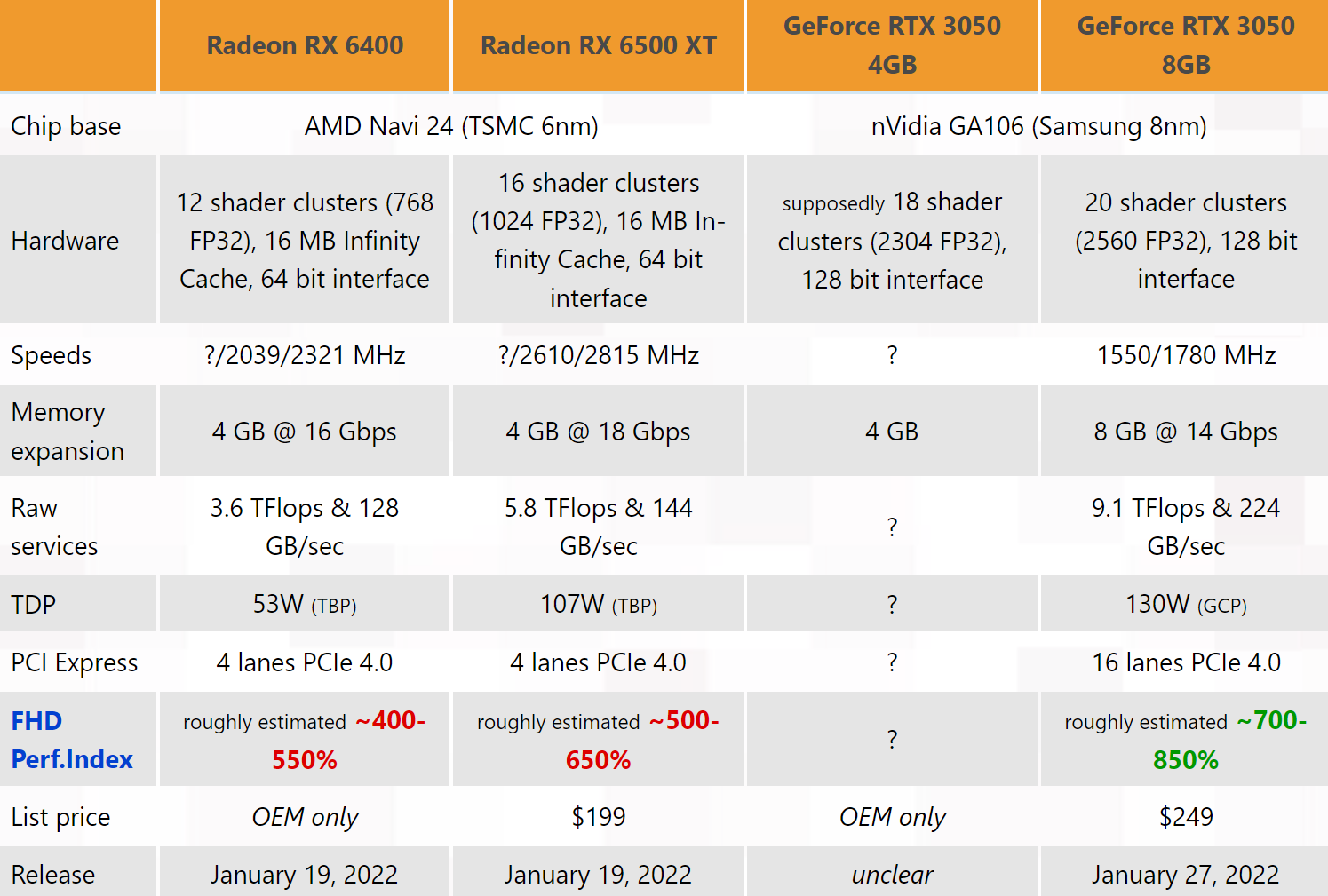

Image courtesy of 3DCenter

As for the card itself, the RX 6500 XT is built on a new 6nm process and features a PCIe 4.0 x4 interface, 16 compute units, 16MB of Infinity Cache, a 2.6 GHz game clock, and 4GB of GDDR6 on a 64-bit bus. While many may balk at the paltry amount of VRAM, using 4GB was an intentional move by AMD to make the card less appealing to miners, which should improve availability and help keep the selling price down. That’s the plan, anyway.

It’ll certainly be interesting to see how Nvidia’s response to the RX 6500 XT, the desktop RTX 3050, will fare when it arrives in late January. Team green’s card has 8GB of VRAM and a $249 MSRP, but it will likely face more demand from miners, potentially pushing up the price and exacerbating availability problems.

You can watch AMD's full Product Premiere livestream here and Nvidia's CES keynote here.

https://www.techspot.com/news/92895-radeon-rx-6500-xt-reportedly-cost-more-than.html