In brief: YouTube is continuing to fight back against inappropriate and offensive content appearing on the platform. In a recent report, the company revealed it removed a total of 58 million videos and 224 million comments in the third quarter alone.

The Google-owned site has long faced accusations that it doesn’t do enough to combat videos containing extremism, pornography, abuse, and other policy-violating material. But through a combination of people and technology, millions of these videos and channels are being flagged and removed.

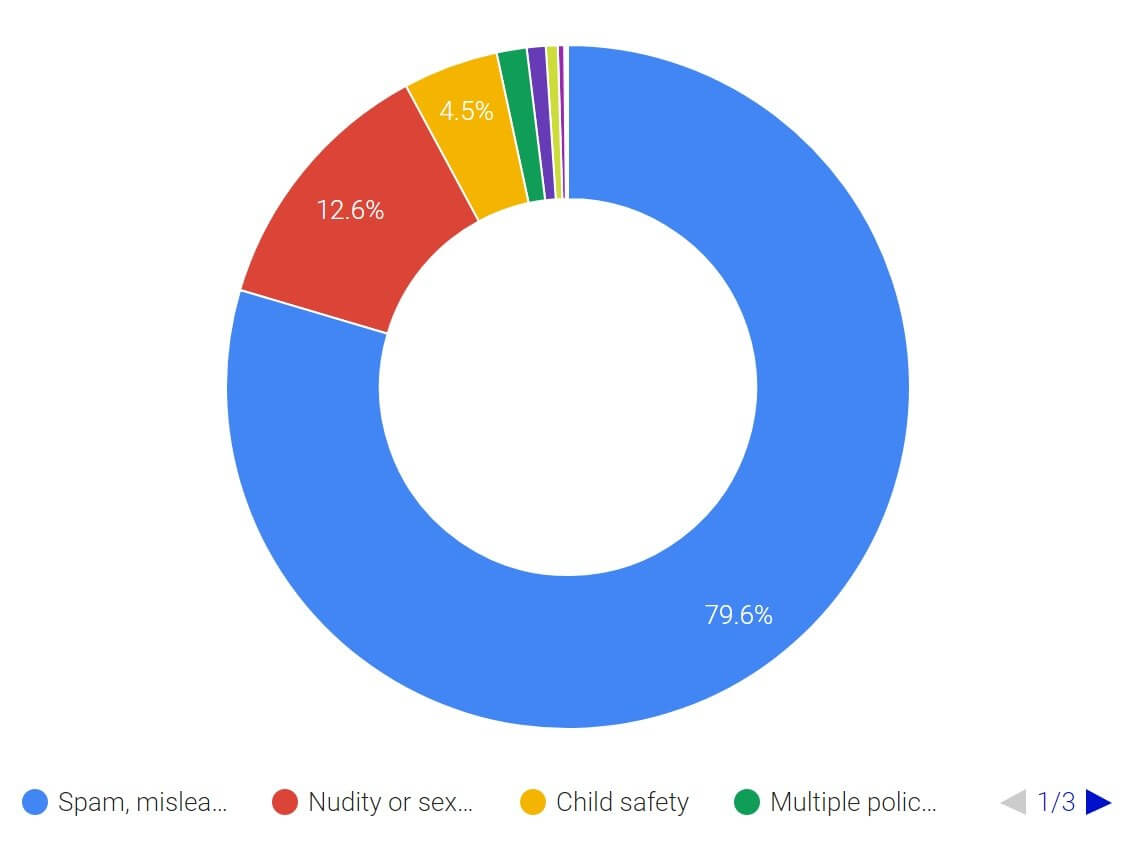

The company explains that a channel is deleted if it accrues three community guideline strikes in 90 days or commits a single case of severe abuse, which includes predatory behavior. Over 1.6 million channels were removed in Q3 2018, along with the 50.2 million videos they contained. In September, most of these channels, almost 80 percent, were deleted because they fell under the category of spam, misleading content, and scams.

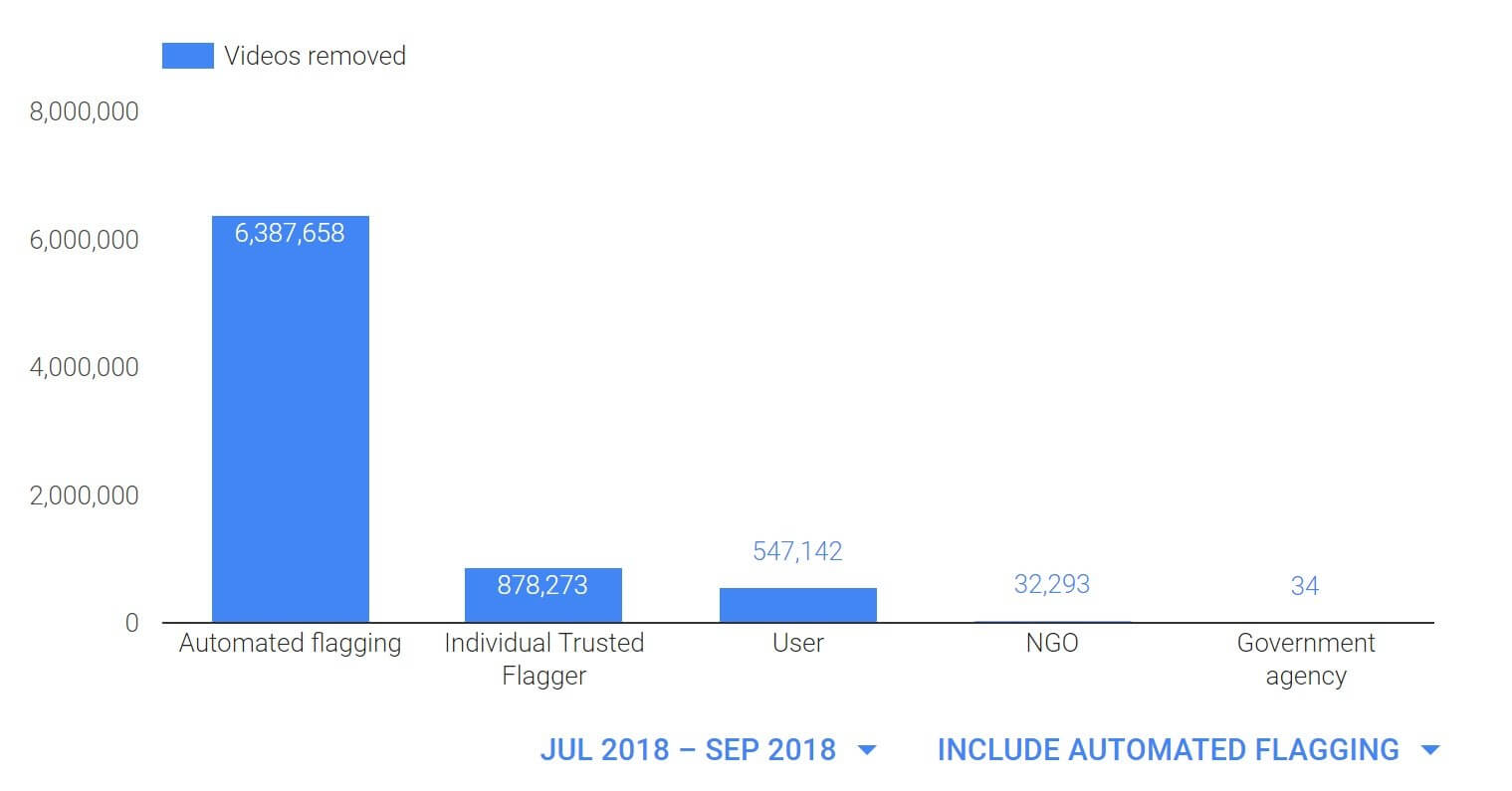

Of the 7.8 million videos that were removed individually, rather than as part of a channel removal, 6.3 million were first flagged by machines before being forwarded to human moderators for deletion, and 74.5 percent of these never got a single view. Human flaggers come in the form of trusted moderators, users, NGOs, and government agencies. As with the offending channels, most videos were deleted because of spam, misleading, and scam content (72 percent), while child safety was second at just over ten percent. Violent extremism made up just 0.4 percent of these videos.

Finally, YouTube removed a massive 224 million comments during the quarter, the vast majority of which were spam identified by automated flagging. Just 0.5 percent were deleted as a result of human flagging.

While Google has increased its number of moderators to over 10,000 this year, the 300+ hours of video uploaded every minute make it impossible to pre-check every one.

https://www.techspot.com/news/77865-youtube-took-down-58-million-videos-q3-tos.html