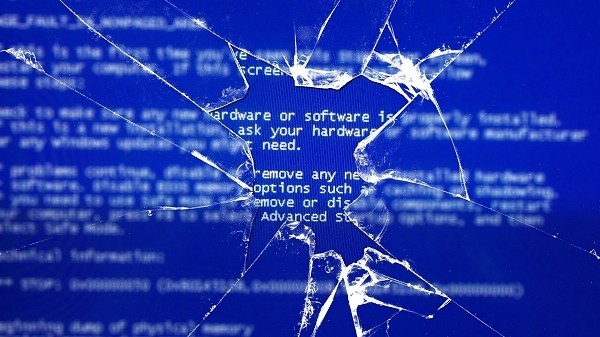

Depending on what you are doing, a computer crash can be anything from a minor annoyance to a complete disaster. I've been experiencing some BSODs of unknown origin lately and let me be the first to tell you, it's pretty darn annoying when you are right in the middle of something important - like work - and everything shuts down before you get a chance to save.

Fortunately, researchers at University College London (UCL) have come up with a solution they say will end computer crashes forever.

Today's computers typically work procedurally: they pull data from memory, work on the data, then send it back to memory. This usually happens in a fixed order and, until something goes wrong, all is well. When a process fails or crashes for whatever reason, however, everything hits the fan and the computer will often lock up.

The computer that UCL has developed differs in that data and instructions are essentially mirrored across several different systems. The systems work simultaneously but independently of each other - the only thing they share is a section of memory for context-sensitive data.

In the event that one system crashes or its data becomes corrupted, the computer is able to rebuild that set of data from another system and start fresh. The systems are said to execute in a random order, with a pseudorandom number generator acting as the task scheduler.
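To make the idea a little more concrete, here is a minimal Python sketch of the behavior described above. It is not UCL's implementation: the `System` class, the `run` function, the `healthy` flag used to detect corruption, and the dictionary standing in for shared memory are all hypothetical names and simplifications invented for illustration.

```python
import copy
import random


class System:
    """Hypothetical unit that holds its own copy of the data and an instruction."""

    def __init__(self, name, data, instruction):
        self.name = name
        self.data = copy.deepcopy(data)   # each system gets a mirrored copy
        self.instruction = instruction
        self.healthy = True               # assumption: corruption is detectable

    def step(self, shared_context):
        if not self.healthy:
            raise RuntimeError(f"{self.name} has corrupted data")
        self.instruction(self.data, shared_context)


def run(systems, shared_context, steps, seed=0):
    """Pick systems in pseudorandom order; rebuild a failed one from a healthy peer."""
    rng = random.Random(seed)             # the PRNG plays the role of task scheduler
    recoveries = 0
    for _ in range(steps):
        system = rng.choice(systems)
        try:
            system.step(shared_context)
        except RuntimeError:
            # Rebuild the damaged data from any other healthy system.
            donor = next(s for s in systems if s.healthy and s is not system)
            system.data = copy.deepcopy(donor.data)
            system.healthy = True
            recoveries += 1
    return recoveries


def add_increment(data, shared_context):
    """Example instruction: update the one piece of shared state."""
    shared_context["total"] += data["increment"]


if __name__ == "__main__":
    context = {"total": 0}                # stand-in for the shared memory section
    systems = [System(f"system-{i}", {"increment": 2}, add_increment) for i in range(3)]

    # Simulate corruption in one system before the run starts.
    systems[1].data = None
    systems[1].healthy = False

    recovered = run(systems, context, steps=50)
    print("recoveries:", recovered, "total:", context["total"])
```

Because no single system is a point of failure, the run keeps going after the corrupted copy is hit; the only cost in this sketch is the one step lost to the repair.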

At this point, performance isn't all that great, but there's certainly room for improvement. If you're interested in learning more about this developing technology, the developers will present their findings at the IEEE International Conference on Evolvable Systems in April.

Group of technicians repair illustration (homepage) by Shutterstock.