The release of Nvidia's GeForce GTX 980 and 970 graphics cards with their Maxwell architecture is an impressive feat that cannot be overstated. We have no doubt that AMD will rebound and answer these cards at some point, as the graphics industry has historically been a tug of war, but right now Nvidia's flagship cards have pulled off a rare hat trick: they're faster, they're cooler, and they're more efficient than both Kepler and AMD's GCN-based cards.

These new cards are already becoming well known for their staggering overclocking headroom, and the generous 125% power limit Nvidia offers for overclockers is still almost comically easy to hit. The GTX 980 is rated for an exceptionally frugal 165W, but Nvidia does tighten the reins a bit to hit that target.

Guest author Dustin Sklavos is a Technical Marketing Specialist at Corsair and has been writing in the industry since 2005. This article was originally published on the Corsair blog.

Since manufacturer TDPs can often be a bit rosier than real-world usage would indicate, we've decided to see just how efficient Nvidia's Maxwell architecture is. We've taken a GeForce GTX 980 and pushed it about as hard as we can without modifying the BIOS. We've analyzed whether you'll want to prioritize overclocking the GPU (as with GK110) or the video memory (as with GK104), as well as the performance and power impacts of going with either or both.

Our charts list our overclocks as "Low," "Moderate," and "High," and the table below details which settings correspond to which label. Remember that since Kepler, Nvidia has used offsets for overclocking instead of specific clock speeds. Also, stock runs with an automatically controlled fan and a 100% power limit, while all overclocks have the fan set manually to 75% and the power limit to 125%.

| | GPU Only | VRAM Only | Both |
| --- | --- | --- | --- |
| Low | +100 GPU, 0 VRAM | 0 GPU, +100 VRAM | +100 GPU, +100 VRAM |
| Moderate | +150 GPU, 0 VRAM | 0 GPU, +200 VRAM | +150 GPU, +200 VRAM |
| High | +200 GPU, 0 VRAM | 0 GPU, +300 VRAM | +200 GPU, +300 VRAM |

In practice, these overclocks take the peak boost clock on the core from 1278MHz at stock up to 1478MHz at the highest setting. The video memory runs at 7GHz stock and reaches 7.6GHz at the highest setting.
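The offset arithmetic behind those figures can be sketched in a few lines of Python. This is an illustration rather than a tool we used: the function name is hypothetical, and the doubling of the memory offset is an assumption inferred from the numbers above (a +300 offset taking the memory from 7GHz to 7.6GHz). Actual boost clocks will also move around with the power limit and temperature.

```python
# Illustrative only: maps the offsets from the table above to resulting clocks.
STOCK_PEAK_BOOST_MHZ = 1278      # peak boost observed at stock (from the article)
STOCK_VRAM_EFFECTIVE_MHZ = 7000  # 7GHz effective GDDR5 data rate at stock

# Assumption: each 1MHz of memory offset adds 2MHz to the effective data rate,
# which is what the article's 7GHz -> 7.6GHz at +300 implies.
VRAM_OFFSET_MULTIPLIER = 2


def resulting_clocks(gpu_offset_mhz, vram_offset_mhz):
    """Return (peak boost MHz, effective VRAM MHz) for a pair of offsets."""
    core = STOCK_PEAK_BOOST_MHZ + gpu_offset_mhz
    vram = STOCK_VRAM_EFFECTIVE_MHZ + VRAM_OFFSET_MULTIPLIER * vram_offset_mhz
    return core, vram


# The "Both" column of the table: (GPU offset, VRAM offset).
OVERCLOCKS = {"Low": (100, 100), "Moderate": (150, 200), "High": (200, 300)}

for label, (gpu, vram) in OVERCLOCKS.items():
    core_mhz, vram_mhz = resulting_clocks(gpu, vram)
    print(f"{label}: {core_mhz}MHz core, {vram_mhz / 1000:.1f}GHz VRAM")
```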

Our testbed:

- CPU: Intel Core i7-5960X @ 4GHz, 1.1Vcore

- Motherboard: ASUS X99-Deluxe

- Memory: 4x8GB Corsair Dominator Platinum DDR4-2666

- Storage: 128GB and 256GB Corsair Force LX SSDs

- CPU Cooler: Corsair Hydro Series H100i

- Power Supply: Corsair AX1200i 1200W 80 Plus Platinum

Benchmarks:

- BioShock Infinite, 3840x2160 Ultra Preset + DDOF

- Metro: Last Light Redux, 3840x2160 Very High Preset, no AA or PhysX

- Tomb Raider, 3840x2160 Ultimate Preset

- 3DMark Fire Strike Ultra

We also used Corsair Link to monitor power consumption through the AX1200i power supply, which is how we can report both average and peak power for each benchmark.
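Reducing a power log to those two numbers is simple. Below is a minimal sketch, assuming the readings have already been exported as a list of wattages. The sample values are placeholders for illustration, not our measurements, and `summarize_power` is a hypothetical helper, not part of Corsair Link.

```python
from statistics import mean


def summarize_power(samples_watts):
    """Return (average, peak) for a sequence of power readings in watts."""
    if not samples_watts:
        raise ValueError("need at least one power sample")
    return mean(samples_watts), max(samples_watts)


# Placeholder readings, NOT measured data from this article.
example_samples = [250.0, 262.0, 271.0, 258.0, 284.0]

average_w, peak_w = summarize_power(example_samples)
print(f"average: {average_w:.1f}W, peak: {peak_w:.1f}W")
```

Reporting both matters because a short spike can sit well above the average, which is exactly the gap the charts below show.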

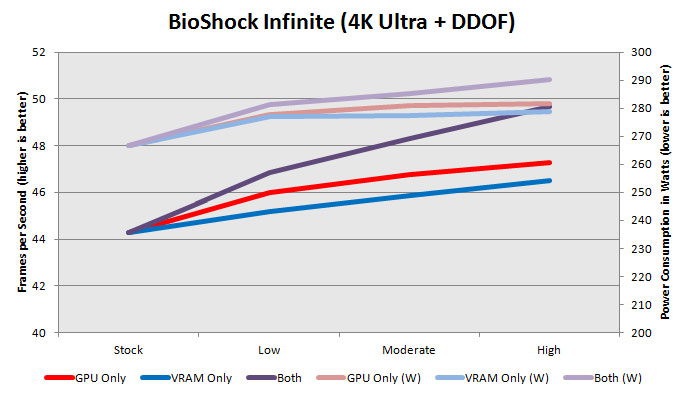

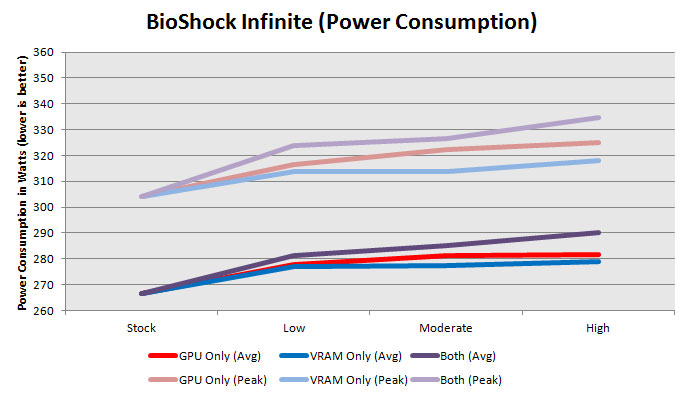

Right away you can see just how efficient the Maxwell architecture is, even with the substantially increased 125% power limit. Average power consumption increases by less than 30W from stock to maximum overclock (and peak power by just 30W), but you get another ~6 fps at these extremely intense settings. Starting from 44fps, brushing up against 50fps is not insignificant. Overclocking the VRAM and GPU together is the way to go with BioShock Infinite, but if you have to pick one, the GPU gives the bigger return.
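To put that gain in relative terms, here is a quick back-of-the-envelope calculation using the rounded figures quoted above (44fps at stock, roughly 6 fps gained). The exact chart values may differ slightly, and `percent_gain` is just an illustrative helper.

```python
def percent_gain(baseline, gain):
    """Relative improvement over a baseline, as a percentage."""
    return gain / baseline * 100


stock_fps = 44   # stock result quoted above
gained_fps = 6   # approximate gain at the highest overclock

print(f"{percent_gain(stock_fps, gained_fps):.1f}% faster than stock")
```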

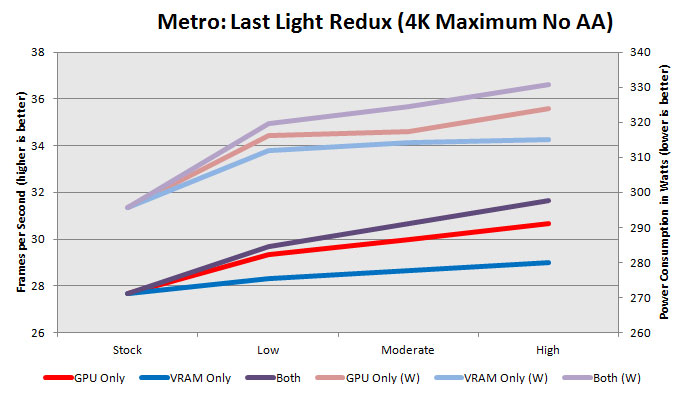

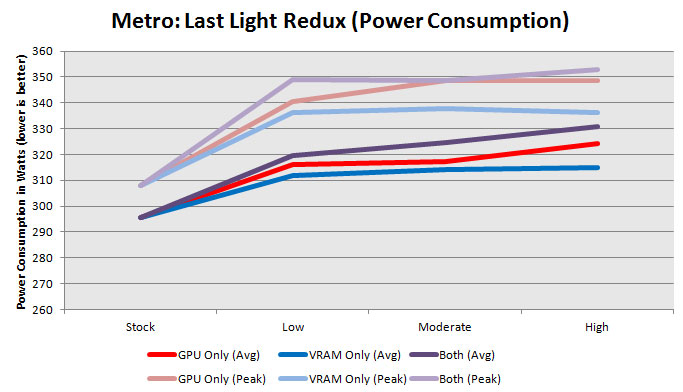

Metro: Last Light Redux is absolutely punishing on graphics hardware, and easily pulls the most power of all of our benchmarks. Power consumption seems to scale pretty linearly with performance (more on this later), and once again the GPU yields far greater benefits than the VRAM. Average power consumption rises by roughly the same amount as in BioShock Infinite, about 30W. Peak power skyrockets, though, going up almost 50W.

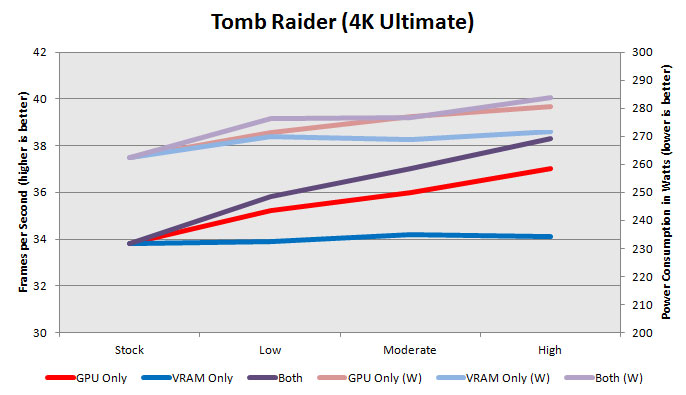

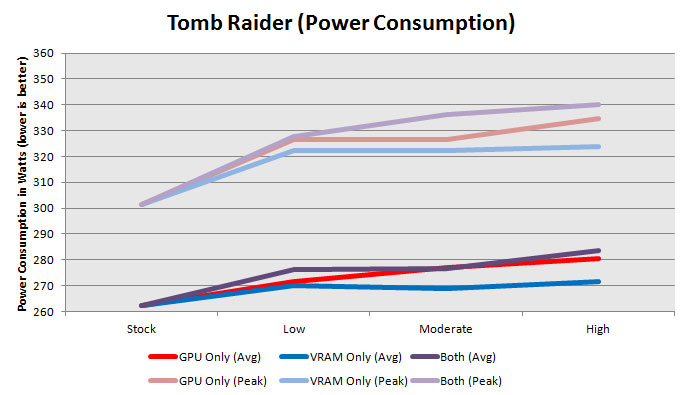

Switching gears to Tomb Raider, we find the most frugal of the actual games we've tested. Overclocking memory is only of any value when the GPU itself is being overclocked and must be fed. Power consumption increase is extremely modest, though; average power only goes up by about 20W.

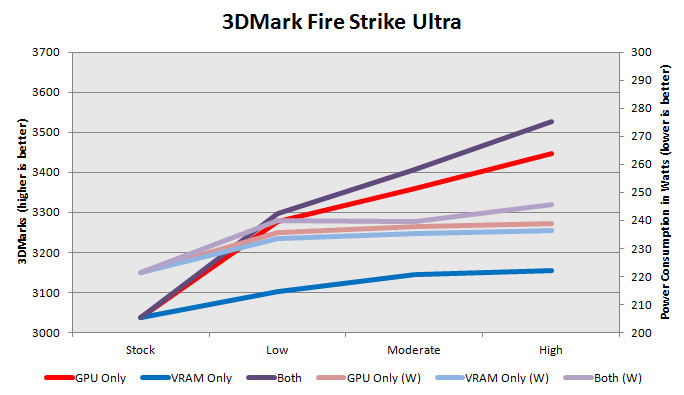

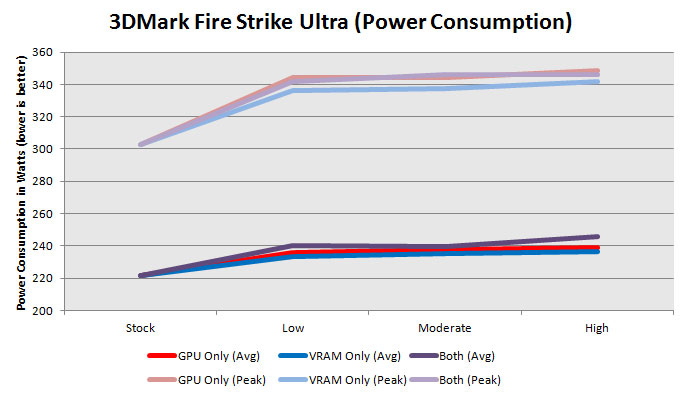

While I really like using 3DMark as a sanity check and a stability test (in conjunction with the Tomb Raider benchmark it's pretty flawless), you can see that it behaves differently from the games. Power consumption is fairly flat after the power cap has been lifted to 125%, and it's another situation where you only see a benefit from overclocking the VRAM if you've already overclocked the GPU.

Let's put everything in perspective.

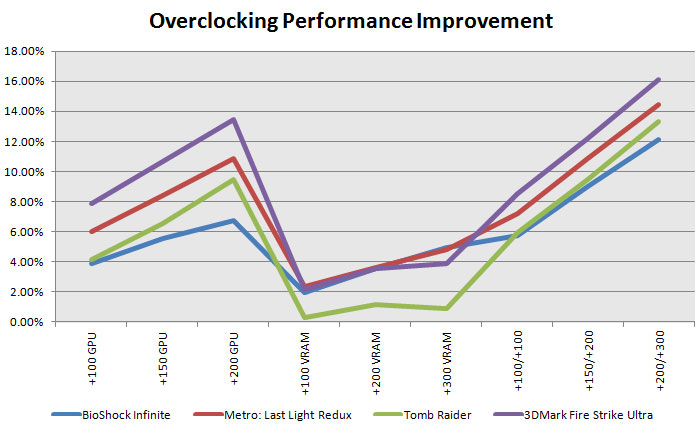

In terms of raw performance, gains from memory overclocking are modest at best, peaking at about 5% with a fairly healthy overclock to 7.6GHz on the GDDR5. That's practically where GPU performance increases start. Of course, the smart money is on overclocking them both together. You can get about a 15% increase in performance in exchange for about 25% more power, suggesting that Nvidia's default tuning was probably the sweet spot for GM204.
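The sweet-spot argument follows directly from those two percentages. As a rough sketch using the rounded 15% and 25% figures above (the helper name is ours, purely for illustration):

```python
def perf_per_watt_ratio(perf_gain_pct, power_gain_pct):
    """Overclocked perf-per-watt relative to stock (1.0 means unchanged)."""
    return (1 + perf_gain_pct / 100) / (1 + power_gain_pct / 100)


ratio = perf_per_watt_ratio(15, 25)
print(f"perf/W vs. stock: {ratio:.2f}x ({(ratio - 1) * 100:+.0f}%)")
```

A ratio below 1.0 means each additional frame costs more power than it did at stock, so the overclock buys performance at a small efficiency penalty.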

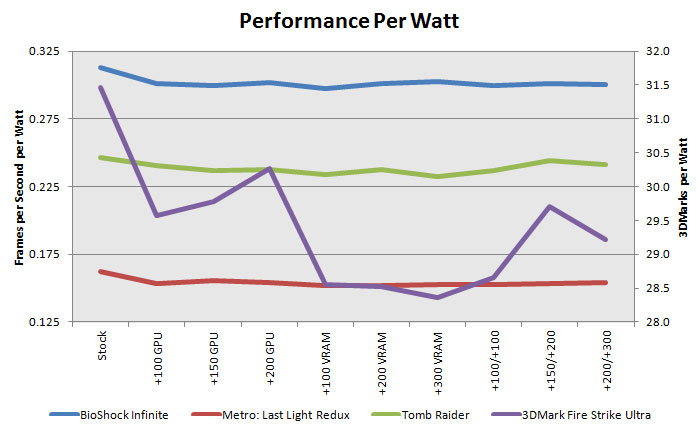

If we map things out in terms of performance per watt, the differences between settings are pretty modest. Stock settings seem to deliver the best bang for your buck, but if you want more you can get more. Only 3DMark seems to really show any variance.

The fact is, Nvidia's Maxwell architecture lives up to the hype. It is as efficient as advertised, even after you start pulling out the stops. Stock cards have a boatload of headroom, but the reality is that overclockers are going to slam their heads into Nvidia's power limit well before they hit the limits of what the GM204 in the GTX 980 can really do. We're going to have to put these under water with an unlocked BIOS to see just how far the silicon can be pushed.