Why it matters: For a company that doesn't manufacture anything, Arm has a surprisingly large and broad impact, not only on the chip industry but also on the overall tech industry and, increasingly, many other vertical industries. The company, which creates semiconductor chip designs and licenses them as intellectual property that its customers then use to build chips, is the brains behind virtually every smartphone ever made.

In addition, it has a small but growing market in data center and other network infrastructure equipment and is the long-time leader in intelligent devices of various types---from toys to cars and nearly everything in between; essentially the "things" part of IoT (Internet of Things).

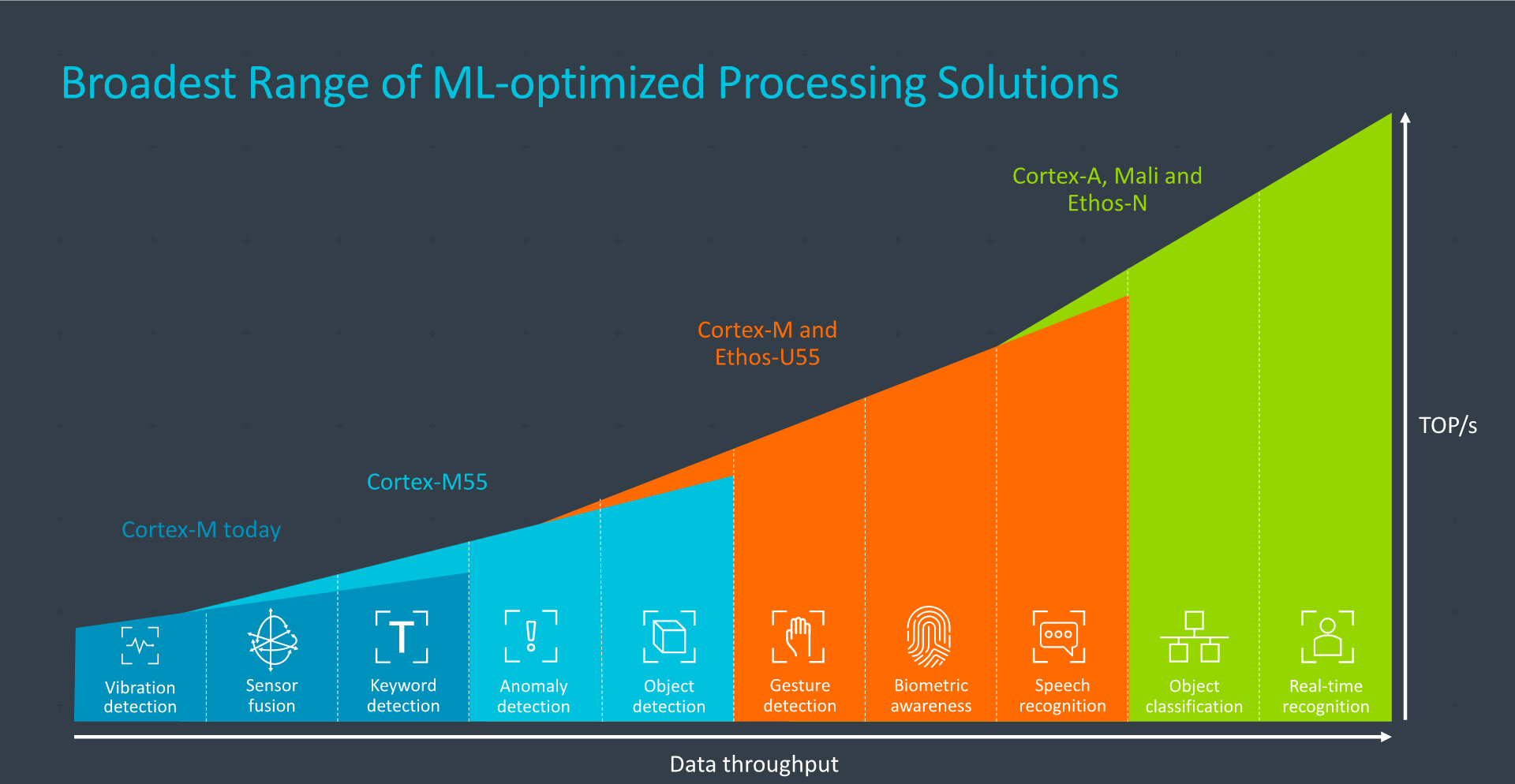

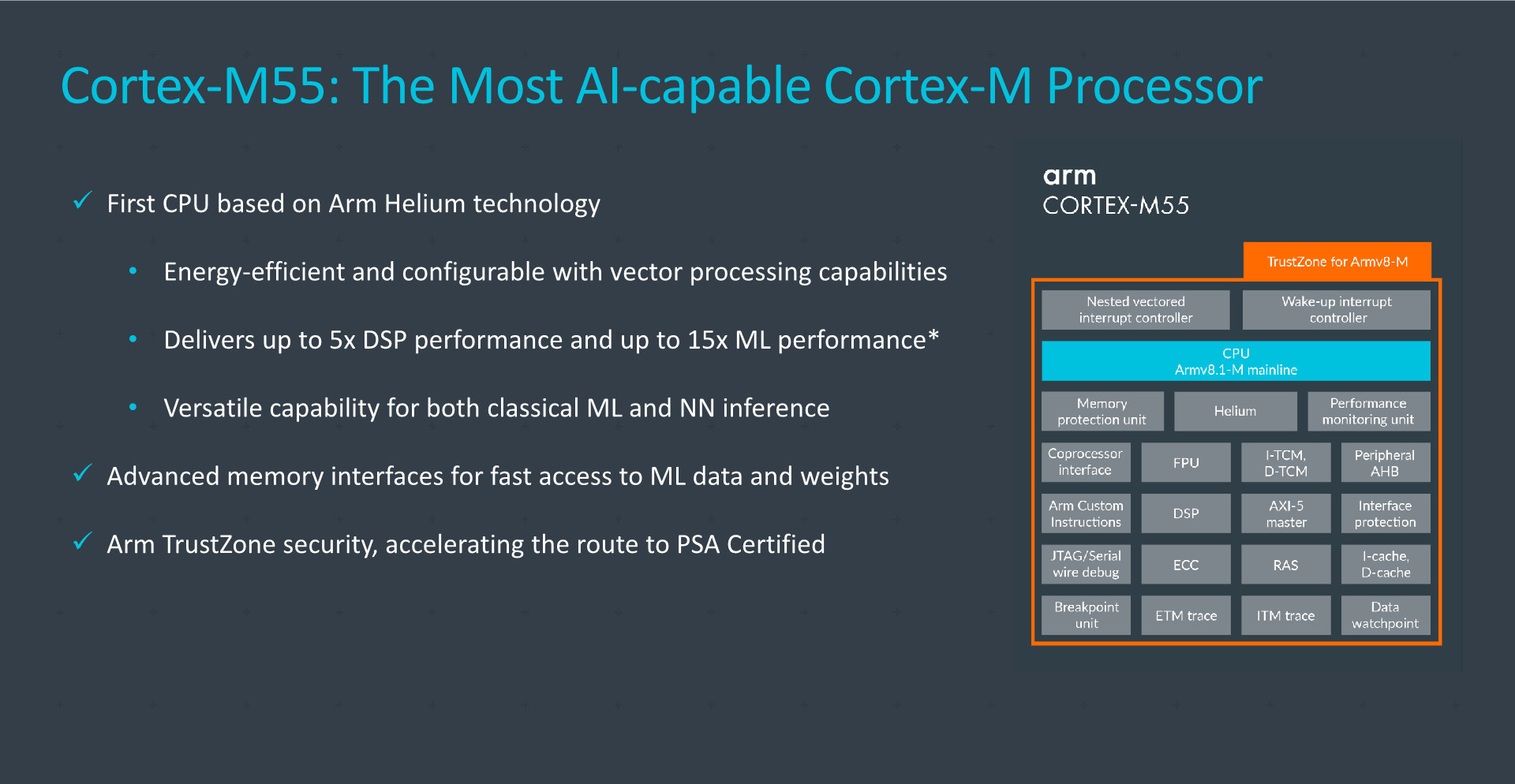

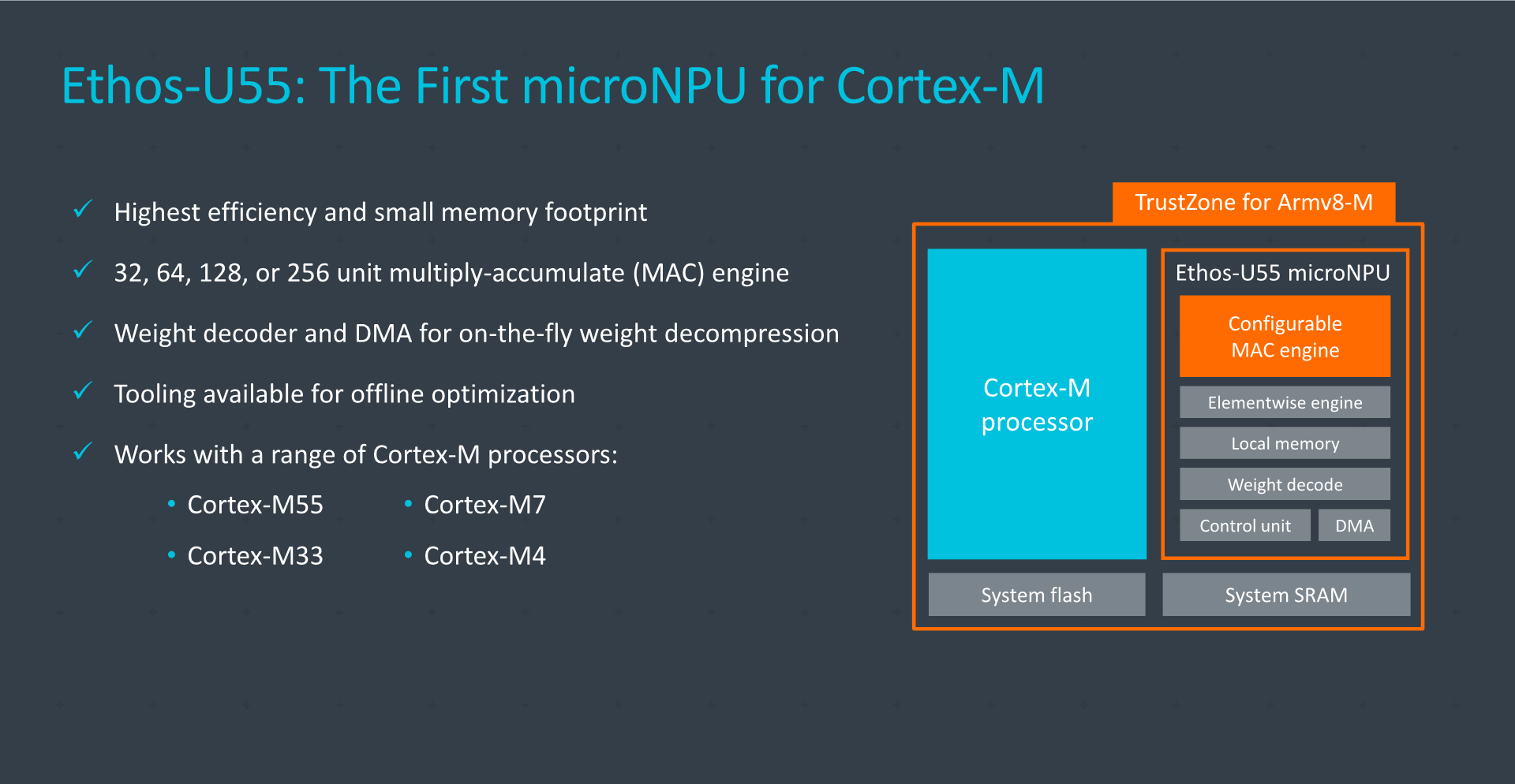

As a result, it's not terribly surprising to see the company pushing ahead on new innovations to power more devices. What is unexpected about Arm's latest announcements, however, is the degree of performance that it's enabling in microcontrollers---tiny chips that power billions of devices. Specifically, with the launch of its new Cortex-M55 Processor, companion Ethos-U55 microNPU (Neural Processing Unit) accelerator, and new machine learning (ML) software, Arm is promising a staggering 480x improvement in ML performance for a huge range of low-power applications.

Those aren't the kind of performance numbers you typically hear about in the semiconductor industry these days. Practically speaking, a gain of that size turns something that was nearly impossible into something very doable.

More importantly, because microcontrollers are the tiny, unsung heroes powering everything from connected toothbrushes to industrial equipment, the potential long-term impact could be huge. Most notably, the addition of AI to all these types of "things" offers the promise of finally getting the kind of smart devices that many hoped for in areas from smart home to factory automation and beyond. Imagine adding voice control to the smallest of devices or being able to get advance warnings about potential part failures in slightly larger edge computing equipment through onboard predictive maintenance algorithms. The possibilities really are nearly limitless.

The announcements are part of an overall strategy at Arm to bring AI capabilities to its full range of IP designs. Key to that is work the company is doing on software and development tools. Because Cortex-M series designs have been around for a long time, there's a large base of applications that device designers can use to program them. However, because a great deal of ML and AI-based algorithm work is being created in frameworks, such as TensorFlow, the company is also bringing support for its new IP designs into TensorFlow Lite Micro, which is optimized for the types of smaller devices for which these new chips are intended.

In addition to software, there are several hardware-centric capabilities that are worth calling out. First, the Cortex-M55 is the first microcontroller design to incorporate support for the company's Helium vector processing technology, which brings to microcontrollers the kind of vector processing previously found only on larger Arm CPU cores. The M55 also includes support for Arm Custom Instructions, an important new capability that lets chip designers create custom functions that can be optimized for specific workloads.

The new Ethos-U55 is a first-of-its-kind dedicated AI accelerator architecture that was designed to pair with the M55 for devices in which the 15x improvement in ML performance that the M55's new design offers on its own is not enough. In addition, the combination of the M55 and the U55 was specifically intended to offer a balance of scalar, vector, and matrix processing, which is essential to efficiently running a wide range of machine-learning-based workloads.
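To put the two headline figures side by side, here is a rough back-of-the-envelope sketch in Python. It assumes the 15x (M55 alone) and 480x (M55 plus U55) claims compose multiplicatively, which is an assumption made for illustration rather than something stated above. The function names and the 2,400 ms baseline inference time are hypothetical.

```python
# Back-of-the-envelope arithmetic only. The 15x and 480x figures are the
# headline claims quoted in this article; everything else is hypothetical.
M55_ML_SPEEDUP = 15         # claimed ML uplift from the Cortex-M55 alone
COMBINED_ML_SPEEDUP = 480   # claimed ML uplift for Cortex-M55 plus Ethos-U55


def implied_accelerator_factor(combined: float, cpu_only: float) -> float:
    """Extra factor the accelerator would have to contribute,
    ASSUMING the two gains simply multiply."""
    return combined / cpu_only


def time_after_speedup(baseline_ms: float, speedup: float) -> float:
    """Run time of a workload after applying a given speedup."""
    return baseline_ms / speedup


if __name__ == "__main__":
    baseline_ms = 2400.0  # hypothetical inference time on an older design
    factor = implied_accelerator_factor(COMBINED_ML_SPEEDUP, M55_ML_SPEEDUP)
    print(f"Implied accelerator contribution: {factor:.0f}x")
    print(f"M55 only:  {time_after_speedup(baseline_ms, M55_ML_SPEEDUP):.0f} ms")
    print(f"M55 + U55: {time_after_speedup(baseline_ms, COMBINED_ML_SPEEDUP):.0f} ms")
```

Under those assumptions, a task that took well over two seconds drops to a few milliseconds, which is the difference between a batch job and something that can respond to a voice command in real time. Real workloads will vary, and peak claims like these rarely apply uniformly.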

Of course, there are quite a few steps between releasing new chip IP designs and seeing products that leverage these capabilities. Unfortunately, that means it will likely be sometime near the end of 2021 and into 2022 before we can really see the promised benefits of a nearly 500x improvement in machine learning performance. Plus, it remains to be seen how challenging it will be for low-power device designers to create the kinds of ML algorithms they'll need to make their devices truly smart. Hopefully, we'll see a large library of algorithms developed so that device designers with little to no previous AI programming experience can leverage them.

Ultimately, the promise of bringing machine learning to small, battery-powered devices is an intriguing one that opens up some very interesting possibilities for the future. It will be interesting to see how the "things" develop.

Bob O'Donnell is the founder and chief analyst of TECHnalysis Research, LLC, a technology consulting and market research firm. You can follow him on Twitter @bobodtech. This article was originally published on Tech.pinions.