Forward-looking: Popular large language models (LLMs) such as OpenAI's ChatGPT have been trained on human-made data, which is still the most abundant type of content available on the internet right now. The future, however, could hold some very nasty surprises for the reliability of LLMs trained almost exclusively on previously generated blobs of AI bits.

In the grim dark future of the internet, when the global network is filled with AI-generated data, LLMs could essentially become unable to progress further. Instead, they would regress, forgetting previously acquired, human-made content and spitting out only garbled piles of bits, for maximum unreliability and minimal credibility.

That, at least, is the idea behind a new paper featuring the AI-generated title "The Curse of Recursion." A team of researchers from the UK and Canada tried to work out what the future might hold for LLMs and the internet as a whole, imagining that much of the publicly available content (text, graphics) will eventually be contributed almost exclusively by generative AI services and algorithms.

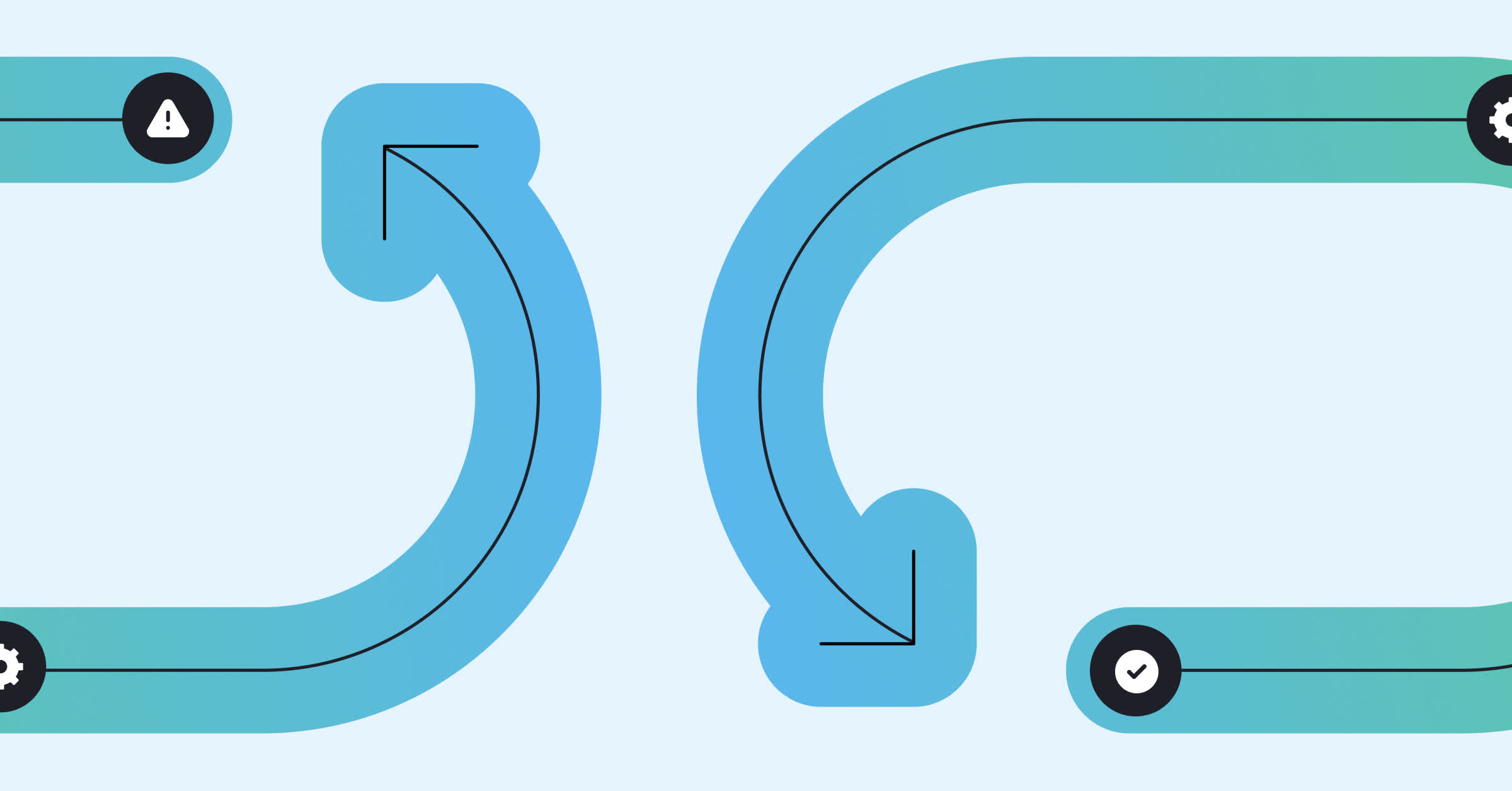

When there are no human writers left on the internet, or very few of them, the internet will fold in on itself, the paper explains. The researchers found that using "model-generated content in training" causes "irreversible defects" in the resulting models. When original, human-made content disappears, an AI model like ChatGPT experiences a phenomenon the study describes as "Model Collapse."
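To make the idea a little more concrete, here is a toy Python sketch. It is an illustrative assumption, not the researchers' code: each "model" is nothing more than a one-dimensional Gaussian (a mean and a standard deviation) fitted to its training data, and every new generation is trained only on samples produced by the previous model. The function name `simulate_collapse`, the `keep_human` parameter and the sample sizes are hypothetical choices made for this example.

```python
import random
import statistics


def simulate_collapse(generations: int, n: int = 10, keep_human: int = 0, seed: int = 0) -> float:
    """Hypothetical toy model of recursive training (not the paper's code).

    Each generation fits a Gaussian (mean, std) to its training data; the next
    generation trains on samples drawn from that fit, optionally mixed with a
    fixed slice of the original "human" data. Returns the last fitted std.
    """
    rng = random.Random(seed)
    # Generation 0: "human-made" data drawn from a standard normal distribution.
    human = [rng.gauss(0.0, 1.0) for _ in range(n)]
    data = human
    sigma = statistics.pstdev(data)
    for _ in range(generations):
        # "Train" the model: maximum-likelihood fit of a 1-D Gaussian.
        mu = statistics.fmean(data)
        sigma = statistics.pstdev(data)
        # The next training set is model output plus any retained human samples.
        synthetic = [rng.gauss(mu, sigma) for _ in range(n - keep_human)]
        data = human[:keep_human] + synthetic
    return sigma


print(simulate_collapse(1000))                # spread shrinks toward zero
print(simulate_collapse(1000, keep_human=5))  # spread stays away from zero
```

With only model output to learn from, small sampling errors compound from one generation to the next and the fitted spread collapses, so the rare "tail" values of the original data are forgotten first. Keeping a fixed share of the original human samples in every generation puts a floor under the fitted spread, loosely mirroring the researchers' suggestion to retain human-made training data.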

Just as we've "strewn the oceans with plastic trash and filled the atmosphere with carbon dioxide," one of the paper's (human) authors explained on a human-made blog, we're now about to fill the internet with "blah." Training new LLMs or improved versions of existing models (like GPT-7 or 8) will become increasingly difficult, giving a substantial advantage to companies that have already scraped the web, or that can control access to "human interfaces at scale."

Some corporations have already started to prepare for this AI-driven corruption of the internet, bringing down the Internet Archive's servers with a massive, unrequested and essentially malicious training "exercise" run through Amazon AWS.

Like a JPEG image recompressed too many times, the internet of the AI-driven future seems destined to turn into a giant pile of worthless digital white noise. To avoid the AI apocalypse, the researchers suggest some potential remedies.

Besides retaining original, human-made training data to also train future models, AI companies could make sure that minority groups and less popular data remain represented. This is a non-trivial solution, the researchers said, and one that requires a lot of work. What's clear, though, is that Model Collapse is an issue for machine learning algorithms that cannot be neglected if we want to keep improving current AI models.