In brief: As with many new revolutionary technologies, the rise of generative AI has brought with it some unwelcome elements. One of these is the creation of YouTube videos featuring AI-generated personas that are used to spread information-stealing malware.

CloudSEK, a contextual AI company that predicts cyberthreats, writes that since November 2022, there has been a 200-300% month-on-month increase in YouTube videos containing links to stealer malware, including Vidar, RedLine, and Raccoon.

The videos try to tempt people into watching them by promising full tutorials on how to download cracked versions of games and paid-for licensed software such as Photoshop, Premiere Pro, Autodesk 3ds Max, and AutoCAD.

Is this the sort of AI-generated face you would trust?

These sorts of videos usually consist of little more than screen recordings or audio walkthroughs, but they've recently become more sophisticated through the use of AI-generated clips from platforms such as Synthesia and D-ID, making them appear less like scams in some people's eyes.

CloudSEK notes that more legitimate companies are using AI for their recruitment details, educational training, promotional material, etc., and cybercriminals are following suit with their own videos featuring AI-generated personas with "familiar and trustworthy" features.

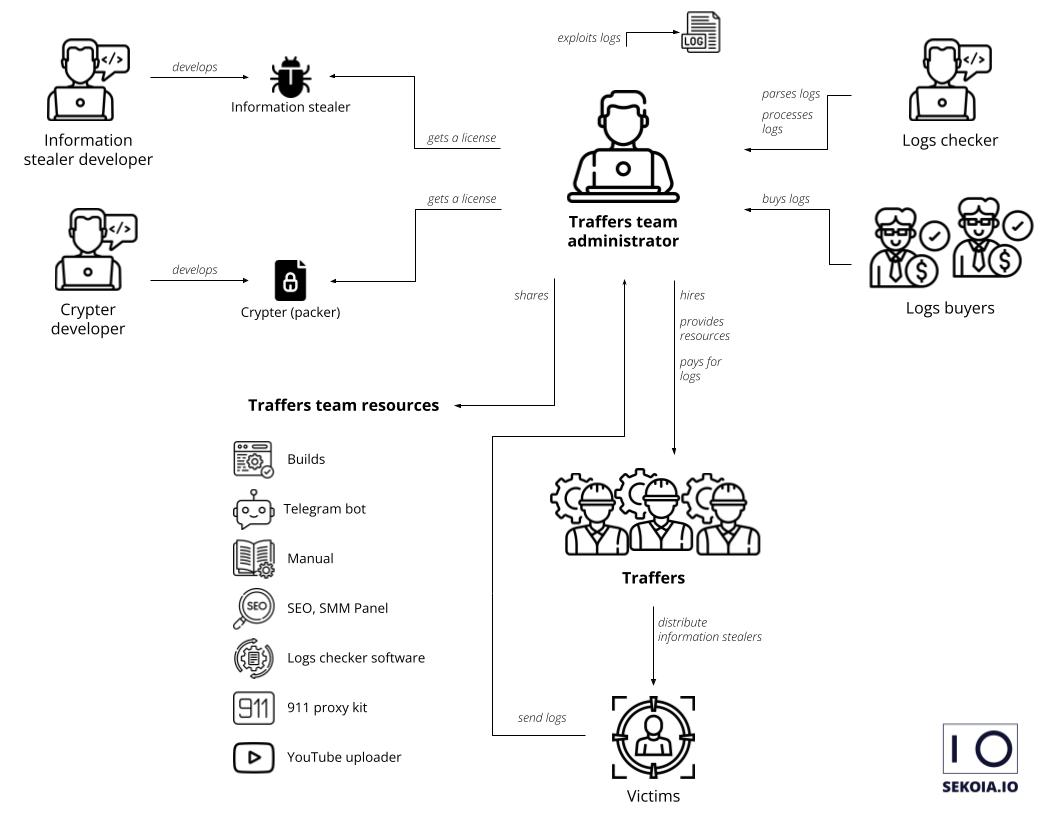

Those who are tricked into believing the videos are the real deal and click on the malicious links often end up downloading infostealers. Once installed, they can pilfer everything from passwords, credit card information, and bank account numbers to browser data, cryptowallet details, and system information, including IP addresses. Once located, the data is uploaded to the threat actor's server.

Organization of the information stealer ecosystem (sekoia.com)

This isn't the first time we've heard of YouTube being used to deliver malware. A year ago, security researchers discovered that some Valorant players were being deceived into downloading and running software promoted on YouTube as a game hack, when in fact it was RedLine, the same infostealer now being pushed in the generative-AI videos.

Game cheats were also used as a lure in another malware campaign spread on YouTube in September. Again, RedLine was the payload of choice.

Not only does YouTube boast 2.5 billion monthly active users, it's also the most popular platform among teens, making it an alluring prospect for cybercriminals, who have found ways to circumvent the platform's algorithm and review process. One method is to use data leaks, phishing techniques, and stealer logs to take over existing YouTube accounts, usually popular ones with over 100,000 subscribers.

Other tricks the hackers use to avoid detection include adding location-specific tags, posting fake comments to make a video appear legitimate, and including an exhaustive list of tags that deceives YouTube's algorithm into recommending the video and showing it among the top results. They also obfuscate the malicious links in the descriptions by shortening them, linking to file hosting platforms, or making them directly download the malicious zip file.
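For readers who want to see what spotting those three link patterns might look like in practice, here is a minimal Python sketch. It is only an illustration under stated assumptions: the host lists, the function names `classify_link` and `flag_description`, and the archive extensions are hypothetical choices for this example and do not come from the CloudSEK report. A flagged link is not proof of malware, since shorteners and file hosts are widely used for legitimate purposes.

```python
import re
from urllib.parse import urlparse

# Illustrative lists only (assumptions for this sketch, not from the report).
SHORTENER_HOSTS = {"bit.ly", "cutt.ly", "tinyurl.com"}
FILE_HOST_HOSTS = {"mediafire.com", "mega.nz", "drive.google.com"}
ARCHIVE_EXTENSIONS = (".zip", ".rar", ".7z")

# Rough URL matcher: anything starting with http(s):// up to whitespace or a quote.
URL_PATTERN = re.compile(r"https?://[^\s<>\"']+")


def classify_link(url: str) -> str:
    """Label a URL as 'shortened', 'file-hosting', 'direct-archive', or 'other'."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in SHORTENER_HOSTS:
        return "shortened"
    if host in FILE_HOST_HOSTS:
        return "file-hosting"
    if parsed.path.lower().endswith(ARCHIVE_EXTENSIONS):
        return "direct-archive"
    return "other"


def flag_description(description: str) -> list[tuple[str, str]]:
    """Return (url, label) pairs for links in a description worth a closer look."""
    flagged = []
    for match in URL_PATTERN.findall(description):
        # Drop sentence punctuation that the rough matcher may have swallowed.
        url = match.rstrip(".,;:!?)")
        label = classify_link(url)
        if label != "other":
            flagged.append((url, label))
    return flagged
```

Calling `flag_description` on a video description returns only the links that match one of the three patterns, which a reviewer could then inspect by hand or pass to a proper URL-scanning service.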

https://www.techspot.com/news/97926-ai-generated-personas-pushing-malware-youtube.html