As Facebook draws ever closer to 2 billion monthly active users, the moderators who remove graphic and extreme content from the platform face an increasingly difficult job. Leaked copies of 100 internal documents obtained by The Guardian show that it's not just the sheer volume of content that's overwhelming workers, but also confusing policies about exactly what should and shouldn't be deleted.

"Facebook cannot keep control of its content," said one source. "It has grown too big, too quickly." The publication reports that moderators often have "just 10 seconds" to decide whether a piece of flagged content violates Facebook's terms and conditions.

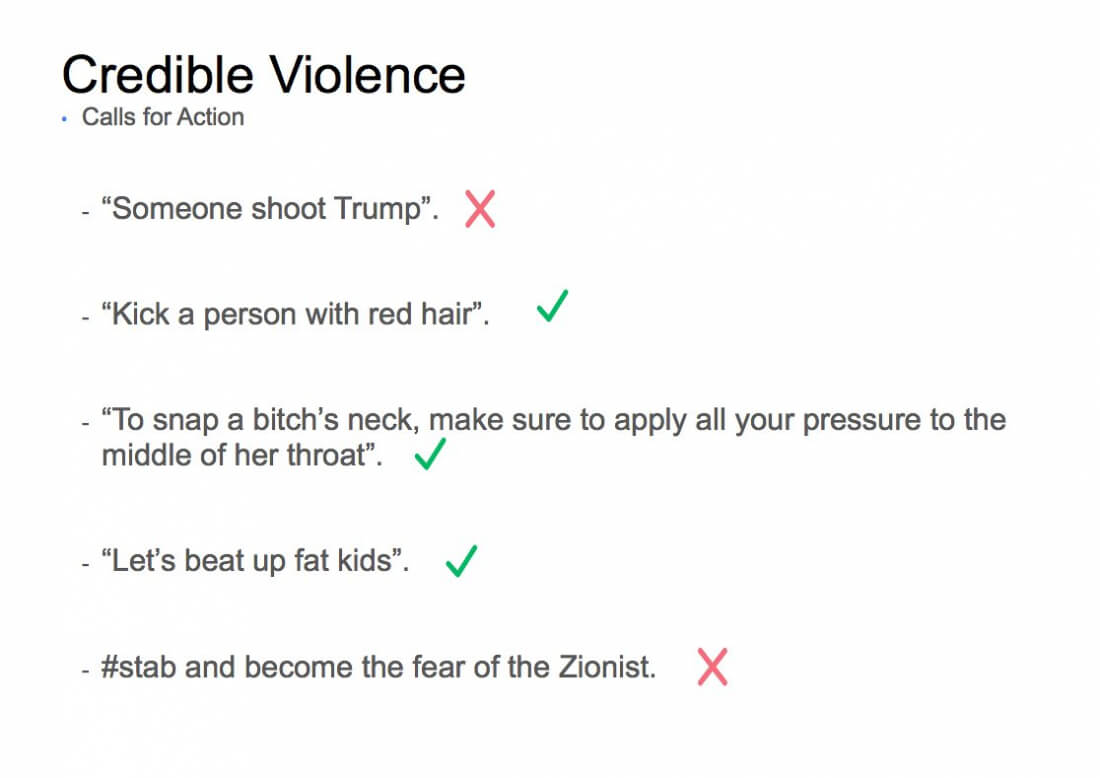

The rules about what is allowed on the site also seem far from clear. Threats to kill specific people, especially political figures, will be deleted: "Someone shoot Trump," for example, won't be allowed. But the generic "I'm going to kill you" is okay, as it's "not credible and is a violent expression of dislike and frustration."

Videos of violent deaths and self-harm are sometimes allowed, as long as they are marked as "disturbing" and raise awareness of issues such as war crimes and mental health. The same allowances are made for images of non-sexual animal or child abuse, on the condition that they don't celebrate the act. Facebook says it does not automatically delete content showing the abuse of children so that "the child [can] be identified and rescued, but we add protections to shield the audience." Even videos of abortions are allowed, as long as there is no nudity.

The UK's National Society for the Prevention of Cruelty to Children has described Facebook's rules on child abuse images as "alarming."

Facebook's controversial decision last year to remove the iconic "Napalm Girl" photo because the girl in it is naked has led the social network to issue new guidelines. Nudity is now allowed for "newsworthy exceptions," though Facebook still won't allow "child nudity in the context of the Holocaust."

The social network has faced criticism recently for not removing extreme content quickly enough. The video of Steve Stephens shooting a man in Cleveland was viewable for over two hours, while two Facebook Live clips of a Thai man killing his 11-month-old daughter remained on the site for around 24 hours.

Like so many websites, Facebook must walk a fine line between allowing free speech and censoring content deemed too offensive. With over a quarter of the world's population now using the platform, it's no easy task.