They do provide revenue numbers, but I have never found any profit-by-division information for Nvidia. I assume Data Center makes the most profit and Gaming much less, but I haven't been able to verify that anywhere.

Nvidia does in its financial statements. For example, in the quarter ending October 2022, the data center division had a revenue of $3.83 billion, gaming was $1.57 billion, ProViz was $0.2 billion, and automotive & embedded brought in $0.25 billion.

snip

Nvidia GeForce RTX 4070 Ti Review: Can It Hit the Mainstream at $800?

- Thread starter Steve

Overall disappointing results. Is it a good card, if you ignore price? Maybe, but it's not a great card. I was hoping for 10-15% better performance in raster, but it's just not there. Is it a better value than a 7900 XT? Maybe, but $800 is a lot to spend, so it's hard to call it a value under any circumstances.

So, back to the waiting game. Now we wait to see who blinks first and drops prices. Nvidia seems intent on keeping prices high, which may be due to the high cost of manufacturing the product. If that's the case, then Nvidia needs to go back to the lab and figure out how to build GPUs for less.

maroon1

Posts: 184 +207

$799 for the 4070 Ti is not a bad price compared to $899 for the 7900 XT.

The difference is only 4% at 1440p and 8% at 4K.

But the 4070 Ti has more features (DLSS, RT, a better AV1 encoder), so being $100 cheaper is the right price relative to the competition. But you can still say both the 4070 Ti and 7900 XT are overpriced.
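One way to check the "$100 cheaper is the right price" argument is to compare performance per dollar. This is a minimal sketch that uses only the prices and gaps from the post, and it assumes the 7900 XT is the faster card by those margins.

```python
# Performance per dollar of the 4070 Ti relative to the 7900 XT, using the
# prices and performance gaps quoted above. Assumption: the 7900 XT is the
# faster card by those margins.

def perf_per_dollar_advantage(price_a: float, price_b: float, perf_b_over_a: float) -> float:
    """Ratio of card A's performance per dollar to card B's.

    perf_b_over_a is B's performance relative to A (1.04 = B is 4% faster).
    A result of 1.08 means A delivers 8% more performance per dollar.
    """
    perf_per_dollar_a = 1.0 / price_a
    perf_per_dollar_b = perf_b_over_a / price_b
    return perf_per_dollar_a / perf_per_dollar_b


PRICE_4070_TI = 799
PRICE_7900_XT = 899

for resolution, gap in (("1440p", 1.04), ("4K", 1.08)):
    advantage = perf_per_dollar_advantage(PRICE_4070_TI, PRICE_7900_XT, gap)
    print(f"{resolution}: 4070 Ti perf/$ advantage {(advantage - 1) * 100:.1f}%")
```

With these inputs the 4070 Ti comes out about 8% ahead on perf/$ at 1440p and about 4% ahead at 4K, before any weighting for the extra features.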

punctualturn

Did I call you names?

Any reasonable conversation won't happen so long as you keep being emotional and calling everyone who disagrees with you names.

I am sorry, but that's not true, and actually your previous response plus this one reaffirm what I wrote: mental gymnastics.

Inflation is a thing. Everything costs more. You are not going to get new GPUs on a process that costs more than double the previous one for $400; that simply isn't happening. AMD won't do it either. People cannot accept that the world is experiencing severe stagflation yet again.

I won't transcribe the video I linked, but the answers to the price-increase excuses are there.

The 4070 Ti offers 100% better perf/$ than the 3090 did at 1080p and roughly 85% better perf/$ at 1440p. Does that help you?
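A perf/$ claim can be turned back into a relative-performance claim, since perf/$ is performance divided by price. The sketch below assumes launch MSRPs of $799 for the 4070 Ti and $1,499 for the 3090 (the 3090 price is not stated in the thread); the 100% and 85% gains are the figures quoted above.

```python
# Back out the relative performance implied by a perf-per-dollar claim.
# Assumptions: launch MSRPs of $799 (4070 Ti) and $1,499 (3090).

def implied_perf_ratio(perf_per_dollar_gain: float, new_price: float, old_price: float) -> float:
    """Return the new card's performance relative to the old card.

    perf_per_dollar_gain is (new perf/$) / (old perf/$), e.g. 2.0 for "100% better".
    Since perf/$ = perf / price, perf_new / perf_old = gain * new_price / old_price.
    """
    return perf_per_dollar_gain * new_price / old_price


for label, gain in (("1080p", 2.00), ("1440p", 1.85)):
    ratio = implied_perf_ratio(gain, new_price=799, old_price=1499)
    print(f"{label}: 4070 Ti at about {ratio:.2f}x the 3090's performance")
```

Under those assumptions the claim works out to the 4070 Ti roughly matching a 3090 (about 7% faster at 1080p, about 1% slower at 1440p) at a little over half the launch price.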

Theinsanegamer

Posts: 5,409 +10,147

They show revenue, but I was referring to margin. Margin isn't split up by group; rather, it is given as one lump sum.

Nvidia does in its financial statements. For example, in the quarter ending October 2022, the data center division had a revenue of $3.83 billion, gaming was $1.57 billion, ProViz was $0.2 billion, and automotive & embedded brought in $0.25 billion.
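The segment figures above can be turned into revenue shares with a few lines. This sketch only sums the four segments listed in the post, and, as the reply points out, revenue share says nothing about per-segment margin.

```python
# Share of quarterly revenue by segment, using the figures quoted above
# (quarter ending October 2022, billions of US dollars). Revenue only:
# per-segment margin is not reported, so it cannot be derived here.

revenue_billion_usd = {
    "Data Center": 3.83,
    "Gaming": 1.57,
    "Professional Visualization": 0.20,
    "Automotive & Embedded": 0.25,
}


def revenue_shares(segments: dict[str, float]) -> dict[str, float]:
    """Return each segment's fraction of the summed revenue."""
    total = sum(segments.values())
    return {name: value / total for name, value in segments.items()}


total = sum(revenue_billion_usd.values())
print(f"Total of listed segments: ${total:.2f}B")
for name, share in revenue_shares(revenue_billion_usd).items():
    print(f"{name}: {share:.1%}")
```

With these numbers Data Center is about two-thirds of the listed revenue and Gaming a bit over a quarter.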

I'd bet good money that the margins on that $3.83 billion in data center were a lot higher than on the $1.57 billion from gaming. Even when gaming was over $3 billion, it'd be the same case.

In the 7900 XT/XTX threads, someone dug up the GDDR6 modules AMD is using, and their tray price appeared to be $22 per 2GB module. Extrapolating, the 7900 XT's 20GB would come to about $220 in memory.

Given that the 4070 Ti's GDDR6X is only made by Micron and only used by Nvidia, the cost of the RAM is going to be notably higher than GDDR6. A couple of years ago, the latter was around $15 per module, bulk, but speeds have gone up quite a bit since then. I wouldn't be surprised if 21 Gbps GDDR6X was $20+ per module, though not as high as $33, which is how much it would be if the six modules on the 4070 Ti cost $200.

I doubt GDDR6X costs that much more, but it wouldn't surprise me if the floor for the 4070 Ti's memory cost was at least $150 total.
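The memory numbers in the last two posts are simple module-count arithmetic. This sketch assumes 2GB modules, 20GB (10 modules) on the 7900 XT and 12GB (6 modules) on the 4070 Ti; the per-module prices are the thread's estimates, not published contract prices.

```python
# Memory bill-of-materials arithmetic from the figures in the posts above.
# Assumptions: 2GB per module; 7900 XT = 20GB, 4070 Ti = 12GB.

def memory_cost(capacity_gb: int, gb_per_module: int, price_per_module: float) -> float:
    """Total memory cost: module count times price per module."""
    if capacity_gb % gb_per_module:
        raise ValueError("capacity must be a whole number of modules")
    return (capacity_gb // gb_per_module) * price_per_module


def implied_module_price(total_cost: float, capacity_gb: int, gb_per_module: int) -> float:
    """Price per module needed to reach a given total memory cost."""
    return total_cost / (capacity_gb // gb_per_module)


print(f"7900 XT at $22/module: ${memory_cost(20, 2, 22):.0f}")
for total in (150, 200):
    price = implied_module_price(total, 12, 2)
    print(f"4070 Ti memory at ${total}: ${price:.2f} per module")
```

A $150 floor for the 4070 Ti implies $25 per GDDR6X module, between the "$20+" and "$33" figures discussed above.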

True, but my question is: how much do costs factor into that? The 900 series was built on TSMC 28nm, which was the last major GPU process that was cheaper than its predecessor, and it was quite mature by the time the 900 series arrived, as 28nm was used for both the 600 and 700 series as well.

It is interesting to note that Nvidia has successively increased the price of the higher-spec xx70 model for 4 generations. The 3070 Ti, on Samsung's cheap 8N and 8 GDDR6X modules, launched at $599, which makes the 4070 Ti 33% more expensive. The older model itself was 20% more than the 2070 Super, which was 25% more than the 1070 Ti; that Pascal model was 25% more expensive than the old GTX 970. Had Nvidia used the same increases, between 20 and 25%, for the jump from Ampere to Ada, the 4070 Ti would have been between $718 and $748.

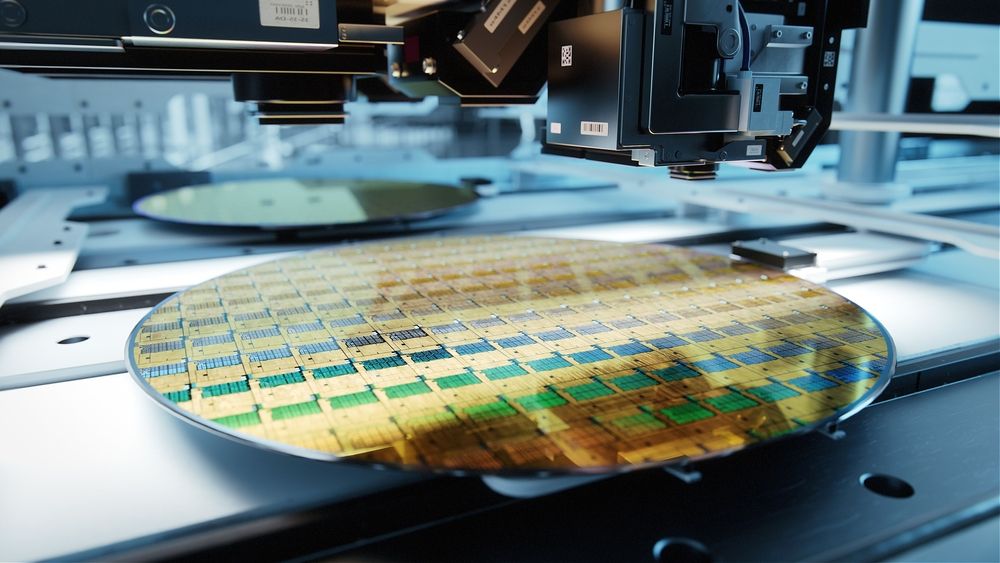
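The projection in the quoted post can be reproduced directly. This is a sketch using only the $599 and $799 launch prices and the 20-25% step range given there.

```python
# Project a successor's price from its predecessor's launch price, using the
# MSRPs and the 20-25% step range quoted in the post above.

def projected_price(base_price: float, increase: float) -> float:
    """Price after a fractional increase (0.20 means +20%)."""
    return base_price * (1 + increase)


def percent_increase(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new / old - 1) * 100


print(f"3070 Ti -> 4070 Ti: +{percent_increase(599, 799):.1f}%")
low, high = [projected_price(599, step) for step in (0.20, 0.25)]
print(f"At +20% to +25%: ${low:.2f} to ${high:.2f}")
```

This gives $718.80 to $748.75, matching the post's $718-748 range, against an actual step of about 33%.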

14nm was costlier than 28nm, and Samsung 8nm was costlier than that. TSMC has been jacking up prices significantly over the last few nodes, and 4nm is its current premium node (which is why AMD used the cheaper 5/6nm nodes). A single 28nm wafer cost $2,500 in 2014; now a single 300mm wafer of 3/4nm costs $20,000. The 7nm wafers were only $10,000.

TSMC Will Reportedly Charge $20,000 Per 3nm Wafer

GPUs and SoCs to get more expensive

Now, the assumption at the time was that Nvidia used Samsung 8nm because it was cheaper. If that's the case, then the new 4070 Ti, whose die is roughly the same size as the 3060's, would cost over double the amount to produce, assuming the same yields. Somebody out there can do the math, I'm sure, and figure out the actual cost per GPU die. A rough guesstimate, IIRC, was $100 per 3060 die. Assuming that's true, a single 4070 Ti die would be $200 at least; I can't find the cost of a Samsung 8nm wafer, but it appears to have been $7,000-8,000 or so. Add another $200 for memory and another $50 for the PCB and all of its components, and you're up to $450 already, not counting the cost of manufacture, transport, or driver support, etc.
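The estimate above can be written out as a small calculation. Every input here is the post's own guess rather than a confirmed figure, and scaling die cost by wafer price only holds under the post's assumption of similar die size and yield.

```python
# Rough parts-cost arithmetic following the post above. All inputs are the
# thread's guesses, not confirmed figures: ~$100 per 3060 die, a Samsung 8nm
# wafer at $7,000-8,000, a TSMC 4nm-class wafer at $20,000, similar die size
# and yield for both chips, ~$200 of memory and ~$50 for the PCB.

def scaled_die_cost(old_die_cost: float, old_wafer_price: float, new_wafer_price: float) -> float:
    """Scale a die cost by the wafer-price ratio.

    Only meaningful if both chips yield about the same number of good dies
    per wafer (similar area and defect rate), as the post assumes.
    """
    return old_die_cost * new_wafer_price / old_wafer_price


def parts_cost(die: float, memory: float, pcb_and_components: float) -> float:
    """Sum of the major parts; excludes assembly, shipping and driver support."""
    return die + memory + pcb_and_components


for old_wafer in (7_000, 8_000):
    die = scaled_die_cost(100, old_wafer, 20_000)
    total = parts_cost(die, 200, 50)
    print(f"8nm wafer at ${old_wafer:,}: die ~${die:.0f}, parts ~${total:.0f}")

print(f"With the post's conservative $200 die: ${parts_cost(200, 200, 50):.0f}")
```

Scaling by the wafer-price ratio gives roughly $250 to $286 per die, so the post's $200 sits at the conservative end of its own assumptions.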

Is it overpriced? Yeah. Is it realistic to expect this GPU to have been a 4060 for $400? I honestly don't think so.

That's mud in Nvidia's face, and frankly greed has played a big part in the entire 4000 series. That's honestly not surprising, though; every company on earth has been acting like robber barons since the lockdowns.

Of course, this was never going to be a 4070 Ti -- let's not forget that this is Nvidia's aborted attempt at pulling the wool over the consumer's eyes, with its '4080 12GB' farce.

Theinsanegamer

Posts: 5,409 +10,147

From your last comment, I bolded it for you:

Did I call you names?

For the rest of your comment, feel free to find the answers here, because honestly, it's tiresome for the Nvidia white knights to keep coming out in their defense without observing how they are driving the whole gaming market down.

It is; try reading.

I am sorry, but that's not true

You're overly emotional and cast aside arguments as "mental gymnastics" in a similar manner to how cryptobros use "FUD". Just stop, man. Everyone is entitled to their own opinion, but believe it or not, that doesn't make everyone who disagrees with you a white knight, a shill, or part of some grand conspiracy. Expecting the 4070 Ti to be released at the price of a 3060, when the rest of the world is no longer in the bubble that was 2020, is unrealistic. Pricing hasn't stood still, and while I agree the price is silly, Nvidia's net margins show that their actual profits have not accelerated as fast as the price increases; some of this is being eaten by Micron, TSMC, etc., and Nvidia has no control over that.

and actually your previous response plus this one reaffirm what I wrote: mental gymnastics.

$450 is totally unrealistic. $600 would be the bare minimum at which I imagine Nvidia breaks even.

All of these graphics cards are terrible buys: the performance is good or great, but prices are absurd, so the value is much worse.

I bought a second hand 3060ti for 200€ and it seems to run everything very well, in an emergency I'll turn DLSS on. Spending over 800€ just for the graphics card is absurd.

I'm very much eyeing that one, but I'm waiting until I know more about N32.

The 6800 XT is an insane value atm.

Theinsanegamer

Posts: 5,409 +10,147

The 6800 XT is good enough to max out everything at 1080p/1440p and hit well above 60 FPS, in many cases over 100 FPS average, so there's plenty of room for the future.

I'm very much eyeing that one, but I'm waiting until I know more about N32.

For me that'd be enough; no matter how good N32 is, it's not gonna beat the perf/$.

StrikerRocket

Posts: 225 +186

I'll stick to my 3080 Asus ROG Strix I got for 620 euros, barely used. On my MSI 165 Hz monitor, it's just perfect.

I'll pass on these cards, upgrade when... I see real value and need.

takaozo

Posts: 944 +1,479

That's a good deal. I'm currently looking at the 3070 used market.

I bought a second hand 3060ti for 200€ and it seems to run everything very well

Good luck finding one @ $799.

$799 for the 4070 Ti is not a bad price compared to $899 for the 7900 XT.

The difference is only 4% at 1440p and 8% at 4K.

But the 4070 Ti has more features (DLSS, RT, a better AV1 encoder), so being $100 cheaper is the right price relative to the competition. But you can still say both the 4070 Ti and 7900 XT are overpriced.

punctualturn

Let's clarify: the white knight comment was about the defenders who would not reason no matter what; it was not simply directed at you. But fine, I will take it back.

From your last comment, I bolded it for you:

It is; try reading.

You're overly emotional and cast aside arguments as "mental gymnastics" in a similar manner to how cryptobros use "FUD". Just stop, man. Everyone is entitled to their own opinion, but believe it or not, that doesn't make everyone who disagrees with you a white knight, a shill, or part of some grand conspiracy. Expecting the 4070 Ti to be released at the price of a 3060, when the rest of the world is no longer in the bubble that was 2020, is unrealistic. Pricing hasn't stood still, and while I agree the price is silly, Nvidia's net margins show that their actual profits have not accelerated as fast as the price increases; some of this is being eaten by Micron, TSMC, etc., and Nvidia has no control over that.

$450 is totally unrealistic. $600 would be the bare minimum at which I imagine Nvidia breaks even.

Let's restart. Inflation is a thing, correct, but you know very well that the prices Nvidia is charging (and AMD, for that matter) are above inflation, even including the current out-of-control inflation we are experiencing.

Here is a quick list of how Nvidia has raised the prices of the 70 family (in dollars):

- 970: $329
- 1070: $379; 1070 Ti: $399
- 2070: $499 (no Ti model; the Super models were simply a refresh)
- 3070: $499; 3070 Ti: $599
- 4070: ??
- 4070 Ti: $799

Mind you, all of those, except maybe the 2070 Super and 3070, received discounts, and you could find them below MSRP.

I don't have time now to do the percentages, but as I said, check the video I linked for more info on what I am talking about. Until this mess started, technology/PC parts were actually getting cheaper, instead of the insanity we are currently experiencing.

In the end, Nvidia started this, AMD is following (maybe by a smaller percentage), and we are all screwed.
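The percentages the post skips can be computed from the list itself. This sketch uses exactly the launch prices listed in the post, in nominal dollars, with the GTX 970 as the baseline.

```python
# Launch prices from the list above (US dollars, not inflation-adjusted),
# compared against the GTX 970 as the baseline.

launch_prices = [
    ("970", 329),
    ("1070", 379),
    ("1070 Ti", 399),
    ("2070", 499),
    ("3070", 499),
    ("3070 Ti", 599),
    ("4070 Ti", 799),
]


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new / old - 1) * 100


baseline_name, baseline_price = launch_prices[0]
for name, price in launch_prices[1:]:
    print(f"{name}: ${price} ({pct_change(baseline_price, price):+.1f}% vs {baseline_name})")
```

Comparing these against inflation would need a CPI series for the same launch dates, which the thread does not provide, so the sketch leaves that out.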

In Spain or Portugal, you frequently see deals for a 3060 Ti under 300€, a 3070 under 400€ and a 3070 Ti under 500€ (all second hand). Pay attention if it's somewhat old, because many of them were used for mining...

That's a good deal. I'm currently looking at the 3070 used market.

Mine was also used for mining, but only for about 6 months; it looked impeccable (only a little bit of dust), and I tested it with benchmarks. But it's always a matter of luck.

Theinsanegamer

Posts: 5,409 +10,147

I accept.

Let's clarify: the white knight comment was about the defenders who would not reason no matter what; it was not simply directed at you. But fine, I will take it back.

Let's restart. Inflation is a thing, correct, but you know very well that the prices Nvidia is charging (and AMD, for that matter) are above inflation, even including the current out-of-control inflation we are experiencing.

Here is a quick list of how Nvidia has raised the prices of the 70 family (in dollars):

- 970: $329
- 1070: $379; 1070 Ti: $399
- 2070: $499 (no Ti model; the Super models were simply a refresh)
- 3070: $499; 3070 Ti: $599
- 4070: ??
- 4070 Ti: $799

Mind you, all of those, except maybe the 2070 Super and 3070, received discounts, and you could find them below MSRP.

I don't have time now to do the percentages, but as I said, check the video I linked for more info on what I am talking about. Until this mess started, technology/PC parts were actually getting cheaper, instead of the insanity we are currently experiencing.

In the end, Nvidia started this, AMD is following (maybe by a smaller percentage), and we are all screwed.

As I mentioned in my other comment to neeyik, the 970 was very cheap. However, one must consider the price of wafers. The GeForce 970's die was 398mm², but a single 28nm wafer cost only $2,500. The current wafer price for the 4N Nvidia cards is estimated to be around $20,000. That's a major cost increase, no matter how you look at it.
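Wafer price alone overstates the per-chip increase, because a smaller die fits more copies on a wafer. This sketch uses the common gross dies-per-wafer approximation with 300mm wafers, the 398mm² GM204 area and wafer prices from the post, and an assumed area of roughly 295mm² for the 4070 Ti's AD104 die (not a figure from the thread). It ignores yield, scribe lines and edge exclusion.

```python
import math

# Gross dies per wafer via the common approximation
#   DPW ~= pi * (d/2)^2 / A  -  pi * d / sqrt(2 * A)
# with d the wafer diameter (mm) and A the die area (mm^2).
# It ignores defects (yield), scribe lines and edge exclusion, so the cost per
# *good* die is higher than what this prints.

def gross_dies_per_wafer(die_area_mm2: float, wafer_diameter_mm: float = 300.0) -> int:
    """Approximate number of whole die sites on a round wafer."""
    radius = wafer_diameter_mm / 2
    estimate = (math.pi * radius ** 2 / die_area_mm2
                - math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2))
    return math.floor(estimate)


def cost_per_die(wafer_price: float, die_area_mm2: float) -> float:
    """Wafer price spread over the gross die count (100% yield assumed)."""
    return wafer_price / gross_dies_per_wafer(die_area_mm2)


# (label, die area in mm^2, wafer price in USD); the AD104 area is an assumption.
chips = [
    ("GTX 970 (GM204, 28nm)", 398, 2_500),
    ("RTX 4070 Ti (AD104, 4N)", 295, 20_000),
]
for label, area, wafer_price in chips:
    dies = gross_dies_per_wafer(area)
    print(f"{label}: ~{dies} dies/wafer, ~${cost_per_die(wafer_price, area):.0f} per die")
```

With these inputs the wafer price rises 8x while the raw per-die cost rises a bit under 6x, because the smaller AD104 fits more dies on each wafer.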

Memory has also gone up. The 970 used 8Gb GDDR5, which was about $6.80 per chip, and the 970 had 8 of them. The 3060 used memory that was $16 per chip. Current high-end GDDR6 pricing is around $22 per chip; GDDR6X is speculated to carry a high premium due to the exclusive contract with Micron, possibly in the high $20s or even over $30.
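The per-chip prices above translate into per-card memory bills as follows. The chip count for the 970 comes from the post; six 2GB chips for the 3060 and for a 12GB card are assumptions, and $30 is one point picked from the post's speculative GDDR6X range.

```python
# Memory cost per card from the per-chip prices quoted above.
# Assumptions (not from the post): the RTX 3060 and a 12GB card both use
# six 2GB chips; $30 is one point from the speculative GDDR6X range.

def memory_bill(chips: int, price_per_chip: float) -> float:
    """Total memory cost: number of chips times price per chip."""
    return chips * price_per_chip


estimates = {
    "GTX 970, 8 x GDDR5 @ $6.80": memory_bill(8, 6.80),
    "RTX 3060, 6 x GDDR6 @ $16": memory_bill(6, 16),
    "12GB card, 6 x GDDR6 @ $22": memory_bill(6, 22),
    "12GB card, 6 x GDDR6X @ $30": memory_bill(6, 30),
}

for card, cost in estimates.items():
    print(f"{card}: ${cost:.2f}")
```

On these figures the memory line item moves from roughly $54 on the 970 to roughly $180 on a 12GB GDDR6X card. That supports the point that memory costs rose, but it does not settle how much of the retail price it explains.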

None of this is controlled by nvidia or AMD. It is on the likes of TSMC, samsung, and micron.

GPUs are not the only thing that has gone up in price. EVERYTHING is jacked up. That's what happens when the money supply increases by 50% in 18 months. Nvidia is greedy, certainly, but as their margin shows, a lot of this money is getting eaten up by their suppliers and manufacturing costs. It's totally overpriced, but expecting it to cost $400 or $450 is unrealistic today. $600 would be a more likely break-even baseline.

The guilt lies with the customer; the same happens with Apple:

In the end, Nvidia started this, AMD is following (maybe by a smaller percentage), and we are all screwed.

Step 1: they have to make investors happy year over year.

Step 2: instead of always selling more, it's a lot easier, with fewer supply issues, to set a higher price per unit and make more money on each unit. So if you sell 20% fewer cars but earn 70% more per unit, in the end you earn more money with less effort.

Step 3: companies make "research" models: they start selling at a higher price and watch the market.

Step 4: if most customers buy those "overly expensive" units, then they keep pushing it until they reach the max.
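The Step 2 example is a product of two ratios. This sketch reads "earn 70% per unit" as 70% more profit per unit; the 20% and 70% figures are the post's illustration, not company data.

```python
# Step 2's arithmetic, reading "earn 70% per unit" as 70% more profit per unit.
# The 20%/70% figures are the post's illustration, not company data.

def relative_profit(unit_change: float, per_unit_change: float) -> float:
    """Total profit relative to before, given fractional changes in units sold
    and in profit per unit (e.g. -0.20 and +0.70)."""
    return (1 + unit_change) * (1 + per_unit_change)


def break_even_per_unit_increase(unit_change: float) -> float:
    """Per-unit profit increase needed to keep total profit flat."""
    return 1 / (1 + unit_change) - 1


print(f"20% fewer units, +70% per unit: {relative_profit(-0.20, 0.70):.2f}x profit")
print(f"Break-even per-unit increase for 20% fewer units: {break_even_per_unit_increase(-0.20):.0%}")
```

Under the post's numbers total profit rises about 36%, and any per-unit gain above 25% more than offsets the lost volume.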

Since the RTX 30 series, between mining and (stupid) customers, prices went sky-high and they couldn't keep up with demand. So... why lower prices?! The "standard" customer is the only one hurt.

Analyse the BOM cost: it didn't increase, even with inflation, anywhere near as much as the price.

That's a major cost increase, no matter how you look at it.

Memory has also gone up. The 970 used 8Gb GDDR5, which was about $6.80 per chip, and the 970 had 8 of them. The 3060 used memory that was $16 per chip.

Why?

- Chip development costs decreased thanks to software and AI helping develop the architecture; also, most architectures are not new anymore but updates/restructurings of older ones; also, units per wafer increased.

- GDDR6X has existed since at least 2020, and memory density and production complexity didn't increase much.

- The bigger changes didn't come with RTX 30 or RTX 40; RTX/DLSS came with the 20 series.

What I mean is that prices went much higher, and not because of production costs.

Makste

Posts: 182 +132

The bar for the 4070 Ti should be below the 7900 XTX in this graph. Please correct it.

https://static.techspot.com/articles-info/2601/bench/FH5_4K.png

You have the audacity to score the WORST GPU EVER launched... an 80/100?

Get out of here...

Theinsanegamer

Posts: 5,409 +10,147

I never said the increase was 100% in line with inflation, rather that it played a major part. I have also stated multiple times that Nvidia is overcharging.

Analyse the BOM cost: it didn't increase, even with inflation, anywhere near as much as the price.

Do you have a source for any of this, and for the associated cost decreases?

- Chip development costs decreased thanks to software and AI helping develop the architecture; also, most architectures are not new anymore but updates/restructurings of older ones; also, units per wafer increased.

That does not mean the price did not increase. Speeds, and their associated prices, HAVE gone up since its introduction in 2020. The price of GDDR6 has gone up since 2020. It is reasonable to assume that the price of GDDR6X has also gone up since 2020. I've already linked prices as they are currently; you can go back to early 2020 and find how much less they cost back then if you wish.

- GDDR6X has existed since at least 2020, and memory density and production complexity didn't increase much.

Micron also announced it would be pursuing new pricing strategies to stabilize income and that it would "not be cutting margins". Hopefully that is self-explanatory.

Memory chip maker Micron launches new pricing experiment for stability

Chipmaker Micron Technology Inc on Thursday announced it was experimenting with a new pricing model for its chips called forward pricing agreements that would aim to stabilize the steep price fluctuations that plague the industry.

Literally what? Are you arguing that Nvidia made no major investments in software for the 40 series? Because DLSS 3 was kind of a thing. Ampere brought redesigned and more powerful RT cores.

- The bigger changes didn't come with RTX 30 or RTX 40; RTX/DLSS came with the 20 series.

When the cost of wafers has doubled from the previous generation, how on earth do you figure production costs haven't gone up?

What I mean is that prices went much higher, and not because of production costs.

brucek

Posts: 1,716 +2,686

A few comments here had me briefly wondering if my personal reaction to the 4070 Ti was too negative, but then I saw today's videos from Gamers Nexus and Moore's Law Is Dead.

People sometimes claim that Nvidia has the influencers paid off, but if this is what paid-off press looks like...

punctualturn

Nope, the 970 was 3.5GB with some shady 0.5GB on a different path (sorry, I forgot the details; it was a long time ago, and yes, I still have one of those), not 8GB.

The 970 used 8Gb GDDR5

If anything, Nvidia has always shortchanged everyone on the memory included with all of their "regular" GPUs.

To give you a clear idea of Nvidia's greed: this 192-bit card was going to be named the 4080 and priced accordingly. So please stop telling us that increased costs are an excuse for the price hike.

Theinsanegamer

Posts: 5,409 +10,147

That is incorrect. The 970 uses 8 full-speed memory chips. What was cut down was the memory controller within the 970 itself, causing the last 0.5GB to be accessed at a much slower speed than the rest of the memory buffer.

Nope, the 970 was 3.5GB with some shady 0.5GB on a different path (sorry, I forgot the details; it was a long time ago, and yes, I still have one of those), not 8GB.

If anything, Nvidia has always shortchanged everyone on the memory included with all of their "regular" GPUs.

TechSpot is dedicated to computer enthusiasts and power users.
