Highly anticipated: Cyberpunk 2077's third (but not final) Night City Wire info dump dropped today, and it's given us some juicy new details about the game. We got a more in-depth look at Night City's various districts and their respective gangs, and -- perhaps most importantly for us PC enthusiasts -- we finally know what sort of rig we'll need to run Cyberpunk 2077 on November 19.

We'll focus on the system requirements here since that's arguably the information PC players have been waiting for the longest. Before we list them, a quick side note: some of our readers will be pleased to know that, while Windows 10 is the recommended OS to play 2077 with, it will run just fine on Windows 7.

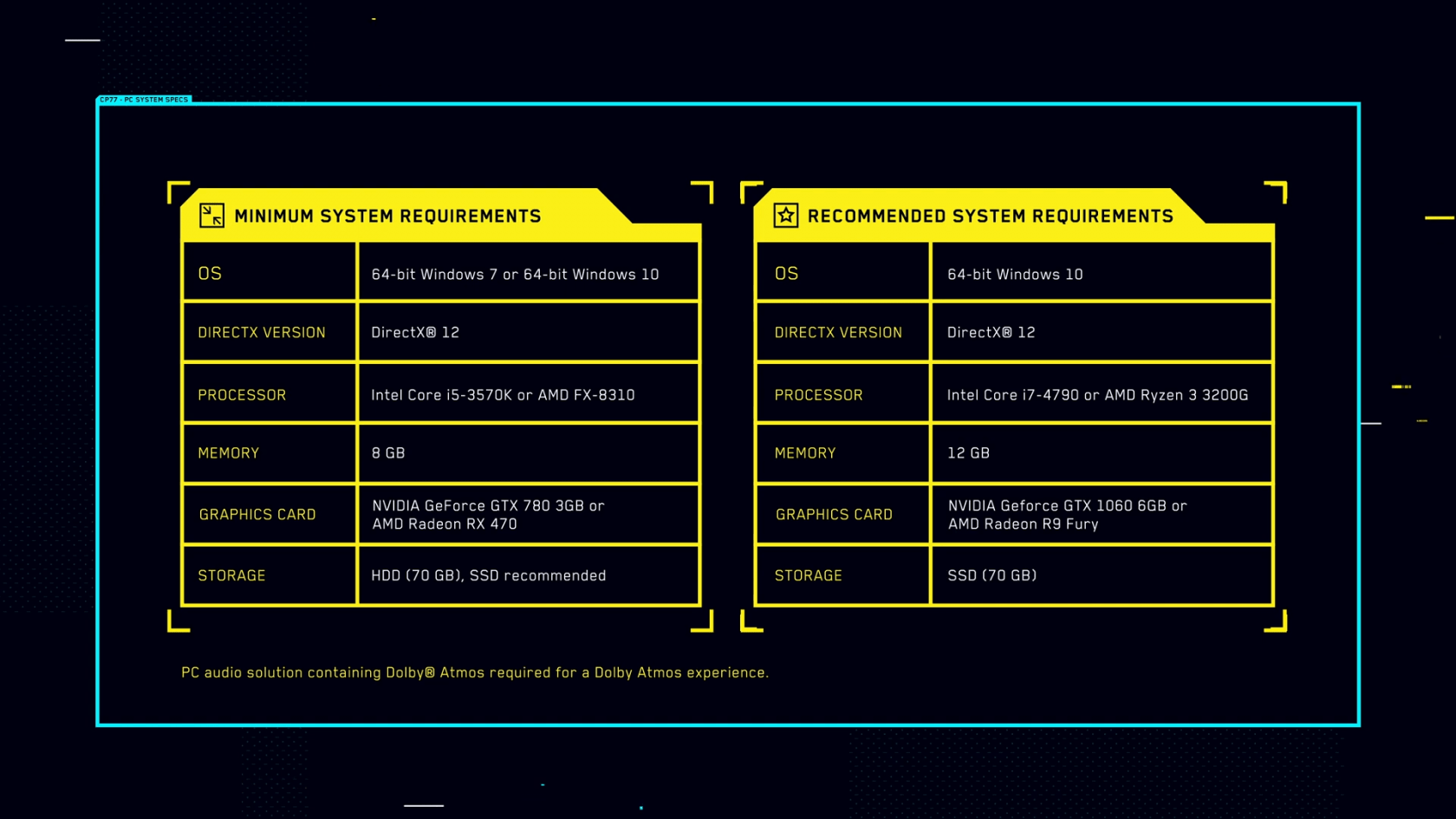

Now, on to the minimum requirements:

- Processor: Intel Core i5-3570K or AMD FX-8310

- Memory: 8 GB RAM

- Graphics: NVIDIA GeForce GTX 780 or AMD Radeon RX 470

- DirectX: Version 12

- Storage: 70 GB available space

- Additional Notes: SSD recommended

Those are surprisingly modest hardware demands for a game with as much detail and density as Cyberpunk 2077.

Many games with poorer graphics and smaller worlds ask much more of players on the low end, so it's nice to see CD Projekt Red taking optimization seriously (though, of course, we'll need to play and benchmark 2077 ourselves to be certain).

Now, on to the recommended hardware configuration:

- Processor: Intel Core i7-4790 or AMD Ryzen 3 3200G

- Memory: 12 GB RAM

- Graphics: NVIDIA GeForce GTX 1060 or AMD Radeon R9 Fury

- DirectX: Version 12

- Storage: 70 GB available space

Cyberpunk 2077's recommended requirements are equally impressive. 12 GB of RAM is very easy to obtain these days, and the GTX 1060 is one of the most common GPUs out there.
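For readers who like to sanity-check their own rig, here is a minimal Python sketch that encodes the RAM and storage figures from the two lists above and reports the highest tier a machine clears. The `Tier` class and `highest_tier_met` function are names we made up for illustration, and the sketch deliberately skips CPU and GPU, since ranking those would require benchmark data that CD Projekt Red has not published.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    """One requirements tier (hypothetical helper, not an official format)."""
    name: str
    ram_gb: int
    storage_gb: int


# Figures copied from the minimum and recommended lists in this article.
# CPU and GPU are omitted on purpose: comparing them needs benchmark data.
MINIMUM = Tier("minimum", ram_gb=8, storage_gb=70)
RECOMMENDED = Tier("recommended", ram_gb=12, storage_gb=70)


def highest_tier_met(ram_gb: float, free_storage_gb: float) -> Optional[str]:
    """Return the name of the highest tier satisfied, or None if neither is."""
    met = None
    # Tiers are checked from lowest to highest, so the last match wins.
    for tier in (MINIMUM, RECOMMENDED):
        if ram_gb >= tier.ram_gb and free_storage_gb >= tier.storage_gb:
            met = tier.name
    return met


if __name__ == "__main__":
    print(highest_tier_met(16, 250))  # 16 GB RAM, 250 GB free
    print(highest_tier_met(8, 100))   # 8 GB RAM, 100 GB free
    print(highest_tier_met(8, 50))    # not enough free storage for either tier
```

On a real machine, the free-space figure could come from the standard library's `shutil.disk_usage`, which reports bytes; installed RAM has no cross-platform standard-library call, so it is passed in by hand here.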

You'll also want an SSD to run Cyberpunk 2077 at its recommended settings. That isn't included in the list above, but it does appear at the end of the Night City Wire 3 video.

It's not as if the game won't boot if you run it on a hard drive at higher settings, but CD Projekt Red seems to feel that an SSD is important enough to a smooth gameplay experience that it warranted an official requirement.

And it's not hard to see why. The developer has said from the start that Cyberpunk 2077 is an immense open-world RPG with no loading screens (barring death reloads and fast travel). With that in mind, it's probably quite the technical challenge to reduce pop-in and retain quality draw distances on much slower 7,200 RPM spinning hard drives.

As is often the case with minimum and recommended requirement listings, it's unclear what framerate and resolution CD Projekt Red is targeting here. We'll be reaching out to the studio for clarification, but we're assuming both lists are aimed at 1080p, 30 FPS gameplay, just with different settings (and, of course, no RTX).

If you want to hop on the Cyberpunk 2077 hype train now, the game is available for pre-order on just about every publisher-agnostic digital storefront: GOG, Steam, and the Epic Games Store, to name a few.

It'll run you $60, but if you don't want to shell out that cash early, don't fret -- no in-game content will be locked behind pre-order bonuses.

https://www.techspot.com/news/86808-cyberpunk-2077-system-requirements-finally-here.html