Forward-looking: Intel has announced a partnership with Green Revolution Cooling (GRC) to develop sustainable immersion cooling for data centers. The partnership's first result is a newly published white paper describing their findings on the usefulness of immersion cooling.

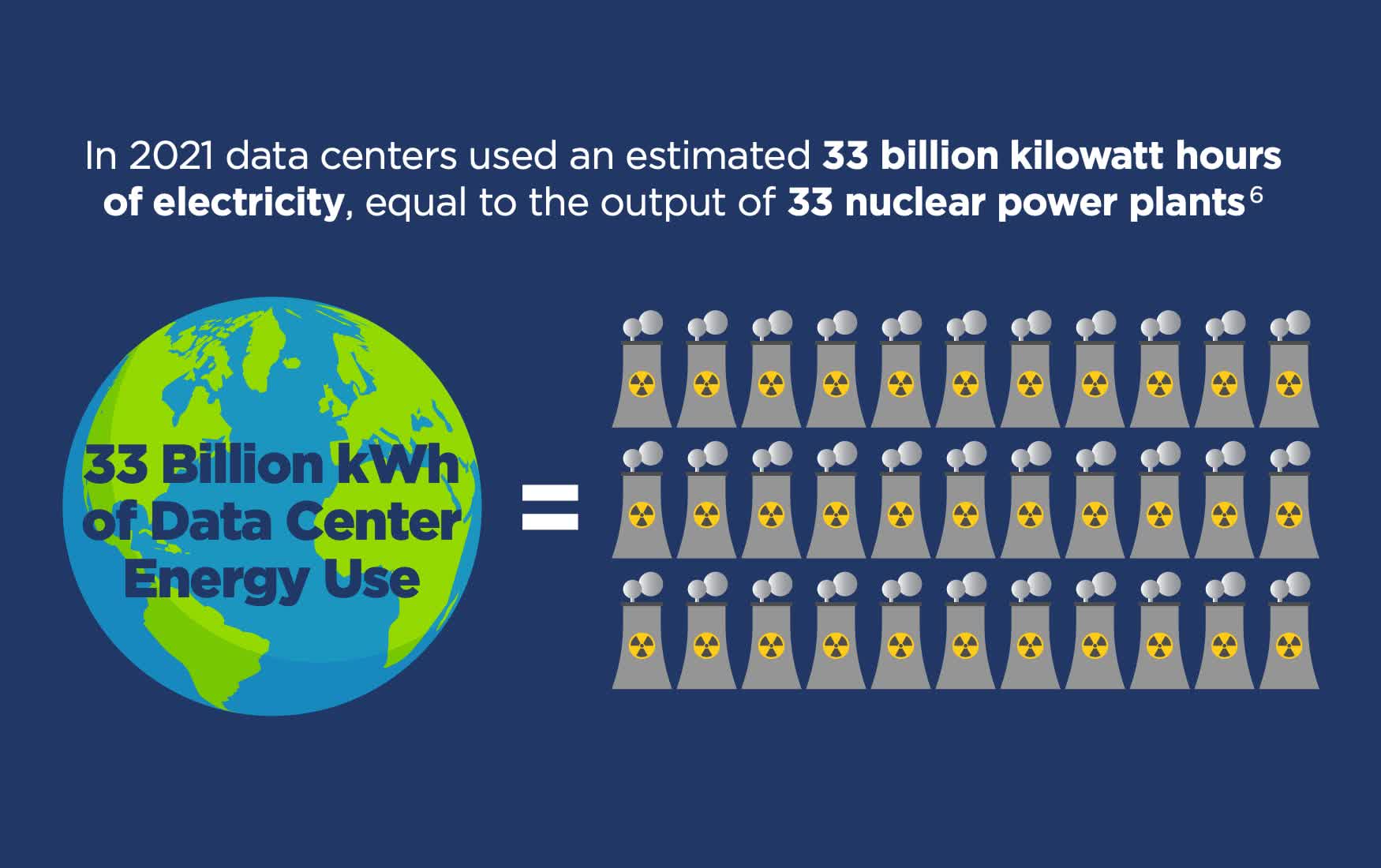

According to two estimates from 2020, data centers consume anywhere from 1.5% to 2% of the world's energy and could be consuming as much as 13% within ten years. Around half of that energy is used by the computers themselves, and 25% to 40% is used by air conditioning, according to the US Department of Energy.

Some data centers have made strides in cooling efficiency lately, but those gains have been negated by the rising power consumption of new hardware. According to Statista, the average power usage effectiveness (PUE) of large data centers, i.e. the ratio of total facility energy to the energy used by the IT equipment, where lower is better, has held at about 1.6 for about a decade.
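To make the PUE figure concrete, here is a minimal Python sketch. It assumes the standard definition of PUE (total facility energy divided by IT equipment energy). The function names and the sample energy values are illustrative and do not come from Statista or the white paper.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def it_share(pue_value: float) -> float:
    """Fraction of facility energy that reaches the IT equipment."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 / pue_value


if __name__ == "__main__":
    # Hypothetical facility: 160 kWh drawn in total for every 100 kWh of IT load.
    ratio = pue(160.0, 100.0)
    print(f"PUE: {ratio:.2f}")
    print(f"Share of energy reaching IT equipment: {it_share(ratio):.1%}")
```

At a PUE of 1.6, about 62.5% of the facility's energy reaches the computers, and the rest goes to cooling, power conversion, and other overhead.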

In their white paper, Intel and GRC say that immersion cooling eliminates the need for server fans, which account for 10-15% of a server's power consumption. Immersion cooling can also carry heat away faster than air cooling, resulting in further efficiency gains, but the paper doesn't quantify them.
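As a rough illustration of the fan figure, the sketch below applies the 10-15% range to a single server. The 500 W server is a hypothetical example, not a number from the white paper, and the function name is made up for this illustration.

```python
def fan_power_range(server_watts: float,
                    low: float = 0.10,
                    high: float = 0.15) -> tuple[float, float]:
    """Estimate the watts drawn by server fans, given the 10-15% share
    cited in the white paper (low and high are fractions of server power)."""
    if server_watts < 0:
        raise ValueError("server power must be non-negative")
    return server_watts * low, server_watts * high


if __name__ == "__main__":
    # Hypothetical 500 W server; real servers vary widely.
    lo_w, hi_w = fan_power_range(500.0)
    print(f"Estimated fan draw: {lo_w:.0f} W to {hi_w:.0f} W")
```

For that hypothetical server, the fans would draw roughly 50 W to 75 W, which is the portion immersion cooling would remove under the white paper's estimate.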

Intel and GRC express the most interest in single-phase immersion cooling, as opposed to two-phase cooling. The former uses a pump to circulate a non-conductive liquid around a tank containing multiple servers and relies on a heat exchanger to cool the liquid. It's simpler than two-phase cooling, in which the liquid boils into a gas before being condensed back into a liquid.

"Intel is designing silicon with immersion cooling in mind, rethinking elements like the heat sink."

Immersion cooling also has other benefits over air cooling, according to the white paper. Data centers collectively use billions of gallons of water each year for cooling and power generation, a figure that immersion cooling would significantly reduce. Immersion-cooled centers can also be built smaller than air-cooled centers, reducing land use and building costs.

It does have its flaws, though. Submerging every system would make maintenance a nightmare and could make failures more severe. Even so, Intel seems willing to bet on it.

In May, the company announced that it was building a $700 million research lab in Oregon with a focus on sustainability initiatives, including immersion cooling, heat recapture, and water usage effectiveness. Other companies, including Microsoft, are also experimenting with immersion cooling and other unconventional approaches as data centers become larger and the need for sustainable solutions grows more urgent.

https://www.techspot.com/news/95323-intel-designing-new-hardware-immersion-cooling-mind.html