Microsoft has reportedly asked Intel to create a 16-core Atom processor for use in the company's servers. Speaking at The Linley Group Data Center Conference, Microsoft engineer Dileep Bhandarkar suggested that Intel's Xeon chips draw too much power for the higher speeds they deliver, and that there's a "huge opportunity" to improve energy efficiency by outfitting servers with chips like Intel's Atom and AMD's Bobcat.

Bhandarkar also noted that a system-on-a-chip (SoC) design is ideal for low-power computing. "When you look at these tiny cores, another way of making them work in a very efficient way is [not to] surround them with a whole bunch of south bridges and network controllers. … Essentially, the tiny cores and systems-on-chip should go together," he explained to conference attendees.

Bhandarkar mentioned that Microsoft would consider deploying ARM-based servers if the chipmaker could show enough value over x86 chips. ARM would have to offer a 2x improvement in performance per dollar per watt to make the transition worthwhile. "Instruction-set transitions are extremely painful," he said. If anything, Bhandarkar said, ARM's presence motivates Intel and AMD to deliver more efficient x86 chips.

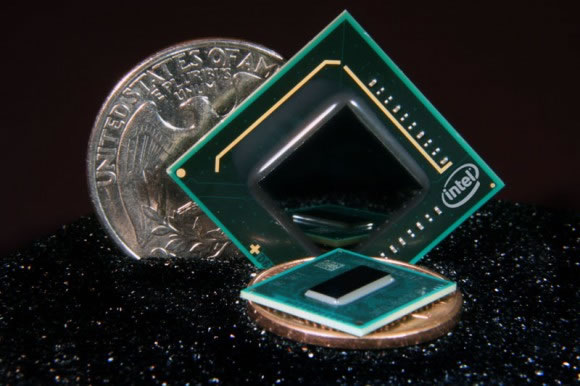

Linley Group founder and analyst Linley Gwennap believes it's only a matter of time before Intel brings the Atom to servers. "We're seeing more of the ARM guys going after the server market and just to compete on power performance per watt, Intel is going to have to rely on the Atom CPU," he said. Intel wouldn't confirm plans for a server-grade Atom, but it noted that HP currently sells an Atom-powered home media server.

https://www.techspot.com/news/42199-microsoft-pushes-intel-for-16-core-atom-processor.html