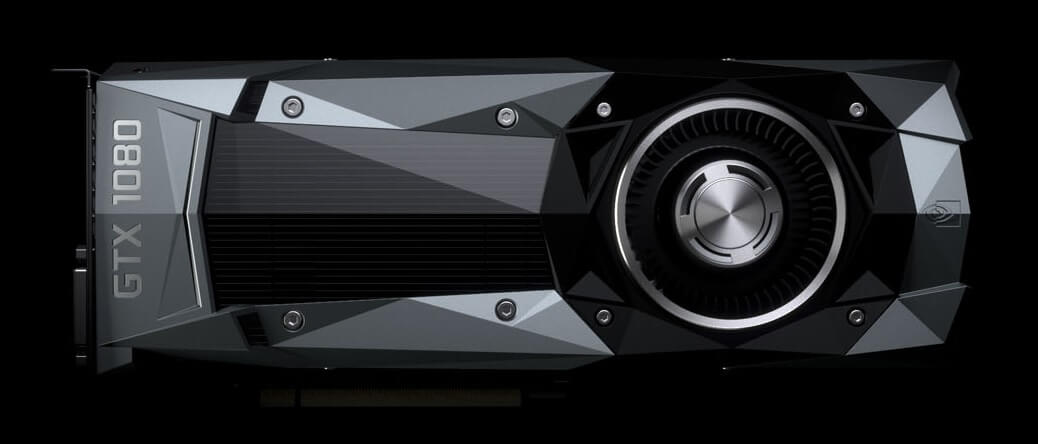

At a special event today, Nvidia announced their new flagship graphics card: the GeForce GTX 1080. The card uses a new Pascal GPU built on a 16nm FinFET process, as well as GDDR5X memory from Micron.

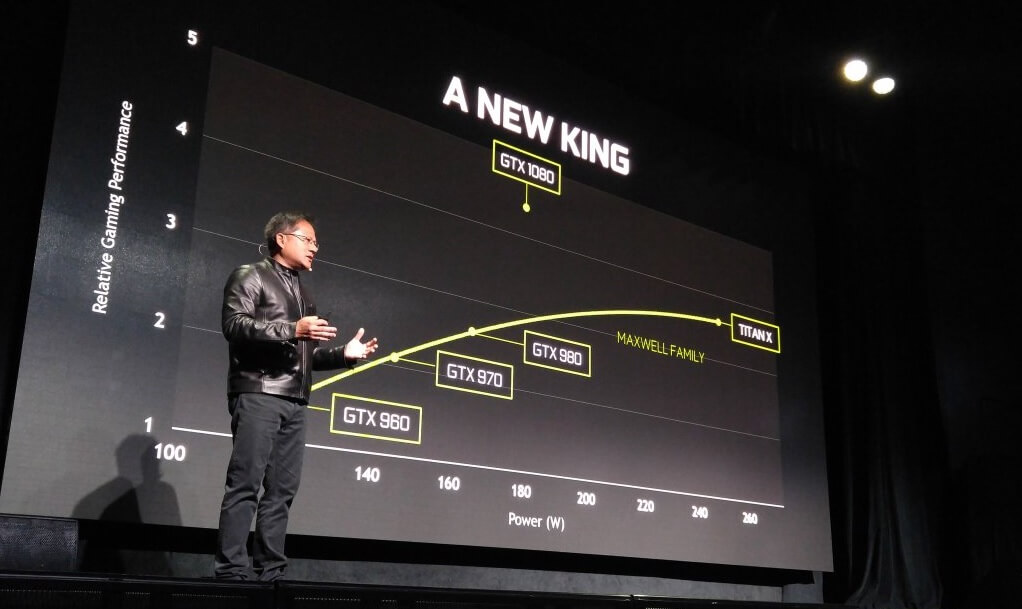

Nvidia says they spent several billion dollars developing Pascal, and the result is a massive GPU capable of powering today's and tomorrow's games at ultra quality settings. It's also energy efficient thanks to improvements in the way Nvidia delivers power to the GPU, making Pascal the company's most efficient architecture yet.

According to Nvidia, the GTX 1080 is "a whole lot" faster than the Titan X, and faster than two GTX 980s in SLI, while consuming a lot less power than that pair. The chart below shows the GTX 1080 consuming around 180W, compared to the 165W TDP of a single GTX 980 (roughly 330W for two in SLI), with performance around 30% faster than the Titan X.

An on-stage demo of the GTX 1080 had the card's core running at 2.1 GHz on air cooling, at a temperature below 70°C. While these aren't the stock clock speeds for the 1080, the demo suggests just how overclocking-friendly this card will be.

The stock clock speeds for the GTX 1080 are 1607 MHz base and 1733 MHz boost, on 2,560 CUDA cores. This suggests the card is using a GP104 core rather than the larger GP100 GPU used in the Tesla P100, which itself ships partially disabled with 3,584 CUDA cores.
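As a rough sanity check on those numbers, the sketch below estimates peak single-precision throughput from the core count and clock speeds quoted above. It assumes the common rule of thumb that each CUDA core completes one fused multiply-add (two floating-point operations) per clock; that assumption and the function name `peak_fp32_tflops` are illustrative and do not come from Nvidia's announcement.

```python
def peak_fp32_tflops(cuda_cores: int, clock_mhz: float) -> float:
    """Estimate theoretical peak FP32 throughput in TFLOPS.

    Assumption (not from the announcement): each CUDA core retires one
    fused multiply-add, counted as 2 floating-point operations, per clock.
    """
    flops_per_second = 2 * cuda_cores * clock_mhz * 1e6
    return flops_per_second / 1e12


# Figures quoted in the article for the GTX 1080.
base_tflops = peak_fp32_tflops(2560, 1607)
boost_tflops = peak_fp32_tflops(2560, 1733)

print(f"base:  {base_tflops:.2f} TFLOPS")
print(f"boost: {boost_tflops:.2f} TFLOPS")
```

Under that assumption the estimate lands a little above 8 TFLOPS at base clock and a little under 9 TFLOPS at boost. Real-world throughput depends on the workload and on how long the card sustains its boost clock.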

2114MHz GPU clock. On air cooling. 67 degrees. The power and efficiency of the Pascal architecture. #GameReady pic.twitter.com/geRiyGw42M

— NVIDIA GeForce (@NVIDIAGeForce) May 7, 2016

The memory system features 8 GB of GDDR5X with an effective data rate of 10 Gbps per pin on a 256-bit bus, providing 320 GB/s of bandwidth.
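The bandwidth figure follows directly from the data rate and bus width. A minimal sketch of the arithmetic, treating the quoted 10 GHz effective clock as 10 Gbit/s per pin (the function name `memory_bandwidth_gb_s` is hypothetical, chosen for illustration):

```python
def memory_bandwidth_gb_s(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s.

    Per-pin data rate (Gbit/s) times the number of bus pins (bits),
    divided by 8 to convert bits to bytes.
    """
    return data_rate_gbps * bus_width_bits / 8


# GTX 1080 figures from the article: 10 Gbit/s effective, 256-bit bus.
gtx_1080_bandwidth = memory_bandwidth_gb_s(10.0, 256)
print(gtx_1080_bandwidth)  # 320.0
```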

As the GTX 1080 is a Pascal-based card, gamers will get to enjoy some of Nvidia's new technologies, the best of which is Simultaneous Multi-Projection. This technology has two huge benefits: no more fish-eye lens effect in multi-monitor gaming environments, and faster VR gaming due to improved viewport efficiency.

The GTX 1080 will retail for $599 in its basic edition and $699 for the "Founder's Edition", although it's not yet clear what benefits the Founder's Edition will bring. The card will be available worldwide from May 27th.

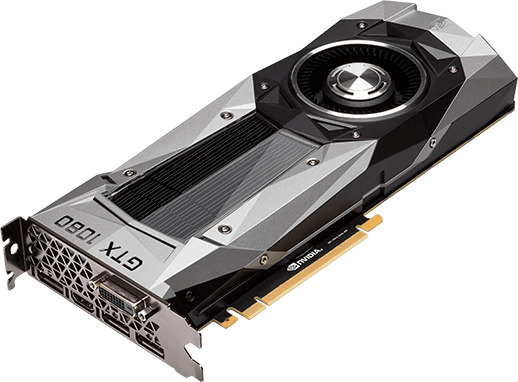

There will also be a GTX 1070 available for $379 ($449 for the Founder's Edition), which Nvidia says is faster than a GTX Titan X, packing 6.5 TFLOPS of performance and 8 GB of GDDR5 memory. The GTX 1070 will be available from June 10th.

https://www.techspot.com/news/64736-nvidia-announces-geforce-gtx-1080.html